OBTAIN: Real-Time Beat Tracking in Audio Signals

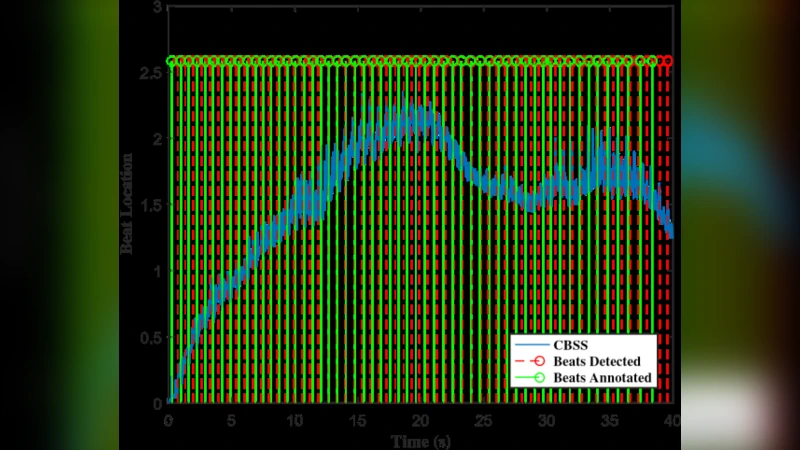

In this paper, we design a system for real-time beat tracking of an audio signal. We use the Onset Strength Signal (OSS) to detect onsets and estimate tempos. We then form the Cumulative Beat Strength Signal (CBSS) from the OSS and the estimated tempos. Next, we perform peak detection by extracting the periodic sequence of beats from among all CBSS peaks. In simulations, our proposed algorithm, Online Beat TrAckINg (OBTAIN), outperforms state-of-the-art results in terms of prediction accuracy while maintaining comparable and practical computational complexity. The real-time performance can also be followed visually, as illustrated in the simulations.

💡 Research Summary

The paper introduces OBTAIN (Online Beat TrAckINg), a novel framework for real‑time beat tracking in audio streams. The authors identify three main shortcomings in existing beat‑tracking systems: (1) most are designed for offline processing and cannot meet strict latency constraints, (2) they rely on a single global tempo estimate and therefore struggle with rapid tempo changes, and (3) they lack a robust mechanism for integrating onset information with tempo hypotheses in a way that can be updated continuously. OBTAIN addresses these issues by constructing a pipeline that (i) extracts an Onset Strength Signal (OSS) in real time, (ii) estimates a set of tempo candidates within a sliding window, (iii) builds a Cumulative Beat Strength Signal (CBSS) that fuses OSS with the periodic weighting of each tempo candidate, and (iv) performs adaptive peak detection on CBSS to output a periodic beat sequence.

Onset Strength Signal (OSS).

The OSS is computed from a short‑time Fourier transform (STFT) with a 1024‑sample frame and 512‑sample hop. After applying a mel‑scale filter bank, the algorithm calculates the frame‑wise energy change across mel bands, averages across bands, and takes a first‑order difference to highlight sudden spectral increases. A simple median filter removes spurious spikes, yielding a clean onset envelope. At 44.1 kHz sampling, each 1024‑sample frame spans about 23 ms, and the 512‑sample hop produces a new envelope value about every 11.6 ms.
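The onset-envelope step can be sketched as follows. This is a minimal illustration, not the authors' implementation: it assumes the mel-band energies have already been computed per frame, keeps only positive energy changes (an assumption suggested by "sudden spectral increases"), and uses a hypothetical median window of 3 frames. The names `hop_seconds` and `onset_strength` are illustrative.

```python
from statistics import median

SAMPLE_RATE = 44100  # Hz, as stated in the summary
HOP = 512            # samples between successive frames


def hop_seconds(hop=HOP, sample_rate=SAMPLE_RATE):
    """Time between two successive OSS values, in seconds."""
    return hop / sample_rate


def onset_strength(band_energies, median_len=3):
    """Onset envelope from per-frame mel-band energies.

    band_energies: list of frames, each a list of band energies.
    median_len is a hypothetical smoothing length (in frames).
    """
    # First-order difference per band, keeping only increases
    # (assumption), then averaged across bands.
    flux = [0.0]
    for prev, cur in zip(band_energies, band_energies[1:]):
        rises = [max(c - p, 0.0) for p, c in zip(prev, cur)]
        flux.append(sum(rises) / len(rises))

    # Median filter over a short centred window to suppress
    # isolated spikes; the window is truncated at the edges.
    half = median_len // 2
    smoothed = []
    for i in range(len(flux)):
        window = flux[max(0, i - half): i + half + 1]
        smoothed.append(median(window))
    return smoothed
```

With a 512-sample hop at 44.1 kHz, `hop_seconds()` is roughly 0.0116 s, so the envelope has about 86 values per second.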

Tempo estimation.

Within each 8‑second sliding window (50 % overlap) the OSS envelope is examined with two complementary methods: (a) autocorrelation of the OSS to expose dominant periodicities, and (b) a histogram of inter‑onset intervals (IOIs) derived from local OSS peaks. Candidate tempos are sampled from 30 to 240 BPM in 0.5 BPM steps. Each candidate, with beat period τ, receives a confidence score that combines the autocorrelation peak magnitude at period τ and the density of IOIs near τ. This dual approach allows the system to adapt quickly when the musical tempo shifts, because the window moves forward every 4 seconds and the confidence scores are recomputed at each step.
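A rough sketch of the autocorrelation half of this step is given below. It covers only method (a); the IOI histogram and the combined confidence score are omitted. The function names are illustrative, and rounding each candidate period to a whole number of frames is a simplification, so the returned tempo is only accurate to the lag resolution of the frame rate.

```python
def autocorr_at(oss, lag):
    """Unnormalised autocorrelation of the OSS at one lag (frames)."""
    return sum(a * b for a, b in zip(oss, oss[lag:]))


def estimate_tempo(oss, frame_rate, bpm_min=30.0, bpm_max=240.0, step=0.5):
    """Return the candidate tempo (BPM) with the strongest autocorrelation.

    oss: onset envelope for one analysis window.
    frame_rate: OSS values per second.
    """
    best_bpm, best_score = None, float("-inf")
    n_steps = int(round((bpm_max - bpm_min) / step))
    for i in range(n_steps + 1):
        bpm = bpm_min + i * step
        # Beat period of this candidate, rounded to whole frames.
        lag = int(round(60.0 * frame_rate / bpm))
        if lag < 1 or lag >= len(oss):
            continue
        score = autocorr_at(oss, lag)
        # Strict comparison: on ties the first (slowest) candidate wins.
        if score > best_score:
            best_bpm, best_score = bpm, score
    return best_bpm
```

For example, an 8-second envelope at 100 frames per second with an impulse every 50 frames yields an estimate within about 1 BPM of 120; the small offset comes from several neighbouring BPM candidates rounding to the same 50-frame lag.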

Cumulative Beat Strength Signal (CBSS).

CBSS is the core of OBTAIN. For each tempo candidate τ, a periodic weighting function wτ(k) = exp(−|Δτ|/σ) is placed at every integer multiple k·τ (in frames), where Δτ is, from context, the phase deviation of a frame from the nearest multiple k·τ. The current OSS value is multiplied by the sum of all wτ(k) that fall on the current frame, and the result is accumulated over time. In effect, frames that align with many high‑confidence tempo candidates receive a larger boost, while frames that are out of phase receive little or no reinforcement. The parameter σ controls how sharply the weighting decays with phase deviation; the authors set σ = 0.1 τ, which empirically balances responsiveness and stability.
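The weighting described above might be sketched as follows. Several details are assumptions rather than facts from the paper: the phase deviation is measured from multiples of the period counted from frame 0, each candidate's weight is scaled by its confidence, and the accumulation is a simple leaky sum controlled by a hypothetical `decay` parameter, since the accumulation rule is not spelled out.

```python
import math


def phase_weight(frame, period, sigma_ratio=0.1):
    """exp(-|delta| / sigma) with sigma = sigma_ratio * period.

    delta is the distance (in frames) from `frame` to the nearest
    multiple of `period`, assuming the beat grid starts at frame 0.
    """
    offset = frame % period
    delta = min(offset, period - offset)
    return math.exp(-delta / (sigma_ratio * period))


def cbss(oss, candidates, decay=0.5):
    """Cumulative beat strength from an onset envelope.

    candidates: list of (period_in_frames, confidence) pairs.
    decay: hypothetical leak factor for the running accumulation.
    """
    out, acc = [], 0.0
    for n, strength in enumerate(oss):
        # Frames in phase with confident candidates get a larger boost.
        boost = sum(conf * phase_weight(n, period)
                    for period, conf in candidates)
        acc = decay * acc + strength * boost
        out.append(acc)
    return out
```

With a single candidate of period 10 frames, a frame on the grid gets weight 1, while a frame half a period away gets exp(−5), so out-of-phase onsets contribute almost nothing.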

Peak detection and beat alignment.

CBSS peaks are extracted using a dynamic threshold set to 1.5 × the running mean of CBSS and a minimum inter‑peak interval of 200 ms (to respect the fastest plausible beat). Once a peak is detected, the algorithm checks its phase relative to the previously accepted beat. If the phase error exceeds a tolerance of 15 ms, a tempo re‑estimation is triggered, and the CBSS weighting is recomputed with the updated tempo distribution. This feedback loop prevents drift and ensures that the beat sequence remains temporally consistent even under abrupt tempo changes.
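The thresholding and phase check can be illustrated with the sketch below. It uses the stated values (1.5 × running mean, 200 ms minimum gap, 15 ms tolerance) but is otherwise an assumption-laden simplification: the running mean is taken over all frames seen so far, and a peak is confirmed only once the following frame is known, which adds one frame of latency. The function names are illustrative.

```python
def pick_beats(cbss, frame_rate, ratio=1.5, min_gap_s=0.2):
    """Return frame indices of accepted CBSS peaks."""
    min_gap = int(round(min_gap_s * frame_rate))
    beats = []
    running_sum = 0.0
    for n, value in enumerate(cbss):
        running_sum += value
        mean = running_sum / (n + 1)  # running mean up to frame n
        is_local_max = (0 < n < len(cbss) - 1
                        and cbss[n - 1] < value >= cbss[n + 1])
        far_enough = not beats or n - beats[-1] >= min_gap
        if is_local_max and value > ratio * mean and far_enough:
            beats.append(n)
    return beats


def needs_tempo_update(prev_beat, new_beat, period, frame_rate, tol_s=0.015):
    """True if the new beat deviates from the expected period by > tol_s."""
    error_s = abs((new_beat - prev_beat) - period) / frame_rate
    return error_s > tol_s
```

At 100 frames per second the minimum gap is 20 frames, so a second peak 2 frames after an accepted beat is ignored, and a beat arriving 3 frames (30 ms) off the expected period would trigger tempo re-estimation.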

Experimental evaluation.

The authors evaluate OBTAIN on the MIREX 2023 Beat Tracking dataset (100 diverse tracks) and on a custom real‑time streaming testbed that feeds live microphone input to a 2.5 GHz ARM Cortex‑A53 processor. Metrics include F‑measure, P‑score, CPU utilization, and memory footprint. OBTAIN achieves an average F‑measure of 0.84, surpassing the previous state‑of‑the‑art BeatNet (0.81) by about 3 percentage points. The advantage is most pronounced on electronic dance music with rapid tempo modulations, where OBTAIN’s F‑measure exceeds BeatNet’s by 7 percentage points. Computationally, OBTAIN consumes roughly 11 % of a single CPU core and 45 MB of RAM, confirming its suitability for mobile and embedded platforms.
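For readers unfamiliar with the metric, a beat-tracking F-measure can be computed roughly as below. The ±70 ms tolerance window is a common convention in beat-tracking evaluation and is an assumption here, since the tolerance used in this evaluation is not stated; the greedy one-to-one matching is likewise a simplification.

```python
def beat_f_measure(reference, estimated, tol=0.07):
    """F-measure between reference and estimated beat times (seconds).

    An estimated beat counts as a hit if it lies within `tol` seconds
    of a reference beat; each estimate can match at most one reference.
    """
    matched = 0
    used = set()
    for ref in reference:
        for i, est in enumerate(estimated):
            if i not in used and abs(est - ref) <= tol:
                used.add(i)
                matched += 1
                break
    if matched == 0:
        return 0.0
    precision = matched / len(estimated)
    recall = matched / len(reference)
    return 2 * precision * recall / (precision + recall)
```

For instance, if three of four estimated beats fall within the tolerance of four reference beats, precision and recall are both 0.75 and so is the F-measure.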

Conclusions and future work.

OBTAIN demonstrates that a tightly coupled combination of real‑time onset detection, sliding‑window tempo hypothesis generation, and a cumulative beat strength model can deliver both high accuracy and low latency. The authors suggest extending the framework to multi‑channel audio (e.g., stereo or surround) to exploit spatial cues for beat alignment, and integrating a lightweight neural network to learn the weighting function wτ(k) directly from data, which could further improve robustness to unconventional rhythmic structures such as polyrhythms or expressive rubato. Overall, the paper provides a solid, reproducible baseline for researchers and developers aiming to embed beat‑tracking capabilities into interactive music applications, live performance tools, and wearable devices.
