Unified Functorial Signal Representation III: Foundations, Redundancy, $L^0$ and $L^2$ functors

In this paper we propose and lay the foundations of a functorial framework for representing signals. By incorporating an additional category-theoretic relative and generative perspective alongside classical set-theoretic measure theory, the fundamental concepts of redundancy and compression are formulated in a novel, authentically arrow-theoretic way. The existing classical framework, which represents a signal as a vector in an appropriate linear space, is shown to be a special case of the proposed framework. Next, treating signal spaces as categories, we study the various covariant and contravariant forms of the $L^0$ and $L^2$ functors using categories of measurable or measure spaces and their opposites, involving Boolean and measure algebras, along with partial extension. Finally, we contribute a novel definition of intra-signal redundancy using the general concept of an isomorphism arrow in a category, covering translation and other cases as special cases. Through category theory we provide a simple yet precise explanation for the well-known heuristic that lossless differential encoding standards yield better compression for image types such as line drawings, iconic images, and text than classical representation techniques such as JPEG, which choose bases or frames in a global Hilbert space.

💡 Research Summary

The paper proposes a comprehensive category‑theoretic framework for signal representation, extending the classical view of a signal as a vector in a linear space to a functorial perspective. The authors model a signal generation–observation system as a functor F from a source category C to a target category D. The source category C encodes the abstract generative structure of the signal (e.g., musical motifs, visual objects, linguistic units) and is taken to be a groupoid, i.e., a category in which every morphism is invertible. The target category D is chosen among familiar measure‑theoretic categories such as Meas (measurable spaces and measurable maps), Measure (measure spaces and measure‑preserving maps), LocMeas, and LocMeasure, thereby preserving the traditional set‑theoretic underpinnings of signal theory.

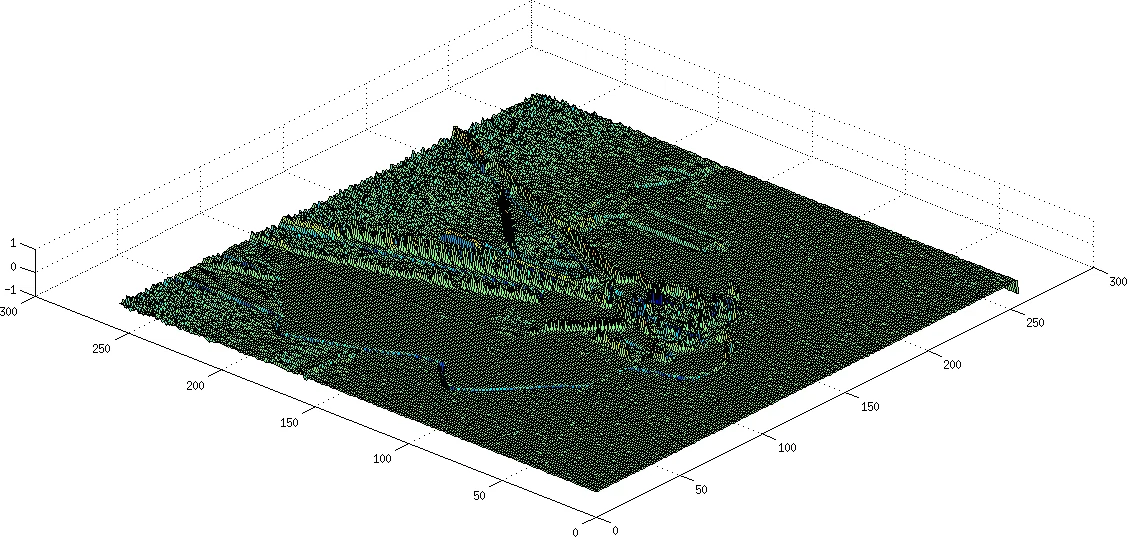

In this setting each object G ∈ Ob(C) is mapped by F to a concrete measurable function f_G ∈ Ob(D) (e.g., a time signal or an image patch). Morphisms a: G→H in C are sent to morphisms F(a): f_G→f_H in D. Because C is a groupoid and every functor preserves isomorphisms, each F(a) is an isomorphism in D. This preservation allows the authors to define intra‑signal redundancy: if two generators are isomorphic in C, their corresponding sub‑signals are isomorphic in D, and one can be expressed as a differential (Δ) of the other. Consequently, differential (lossless) coding schemes, such as the predictive coding used for line drawings, icons, and text, are explained as exploiting abundant isomorphisms in the source structure, which leads to higher compression ratios than global basis methods like JPEG.
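The differential idea can be illustrated with a minimal sketch. It is not the paper's construction: the helper names `delta_encode` and `delta_decode` and the 8-bit ramp signal are assumptions made here for illustration. Each sample is related to its predecessor by the same amplitude shift, so storing only the differences collapses 256 distinct symbols to two while remaining exactly invertible.

```python
def delta_encode(samples: bytes) -> bytes:
    """Store each 8-bit sample as its difference from the previous one (mod 256)."""
    prev = 0
    out = bytearray()
    for s in samples:
        out.append((s - prev) % 256)
        prev = s
    return bytes(out)


def delta_decode(deltas: bytes) -> bytes:
    """Invert delta_encode by accumulating the differences (mod 256)."""
    prev = 0
    out = bytearray()
    for d in deltas:
        prev = (prev + d) % 256
        out.append(prev)
    return bytes(out)


# Hypothetical test signal: every sample is the previous one shifted by 3.
signal = bytes((3 * i) % 256 for i in range(1024))
deltas = delta_encode(signal)

print(len(set(signal)))   # number of distinct symbols in the raw signal
print(sorted(set(deltas)))  # distinct symbols after differencing
```

A symbol alphabet this small is what an entropy coder applied after the differencing step can exploit; the sketch stops short of that step and makes no claim about actual compressed sizes.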

The paper then reconstructs the classical L⁰ and L² function spaces as functors. For a measurable space (X, Σ_X) the space L⁰(X) of measurable real‑valued functions (taken up to almost‑everywhere equality) is identified with objects of Meas→, while L²(X) is identified with objects of LocMeasure. The authors define covariant functors L⁰: Meas→ → Riesz and contravariant functors L²: LocMeasure → Hilb. Morphisms (h, φ) in the source category induce bounded linear operators T(h, φ)⁻¹ between the corresponding L² spaces, precisely mirroring the differential operators used in predictive coding. Thus the global signal f decomposes as a coproduct (direct sum) of its local pieces f|_I, f|_J, … in L⁰ or L², and the arrows linking these pieces encode the transformations (translation, scaling, amplitude change) that generate redundancy.
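As a hedged illustration of how a map of measure spaces can induce a bounded operator contravariantly, consider the standard precomposition operator. The notation $T_h$, $\mu$, $\nu$ is introduced here and need not coincide with the paper's $T(h,\varphi)$; the sketch assumes $h : (X, \Sigma_X, \mu) \to (Y, \Sigma_Y, \nu)$ is measure-preserving, i.e. $h_*\mu = \nu$.

```latex
\[
  T_h : L^2(Y,\nu) \to L^2(X,\mu), \qquad T_h(g) = g \circ h ,
\]
\[
  \|T_h g\|_{L^2(\mu)}^2
  = \int_X |g \circ h|^2 \, d\mu
  = \int_Y |g|^2 \, d(h_*\mu)
  = \int_Y |g|^2 \, d\nu
  = \|g\|_{L^2(\nu)}^2 ,
\]
\[
  T_{k \circ h} = T_h \circ T_k
  \quad \text{for } X \xrightarrow{\,h\,} Y \xrightarrow{\,k\,} Z ,
  \qquad
  T_{\mathrm{id}_X} = \mathrm{id}_{L^2(X,\mu)} .
\]
```

The second line shows that $T_h$ is an isometry, hence bounded, and the third line is the order reversal that makes the assignment contravariant.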

A novel methodological contribution is the notion of trivial categorification: a measurable function f is simultaneously an object of Meas→ and an element of the Riesz space L⁰ because the underlying set ℝ carries a field structure. By exploiting this dual nature, the authors can perform pointwise algebraic operations (addition, multiplication) on objects that are otherwise only morphisms in a categorical sense. This enables a seamless blend of set‑theoretic measure theory with categorical structure, allowing the definition of equivalence classes modulo null sets, a.e. equality, and integrability within the same formalism.

The authors acknowledge that real‑world acquisition systems are imperfect: superposition of waveforms, noise, and non‑ideal sensors may cause the functor F to lose information, for example by failing to be faithful or injective on objects, so that distinct generators map to indistinguishable signals. To mitigate this, they propose using the measure‑theoretic null ideal and a.e. equivalence to collapse indistinguishable objects, and treating problematic regions as separate Boolean or measure algebras. This “partial” categorical approach preserves as much structural information as possible while remaining computationally tractable.
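Collapsing objects by a.e. equivalence can be sketched on a finite measure space. This is a toy model, not the paper's formalism: the function `equal_ae`, the three-point space, and its weights are all assumptions chosen for illustration.

```python
def equal_ae(f: dict, g: dict, measure: dict) -> bool:
    """Return True if f and g differ only on a set of measure zero."""
    # Total mass of the points where the two functions disagree.
    disagreement = sum(m for x, m in measure.items() if f[x] != g[x])
    return disagreement == 0


# Hypothetical measure space: point "c" is a null set.
measure = {"a": 0.5, "b": 0.5, "c": 0.0}

f = {"a": 1.0, "b": 2.0, "c": 3.0}
g = {"a": 1.0, "b": 2.0, "c": -7.0}  # differs from f only on the null point
h = {"a": 1.0, "b": 0.0, "c": 3.0}   # differs from f on a point of positive mass

print(equal_ae(f, g, measure))
print(equal_ae(f, h, measure))
```

Here `f` and `g` would be identified as one element of the quotient, while `f` and `h` stay distinct.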

Finally, the paper contrasts the functorial framework with classical signal representation along four dimensions:

- Source modeling – Classical methods ignore the generative source; the functorial approach explicitly models it as a category, distinguishing memoryless (discrete category) from memory‑bearing (groupoid) sources.

- Redundancy definition – Traditional redundancy is a scalar ratio; the new definition is relational, based on isomorphisms between sub‑signals.

- Signal space – Instead of a fixed Hilbert space L²(ℝⁿ), the signal space becomes a subcategory of Hilb or Riesz matched to the source’s structure, yielding signal‑specific bases.

- Dual use of measure theory – By treating measurable functions both as categorical objects and as elements of Riesz spaces, the framework retains the analytical tools of classical signal processing while gaining the structural insight of category theory.
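The contrast between scalar and relational redundancy in the list above can be sketched as follows. Instead of a single ratio, the code records which sub-signals are related by a translation. The function `translation_classes`, the fixed window width, and the sample signal are assumptions made for this illustration; the paper's definition covers isomorphisms more general than exact translated copies.

```python
from collections import defaultdict


def translation_classes(signal, width):
    """Group start offsets of non-overlapping windows whose contents coincide.

    Two offsets in the same group are related by a translation that maps one
    window exactly onto the other.
    """
    classes = defaultdict(list)
    for start in range(0, len(signal) - width + 1, width):
        classes[tuple(signal[start:start + width])].append(start)
    # Keep only windows that occur more than once, i.e. the redundant ones.
    return [offsets for offsets in classes.values() if len(offsets) > 1]


# Hypothetical signal: the block [0, 5, 5, 0] appears at offsets 0, 8 and 12.
signal = [0, 5, 5, 0, 1, 2, 3, 4, 0, 5, 5, 0, 0, 5, 5, 0]
print(translation_classes(signal, 4))  # → [[0, 8, 12]]
```

A coder could store the block at offset 0 once and represent the windows at 8 and 12 by their offsets alone, which is the relational information a scalar redundancy ratio discards.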

In summary, the paper delivers a mathematically rigorous, unified view that integrates category theory, measure theory, and functional analysis to model generation, transformation, and compression of signals. It explains why differential coding excels for highly structured data, provides a pathway to construct source‑aware signal spaces, and opens new research directions at the intersection of information theory, signal processing, and higher‑category mathematics.
