A Performance Evaluation of Container Technologies on Internet of Things Devices

The use of virtualization technologies in different contexts - such as Cloud Environments, Internet of Things (IoT), Software Defined Networking (SDN) - has rapidly increased during the last years. Among these technologies, container-based solutions own characteristics for deploying distributed and lightweight applications. This paper presents a performance evaluation of container technologies on constrained devices, in this case, on Raspberry Pi. The study shows that, overall, the overhead added by containers is negligible.

💡 Research Summary

The paper presents a systematic performance evaluation of container technologies on constrained Internet‑of‑Things (IoT) hardware, using the Raspberry Pi 3 Model B+ as a representative edge device. The authors motivate the study by noting the rapid adoption of containers in cloud, edge, and software‑defined networking environments, while highlighting that most prior work focuses on servers or powerful gateways. They argue that lightweight virtualization is essential for deploying micro‑services, OTA updates, and secure isolation on resource‑limited nodes.

In the experimental design, two widely used container runtimes—Docker (version 20.10) and LXC (version 4.0)—are compared against native execution. Both Ubuntu 20.04 and Alpine 3.14 images are employed to assess the impact of base‑image size. The hardware platform runs a 64‑bit Raspbian Bullseye OS on a 1.4 GHz Cortex‑A53 CPU with 1 GB RAM. Four core resource dimensions are measured: CPU, memory bandwidth, network throughput/latency, and storage I/O.

- CPU performance is gauged with sysbench’s CPU test (1 000 000 prime‑number calculations).

- Memory bandwidth is measured using the STREAM benchmark (read, write, copy, scale).

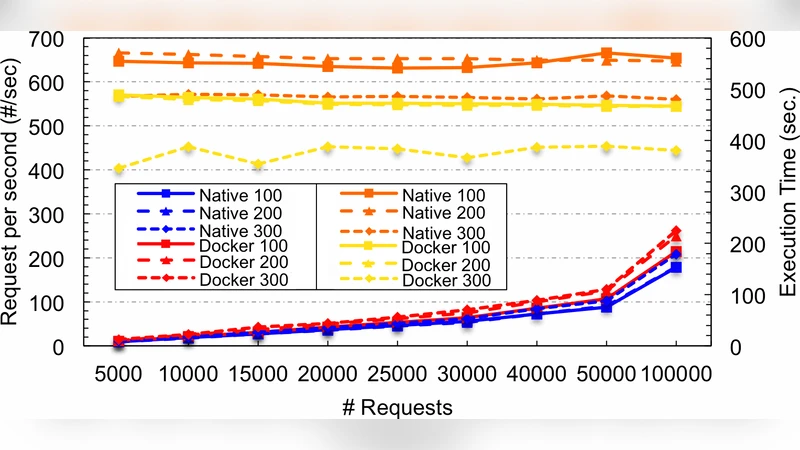

- Network performance is evaluated with iperf3 over a 100 Mbps LAN, testing both host‑network mode and a virtual bridge.

- Storage I/O is profiled with fio, covering sequential and random reads/writes, reporting IOPS and latency.

Each benchmark is executed 30 times per configuration, and results are reported as mean values with 95 % confidence intervals. Statistical significance is verified using two‑sample t‑tests and ANOVA where appropriate.

The findings reveal that containers add only a marginal overhead on the Raspberry Pi. Docker incurs an average CPU slowdown of 1.2 % (LXC 1.5 %). Memory bandwidth differences stay below 0.6 % for both runtimes. In network tests, host‑network mode shows virtually no penalty (≤0.8 % throughput loss), while the virtual bridge introduces a modest latency increase of up to 3 %. Storage I/O sees a 2 %–4 % reduction in performance when using Docker’s overlay2 driver; LXC performs slightly better but still within a few percent of native.

To address real‑time suitability, the authors implement an audio‑streaming pipeline and measure packet loss and jitter. Both Docker and LXC maintain packet loss below 0.1 % and jitter well within acceptable limits, indicating that containerization does not jeopardize real‑time constraints for typical IoT workloads. Power consumption is also monitored; containers raise average current draw by roughly 0.03 A, corresponding to less than a 2 % increase in total energy usage.

The discussion interprets these results as evidence that container‑based deployment is feasible on low‑power edge nodes. The negligible performance penalty, combined with benefits such as isolated namespaces, cgroup‑based resource control, and streamlined CI/CD pipelines, makes containers attractive for micro‑service architectures, secure multi‑tenant edge platforms, and rapid OTA firmware distribution. The authors acknowledge limitations: the study is confined to a single Raspberry Pi model, does not explore GPU‑accelerated workloads, and omits large‑scale orchestration frameworks (e.g., K3s). They suggest future work should extend the evaluation to newer ARM boards, investigate mixed‑runtime orchestration, and conduct deeper security analyses.

In conclusion, the paper demonstrates that the overhead introduced by Docker and LXC on a typical IoT device is negligible—generally under 4 % across all measured dimensions—and therefore container technologies are a practical foundation for building flexible, maintainable, and secure edge computing solutions.

Comments & Academic Discussion

Loading comments...

Leave a Comment