Dynamic Oracle for Neural Machine Translation in Decoding Phase

The past several years have witnessed the rapid progress of end-to-end Neural Machine Translation (NMT). However, there exists discrepancy between training and inference in NMT when decoding, which may lead to serious problems since the model might be in a part of the state space it has never seen during training. To address the issue, Scheduled Sampling has been proposed. However, there are certain limitations in Scheduled Sampling and we propose two dynamic oracle-based methods to improve it. We manage to mitigate the discrepancy by changing the training process towards a less guided scheme and meanwhile aggregating the oracle’s demonstrations. Experimental results show that the proposed approaches improve translation quality over standard NMT system.

💡 Research Summary

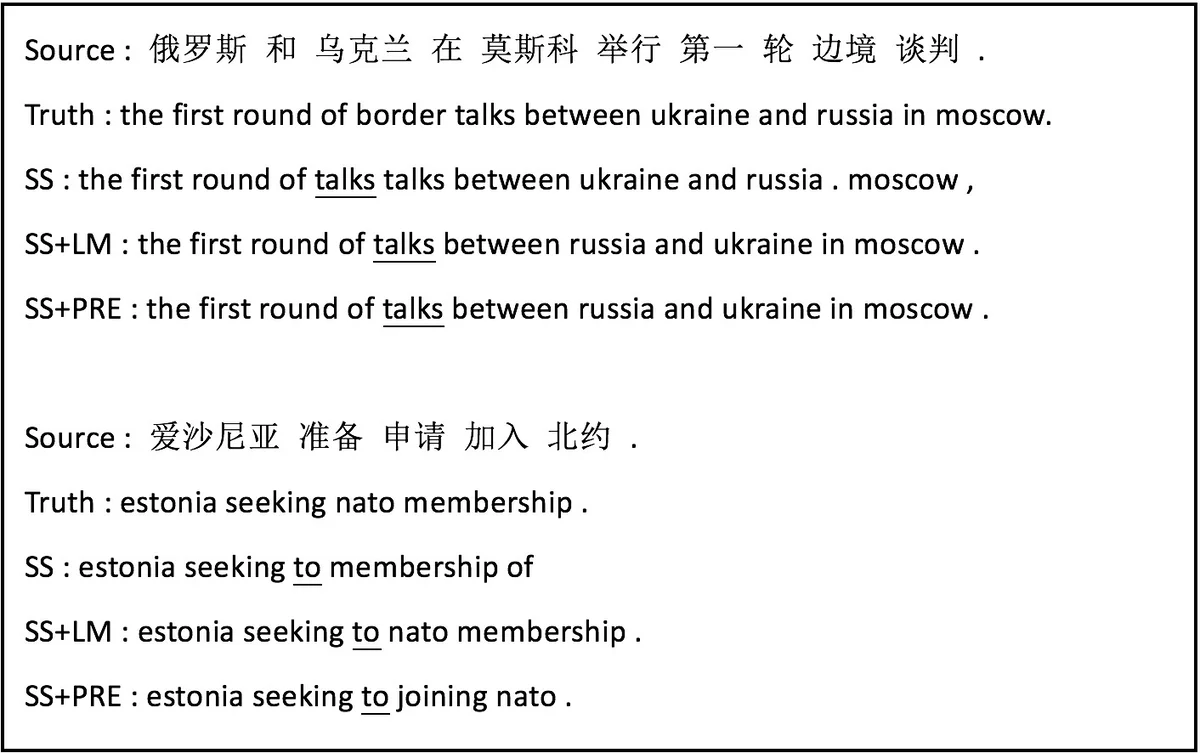

The paper addresses the well‑known exposure bias problem in neural machine translation (NMT), which arises from the mismatch between training and inference: during training the decoder always receives the ground‑truth previous token, while at test time it must rely on its own previous predictions. Scheduled Sampling (Bengio et al., 2015) mitigates this by randomly feeding the model’s own prediction with a probability that grows over training. However, the authors identify a critical limitation: even when a sampled prediction replaces the ground‑truth token, the subsequent input is still the original gold token, i.e., a static oracle is used. This can lead to incoherent training signals because the gold token may no longer be appropriate given the altered prefix.

To overcome this, the authors propose two dynamic‑oracle‑based extensions of Scheduled Sampling. A dynamic oracle supplies, at each time step, the most suitable token given the current (possibly erroneous) prefix, thereby keeping the training process consistent with the actual state the model will encounter at test time.

The first method uses a separately trained recurrent neural network language model (RNN‑LM) as the oracle. When the scheduled‑sampling decision selects the model’s own prediction, the LM is queried with the partially generated target sequence and returns the highest‑probability token. To preserve alignment with the source, the LM’s candidate set is restricted to words that appear in the source‑sentence reference, ensuring both fluency and relevance.

The second method replaces the LM with a pre‑trained NMT system. After a sampled prediction is taken, the pre‑trained translator generates the next token conditioned on both the source sentence and the current target prefix. This oracle is not limited to the source‑reference vocabulary, allowing richer, more diverse continuations that still respect source semantics. Consequently, this approach can inject translation diversity into training, alleviating the single‑reference constraint of standard NMT.

Both methods share a common algorithmic framework (Algorithm 1). At each decoding step t, a random number p∈

Comments & Academic Discussion

Loading comments...

Leave a Comment