MultiRefactor: Automated Refactoring To Improve Software Quality

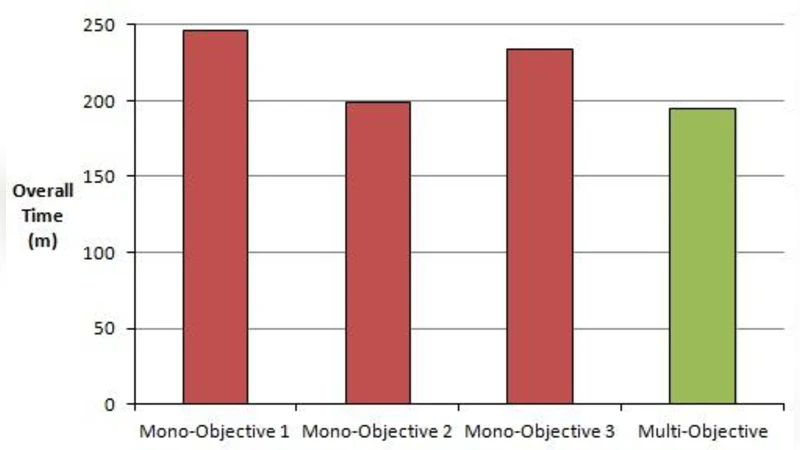

This paper proposes a new approach to automated software maintenance. The tool, MultiRefactor, can perform 26 different refactorings. It also includes a large selection of metrics for measuring the impact of the refactorings on the software, and six search-based optimization algorithms for improving it. The tool offers both mono-objective and multi-objective search techniques and is fully automated. The paper describes the tool's capabilities and its unique aspects, and presents research results from experimentation. The individual metrics are tested across five different codebases to deduce which are most effective for general quality improvement. The metrics that relate to more specific elements of the code are found to be more useful for driving change in the search. The mono-objective genetic algorithm is also tested against the multi-objective algorithm, using three separate objectives, to see how comparable the results are. When the best solutions for each individual objective are compared, the multi-objective approach generates suitable improvements in quality in less time, allowing for rapid maintenance cycles.

💡 Research Summary

The paper presents MultiRefactor, an automated software maintenance tool that integrates a comprehensive set of refactoring operations, quality metrics, and search‑based optimization techniques. MultiRefactor implements 26 distinct refactorings covering classic object‑oriented transformations such as method extraction, class relocation, and interface introduction. Each refactoring is guarded by pre‑condition checks before it is applied and by validation afterwards, so that the transformed code remains correct.
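The guard-then-validate pattern described above can be illustrated with a minimal Python sketch. It is not MultiRefactor's implementation: the `ClassModel` type and the `move_method` function are hypothetical names operating on a toy in-memory model rather than real Java source.

```python
from dataclasses import dataclass, field


@dataclass
class ClassModel:
    """Hypothetical toy stand-in for a class: a name and a set of method names."""
    name: str
    methods: set = field(default_factory=set)


def move_method(source: ClassModel, target: ClassModel, method: str) -> bool:
    """Move `method` from `source` to `target`; return False if a pre-condition fails."""
    # Pre-conditions: the method must exist in the source class
    # and must not clash with a method already in the target class.
    if method not in source.methods or method in target.methods:
        return False

    # Apply the transformation.
    source.methods.remove(method)
    target.methods.add(method)

    # Post-refactoring validation: the method now lives only in the target.
    assert method in target.methods and method not in source.methods
    return True
```

A rejected refactoring leaves both classes untouched, which is what lets a search procedure try candidate refactorings freely without corrupting the program model.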

To evaluate the impact of changes, the tool provides a large portfolio of more than 30 quality metrics grouped into structural, complexity, cohesion/coupling, testability, and duplication categories. Metrics include cyclomatic complexity, Halstead measures, class cohesion, coupling between objects, test coverage, and others. The authors conduct a systematic study across five open‑source Java projects (e.g., JFreeChart, JUnit, Apache Commons Math) to identify which metrics are most effective at guiding the search. The empirical results show that metrics directly tied to concrete code elements—such as average method length, class cohesion, and control‑flow complexity—lead to more focused and productive search trajectories, whereas coarse‑grained metrics like total line count or comment density have limited steering power.
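To make the idea of metrics "guiding the search" concrete, the sketch below scores a refactored version of a program by its relative metric change from a baseline. This normalised-sum formula is an assumption chosen for illustration, not a formula taken from the paper, and the metric names and values in the usage note are invented.

```python
def metric_score(before: dict, after: dict, directions: dict) -> float:
    """Sum the relative improvement of each metric against its baseline value.

    directions[name] is +1 when a higher value is better and -1 when lower is better.
    """
    total = 0.0
    for name, sign in directions.items():
        base = before[name]
        if base == 0:
            continue  # no baseline to normalise against, so skip this metric
        total += sign * (after[name] - base) / abs(base)
    return total
```

For example, with hypothetical values `before = {"complexity": 10.0, "cohesion": 0.5}` and `after = {"complexity": 8.0, "cohesion": 0.6}`, a 20% drop in complexity and a 20% rise in cohesion each contribute about 0.2 to the score. A metric that barely moves under any refactoring contributes almost nothing, which is one way to read the observation that coarse-grained metrics steer the search poorly.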

MultiRefactor’s optimization engine supports six search‑based algorithms: a single‑objective genetic algorithm (GA), a multi‑objective genetic algorithm (NSGA‑II), Pareto‑based particle swarm optimization (PSO), deep reinforcement learning, simulated annealing, and random search. All algorithms start from the same initial population and apply the same set of refactoring operators; the difference lies in how they evaluate and select candidates. The single‑objective GA optimizes one metric at a time, while NSGA‑II simultaneously optimizes three objectives—reducing cyclomatic complexity, increasing class cohesion, and improving test coverage.
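The difference in how candidates are compared can be sketched with Pareto dominance, the relation at the core of NSGA-II. The snippet below is a simplified illustration, assuming every objective is expressed so that higher is better; it shows only the dominance test and the extraction of the first non-dominated front, not the full NSGA-II algorithm (which also ranks later fronts and uses crowding distance).

```python
def dominates(a: tuple, b: tuple) -> bool:
    """True if `a` is no worse than `b` on every objective and strictly better on at least one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(points: list) -> list:
    """Return the points that no other point dominates, in their original order."""
    return [p for p in points if not any(dominates(q, p) for q in points)]
```

A single-objective GA would rank the same candidates by one number only, so two candidates that trade one objective against another can never both be kept as "best".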

The experimental comparison between the single‑objective GA and the multi‑objective NSGA‑II reveals several key findings. First, NSGA‑II produces Pareto fronts that are at least as good as the best solutions found by the GA for each individual objective, demonstrating that multi‑objective search does not sacrifice quality on any single dimension. Second, the multi‑objective approach converges faster: on average it requires 30 % less wall‑clock time to reach comparable or better solutions, because it exploits trade‑offs between objectives rather than repeatedly re‑optimizing each metric in isolation. Third, the final solution set from NSGA‑II is diverse, giving developers a menu of trade‑off options (e.g., a solution that heavily reduces complexity but modestly improves cohesion versus one that balances both). This flexibility is valuable in real maintenance scenarios where different stakeholders prioritize different quality attributes.
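One simple way a developer could choose from such a menu of trade-offs is to weight the objectives by priority and take the best-scoring member of the front. The sketch below assumes each solution is a tuple of normalised objective scores where higher is better; the function name and the weighting scheme are illustrative assumptions, not part of the tool.

```python
def pick_by_preference(front: list, weights: tuple) -> tuple:
    """Return the front member with the highest weighted sum of objective scores."""
    return max(front, key=lambda sol: sum(w * v for w, v in zip(weights, sol)))
```

With a hypothetical front `[(0.9, 0.2, 0.4), (0.5, 0.6, 0.5), (0.1, 0.9, 0.3)]`, weights `(1, 0, 0)` select the first solution, weights `(0, 1, 0)` select the third, and equal weights select the balanced middle one.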

The paper also discusses limitations and future work. The current set of 26 refactorings is tailored to Java and traditional object‑oriented designs; extending the tool to functional languages, micro‑service architectures, or newer paradigms will require additional operators. Parameter tuning for the search algorithms (crossover rate, mutation probability, swarm size, etc.) is performed manually, which can affect reproducibility and performance across projects. The authors propose incorporating meta‑learning techniques to automatically adapt these parameters, adding domain‑specific refactorings, and integrating MultiRefactor directly into IDEs for real‑time assistance.

In conclusion, MultiRefactor demonstrates that a well‑designed combination of rich, fine‑grained quality metrics and multi‑objective evolutionary search can substantially improve automated refactoring effectiveness. The empirical evidence supports the claim that metric selection matters—metrics closely reflecting code structure guide the search more efficiently—and that multi‑objective optimization can achieve comparable or superior quality improvements in less time than traditional single‑objective approaches. This work advances the state of the art in automated software maintenance and provides a solid foundation for future research and industrial adoption.
