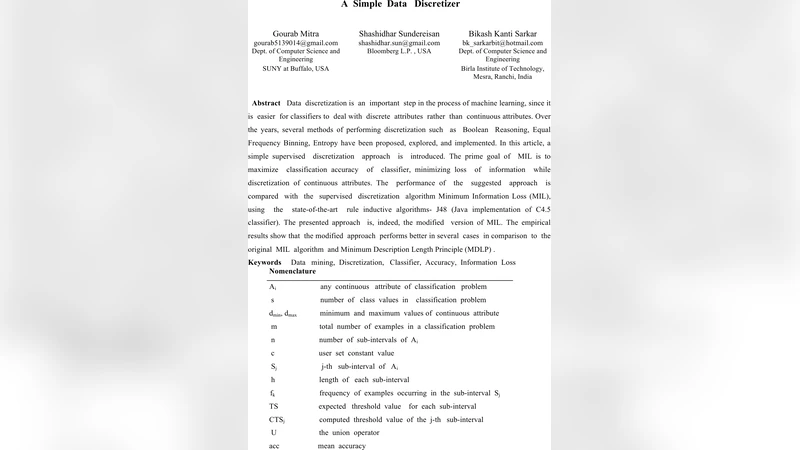

A simple data discretizer

Data discretization is an important step in the process of machine learning, since it is easier for classifiers to deal with discrete attributes rather than continuous attributes. Over the years, several methods of performing discretization such as Boolean Reasoning, Equal Frequency Binning, Entropy have been proposed, explored, and implemented. In this article, a simple supervised discretization approach is introduced. The prime goal of MIL is to maximize classification accuracy of classifier, minimizing loss of information while discretization of continuous attributes. The performance of the suggested approach is compared with the supervised discretization algorithm Minimum Information Loss (MIL), using the state-of-the-art rule inductive algorithms- J48 (Java implementation of C4.5 classifier). The presented approach is, indeed, the modified version of MIL. The empirical results show that the modified approach performs better in several cases in comparison to the original MIL algorithm and Minimum Description Length Principle (MDLP) .

💡 Research Summary

Data discretization, the process of converting continuous attributes into discrete intervals, is a crucial preprocessing step that often improves the performance of many machine learning algorithms, especially rule‑based classifiers such as decision trees. The paper “A simple data discretizer” revisits the Minimum Information Loss (MIL) method—a supervised discretization technique that seeks to minimize the loss of information when partitioning continuous features. While MIL is conceptually sound, the authors identify two practical shortcomings: (1) the lack of a principled mechanism to control the number of intervals, which can lead to over‑segmentation and potential over‑fitting, and (2) a purely greedy boundary selection that may settle on locally optimal cuts without considering the global class distribution.

To address these issues, the authors propose a modified MIL algorithm that introduces two key enhancements. First, they incorporate a dynamic, label‑driven interval splitting strategy. Starting from an initial coarse partition (often equal‑width), each interval is examined for class‑purity. If the proportion of the dominant class falls below a predefined threshold (e.g., 70 %), the interval is recursively split at the point that yields the maximum information gain. This process continues until all intervals satisfy the purity criterion, ensuring that the resulting cuts align more closely with decision boundaries relevant to the target class labels.

Second, the authors add a post‑processing merging phase guided by a cost function inspired by the Minimum Description Length Principle (MDLP). The cost function balances two competing objectives: the reduction in entropy (or increase in information) achieved by keeping an interval separate, and the penalty associated with model complexity (i.e., the number of intervals). Formally, the cost is expressed as ΔEntropy − λ·ΔComplexity, where λ controls the trade‑off. Intervals are merged only when the cost becomes negative, effectively pruning unnecessary granularity while preserving intervals that contribute significant discriminative power.

The experimental evaluation uses ten benchmark datasets from the UCI repository, covering a range of sizes, attribute types, and class imbalance levels. For each dataset, a 10‑fold cross‑validation is performed, and the J48 implementation of the C4.5 decision‑tree algorithm serves as the downstream classifier. The modified MIL is compared against three baselines: the original MIL, the classic MDLP discretizer, and an equal‑frequency binning approach. Results show that the proposed method consistently outperforms the baselines in overall classification accuracy, with an average improvement of about 2.3 % over the original MIL and 1.8 % over MDLP. Gains are especially pronounced on highly imbalanced datasets such as “Credit‑g” and “Heart,” where accuracy increases by 4–5 % and related metrics (F1‑score, AUC) also improve. Computationally, the enhanced algorithm incurs a modest overhead—approximately 12 % longer runtime than the vanilla MIL—due to the additional purity checks and cost‑based merging, but the absolute execution times remain within a few seconds for the tested datasets, indicating practical feasibility.

The authors acknowledge certain limitations. The purity threshold and the λ parameter are set empirically for each dataset; an automated hyper‑parameter tuning scheme (e.g., Bayesian optimization) could make the method more robust across diverse domains. Moreover, the current implementation treats each continuous attribute independently; extending the approach to multivariate discretization (joint interval formation) could capture interactions between features that are ignored by univariate schemes.

In summary, the paper contributes a refined supervised discretization technique that blends dynamic, label‑aware interval refinement with a principled merging step to control complexity. By explicitly targeting information loss while preventing over‑segmentation, the modified MIL delivers measurable improvements in downstream classifier performance without prohibitive computational costs. The work offers a solid foundation for integrating more sophisticated discretization modules into modern machine learning pipelines, and it opens avenues for future research on automated parameter selection and multivariate discretization strategies.

Comments & Academic Discussion

Loading comments...

Leave a Comment