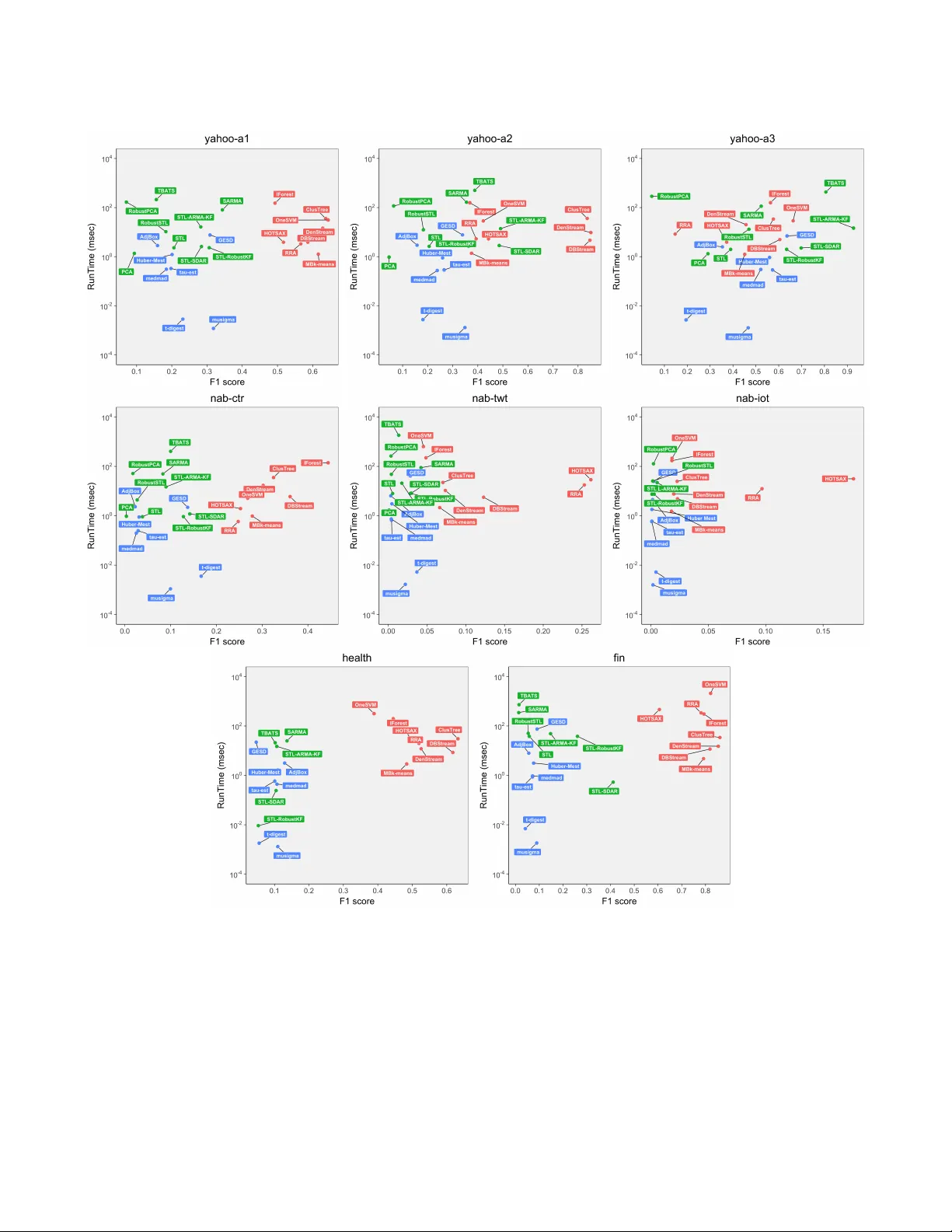

On the Runtime-Efficacy Trade-off of Anomaly Detection Techniques for Real-Time Streaming Data

The ever-growing volume and velocity of data, coupled with the decreasing attention span of end users, underscore the critical need for real-time analytics. In this regard, anomaly detection plays a key role, both as an application in its own right and as a means to verify data …

Authors: Dhruv Choudhary, Arun Kejariwal, Francois Orsini