Implementing an Edge-Fog-Cloud architecture for stream data management

The Internet of Moving Things (IoMT) requires support for a data life cycle process ranging from sorting, cleaning and monitoring data streams to more complex tasks such as querying, aggregation, and analytics. Current solutions for stream data management in IoMT have focused on partial aspects of the data life cycle, with special emphasis on sensor networks. This paper addresses this gap by developing a streaming data life cycle process built on an edge/fog/cloud architecture, which is needed to handle heterogeneous, streaming, and geographically dispersed IoMT devices. We propose a 3-tier architecture that supports instant intra-layer communication, establishing a real-time stream data flow that responds to immediate data life cycle tasks in the system. Communication and process are thus the defining factors in the design of our stream data management solution for IoMT. We describe and evaluate our prototype implementation using real-time transit data feeds. Preliminary results show the advantages of running data life cycle tasks to reduce the volume of redundant data streams that should not be transported to the cloud.

💡 Research Summary

The paper addresses the growing need for comprehensive stream‑data management in the Internet of Moving Things (IoMT), where heterogeneous sensors generate continuous, geographically dispersed data streams. Existing solutions typically focus on isolated aspects such as data collection or simple filtering, leaving the full data‑life‑cycle (ingestion, cleaning, real‑time response, and long‑term analytics) unaddressed. To fill this gap, the authors propose a three‑tier Edge‑Fog‑Cloud architecture that aligns each tier with specific stages of the data‑life‑cycle and emphasizes ultra‑low‑latency intra‑layer communication.

At the Edge layer, raw sensor readings are immediately subjected to noise reduction, format normalization, and threshold‑based filtering. Redundant or irrelevant records are discarded locally, dramatically reducing upstream traffic. The Fog layer aggregates the cleaned edge streams, performing windowed aggregations, spatial clustering, and event detection that provide regional, near‑real‑time insights. It also acts as a smart broker, applying dynamic transmission policies (e.g., only forwarding data when certain conditions are met). Finally, the Cloud layer stores the fog‑processed streams for batch analytics, machine‑learning model training, and long‑term visualization, leveraging scalable storage and compute resources.
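The edge and fog stages described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' code: the `Reading` record, the 120 km/h plausibility threshold, the five-decimal coordinate rounding used for normalization, the 60-second tumbling window, and the `min_count` forwarding rule are all hypothetical choices standing in for the paper's noise reduction, threshold filtering, windowed aggregation, and dynamic transmission policy.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Reading:
    """Hypothetical vehicle position reading."""
    vehicle_id: str
    ts: int            # epoch seconds
    lat: float
    lon: float
    speed_kmh: float


def edge_filter(readings: Iterable[Reading],
                max_speed_kmh: float = 120.0) -> Iterator[Reading]:
    """Edge stage: drop implausible readings and repeats of a vehicle's last position."""
    last_pos: dict[str, tuple[float, float]] = {}
    for r in readings:
        # Threshold filter: treat physically implausible speeds as sensor noise.
        if not 0.0 <= r.speed_kmh <= max_speed_kmh:
            continue
        # Normalize coordinates to 5 decimals (~1 m) so near-identical fixes compare equal.
        pos = (round(r.lat, 5), round(r.lon, 5))
        # Redundancy filter: skip if the vehicle has not moved since its last kept reading.
        if last_pos.get(r.vehicle_id) == pos:
            continue
        last_pos[r.vehicle_id] = pos
        yield r


def fog_aggregate(readings: Iterable[Reading], window_s: int = 60,
                  min_count: int = 1) -> list[dict]:
    """Fog stage: per-vehicle tumbling-window summaries, filtered by a forwarding rule."""
    buckets: dict[tuple[str, int], list[Reading]] = defaultdict(list)
    for r in readings:
        window_start = r.ts - r.ts % window_s
        buckets[(r.vehicle_id, window_start)].append(r)
    summaries = []
    for (vid, start), rs in sorted(buckets.items()):
        # Hypothetical transmission policy: forward only windows with enough readings.
        if len(rs) < min_count:
            continue
        summaries.append({
            "vehicle_id": vid,
            "window_start": start,
            "count": len(rs),
            "avg_speed_kmh": sum(r.speed_kmh for r in rs) / len(rs),
        })
    return summaries


raw = [
    Reading("bus-7", 0, 45.0, -75.0, 30.0),
    Reading("bus-7", 10, 45.0, -75.0, 0.0),      # stationary repeat: dropped at edge
    Reading("bus-7", 20, 45.001, -75.0, 300.0),  # implausible speed: dropped at edge
    Reading("bus-7", 70, 45.002, -75.0, 25.0),
]
kept = list(edge_filter(raw))
print(len(kept), fog_aggregate(kept))
```

In this toy input the edge stage keeps two of four readings, and the fog stage emits one summary per vehicle per window. Raising `min_count` acts as a simple transmission policy that suppresses sparse windows before anything is sent to the cloud.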

A key technical contribution is the hybrid messaging backbone that combines MQTT for lightweight edge‑to‑fog communication with Apache Kafka for reliable fog‑to‑cloud streaming. In the reported measurements this design keeps latency below 20 ms between edge and fog nodes and below 50 ms from fog to cloud, meeting the response requirements of many IoMT applications. The system also exposes RESTful and gRPC interfaces for service interoperability.
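The fog node's broker role can be illustrated without a running broker. The sketch below uses only the Python standard library and assumes a hypothetical MQTT topic layout `transit/<agency>/<vehicle>/<kind>` on the edge side. It shows two steps a fog bridge would need: matching incoming topics against an MQTT subscription filter (`+` matches exactly one level, `#` as the final level matches the remainder) and turning each matched message into a keyed record for a Kafka topic. The Kafka topic name, the partition count, and the CRC32-based partition choice are assumptions for illustration; a real deployment would hand the key to a Kafka client and let its partitioner choose the partition.

```python
import json
import zlib

NUM_PARTITIONS = 6  # hypothetical partition count of the cloud-facing Kafka topic


def mqtt_topic_matches(topic_filter: str, topic: str) -> bool:
    """MQTT-style filter matching: '+' matches one level, a final '#' matches the rest.

    Simplified: ignores the rule that wildcards do not match '$'-prefixed topics.
    """
    f_levels = topic_filter.split("/")
    t_levels = topic.split("/")
    for i, level in enumerate(f_levels):
        if level == "#":
            # '#' is only valid as the last level; it also matches the parent level.
            return i == len(f_levels) - 1
        if i >= len(t_levels):
            return False
        if level != "+" and level != t_levels[i]:
            return False
    return len(f_levels) == len(t_levels)


def to_kafka_record(topic: str, payload: bytes) -> tuple[str, str, int, bytes]:
    """Map a hypothetical 'transit/<agency>/<vehicle>/<kind>' message to a keyed record.

    CRC32 gives a stable partition for this demo; it is not the hash used by
    Kafka's default partitioner.
    """
    _, agency, vehicle, kind = topic.split("/")
    key = f"{agency}:{vehicle}"
    partition = zlib.crc32(key.encode()) % NUM_PARTITIONS
    value = json.dumps({"kind": kind, "data": json.loads(payload)}).encode()
    return f"transit.{kind}", key, partition, value


subscription = "transit/+/+/position"
incoming = [
    ("transit/agency-a/bus-7/position", b'{"lat": 45.0, "lon": -75.0}'),
    ("transit/agency-a/bus-7/eta", b'{"stop": "S1", "eta_s": 120}'),
]
for topic, payload in incoming:
    # Only messages matching the fog node's subscription are forwarded upstream.
    if mqtt_topic_matches(subscription, topic):
        print(to_kafka_record(topic, payload))
```

Keying records by vehicle sends all readings from one vehicle to the same partition, and Kafka preserves order within a partition, which is what per-vehicle analytics in the cloud typically need.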

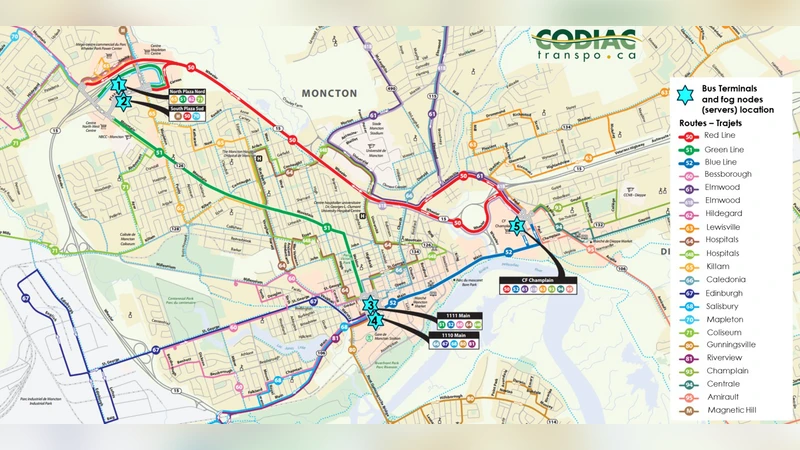

The authors validate the architecture with a prototype that consumes live public transit feeds (bus GPS positions and arrival predictions). Experiments show that edge‑side filtering eliminates roughly 30 % of duplicate data, while fog‑side aggregation removes an additional 20 %, resulting in an overall 45 % reduction in data transmitted to the cloud. Latency measurements confirm average edge‑to‑fog delays of 15 ms and fog‑to‑cloud delays of 40 ms. Importantly, the predictive analytics performed in the cloud on the reduced dataset achieve comparable or slightly improved accuracy relative to processing the full raw stream, demonstrating that early‑stage pruning does not sacrifice analytical quality.
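The reported percentages are easiest to reconcile if the fog‑side 20 % is read as a share of the stream that survives edge filtering. Under that assumption (ours, not stated in the summary) the stages compound multiplicatively: the cloud receives 0.7 × 0.8 = 0.56 of the raw volume, an overall reduction of about 44 %, close to the stated 45 %. An additive reading, where both shares refer to the raw stream, would instead give 50 %. The arithmetic is:

```python
def compound_reduction(*stage_reductions: float) -> float:
    """Overall fraction removed when each stage drops a fraction of what it receives."""
    remaining = 1.0
    for r in stage_reductions:
        remaining *= 1.0 - r
    return 1.0 - remaining


multiplicative = compound_reduction(0.30, 0.20)  # edge 30 %, then fog 20 % of the rest
additive = 0.30 + 0.20                           # if both shares referred to the raw stream
print(f"{multiplicative:.0%} vs {additive:.0%}")  # 44% vs 50%
```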

The discussion acknowledges several open challenges: scaling fog nodes to balance load across a large geographic area, ensuring end‑to‑end security (encryption, authentication), supporting a broader range of IoMT protocols, and maintaining data consistency over long periods. Future work is outlined to incorporate multi‑cloud deployments, edge‑resident AI inference, and automated policy adaptation based on workload characteristics.

In summary, the proposed Edge‑Fog‑Cloud framework delivers a cost‑effective, low‑latency solution for end‑to‑end stream data management in IoMT, simultaneously reducing network bandwidth consumption and preserving the fidelity required for downstream analytics.
