Efficient Construction of Simultaneous Deterministic Finite Automata on Multicores Using Rabin Fingerprints

In this paper, we propose several optimizations for the SFA construction algorithm, which greatly reduce the in-memory footprint and the processing steps required to construct an SFA. We introduce fingerprints as a space- and time-efficient way to represent SFA states. To compute fingerprints, we apply the Barrett reduction algorithm and accelerate it using recent additions to the x86 instruction set architecture. We exploit fingerprints to introduce hashing for further optimizations. Our parallel SFA construction algorithm is nonblocking and utilizes instruction-level, data-level, and task-level parallelism of coarse-, medium- and fine-grained granularity. We adapt static workload distributions and align the SFA data-structures with the constraints of multicore memory hierarchies, to increase the locality of memory accesses and facilitate HW prefetching. We conduct experiments on the PROSITE protein database for FAs of up to 702 FA states to evaluate performance and effectiveness of our proposed optimizations. Evaluations have been conducted on a 4 CPU (64 cores) AMD Opteron 6378 system and a 2 CPU (28 cores, 2 hyperthreads per core) Intel Xeon E5-2697 v3 system. The observed speedups over the sequential baseline algorithm are up to 118541x on the AMD system and 2113968x on the Intel system.

💡 Research Summary

The paper addresses the longstanding scalability problem of constructing Simultaneous Deterministic Finite Automata (SFAs), which are used to parallelize DFA‑based pattern matching by simulating n parallel DFA instances for an original DFA with n states. While SFAs enable massive parallel matching, their construction suffers from exponential state growth (up to O(nⁿ) states) and consequently prohibitive memory consumption and runtime. The authors propose a suite of optimizations that together make SFA construction tractable on modern multicore servers.
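To make the state-growth problem concrete, the following is a minimal sketch of a sequential SFA construction, assuming the DFA is given as a transition table `delta[q][a]`. The function name `build_sfa_naive` and the table layout are illustrative assumptions, not the authors' code. Each SFA state is a vector `f` where `f[q]` is the DFA state reached when starting from `q`, and duplicates are found by linear search.

```python
from collections import deque

def build_sfa_naive(delta, alphabet_size):
    """Build an SFA from a DFA transition table (hypothetical helper).

    delta[q][a] is the DFA successor of state q on symbol a.
    Returns (states, transitions), where states[i] is the vector of
    SFA state i and transitions[i][a] is its successor id on symbol a.
    """
    n = len(delta)
    start = tuple(range(n))      # identity mapping: no input consumed yet
    states = [start]             # list index doubles as the SFA state id
    transitions = []
    work = deque([0])            # ids of states not yet expanded
    while work:
        sid = work.popleft()
        f = states[sid]
        row = []
        for a in range(alphabet_size):
            # Advance all n simulated DFA instances by one symbol.
            g = tuple(delta[q][a] for q in f)
            if g in states:                  # linear search over known states
                gid = states.index(g)
            else:
                gid = len(states)
                states.append(g)
                work.append(gid)
            row.append(gid)
        transitions.append(row)
    return states, transitions

# A 2-state DFA whose SFA reaches the n^n = 4 upper bound.
example_delta = [[0, 1], [0, 0]]
example_states, example_transitions = build_sfa_naive(example_delta, 2)
```

For this two-state example the construction yields four distinct vectors, which is the full nⁿ bound. The linear membership test is the step that becomes the bottleneck as the number of SFA states grows.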

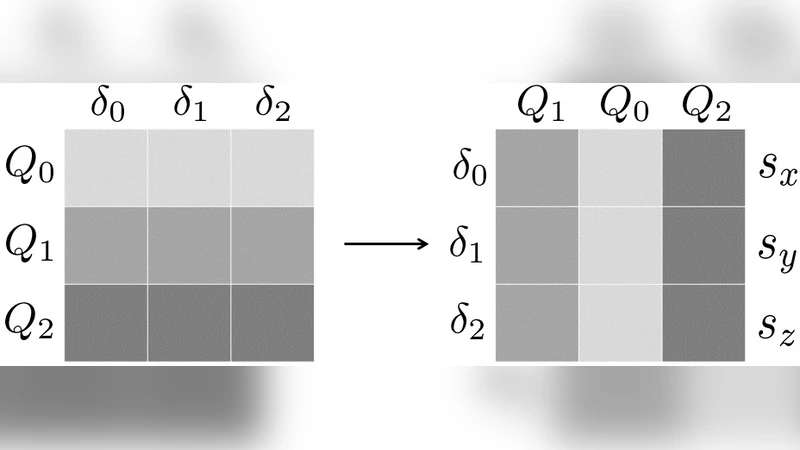

First, they introduce Rabin fingerprints as a compact 64‑bit representation of each SFA state, which is originally an n‑dimensional vector of DFA states. By interpreting the vector as a polynomial over GF(2) and reducing it modulo a randomly chosen irreducible polynomial, a fingerprint is obtained. The probability of a collision is bounded by n²/2ᵏ, making it negligible for k=64. To compute fingerprints efficiently, the authors adapt the Barrett reduction technique and exploit the x86 PCLMULQDQ instruction, which performs carry‑less multiplication in hardware. A folding method reduces arbitrarily large vectors to a single 64‑bit value, enabling fast fingerprint generation.
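The reduction step can be illustrated with a bit-by-bit reference version. This is a sketch under stated assumptions, not the hardware-accelerated method described above: it uses a fixed irreducible polynomial, x⁶⁴ + x⁴ + x³ + x + 1, instead of a randomly chosen one, packs each DFA state id into a hypothetical 16-bit field, and replaces Barrett reduction with plain shift-and-xor long division. The names `gf2_mod` and `fingerprint` are illustrative.

```python
# Assumption: a fixed degree-64 irreducible polynomial over GF(2),
# x^64 + x^4 + x^3 + x + 1. The paper chooses the polynomial at random.
K = 64
POLY = (1 << K) | 0b11011

def gf2_mod(value, poly=POLY, k=K):
    """Reduce the GF(2) polynomial encoded in the bits of `value` modulo `poly`."""
    for bit in range(value.bit_length() - 1, k - 1, -1):
        if (value >> bit) & 1:
            # Align the leading term of poly with this bit and cancel it.
            value ^= poly << (bit - k)
    return value

def fingerprint(state_vector, bits_per_entry=16):
    """Pack DFA state ids into one bit string and reduce it to k bits.

    bits_per_entry is an assumed field width; every id must fit in it.
    """
    packed = 0
    for q in state_vector:
        packed = (packed << bits_per_entry) | q
    return gf2_mod(packed)
```

Because all SFA state vectors of one automaton have the same length n, packing them at a fixed width keeps distinct vectors distinct before reduction. A production version would process the packed words with carry-less multiplication rather than one bit at a time.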

Second, they replace the naïve linear search for duplicate states with a hash table keyed by fingerprints. Because fingerprints are already 64‑bit integers, they can be used directly as hash keys, which reduces the cost of a duplicate check from O(|Qₛ|) to O(1) on average. In the rare case of a hash collision, a short linked‑list chain is traversed, but experimental results show collisions are virtually absent.
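A fingerprint-keyed table with chaining might look like the sketch below. The class name `StateTable`, the method `lookup_or_insert`, and the choice to confirm a match by comparing full state vectors inside a chain are assumptions made for illustration; the summary does not say how the authors resolve entries within a chain.

```python
class StateTable:
    """Maps a fingerprint to a chain of (state_vector, state_id) entries."""

    def __init__(self, fingerprint_fn):
        self._fp = fingerprint_fn   # any function: state vector -> integer key
        self._buckets = {}          # fingerprint -> list of (vector, id)
        self._count = 0             # number of distinct states stored

    def lookup_or_insert(self, state_vector):
        """Return (state_id, is_new) for the given state vector."""
        chain = self._buckets.setdefault(self._fp(state_vector), [])
        for vec, sid in chain:
            # Assumption: a full comparison guards against fingerprint collisions.
            if vec == state_vector:
                return sid, False
        sid = self._count
        self._count += 1
        chain.append((state_vector, sid))
        return sid, True

# Toy fingerprint with deliberate collisions, to exercise the chain.
demo = StateTable(lambda v: sum(v) % 4)
first = demo.lookup_or_insert((0, 1))    # new state
second = demo.lookup_or_insert((1, 0))   # same key, different vector
again = demo.lookup_or_insert((0, 1))    # duplicate found in the chain
```

With a 64-bit fingerprint in place of the toy function, chains are expected to hold a single entry almost always, so a lookup touches one bucket regardless of how many states exist.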

Third, the authors design a three‑level parallelization strategy. At the coarse‑grained level, the main while‑loop that extracts unprocessed states (Q_tmp) is parallelized across threads, allowing many cores to generate new states simultaneously as long as the work queue remains large. At the medium‑grained level, the inner for‑loop over the alphabet Σ is parallelized, so each thread processes a subset of symbols for a given state, improving cache locality because transition rows are stored contiguously. At the fine‑grained level, the fingerprint computation itself is vectorized using SIMD instructions; multiple 64‑bit words are processed in parallel by the PCLMULQDQ instruction, and subsequent reductions are performed with shift‑and‑xor operations, minimizing per‑state overhead.
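The coarse-grained level can be sketched as follows. This is a deliberately simplified, level-synchronous version: all unprocessed states of one round are expanded concurrently, and new states are then registered in a sequential merge. The authors' algorithm is described as nonblocking, so the merge step here is an assumption made for brevity, and in CPython the global interpreter lock limits the real speedup of threads. The names `successors` and `build_sfa_parallel` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor

def successors(delta, alphabet_size, f):
    """All successor vectors of SFA state f, one per input symbol."""
    return [tuple(delta[q][a] for q in f) for a in range(alphabet_size)]

def build_sfa_parallel(delta, alphabet_size, workers=4):
    """Level-synchronous SFA construction (simplified sketch)."""
    start = tuple(range(len(delta)))
    ids = {start: 0}            # state vector -> SFA state id
    transitions = {}            # SFA state id -> list of successor ids
    frontier = [start]          # states discovered but not yet expanded
    with ThreadPoolExecutor(max_workers=workers) as pool:
        while frontier:
            # Coarse-grained step: expand every frontier state concurrently.
            expanded = pool.map(
                lambda f: successors(delta, alphabet_size, f), frontier)
            next_frontier = []
            # Sequential merge (simplification): register newly found states.
            for f, succ in zip(frontier, expanded):
                row = []
                for g in succ:
                    if g not in ids:
                        ids[g] = len(ids)
                        next_frontier.append(g)
                    row.append(ids[g])
                transitions[ids[f]] = row
            frontier = next_frontier
    return ids, transitions
```

The medium-grained level would split the loop over symbols inside `successors` across threads, and the fine-grained level would vectorize the per-state fingerprint computation; neither is shown here.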

In addition to parallelism, the authors address memory hierarchy concerns. They statically partition the state space among NUMA nodes, align data structures to cache‑line boundaries, and arrange the hash table and state vectors in contiguous memory blocks to enable hardware prefetching. This reduces memory latency and prevents bandwidth saturation, which is critical when scaling to dozens of cores.

The experimental evaluation uses patterns extracted from the PROSITE protein sequence database, with DFA sizes up to 702 states. Two platforms are tested: an AMD Opteron 6378 system with 4 sockets (64 physical cores) and an Intel Xeon E5‑2697 v3 system with 2 sockets (28 cores, 2 hyperthreads per core). Compared with a sequential baseline implementation, the optimized SFA construction achieves speedups of up to 118541× (about 1.185 × 10⁵) on the AMD machine and 2113968× (about 2.114 × 10⁶) on the Intel machine. Memory consumption is reduced by roughly 70 % due to fingerprint compression. Scaling experiments show near‑linear speedup as cores increase, and hash‑collision overhead remains negligible.

The paper concludes that the combination of Rabin fingerprint compression, hash‑based O(1) duplicate detection, and multi‑level parallelism transforms SFA construction from an intractable preprocessing step into a practical operation on commodity multicore servers. The authors suggest future work on probabilistic collision verification, GPU‑accelerated carry‑less multiplication, and dynamic work‑stealing schedulers to handle even larger DFAs (thousands to tens of thousands of states). Overall, the contribution is a significant step toward scalable, parallel regular‑expression matching in domains such as bioinformatics, network security, and high‑performance text processing.
