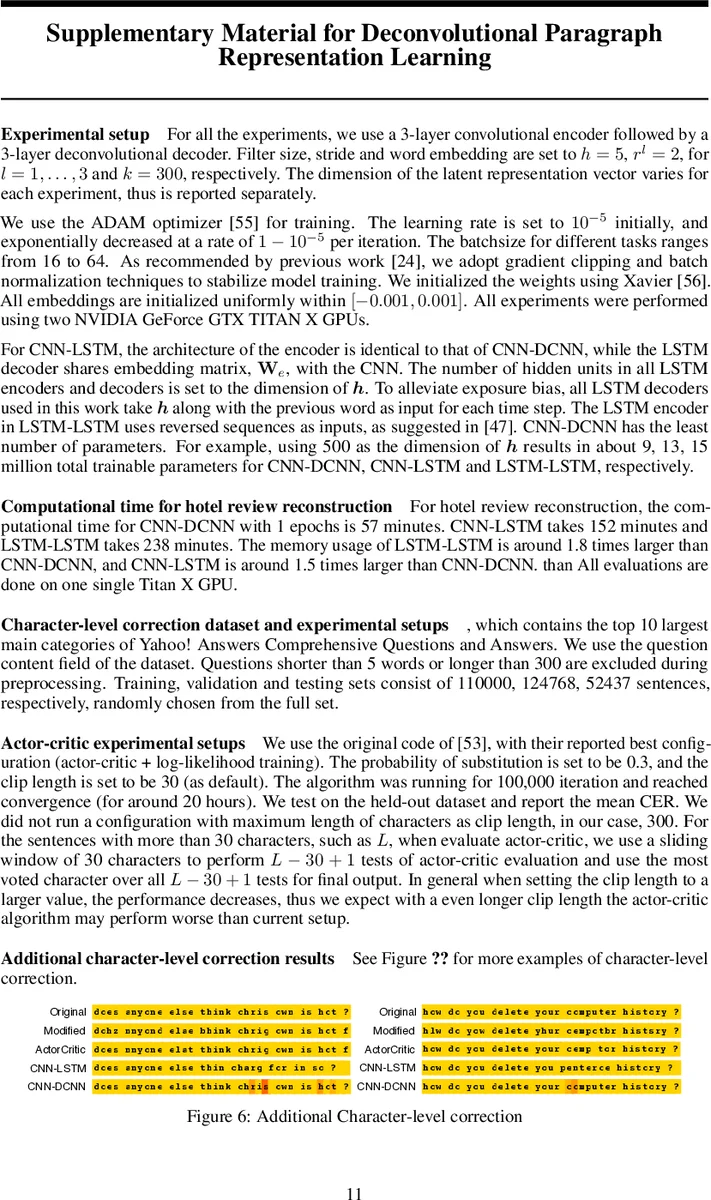

Deconvolutional Paragraph Representation Learning

Learning latent representations from long text sequences is an important first step in many natural language processing applications. Recurrent Neural Networks (RNNs) have become a cornerstone for this challenging task. However, the quality of sentences during RNN-based decoding (reconstruction) decreases with the length of the text. We propose a sequence-to-sequence, purely convolutional and deconvolutional autoencoding framework that is free of the above issue, while also being computationally efficient. The proposed method is simple, easy to implement and can be leveraged as a building block for many applications. We show empirically that compared to RNNs, our framework is better at reconstructing and correcting long paragraphs. Quantitative evaluation on semi-supervised text classification and summarization tasks demonstrate the potential for better utilization of long unlabeled text data.

💡 Research Summary

The paper addresses a fundamental limitation of recurrent neural network (RNN) based autoencoders for long text: during decoding the model suffers from exposure bias and teacher‑forcing artifacts, causing reconstruction quality to deteriorate as sequence length grows. To eliminate these issues, the authors propose a fully convolutional sequence‑to‑sequence autoencoder that uses a deep 1‑D convolutional neural network (CNN) as encoder and a transposed‑convolution (deconvolution) network as decoder.

Encoder design. Each input sentence is first transformed into a word‑embedding matrix X∈ℝ^{k×T}. A stack of L convolutional layers with filter size h, stride r(l) and number of filters p_l progressively reduces the temporal dimension while increasing the channel dimension. The final layer collapses the spatial dimension to 1, yielding a fixed‑size latent vector h∈ℝ^{p_L} that aggregates information from the entire paragraph regardless of its original length. This hierarchical processing mimics the way CNNs capture low‑level n‑gram patterns in early layers and higher‑level semantic or syntactic structures in deeper layers.

Decoder design. The decoder mirrors the encoder with L transposed‑convolution layers. Starting from h, each deconvolution layer upsamples the representation using the same filter sizes and strides, ultimately reconstructing a matrix ˆX that has the same shape as the original embedding matrix. Columns of ˆX are L2‑normalized, enabling a cosine‑similarity based softmax:

p(ˆw_t = v) = exp

Comments & Academic Discussion

Loading comments...

Leave a Comment