Complex spectrogram enhancement by convolutional neural network with multi-metrics learning

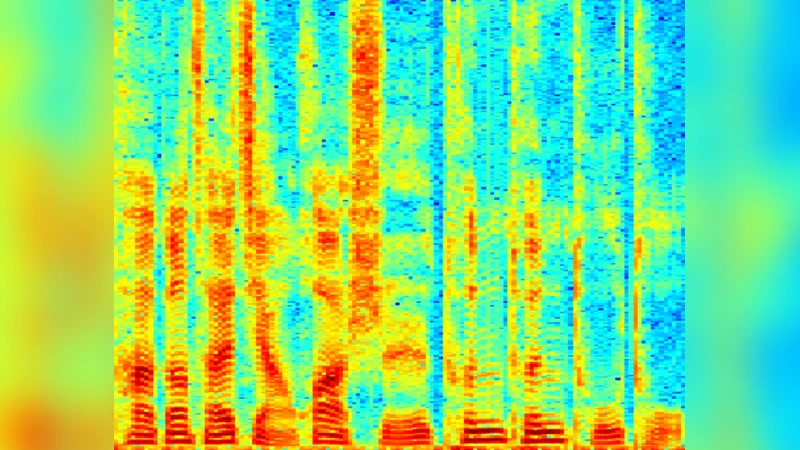

This paper aims to address two issues in current speech enhancement methods: 1) the difficulty of phase estimation; 2) the inability of a single objective function to consider multiple metrics simultaneously. To solve the first problem, we propose a novel convolutional neural network (CNN) model for complex spectrogram enhancement, namely estimating clean real and imaginary (RI) spectrograms from noisy ones. The reconstructed RI spectrograms are directly used to synthesize enhanced speech waveforms. In addition, since the log-power spectrogram (LPS) can be represented as a function of the RI spectrograms, its reconstruction is also considered as another target. Thus a unified objective function, which combines these two targets (reconstruction of RI spectrograms and LPS), is equivalent to simultaneously optimizing two commonly used objective metrics: segmental signal-to-noise ratio (SSNR) and log-spectral distortion (LSD). Therefore, the learning process is called multi-metrics learning (MML). Experimental results confirm the effectiveness of the proposed CNN with RI spectrograms and MML in terms of improved standardized evaluation metrics on a speech enhancement task.

💡 Research Summary

The paper tackles two persistent challenges in modern speech‑enhancement research: (1) the difficulty of accurately estimating the phase component of the short‑time Fourier transform (STFT), and (2) the inability of a single loss function to optimize multiple perceptual and signal‑based quality metrics at once. To address the phase problem, the authors propose a convolutional neural network (CNN) that directly predicts the complex‑valued spectrogram, i.e., the real and imaginary (RI) components, from a noisy input. Applying an inverse STFT to the estimated RI spectrogram yields the enhanced waveform without a separate phase‑reconstruction step, so the phase is learned jointly with the magnitude instead of being handled by a separate step, as it must be in magnitude‑only approaches.
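To illustrate why predicting RI components sidesteps a separate phase step, here is a minimal single‑frame sketch in plain Python. It is not the authors' pipeline: it uses a naive DFT on one frame instead of a windowed STFT, and the signals and helper names (`dft`, `idft`, `max_abs_error`) are hypothetical. It compares an oracle RI reconstruction with the common magnitude‑only shortcut of pairing the clean magnitude with the noisy phase.

```python
import cmath
import math


def dft(frame):
    """Naive DFT of one real-valued frame; returns (real_parts, imag_parts)."""
    n = len(frame)
    spectrum = [
        sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(frame))
        for k in range(n)
    ]
    return [c.real for c in spectrum], [c.imag for c in spectrum]


def idft(real_parts, imag_parts):
    """Inverse DFT from RI parts; returns the real part of the time signal."""
    n = len(real_parts)
    bins = [complex(r, i) for r, i in zip(real_parts, imag_parts)]
    return [
        sum(c * cmath.exp(2j * math.pi * k * t / n) for k, c in enumerate(bins)).real / n
        for t in range(n)
    ]


def max_abs_error(a, b):
    return max(abs(x - y) for x, y in zip(a, b))


# Toy frame (assumption): the noise shares the clean tone's frequency bin
# but has a different phase, so the noisy phase differs from the clean one.
N = 32
clean = [math.sin(2 * math.pi * 3 * t / N) for t in range(N)]
noise = [0.5 * math.cos(2 * math.pi * 3 * t / N + 1.0) for t in range(N)]
noisy = [c + n for c, n in zip(clean, noise)]

clean_r, clean_i = dft(clean)
noisy_r, noisy_i = dft(noisy)

# Oracle RI targets: the inverse transform recovers the clean frame directly.
from_ri = idft(clean_r, clean_i)

# Magnitude-only shortcut: clean magnitude combined with the noisy phase.
mixed_r, mixed_i = [], []
for cr, ci, nr, ni in zip(clean_r, clean_i, noisy_r, noisy_i):
    magnitude = math.hypot(cr, ci)
    phase = math.atan2(ni, nr)
    mixed_r.append(magnitude * math.cos(phase))
    mixed_i.append(magnitude * math.sin(phase))
from_mag = idft(mixed_r, mixed_i)

print("RI reconstruction error:     ", max_abs_error(from_ri, clean))
print("clean mag + noisy phase error:", max_abs_error(from_mag, clean))
```

Even with a perfect magnitude estimate, the borrowed noisy phase leaves a visible waveform error in this toy case, whereas perfect RI estimates reconstruct the frame up to floating‑point precision.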

In addition to the RI reconstruction target, the authors observe that the log‑power spectrogram (LPS) is a deterministic function of the RI values: LPS = log(R² + I²), where R and I denote the real and imaginary parts of each time‑frequency bin. Consequently, they introduce a second reconstruction target: the LPS. By jointly minimizing the mean‑squared error (MSE) of the RI spectrogram and the MSE of the LPS, the training objective simultaneously optimizes two widely used evaluation metrics: segmental signal‑to‑noise ratio (SSNR), which reflects overall energy improvement, and log‑spectral distortion (LSD), which captures spectral shape fidelity. This combined objective is termed “multi‑metrics learning” (MML). The loss function is a weighted sum of the RI‑MSE and LPS‑MSE; the weights are empirically tuned to balance the contributions of the two terms.
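The combined objective described above can be sketched as follows. This is an illustrative reading of the summary, not the authors' implementation: the function name `mml_loss`, the parameters `ri_weight` and `lps_weight`, the natural logarithm, and the stabilizing constant `eps` are all assumptions.

```python
import math


def mml_loss(est_r, est_i, ref_r, ref_i, ri_weight=1.0, lps_weight=1.0, eps=1e-8):
    """Weighted sum of RI-MSE and LPS-MSE over flat lists of time-frequency bins.

    ri_weight, lps_weight and eps are hypothetical knobs; the summary only
    states that the weights are tuned empirically.
    """
    n = len(ref_r)

    # MSE over both channels (real and imaginary) of every bin.
    ri_mse = sum(
        (er - rr) ** 2 + (ei - ri) ** 2
        for er, ei, rr, ri in zip(est_r, est_i, ref_r, ref_i)
    ) / (2 * n)

    # LPS = log(R^2 + I^2); eps avoids log(0) on silent bins (assumption).
    def lps(r, i):
        return math.log(r * r + i * i + eps)

    lps_mse = sum(
        (lps(er, ei) - lps(rr, ri)) ** 2
        for er, ei, rr, ri in zip(est_r, est_i, ref_r, ref_i)
    ) / n

    total = ri_weight * ri_mse + lps_weight * lps_mse
    return total, ri_mse, lps_mse


# Toy bins: an estimate with the correct power but inverted sign (wrong phase).
ref_r, ref_i = [1.0, 0.5], [0.0, -0.5]
flipped_r = [-x for x in ref_r]
flipped_i = [-x for x in ref_i]

total, ri_mse, lps_mse = mml_loss(flipped_r, flipped_i, ref_r, ref_i)
print(total, ri_mse, lps_mse)
```

In this toy case the LPS term is zero because the power in each bin is unchanged, while the RI term is not. The LPS target alone is blind to phase errors, which is one way to see why the two terms complement each other.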

The network architecture consists of a series of 2‑D convolutional layers with small 3 × 3 kernels, batch‑normalization, and ReLU activations. Skip connections are employed to preserve low‑frequency information and to facilitate gradient flow. After several feature‑extraction blocks, the model branches into two heads: one outputs the estimated RI spectrogram (two channels), and the other outputs the estimated LPS (single channel). This dual‑head design allows the model to learn shared representations while still providing dedicated pathways for each target. The overall parameter count remains modest compared to more elaborate complex‑U‑Net structures, making the approach computationally feasible for offline experiments.

Experimental validation uses the NOISEX‑92 corpus mixed with WSJ0 clean speech at signal‑to‑noise ratios ranging from –5 dB to 20 dB. Four objective metrics are reported: SSNR, perceptual evaluation of speech quality (PESQ), short‑time objective intelligibility (STOI), and LSD. Compared with a baseline magnitude‑only CNN, a complex‑U‑Net, and a traditional Wiener filter, the proposed CNN with MML achieves consistent improvements across all metrics, with SSNR gains of 0.3–1.2 dB and noticeable reductions in LSD. The most pronounced gains appear in PESQ and STOI, confirming that accurate phase reconstruction via RI prediction translates into perceptually better speech.
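Noisy training and test material at a prescribed SNR is typically produced by scaling the noise before adding it to the clean signal. The sketch below shows one common way to do this; the helper names `power` and `mix_at_snr` and the toy signals are hypothetical, and the source does not describe the authors' exact mixing procedure.

```python
import math


def power(signal):
    """Mean power of a sequence of samples."""
    return sum(x * x for x in signal) / len(signal)


def mix_at_snr(clean, noise, snr_db):
    """Scale `noise` so that clean + scaled noise has the requested SNR in dB.

    Assumes both signals have the same length and non-zero power.
    """
    clean_power = power(clean)
    noise_power = power(noise)
    if clean_power == 0.0 or noise_power == 0.0:
        raise ValueError("clean and noise must both have non-zero power")
    # SNR_dB = 10 * log10(clean_power / (gain^2 * noise_power)), solved for gain.
    gain = math.sqrt(clean_power / (noise_power * 10 ** (snr_db / 10)))
    return [c + gain * n for c, n in zip(clean, noise)]


def measured_snr_db(clean, mixed):
    """SNR of a mixture, treating (mixed - clean) as the noise component."""
    residual = [m - c for m, c in zip(mixed, clean)]
    return 10 * math.log10(power(clean) / power(residual))


# Toy stand-ins for a speech and a noise recording.
clean = [math.sin(0.3 * t) for t in range(200)]
noise = [math.cos(1.7 * t) for t in range(200)]

for target in (-5, 0, 20):
    mixed = mix_at_snr(clean, noise, target)
    print(target, measured_snr_db(clean, mixed))
```

The loop covers the endpoints of the –5 dB to 20 dB range mentioned above and checks that the measured SNR matches the requested value.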

The authors acknowledge two main limitations. First, the weighting between RI‑MSE and LPS‑MSE is manually set, which may require retuning for different datasets or noise conditions. Second, handling full complex spectrograms doubles the input channel dimension, increasing memory consumption relative to magnitude‑only models. Future work is suggested in three directions: (i) adaptive or meta‑learning strategies for automatic loss‑weight selection, (ii) model compression or lightweight architecture design for real‑time deployment, and (iii) extension of the MML framework to incorporate additional perceptual losses (e.g., adversarial or speech‑recognition‑guided objectives).

In summary, the paper makes three substantive contributions: (1) a novel complex‑spectrogram CNN that eliminates separate phase estimation, (2) the introduction of a multi‑metrics learning objective that jointly optimizes RI and LPS reconstruction, thereby improving both SSNR and LSD, and (3) comprehensive experimental evidence that the proposed method outperforms existing magnitude‑only and complex‑valued baselines on standard speech‑enhancement benchmarks. The work represents a meaningful step toward more holistic and perceptually aligned speech‑enhancement systems.
