Exploiting Low-dimensional Structures to Enhance DNN Based Acoustic Modeling in Speech Recognition

We propose to model the acoustic space of deep neural network (DNN) class-conditional posterior probabilities as a union of low-dimensional subspaces. To that end, the training posteriors are used for dictionary learning and sparse coding. Sparse rep…

Authors: Pranay Dighe, Gil Luyet, Afsaneh Asaei

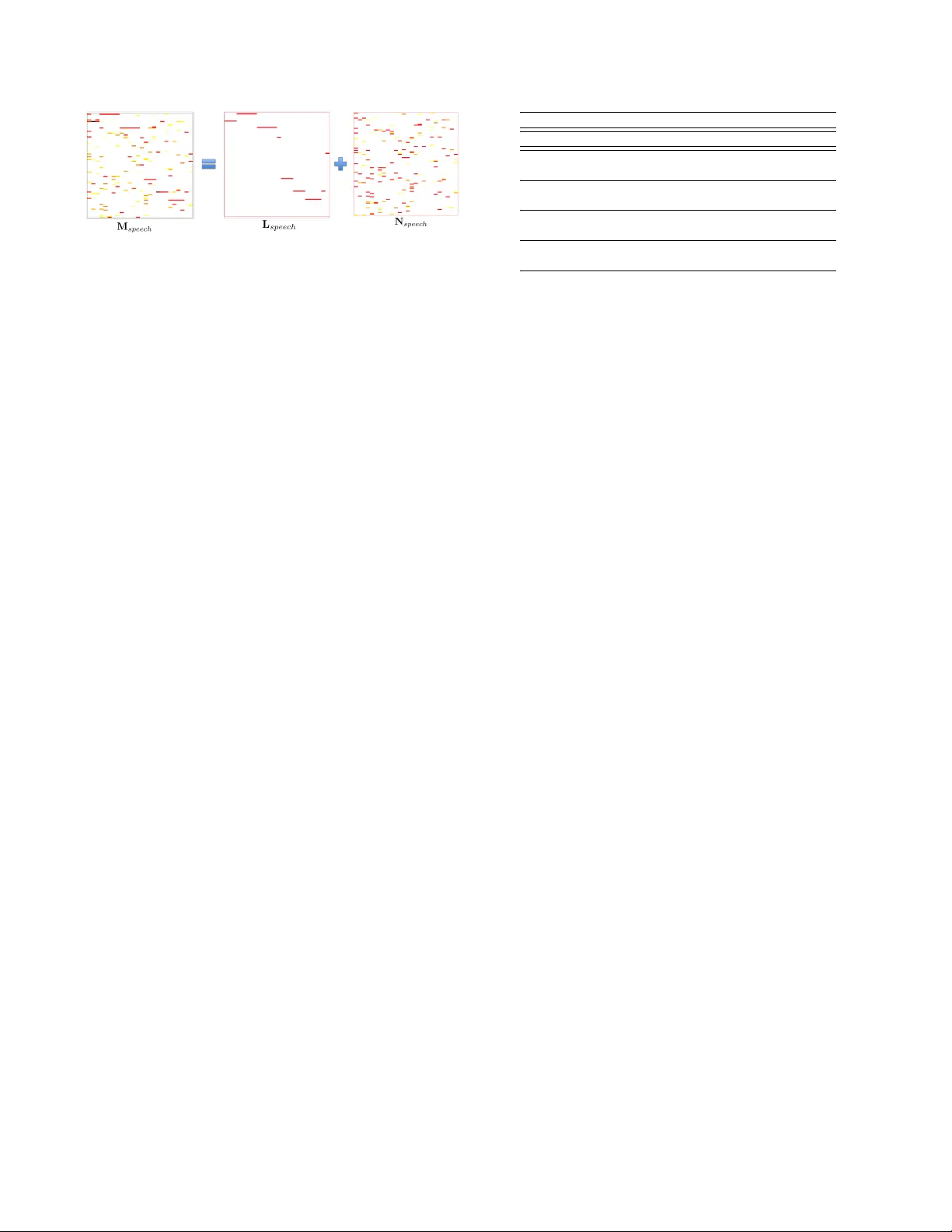

Pranay Dighe⋆◦, Gil Luyet†⋆, Afsaneh Asaei⋆, Hervé Bourlard⋆◦
⋆ Idiap Research Institute, Martigny, Switzerland
◦ École Polytechnique Fédérale de Lausanne (EPFL), Switzerland
† University of Fribourg, Switzerland
{pranay.dighe, afsaneh.asaei, herve.bourlard}@idiap.ch, gil.luyet@unifr.ch

ABSTRACT
We propose to model the acoustic space of deep neural network (DNN) class-conditional posterior probabilities as a union of low-dimensional subspaces. To that end, the training posteriors are used for dictionary learning and sparse coding. Sparse representation of the test posteriors using this dictionary enables projection to the space of training data. Relying on the fact that the intrinsic dimensions of the posterior subspaces are indeed very small and the matrix of all posteriors belonging to a class has a very low rank, we demonstrate how low-dimensional structures enable further enhancement of the posteriors and rectify spurious errors due to mismatched conditions. The enhanced acoustic modeling method leads to improvements in a continuous speech recognition task using the hybrid DNN-HMM (hidden Markov model) framework in both clean and noisy conditions, where up to 15.4% relative reduction in word error rate (WER) is achieved.

Index Terms: Sparse coding, Dictionary learning, Deep neural network, Union of low-dimensional subspaces, Acoustic modeling.

1. INTRODUCTION
The need for sparse representations in acoustic modeling has been consistently advocated as a way to better characterize the underlying low-dimensional, parsimonious structure of speech [1, 2, 3, 4]. Two major emerging trends, namely deep neural networks (DNNs) and exemplar-based sparse modeling, are different approaches to exploiting sparsity in speech representations to achieve invariance, discrimination and noise separation [5, 4, 6].
On the other hand, speech utterances are formed as a union of words, which in turn consist of phonetic components and sub-phonetic attributes. Each linguistic component is produced through the activation of a few highly constrained articulatory mechanisms, leading to the generation of speech data in a union of low-dimensional subspaces [7, 8, 9]. However, most existing speech classification and acoustic modeling methods do not explicitly take into account the multi-subspace structure of the data.

The present study focuses on exploiting the multi-subspace low-dimensional structure of speech learned from the training data to enhance DNN-based acoustic modeling of unseen test data. This approach also has the potential to enable domain adaptation and to handle mismatch in the framework of DNN-based acoustic modeling.

1.1. Prior Works
Sparse representations have proven powerful as features for acoustic modeling. As argued in [2], if data is projected into a high-dimensional space, the underlying structures are disentangled. These structures form a union of low-dimensional subspaces which model the non-linear manifold on which speech data resides. Prior work on sparse representation includes exemplar-based methods [3, 10], where sparse representations learned from spectral features achieve promising performance in automatic speech recognition (ASR), especially owing to their robustness to noise and corruption.

Recent advances in DNN-based acoustic modeling rely on the estimation of highly sparse sub-word class-conditional posterior probabilities. While conventional Gaussian mixture models (GMMs) are statistically inefficient in modeling data lying on or near non-linear manifolds [11, 7, 8], DNNs achieve accurate sparse acoustic modeling through multiple layers of non-linear transformations [12].
The hidden layers of a DNN successively learn underlying structures at different levels and express them as increasingly invariant and discriminative representations towards the deeper layers. While sparsity constraints during DNN training are mostly employed as regularization to prevent overfitting, various studies have shown that sparsity in DNN architectures directly contributes to simpler networks and superior performance in ASR. The successful application of sparse activity [13] (very few neurons being active) and sparse connectivity [14] (very few non-zero weights), as well as the better performance of sparsity-inducing techniques such as dropout training [15], confirm the belief that "sparser" is better for acoustic modeling in ASR.

1.2. Motivation and Contributions
We point out two issues with state-of-the-art DNN-based acoustic models which motivate further consideration of sparse modeling:

Q1. Previous studies [16, 17] have found sparse activations in DNNs by showing how individual neurons in hidden layers learn to be selectively active, in different ways, towards distinct phone patterns. Since this sparsification learned by the hidden layers is not explicitly hand-crafted, we ask: to what extent is the union of low-dimensional subspaces structure of speech actually exploited by DNNs?

Q2. Despite being effective in seen conditions, DNNs are found to be highly sensitive to unseen variations in data [12]. The mismatch condition causes erroneous estimates of posterior probabilities, which appear as spurious noise in the output posterior probabilities. Can we correct these errors through a low-dimensional model to improve acoustic modeling in noisy conditions?

In this paper, we address these issues by explicitly modeling the underlying structures in speech, using the prior knowledge that speech data lives in a union of low-dimensional subspaces.
We implement this idea using principled dictionary learning and sparse coding algorithms over DNN posterior probabilities to recover sparse representations whose non-zero values correspond to class-specific subspaces. These subspace-sparse representations are then used to enhance the original DNN posterior probabilities through dictionary-based reconstruction. We build upon compressive sensing and subspace sparse recovery theory to provide theoretical support for the validity of our approach. We also elaborate on our choice of features (DNN-based posterior probabilities) and of algorithms for dictionary learning [18] and structured sparse coding [19], which essentially distinguish our approach from previous exemplar-based sparse representation methods [20, 3, 10]. We demonstrate the performance improvements achieved by the proposed enhanced acoustic modeling in a hybrid DNN-HMM continuous ASR system using the Numbers'95 database [21], and show increased robustness in noisy conditions.

In the rest of the paper, the proposed subspace sparse acoustic modeling method is elaborated in Section 2. The experimental analysis is carried out in Section 3. Section 4 provides concluding remarks and directions for future work.

2. SUBSPACE SPARSE ACOUSTIC MODELING
In this section, we model the space of DNN class-conditional posterior probabilities as a union of low-dimensional subspaces. Relying on the subspace sparse representation, we show how the posteriors can be enhanced to obtain more accurate class-specific representations.

2.1. Subspace Sparse Representation
Speech features reside on or near non-linear manifolds, which can best be characterized by a union of low-dimensional subspaces.
The proposed approach relies on the fact that a data point in a union of subspaces can be reconstructed more efficiently using a sparse combination of data points from its own subspace than using data points from other subspaces, thus resulting in a subspace-sparse representation [22].

To state this more precisely, let $\mathcal{S} = \{\mathcal{S}_\ell\}_{\ell=1}^{L}$ be a set of linear disjoint subspaces associated with $L$ classes in $\mathbb{R}^m$, such that the dimensions $\{r_\ell\}_{\ell=1}^{L}$ of the individual subspaces are smaller than the dimension of the ambient space, i.e. $r_\ell < m$ for all $\ell$. Speech features $z$ lie in the union $\bigcup_{\ell=1}^{L} \mathcal{S}_\ell$ of these low-dimensional subspaces. Let $D_\ell \in \mathbb{R}^{m \times n_\ell}$ be the class-specific over-complete dictionary for subspace $\mathcal{S}_\ell$, where $n_\ell$ is the number of atoms in $D_\ell$ and $n_\ell > r_\ell$. Each data point in $\mathcal{S}_\ell$ can then be represented as a sparse linear combination of the atoms of $D_\ell$.

Defining the $\ell_1$-norm of a vector (denoted by $\|\cdot\|_1$) as the sum of the absolute values of its components, the subspace sparse recovery (SSR) property [22] for a union of disjoint subspaces asserts that the $\ell_1$-norm sparse representation of a data point over the collection of all class-specific dictionaries $\{D_\ell\}_{\ell=1}^{L}$ can separate the class-specific subspaces, by selecting atoms only from the underlying class of the data point for its reconstruction. Thus, the obtained sparse representations have activations only for the atoms corresponding to the actual subspace $\mathcal{S}_\ell$ in which $z$ lives.

Considering a speech utterance as a union of words, phones or sub-phonetic components, the subspaces $\mathcal{S}_\ell$ can be modeled at different levels (time granularities) corresponding to any of these speech units. Consequently, a dictionary $D$ can be constructed by learning basis sets $D_\ell$ for the individual classes. In the present study, we focus on context-dependent senones (cf. Section 2.2.1) for their superior quality in the DNN-HMM framework.
Nevertheless, there is no theoretical or algorithmic impediment to applying it to larger units such as words.

The rigorous proof of the SSR property (see Theorem 2 in [22]) requires certain conditions and assumptions on the disjoint subspaces. Since we train the DNN with binary senone target outputs, the intersection of senone subspaces is expected to be a rare event, which suggests that the subspaces are disjoint. Although further theoretical analysis is beyond the scope of the present work, the experiments conducted in Section 3 empirically confirm that the SSR property indeed holds for subspace-sparse modeling of senones.

2.2. Class-Specific Dictionary Learning
There are two key considerations for dictionary learning in sparse subspace acoustic modeling, namely the choice of features and the choice of algorithms.

2.2.1. Senone Posterior Probabilities as Speech Features
A posterior feature $z$ is a vector consisting of the class-conditional probabilities at the output layer of the DNN. In contrast to spectral features, posterior features have proven highly effective for sparse modeling [23, 24]. They are inherently sparse and invariant to the speaker and environmental conditions present in the DNN training data. Although we choose to work with posterior probabilities at the level of context-dependent senones (tied triphone states) [25], the theoretical underpinning of the proposed approach is applicable to any type of speech unit.

2.2.2. Dictionary Learning and Sparse Coding Algorithms
Building on our previous work on dictionary learning for sparse modeling of posterior features [23], we use the online dictionary learning algorithm [18] to solve the $\ell_1$ sparse coding problem expressed as

$$\arg\min_{D,\,A} \sum_{t=1}^{T} \left( \|z_t - D\alpha_t\|_2^2 + \lambda \|\alpha_t\|_1 \right) \quad \text{s.t.} \quad \|d_j\|_2^2 \le 1 \;\; \forall j \tag{1}$$

where $A = [\alpha_1 \dots \alpha_T]$ and $d_j$ denotes the $j$-th atom (column) of the dictionary $D$.
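As a rough illustration of Eq. (1), the sketch below alternates ISTA sparse coding of all columns (with $D$ fixed) and a block-coordinate update of each atom followed by projection onto the unit $\ell_2$ ball, in the spirit of the dictionary update used in [18]. It is a simplified batch version, not the online algorithm of [18] used in the paper; the function names, iteration counts, the value of $\lambda$ and the toy data are illustrative assumptions.

```python
import numpy as np


def soft_threshold(x, tau):
    """Proximal operator of tau * ||x||_1 (element-wise shrinkage)."""
    return np.sign(x) * np.maximum(np.abs(x) - tau, 0.0)


def sparse_code(Z, D, lam, n_iter=300):
    """ISTA for min_A sum_t ||z_t - D a_t||_2^2 + lam * ||a_t||_1 with D fixed."""
    # Gradient of ||Z - D A||_F^2 is 2 D^T (D A - Z); its Lipschitz constant is 2 ||D||_2^2.
    step = 1.0 / (2.0 * np.linalg.norm(D, 2) ** 2)
    A = np.zeros((D.shape[1], Z.shape[1]))
    for _ in range(n_iter):
        A = soft_threshold(A - step * 2.0 * D.T @ (D @ A - Z), step * lam)
    return A


def update_dictionary(Z, D, A):
    """One block-coordinate pass over the atoms, keeping ||d_j||_2 <= 1."""
    G = A @ A.T  # Gram matrix of the sparse codes
    B = Z @ A.T
    D = D.copy()
    for j in range(D.shape[1]):
        if G[j, j] < 1e-12:  # atom unused by every code: leave it unchanged
            continue
        u = D[:, j] + (B[:, j] - D @ G[:, j]) / G[j, j]
        D[:, j] = u / max(np.linalg.norm(u), 1.0)  # project onto the unit ball
    return D


def learn_dictionary(Z, n_atoms, lam, n_outer=20, seed=0):
    """Alternate the A-step and D-step of Eq. (1); Z holds one posterior per column.

    Assumption: a simplified batch sketch, not the online algorithm of [18].
    """
    rng = np.random.default_rng(seed)
    # Initialise the atoms with randomly chosen training posteriors.
    D = Z[:, rng.choice(Z.shape[1], n_atoms, replace=False)].copy()
    D /= np.maximum(np.linalg.norm(D, axis=0), 1.0)
    for _ in range(n_outer):
        A = sparse_code(Z, D, lam)
        D = update_dictionary(Z, D, A)
    return D, sparse_code(Z, D, lam)


# Hypothetical usage: 200 random 20-dimensional "posteriors", 30 atoms (over-complete).
rng = np.random.default_rng(1)
Z = np.abs(rng.normal(size=(20, 200)))
Z /= Z.sum(axis=0)  # each column sums to one, like a posterior vector
D, A = learn_dictionary(Z, n_atoms=30, lam=0.01)
```

In a class-specific setting of the kind described next, such a routine would be run once per senone on that senone's aligned training posteriors.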
Class-specific senone posterior features are obtained through GMM-HMM based forced alignment of the training data and are then used to learn an individual over-complete basis set $D_\ell$ for each senone subspace $\mathcal{S}_\ell$ with the dictionary learning algorithm. These class-specific dictionaries are concatenated into a larger dictionary $D = [D_1 \cdots D_\ell \cdots D_L]$ for subspace-sparse acoustic modeling. Since any posterior feature obtained from the DNN lies in the union of subspaces $\bigcup_{\ell=1}^{L} \mathcal{S}_\ell$, a test posterior feature $z$ can be reconstructed using the atoms of dictionary $D$. According to the SSR property, only the atoms associated with the correct class (underlying subspace) of $z$ will be used for its sparse representation.

Note that the dictionary learning approach is fundamentally different from constructing a dictionary from a random subset of training features [3, 10]: we use all of the training data to compute an over-complete basis set that is far smaller than the actual collection size (less than 3% in the case of the Numbers'95 database), yet more effective for sparse representation [23].

2.3. Enhanced Acoustic Modeling
We use the group-sparsity based hierarchical Lasso algorithm [19] for sparse coding, to enforce group sparsity in $\alpha$ based on the internal partitioning of dictionary $D$ into senone-specific sub-dictionaries $D_\ell$. The high-dimensional group-sparse representation $\alpha$ is computed for each DNN output posterior feature $z$ by sparse recovery over $D$. The projection of a test posterior feature $z$ onto the training data space is given by $D\alpha$.

Fig. 1. DNN output senone posteriors $z$ are projected to the space of training posteriors using $D\alpha$. The resulting projected posteriors are used for standard decoding in the DNN-HMM framework.

Note that $D\alpha$ is an approximation of the posterior feature $z$ based on $\ell_1$-norm sparse reconstruction using the atoms of $D$.
Consequently, it has the same dimension as $z$, and it is forced to lie in the probability simplex by normalization. Figure 1 summarizes this procedure.

3. EXPERIMENTAL ANALYSIS
In this section, we provide an empirical analysis of the theoretical results established in Section 2. These experiments confirm that the information-bearing components of DNN class-conditional probabilities indeed live in a very low-dimensional space. Exploiting this structure enables enhancement of DNN-based acoustic models and removes the effect of high-dimensional noise, leading to improved DNN-HMM speech recognition performance.

3.1. Database and Speech Features
We use the Numbers'95 database for this study, where only the utterances consisting of digits are considered (more details in [23]). The phone set includes 27 phones, and accordingly 557 context-dependent tied states, referred to as senones, are learned by forced alignment of the training data using the Kaldi speech recognition toolkit [26]. A DNN with 3 hidden layers of 1024 nodes each is trained using sequence-discriminative training [27]. For every 10 ms speech frame, the DNN input is a vector of MFCC + Δ + ΔΔ features with a context of 9 frames (39 × 9 = 351 dimensions). The DNN output is a vector of posterior probabilities corresponding to the 557 senone classes. We use the DNN posteriors as features $z$ for dictionary learning and sparse coding.

3.2. Low-rank Posterior Reconstruction
As explained in Sections 2.2–2.3, DNN posteriors are used to learn senone-specific dictionaries $D_\ell$ from the training data. The number of atoms $n_\ell$ in each senone dictionary $D_\ell$ is approximately 100. A value of $\lambda = 0.2$, optimized on development data, was used for sparse coding to obtain the sparse representations $\alpha$. Subsequently, the projected posterior probabilities $D\alpha$ are computed for the test data.
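A minimal sketch of this projection step, under stated assumptions: class-specific dictionaries are concatenated into $D$; a sparse-group Lasso (an $\ell_1$ penalty plus a per-class $\ell_2$ group penalty, solved by proximal gradient) stands in for the hierarchical Lasso of [19]; the reconstruction $D\alpha$ is clipped at a small positive floor before being renormalized onto the probability simplex (the paper does not say how negative entries are handled); and an α-sum vector is formed per class. The toy dictionaries, the penalty weights and all function names are hypothetical and do not reproduce the paper's setup (557 senones, about 100 atoms each, $\lambda = 0.2$).

```python
import numpy as np


def soft_threshold(x, tau):
    # Element-wise shrinkage: proximal operator of tau * ||x||_1.
    return np.sign(x) * np.maximum(np.abs(x) - tau, 0.0)


def group_sparse_code(z, D, groups, lam_group, lam_l1, n_iter=500):
    """Proximal gradient for the sparse-group Lasso
        min_a ||z - D a||_2^2 + lam_group * sum_g ||a_g||_2 + lam_l1 * ||a||_1,
    used here as a stand-in (assumption) for the hierarchical Lasso of [19].
    `groups` holds the column indices of each class sub-dictionary D_l inside D.
    """
    step = 1.0 / (2.0 * np.linalg.norm(D, 2) ** 2)
    a = np.zeros(D.shape[1])
    for _ in range(n_iter):
        # Gradient step on the data term, then element-wise l1 shrinkage ...
        v = soft_threshold(a - step * 2.0 * D.T @ (D @ a - z), step * lam_l1)
        # ... then group shrinkage, which can switch off a whole class block.
        for g in groups:
            norm = np.linalg.norm(v[g])
            v[g] = 0.0 if norm <= step * lam_group else v[g] * (1.0 - step * lam_group / norm)
        a = v
    return a


def project_posterior(z, D, groups, lam_group, lam_l1, floor=1e-8):
    """Return D @ alpha renormalised onto the probability simplex, plus alpha."""
    alpha = group_sparse_code(z, D, groups, lam_group, lam_l1)
    p = np.maximum(D @ alpha, floor)  # assumption: clip negatives before normalising
    return p / p.sum(), alpha


def alpha_sum(alpha, groups):
    """Sum the coefficients of alpha within each class block (an alpha-sum vector)."""
    return np.array([alpha[g].sum() for g in groups])


# Toy data (hypothetical): 3 classes, 12-dimensional posteriors, 5 atoms per class;
# class l is supported on output dimensions 4*l .. 4*l+3.
rng = np.random.default_rng(0)
m, n_classes, n_atoms = 12, 3, 5
blocks = []
for l in range(n_classes):
    B = np.zeros((m, n_atoms))
    B[4 * l:4 * l + 4, :] = rng.random((4, n_atoms))
    blocks.append(B)
D = np.hstack(blocks)  # D = [D_1 ... D_L]
D /= np.linalg.norm(D, axis=0)  # unit-norm atoms
groups = [np.arange(l * n_atoms, (l + 1) * n_atoms) for l in range(n_classes)]

z = D[:, groups[1]] @ rng.random(n_atoms)  # a point from the class-1 subspace ...
z = np.abs(z + 0.01 * rng.standard_normal(m))  # ... plus small spurious noise
z /= z.sum()
p, alpha = project_posterior(z, D, groups, lam_group=0.02, lam_l1=0.01)
print(np.argmax(alpha_sum(alpha, groups)))  # expected: 1
```

On this toy data the class blocks of $D$ have disjoint supports, so the coefficients of the two wrong classes are driven to (or near) zero and the α-sum vector peaks at the true class, which is the behaviour the SSR property predicts.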
Sparsity leads to the selection of a few subspaces of the training data, resulting in new test posteriors which (1) live in low dimensions, (2) are projected onto the subspace of the training posteriors, and (3) are separated from the subspaces of other senone classes. We investigate these properties below through further analysis.

To provide insight into the dimension of the senone subspaces, we construct matrices of 1000 class-specific senone posteriors and compute the number of singular values required to preserve 95% of the variability of the data. Because the posteriors have a skewed distribution, we take their logarithm prior to singular value decomposition. We refer to the number of required singular values as (roughly) the "Rank" of the senone matrices. An ideal posterior feature should have its maximum component at the index of its associated class. Hence, we group the posteriors as "correct" if the maximum component corresponds to the correct class and "incorrect" if it corresponds to an incorrect class. Table 1 shows the average number of required singular values over all senones for DNN and projected posteriors. Another approach, referred to as robust PCA based posteriors, is discussed in the next section.

We can see that the "correct" posteriors live in a space of far lower dimension than the space of "incorrect" posteriors. In other words, "correct" senone posteriors have fewer information-bearing components, resulting in matrices of lower rank than those of "incorrect" posteriors. Given that both ranks are very low compared to the dimension of the senone posteriors (557), the larger rank of the "incorrect" posteriors indicates that they are exposed to high-dimensional spurious noise. Therefore, to enhance the posterior probabilities, the low-dimensional subspace has to be modeled (identified) and the posterior has to be projected onto that space.
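The "Rank" measure and the correct/incorrect grouping described above might be computed as in the following sketch. It assumes that "95% of the variability" means 95% of the sum of squared singular values of the (uncentred) log-posterior matrix, and it floors the posteriors before taking the log; the paper does not specify these details, and the helper names are hypothetical.

```python
import numpy as np


def effective_rank(P, energy=0.95, floor=1e-10):
    """Number of singular values needed to keep `energy` of the variability of log(P).

    P has one posterior vector per row (e.g. 1000 frames of one senone).
    Assumptions: variability is measured by squared singular values, the log
    matrix is not mean-centred, and posteriors are floored to avoid log(0).
    """
    s = np.linalg.svd(np.log(np.maximum(P, floor)), compute_uv=False)
    cumulative = np.cumsum(s ** 2) / np.sum(s ** 2)
    # First index where the cumulative share reaches `energy`, converted to a count.
    return int(np.searchsorted(cumulative, energy) + 1)


def split_correct(P, label):
    """Split frames of senone `label` into "correct" (argmax == label) and "incorrect"."""
    correct = P.argmax(axis=1) == label
    return P[correct], P[~correct]


# Hypothetical usage: a 3-class toy matrix whose rows take only two distinct values.
P = np.array([[0.7, 0.2, 0.1], [0.3, 0.6, 0.1]] * 50)
good, bad = split_correct(P, label=0)
print(len(good), len(bad), effective_rank(P))  # 50 50 2
```

In the paper's setting, `effective_rank` would be applied separately to the "correct" and "incorrect" subsets of each senone and the results averaged over senones.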
To further investigate the subspaces selected for sparse recovery, the values in the sparse representation $\alpha$ for each class are summed to form α-sum vectors, and the "Rank" of the senone-specific α-sum matrices is computed. According to the SSR property, sparse recovery is expected to select only the subspace of the underlying class, so that each α-sum vector is non-zero only at its own class and the "Rank" of each senone-specific α-sum matrix should be 1. Indeed, we found that the empirical results averaged over the whole test set conformed to this theoretical insight, indicating that subspace sparse recovery leads to the selection of the subspaces belonging to the underlying senone classes.

Class-specific dictionary learning for sparse coding enables us to model the non-linear manifold of the training data as a union of low-dimensional subspaces. A DNN posterior $z$ from the test data may not lie on this manifold due to the presence of high-dimensional noise embedded in its components. It is important to extract the low-dimensional structure in $z$ while separating out the effect of noise. Sparse coding does exactly this by finding the true underlying subspaces in the sparse representation $\alpha$, and it enables projecting $z$ onto the class-specific subspace of the training data manifold via the reconstruction $D\alpha$.

3.3. Low-rank and Sparse Decomposition
To further study the true underlying dimension of the senone-specific subspaces, we consider robust principal component analysis (RPCA) based decomposition of the senone posteriors [28]. The idea of RPCA is to decompose a data matrix $M$ as

$$M = L + N \tag{2}$$

where the matrix $L$ has low rank and the matrix $N$ is sparse (see Figure 2). Building upon the observations in Section 3.2, the low-rank component $L$ corresponds to the enhanced posteriors, while the high-dimensional erroneous estimates are separated out in the sparse matrix $N$. We collect posterior features for each senone from the training data using ground-truth GMM-HMM forced alignment.
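Decomposition (2) is commonly computed by principal component pursuit [28], i.e. minimizing $\|L\|_* + \lambda\|N\|_1$ subject to $L + N = M$. The sketch below uses the inexact augmented Lagrangian method, a widely used solver for this problem that may differ from the one behind Table 1; the default $\lambda = 1/\sqrt{\max(m, n)}$ follows [28], while the penalty schedule ($\mu$, $\rho = 1.5$), the function names and the synthetic test matrix are assumptions.

```python
import numpy as np


def shrink(X, tau):
    """Element-wise soft thresholding (proximal operator of the l1 norm)."""
    return np.sign(X) * np.maximum(np.abs(X) - tau, 0.0)


def svt(X, tau):
    """Singular value thresholding (proximal operator of the nuclear norm)."""
    U, s, Vt = np.linalg.svd(X, full_matrices=False)
    return (U * np.maximum(s - tau, 0.0)) @ Vt


def rpca(M, lam=None, tol=1e-7, max_iter=500):
    """Principal component pursuit M = L + N via an inexact augmented Lagrangian.

    Solves min ||L||_* + lam * ||N||_1  s.t.  L + N = M.
    Assumption: a generic solver sketch, not necessarily the one used in the paper.
    """
    m, n = M.shape
    lam = 1.0 / np.sqrt(max(m, n)) if lam is None else lam
    norm_two = np.linalg.norm(M, 2)
    Y = M / max(norm_two, np.abs(M).max() / lam)  # dual variable initialisation
    mu, mu_max, rho = 1.25 / norm_two, 1.25e7 / norm_two, 1.5
    L = np.zeros_like(M)
    N = np.zeros_like(M)
    for _ in range(max_iter):
        N = shrink(M - L + Y / mu, lam / mu)  # sparse "spurious noise" part
        L = svt(M - N + Y / mu, 1.0 / mu)  # low-rank "enhanced" part
        residual = M - L - N
        Y = Y + mu * residual
        mu = min(rho * mu, mu_max)
        if np.linalg.norm(residual) <= tol * np.linalg.norm(M):
            break
    return L, N


# Hypothetical usage: a rank-1 matrix corrupted by about 5% sparse gross errors.
rng = np.random.default_rng(0)
n = 80
L0 = np.outer(rng.standard_normal(n), rng.standard_normal(n))
N0 = np.zeros((n, n))
mask = rng.random((n, n)) < 0.05
N0[mask] = rng.uniform(-10, 10, size=mask.sum())
M = L0 + N0
L, N = rpca(M)
```

For the senone analysis, $M$ would hold the posteriors of one senone (one frame per row or column), and the rank of the recovered $L$ gives the figures reported in the "Robust PCA" column.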
RPCA decomposition is applied to the data of each senone class to reveal the true underlying dimension of the class-specific senone subspaces. The rank of the senone posteriors (i.e. the rank of $L$) obtained after RPCA decomposition is listed in Table 1 for both the "correct" and "incorrect" groups.

| | DNN | Projected | Robust PCA |
|---|---|---|---|
| Rank-Correct | 36.6 | 11.9 | 7.6 |
| Rank-Incorrect | 45.5 | 21.7 | 11.7 |

Table 1. Comparison of the "Rank" of the DNN posterior matrix, the projected posterior matrix and the RPCA senone posterior matrix.

Fig. 2. Decomposition of a DNN-estimated senone posterior matrix $M_{\text{speech}}$ into a low-rank matrix $L_{\text{speech}}$ of enhanced posteriors and a sparse matrix $N_{\text{speech}}$ of spurious noise.

We can see that the true dimension (7.6) of the class-specific subspaces of senone posteriors is indeed far lower than that of the DNN posteriors (36.6), and even lower than that of the projected posteriors (11.9). Exploiting this multi-low-rank structure of speech can lead to posterior enhancement via low-rank representation at the utterance level [29]. A DNN with a low-rank bottleneck layer is studied in [30], which shows that low-dimensional structuring of the DNN architecture yields a smaller footprint and faster training. In contrast, our proposed method adds a layer of sparse coding that structures the DNN outputs, relying on generic sparse and low-rank structures. Since these generic structures are characterized from the training data, this approach enables us to handle mismatches between DNN training and test conditions.

3.4. Enhanced DNN-HMM Speech Recognition
Continuous speech recognition is performed using DNN posteriors as well as projected posteriors in the conventional hybrid DNN-HMM framework. The HMM topology learned during training of the hybrid DNN-HMM is used for decoding the word transcription in all cases.
Hence, all parameters of the different ASR systems shown here are the same, and the only difference lies in the senone posterior probabilities at each frame, which result in different best paths being decoded by the Viterbi algorithm.

To demonstrate the increased robustness of projected posteriors compared to DNN posteriors, we also compared their performance in noisy conditions, where artificial white Gaussian noise was added at the signal level to the test utterances at signal-to-noise ratios (SNRs) of 10 dB, 15 dB and 20 dB. The DNN trained on clean speech is used for computing posteriors from the noisy test spectral features, so that the artificially added noise acts as an unseen variation in the data for the DNN. The ASR performance is compared in Table 2 in terms of word error rate (WER) percentage.

We can see that the projected posteriors outperform the DNN posteriors in all cases, suggesting that projection based on $D\alpha$ provides enhanced acoustic models for DNN-HMM decoding. We note that in all experiments, a consistent decrease in insertion and substitution errors is observed when using projected posteriors in place of DNN posteriors. This implies that fewer wrong hypotheses are made at the word level with projected posteriors than with DNN posteriors. A similar insight comes from comparing the GMM-HMM based forced senone alignment (ground truth) with the senone alignments given by the best Viterbi paths of the projected-posterior and DNN-posterior systems: the senone classification error of 24.1% for DNN posteriors is reduced to 19.8% for projected posteriors. The improvement in senone alignments and the subsequent reduction in WER demonstrate the superior quality of projected posteriors over DNN posteriors and support the hypothesis that projection moves the test features closer to the subspace of the correct classes.
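The noisy test condition described above can be sketched as follows: white Gaussian noise is scaled so that its expected power lies the requested number of decibels below the mean power of the clean signal. The paper does not state how signal power was measured (for example, whether silent portions are excluded), so this per-utterance SNR definition, the synthetic tone standing in for a Numbers'95 utterance and the function names are assumptions.

```python
import numpy as np


def add_white_noise(signal, snr_db, rng=None):
    """Add white Gaussian noise whose expected power gives the requested SNR in dB."""
    rng = np.random.default_rng() if rng is None else rng
    signal_power = np.mean(signal ** 2)
    noise_power = signal_power / 10.0 ** (snr_db / 10.0)
    return signal + np.sqrt(noise_power) * rng.standard_normal(signal.shape)


def measured_snr_db(clean, noisy):
    """Empirical SNR of a noisy signal relative to its clean reference."""
    noise = noisy - clean
    return 10.0 * np.log10(np.mean(clean ** 2) / np.mean(noise ** 2))


# Hypothetical usage: a synthetic 8 kHz tone stands in for a test utterance.
t = np.arange(200_000) / 8000.0
clean = 0.1 * np.sin(2 * np.pi * 440.0 * t)
for snr in (20, 15, 10):
    noisy = add_white_noise(clean, snr, rng=np.random.default_rng(snr))
    print(snr, round(measured_snr_db(clean, noisy), 1))
```

Because the noise is drawn at its expected power rather than rescaled afterwards, the realized SNR of a short utterance can deviate slightly from the target; rescaling the drawn noise to the exact power is an equally valid design choice.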
| SNR | Posteriors | WER (%) | Ins | Del | Subs |
|---|---|---|---|---|---|
| Clean | RPCA | 0.4 | 36 | 18 | 4 |
| Clean | DNN | 2.6 | 111 | 96 | 152 |
| Clean | Projected | 2.2 | 72 | 100 | 137 |
| 20 dB | DNN | 4.0 | 160 | 121 | 293 |
| 20 dB | Projected | 3.5 | 90 | 162 | 233 |
| 15 dB | DNN | 6.8 | 205 | 249 | 498 |
| 15 dB | Projected | 6.2 | 130 | 298 | 442 |
| 10 dB | DNN | 14.0 | 199 | 950 | 801 |
| 10 dB | Projected | 13.9 | 117 | 1064 | 763 |

Table 2. Comparison of ASR performance using DNN posteriors and projected posteriors in clean and noisy conditions on the Numbers'95 database. RPCA posteriors indicate an ideal enhancement through low-dimensional posterior reconstruction. The breakdown of errors into insertions (Ins), deletions (Del) and substitutions (Subs) is also shown, out of a total of 13967 words in all test utterances.

Finally, RPCA posteriors (the matrix $L$ obtained from the low-rank and sparse decomposition explained in Section 3.3), which have ranks close to the true underlying dimensions of the senone subspaces, perform extremely well in ASR (cf. Table 2). The WER of 2.6% obtained with DNN posteriors ("Rank" 36.6) drops to 2.2% with projected posteriors ("Rank" 11.9), i.e. a relative improvement of 15.4%, and to a mere 0.4% when RPCA posteriors ("Rank" 7.6) are used. Since the RPCA-based low-rank reconstruction of posteriors was done using the ground-truth senone alignment, the ASR performance in this case is a best-case scenario; it demonstrates the scope for improvement that remains even after DNN-based acoustic modeling.

4. CONCLUSIONS AND FUTURE DIRECTIONS
In this paper, we demonstrated explicit modeling of low-dimensional structures in speech using dictionary learning and sparse coding over DNN class-conditional probabilities. We showed that, despite their power in representation learning, DNN-based acoustic models still have room for improvement in 1) exploiting the union of low-dimensional subspaces structure underlying speech data and 2) acoustic modeling in noisy conditions.
Using dictionary learning and sparse coding, DNN posteriors were transformed into projected posteriors, which were shown to be more suitable acoustic models. Sparse reconstruction moves the test posteriors closer to the correct underlying class of the data by exploiting the fact that the true information is embedded in a low-dimensional subspace, thus separating out the high-dimensional erroneous estimates. Improvements in ASR performance were shown for both clean and noisy conditions, paving the way towards an effective, robust DNN-based ASR framework for unseen conditions. The importance of low-dimensional structures was further confirmed through RPCA analysis.

The proposed method can be improved through discriminative dictionary learning for better class-specific subspace modeling. Furthermore, we will study low-rank clustering techniques to enhance posterior probabilities by exploiting their low-dimensional multi-subspace structure. Moreover, we will extend the analysis to challenging databases, in particular to accented non-native speech recognition. Projecting accented speech posteriors onto dictionaries trained with native-language speech could transform the accented phonetic space into the native phonetic space and lead to improvements in accented speech recognition.

5. ACKNOWLEDGMENTS
The research leading to these results has received funding from the SNSF project "Parsimonious Hierarchical Automatic Speech Recognition (PHASER)", grant agreement number 200021-153507.

6. REFERENCES
[1] Jeff A. Bilmes, "What HMMs can do," IEICE Transactions on Information and Systems, vol. 89, no. 3, pp. 869–891, 2006.
[2] Yoshua Bengio, "Learning deep architectures for AI," Foundations and Trends in Machine Learning, vol. 2, no. 1, pp. 1–127, 2009.
[3] Tara N. Sainath, Bhuvana Ramabhadran, Michael Picheny, David Nahamoo, and Dimitri Kanevsky, "Exemplar-based sparse representation features: From TIMIT to LVCSR," IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 8, pp. 2598–2613, 2011.
[4] G. Saon and Jen-Tzung Chien, "Bayesian sensing hidden Markov models," IEEE Transactions on Audio, Speech, and Language Processing, vol. 20, no. 1, pp. 43–54, Jan. 2012.
[5] Li Deng and Xiao Li, "Machine learning paradigms for speech recognition: An overview," IEEE Transactions on Audio, Speech, and Language Processing, vol. 21, no. 5, pp. 1060–1089, 2013.
[6] Jort Gemmeke and Bert Cranen, "Noise robust digit recognition using sparse representations," in Proceedings of ISCA 2008 ITRW Speech Analysis and Processing for Knowledge Discovery, 2008.
[7] Li Deng, "Switching dynamic system models for speech articulation and acoustics," in Mathematical Foundations of Speech and Language Processing, pp. 115–133. Springer New York, 2004.
[8] Simon King, Joe Frankel, Karen Livescu, Erik McDermott, Korin Richmond, and Mirjam Wester, "Speech production knowledge in automatic speech recognition," The Journal of the Acoustical Society of America, vol. 121, no. 2, pp. 723–742, 2007.
[9] Leo J. Lee, Paul Fieguth, and Li Deng, "A functional articulatory dynamic model for speech production," in ICASSP. IEEE, 2001, vol. 2, pp. 797–800.
[10] Jort F. Gemmeke, Tuomas Virtanen, and Antti Hurmalainen, "Exemplar-based sparse representations for noise robust automatic speech recognition," IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 7, pp. 2067–2080, 2011.
[11] Geoffrey Hinton, Li Deng, Dong Yu, George E. Dahl, Abdel-rahman Mohamed, Navdeep Jaitly, Andrew Senior, Vincent Vanhoucke, Patrick Nguyen, Tara N. Sainath, et al., "Deep neural networks for acoustic modeling in speech recognition: The shared views of four research groups," IEEE Signal Processing Magazine, vol. 29, no. 6, pp. 82–97, 2012.
[12] Dong Yu, Michael L. Seltzer, Jinyu Li, Jui-Ting Huang, and Frank Seide, "Feature learning in deep neural networks - studies on speech recognition tasks," arXiv preprint arXiv:1301.3605, 2013.
[13] Jian Kang, Cheng Lu, Meng Cai, Wei-Qiang Zhang, and Jia Liu, "Neuron sparseness versus connection sparseness in deep neural network for large vocabulary speech recognition," in ICASSP, April 2015, pp. 4954–4958.
[14] Dong Yu, Frank Seide, Gang Li, and Li Deng, "Exploiting sparseness in deep neural networks for large vocabulary speech recognition," in ICASSP, 2012, pp. 4409–4412.
[15] Nitish Srivastava, Geoffrey Hinton, Alex Krizhevsky, Ilya Sutskever, and Ruslan Salakhutdinov, "Dropout: A simple way to prevent neural networks from overfitting," The Journal of Machine Learning Research, vol. 15, no. 1, pp. 1929–1958, 2014.
[16] Tasha Nagamine, Michael L. Seltzer, and Nima Mesgarani, "Exploring how deep neural networks form phonemic categories," in Interspeech, 2015.
[17] Abdel-rahman Mohamed, Geoffrey Hinton, and Gerald Penn, "Understanding how deep belief networks perform acoustic modelling," in ICASSP. IEEE, 2012, pp. 4273–4276.
[18] Julien Mairal, Francis Bach, Jean Ponce, and Guillermo Sapiro, "Online learning for matrix factorization and sparse coding," Journal of Machine Learning Research (JMLR), vol. 11, pp. 19–60, 2010.
[19] Pablo Sprechmann, Ignacio Ramirez, Guillermo Sapiro, and Yonina C. Eldar, "C-HiLasso: A collaborative hierarchical sparse modeling framework," IEEE Transactions on Signal Processing, vol. 59, no. 9, pp. 4183–4198, 2011.
[20] Tara N. Sainath, Bhuvana Ramabhadran, David Nahamoo, Dimitri Kanevsky, and Abhinav Sethy, "Sparse representation features for speech recognition," in Interspeech, 2010, pp. 2254–2257.
[21] R. A. Cole, M. Noel, T. Lander, and T. Durham, "New telephone speech corpora at CSLU," 1995.
[22] Ehsan Elhamifar and Rene Vidal, "Sparse subspace clustering: Algorithm, theory, and applications," IEEE Transactions on Pattern Analysis and Machine Intelligence, vol. 35, no. 11, pp. 2765–2781, 2013.
[23] Pranay Dighe, Afsaneh Asaei, and Hervé Bourlard, "Sparse modeling of neural network posterior probabilities for exemplar-based speech recognition," Speech Communication, 2015.
[24] Afsaneh Asaei, Benjamin Picart, and Hervé Bourlard, "Analysis of phone posterior feature space exploiting class-specific sparsity and MLP-based similarity measure," in ICASSP, 2010.
[25] Dong Yu and Li Deng, Automatic Speech Recognition: A Deep Learning Approach, Springer, 2015.
[26] Daniel Povey, Arnab Ghoshal, Gilles Boulianne, Lukáš Burget, Ondřej Glembek, Nagendra Goel, Mirko Hannemann, Petr Motlíček, Yanmin Qian, Petr Schwarz, et al., "The Kaldi speech recognition toolkit," 2011.
[27] Karel Veselý, Arnab Ghoshal, Lukáš Burget, and Daniel Povey, "Sequence-discriminative training of deep neural networks," 2013.
[28] Emmanuel J. Candès, Xiaodong Li, Yi Ma, and John Wright, "Robust principal component analysis?," Journal of the ACM (JACM), vol. 58, no. 3, pp. 11, 2011.
[29] Guangcan Liu, Zhouchen Lin, Shuicheng Yan, Ju Sun, Yong Yu, and Yi Ma, "Robust recovery of subspace structures by low-rank representation," IEEE Transactions on Pattern Analysis and Machine Intelligence, no. 99, pp. 1–1, 2013.
[30] Tara N. Sainath, Brian Kingsbury, Vikas Sindhwani, Ebru Arisoy, and Bhuvana Ramabhadran, "Low-rank matrix factorization for deep neural network training with high-dimensional output targets," in ICASSP. IEEE, 2013, pp. 6655–6659.
