Gender and Emotion Recognition with Implicit User Signals

We examine the utility of implicit user behavioral signals, captured using low-cost, off-the-shelf devices, for anonymous gender and emotion recognition. A user study designed to examine male and female sensitivity to facial emotions confirms that females recognize emotions (especially negative ones) faster and more accurately than males, mirroring prior findings. Implicit viewer responses in the form of EEG brain signals and eye movements are then examined for (a) the existence of emotion- and gender-specific patterns in event-related potentials (ERPs) and fixation distributions, and (b) emotion and gender discriminability. Experiments reveal that (i) gender- and emotion-specific differences are observable in ERPs, (ii) multiple similarities exist between the explicit responses gathered from users and their implicit behavioral signals, and (iii) significantly above-chance ($\approx$70%) gender recognition is achievable by comparing emotion-specific EEG responses, with gender differences encoded best for anger and disgust. Fairly modest valence (positive vs. negative emotion) recognition is also achieved with EEG and eye-based features.

💡 Research Summary

This paper investigates the feasibility of recognizing a user’s gender and emotional state from implicit behavioral signals captured by low‑cost, off‑the‑shelf devices. The authors argue that conventional gender and emotion recognition (GR and ER) systems rely on facial images or speech, which are biometric identifiers and raise privacy concerns because they can be recorded covertly. In contrast, electroencephalography (EEG) and eye‑movement data are less identifiable and can only be obtained with explicit user cooperation, making them more privacy‑friendly.

To explore this, a user study was conducted with 28 university students (14 male, 14 female). Stimuli depicted six basic emotions (anger, disgust, fear, happiness, sadness, surprise) posed by 24 models from the Radboud Faces Database. Each emotion was morphed from 25 % to 100 % intensity in 5 % steps; morphs in the 25 %–50 % range were treated as low intensity (LI) and those in the 55 %–100 % range as high intensity (HI), yielding 864 LI and 1440 HI images. In each trial, a fixation cross appeared for 500 ms, followed by a 4‑second face presentation, after which participants had up to 30 seconds to select the perceived emotion from seven options (the six emotions plus neutral). The experiment was split into four blocks of 72 trials each and lasted about 90 minutes in total.
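The LI and HI counts follow from the factorial design. The Python sketch below reproduces them, assuming every emotion × model × morph-level combination was rendered once; `build_stimulus_set` and `intensity_band` are hypothetical helper names, not part of any released code.

```python
from itertools import product

# Design factors as stated in the summary.
EMOTIONS = ["anger", "disgust", "fear", "happiness", "sadness", "surprise"]
N_MODELS = 24
MORPH_LEVELS = list(range(25, 101, 5))  # 25, 30, ..., 100 (% intensity)

def intensity_band(level: int) -> str:
    """Low intensity (LI) for 25-50 %, high intensity (HI) for 55-100 %."""
    return "LI" if level <= 50 else "HI"

def build_stimulus_set():
    """Enumerate every (emotion, model index, morph level, band) combination."""
    return [
        (emotion, model, level, intensity_band(level))
        for emotion, model, level in product(EMOTIONS, range(N_MODELS), MORPH_LEVELS)
    ]

stimuli = build_stimulus_set()
n_li = sum(1 for *_, band in stimuli if band == "LI")
n_hi = sum(1 for *_, band in stimuli if band == "HI")
print(len(MORPH_LEVELS), n_li, n_hi)  # 16 864 1440
```

Each morph level contributes 6 × 24 = 144 images, so the six LI levels give 864 and the ten HI levels give 1440.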

During the task, EEG was recorded with the 14‑channel Emotiv EPOC headset (plus two references) at 128 Hz, and eye movements were captured with the EyeTribe eye‑tracker. Behavioral results showed an overall mean response time (RT) of 1.5 s and a mean recognition rate (RR) of 64 %. High‑intensity faces were recognized faster and more accurately than low‑intensity faces. Consistent with prior psychological literature, females responded significantly faster to negative emotions (anger, disgust, fear, sadness) and achieved higher accuracy on those emotions than males.
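RT and RR are per-condition aggregates over trials. A stdlib-only Python sketch of how they could be computed per participant gender and emotion, assuming a hypothetical per-trial record (`Trial`) and toy values rather than study data:

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import mean

@dataclass
class Trial:
    """Hypothetical per-trial record; field names are illustrative."""
    gender: str        # participant gender, "F" or "M"
    emotion: str       # emotion displayed in the morphed face
    response: str      # selected label: one of the six emotions or "neutral"
    rt_s: float        # response time in seconds

def summarize(trials):
    """Map (gender, emotion) -> (mean RT in s, recognition rate).

    RT is averaged over all trials here; averaging over correct trials only
    is an equally plausible convention that the summary does not specify.
    """
    groups = defaultdict(list)
    for t in trials:
        groups[(t.gender, t.emotion)].append(t)
    return {
        key: (mean(t.rt_s for t in ts),
              sum(t.response == t.emotion for t in ts) / len(ts))
        for key, ts in groups.items()
    }

# Toy values for illustration only, not study data.
trials = [
    Trial("F", "anger", "anger", 1.2),
    Trial("F", "anger", "disgust", 1.6),
    Trial("M", "anger", "anger", 1.8),
    Trial("M", "anger", "fear", 2.0),
    Trial("M", "anger", "neutral", 2.2),
]
for key, (rt, rr) in sorted(summarize(trials).items()):
    print(key, f"RT={rt:.2f} s", f"RR={rr:.2f}")
# ('F', 'anger') RT=1.40 s RR=0.50
# ('M', 'anger') RT=2.00 s RR=0.33
```

Grouping by (gender, emotion) is what allows the gender comparison on negative emotions; a significance test on these per-participant values would be a separate step.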

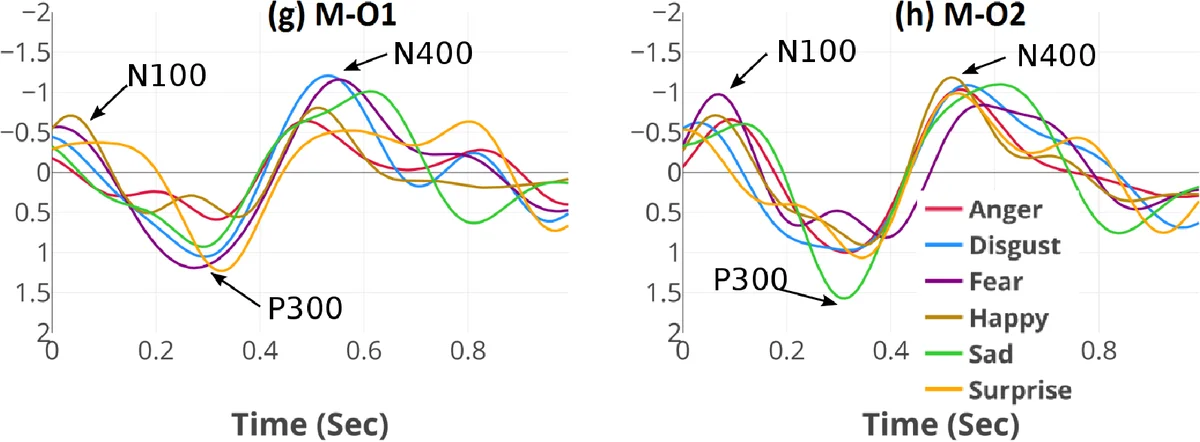

EEG preprocessing involved band‑pass filtering (0.1–45 Hz), removal of noisy epochs, and independent component analysis (ICA) to eliminate eye‑blink, eye‑movement, and muscle artifacts. Epochs of 4.5 s (the 0.5‑s fixation period plus the 4‑s face presentation) were extracted, and each trial was represented as a 14 × 128 × 4 data matrix (14 channels × 128 Hz × 4 s, i.e., 14 × 512 samples), which spans 4 s rather than the full 4.5‑s (576‑sample) epoch. Principal component analysis (PCA) then reduced dimensionality while preserving 90 % of the variance before the data were fed to classifiers such as SVM and Random Forest. Event‑related potential (ERP) analysis revealed that females exhibited stronger N170 and P300 components when processing negative faces, indicating heightened neural processing.
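The filtering, epoching, ERP-averaging, and PCA steps can be sketched with NumPy and SciPy. This is a minimal sketch under stated assumptions, not the authors' pipeline: the 4th-order zero-phase Butterworth filter, the hypothetical `onsets` array, the choice to keep the 4-s post-fixation segment (which matches the 14 × 128 × 4 shape), and the SVD-based PCA are all assumptions, and ICA and noisy-epoch rejection are omitted.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

FS = 128          # Emotiv EPOC sampling rate (Hz)
N_CHANNELS = 14   # EPOC data channels

def bandpass(eeg, low=0.1, high=45.0, fs=FS, order=4):
    """Zero-phase Butterworth band-pass along the time (last) axis.

    The Butterworth family and order 4 are assumptions; only the band is from the text.
    """
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, eeg, axis=-1)

def extract_epochs(eeg, onsets, fs=FS, fix_s=0.5, stim_s=4.0):
    """Cut 4.5-s epochs (fixation + face) starting at each fixation onset,
    then keep the 4-s face segment (an assumption matching 14 x 128 x 4).

    Returns an array of shape (n_trials, n_channels, int(stim_s * fs)).
    """
    n_fix, n_stim = int(fix_s * fs), int(stim_s * fs)
    epochs = np.stack([eeg[:, o:o + n_fix + n_stim] for o in onsets])
    return epochs[:, :, n_fix:]

def pca_keep_variance(X, frac=0.90):
    """Project rows of X onto the fewest principal components whose
    cumulative explained variance reaches `frac`; return (scores, fraction kept)."""
    Xc = X - X.mean(axis=0)
    _, s, vt = np.linalg.svd(Xc, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    k = int(np.searchsorted(np.cumsum(explained), frac)) + 1
    return Xc @ vt[:k].T, float(explained[:k].sum())

# Synthetic demo: 120 s of noise with a DC offset stands in for a real recording.
rng = np.random.default_rng(0)
eeg = rng.normal(size=(N_CHANNELS, FS * 120)) + 5.0
filtered = bandpass(eeg)
onsets = np.arange(10, 100, 6) * FS          # hypothetical fixation onsets, one every 6 s
epochs = extract_epochs(filtered, onsets)    # shape (15, 14, 512)
erp = epochs.mean(axis=0)                    # trial average per channel; in practice per condition
X = epochs.reshape(len(epochs), -1)          # one flattened 14 x 512 vector per trial
Z, kept = pca_keep_variance(X)
print(epochs.shape, Z.shape, round(kept, 3))
```

Averaging epochs within a condition (for example, female viewers × negative faces) is what exposes components such as N170 and P300; the flattened, PCA-reduced vectors are what a classifier would consume.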

Eye‑tracking analysis focused on fixation density across three facial regions (eyes, nose, mouth). Females tended to fixate more on the eyes, whereas males showed relatively more fixations on the mouth. These gaze patterns aligned with the observed behavioral differences and contributed to classification performance.
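Fixation distributions over facial regions are usually obtained by assigning each fixation to an area of interest (AOI). The stdlib-only Python sketch below shows one way to do this, assuming hypothetical rectangular AOIs in normalized face coordinates and duration-weighted proportions; the study's actual AOI definitions and density measure are not specified here.

```python
from collections import Counter

# Hypothetical AOIs in normalized face coordinates (x_min, y_min, x_max, y_max),
# with y increasing downward. Real AOIs would be defined per face image.
AOIS = {
    "eyes":  (0.15, 0.25, 0.85, 0.45),
    "nose":  (0.35, 0.45, 0.65, 0.65),
    "mouth": (0.25, 0.65, 0.75, 0.85),
}

def aoi_of(x, y):
    """Return the AOI containing the point, or None if it lies outside all AOIs."""
    for name, (x0, y0, x1, y1) in AOIS.items():
        if x0 <= x < x1 and y0 <= y < y1:
            return name
    return None

def fixation_proportions(fixations):
    """Share of in-AOI fixation duration falling in each AOI.

    `fixations` is a list of (x, y, duration_ms) tuples; weighting by duration
    rather than counting fixations is an assumption.
    """
    totals = Counter()
    for x, y, dur in fixations:
        name = aoi_of(x, y)
        if name is not None:
            totals[name] += dur
    grand = sum(totals.values())
    return {name: totals[name] / grand if grand else 0.0 for name in AOIS}

# Toy fixations for illustration only; the last one falls outside every AOI.
fixations = [(0.40, 0.35, 300), (0.60, 0.30, 250), (0.50, 0.75, 150),
             (0.50, 0.55, 100), (0.95, 0.95, 80)]
print(fixation_proportions(fixations))
# {'eyes': 0.6875, 'nose': 0.125, 'mouth': 0.1875}
```

Per-trial proportions like these can be compared between male and female viewers or concatenated with EEG features for classification.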

Classification experiments demonstrated that gender can be inferred from emotion‑specific EEG responses with an accuracy of about 70 %, well above the 50 % chance level for two balanced classes, and that gender differences are encoded best for anger and disgust. Valence (positive vs. negative) discrimination using combined EEG and eye‑movement features achieved an area under the ROC curve (AUC) of roughly 0.62, comparable to prior work using laboratory‑grade equipment.
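To make the two reported metrics concrete, the stdlib-only Python sketch below computes accuracy and a rank-based ROC AUC. It is not the authors' evaluation code; `roc_auc` and `accuracy` are hypothetical helpers, and the scores are toy values.

```python
from itertools import product

def roc_auc(scores, labels):
    """ROC AUC: probability that a randomly chosen positive trial scores higher
    than a randomly chosen negative one, counting ties as one half
    (the Mann-Whitney U formulation). Quadratic in the number of trials."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative trial")
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p, n in product(pos, neg))
    return wins / (len(pos) * len(neg))

def accuracy(preds, labels):
    """Fraction of trials whose predicted label matches the true label."""
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)

# Toy classifier outputs (not study data): label 1 = positive valence, 0 = negative.
scores = [0.9, 0.4, 0.6, 0.3, 0.6, 0.2]
labels = [1, 1, 1, 0, 0, 0]
preds = [int(s >= 0.5) for s in scores]       # a 0.5 decision threshold
print(round(roc_auc(scores, labels), 3))      # 0.833
print(round(accuracy(preds, labels), 3))      # 0.667
```

Accuracy depends on the chosen threshold and on class balance, whereas AUC summarizes ranking quality across all thresholds, with 0.5 meaning chance-level ranking; this is why a valence AUC of about 0.62 counts as modest.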

The study’s contributions are threefold: (1) it provides one of the first demonstrations of gender and emotion recognition from implicit, privacy‑preserving signals; (2) it shows that low‑cost consumer devices, despite poorer signal quality, still capture meaningful ERP and gaze information related to gender and emotion; (3) it validates that explicit behavioral differences (faster RTs, higher accuracy for females on negative emotions) are mirrored in implicit neural and ocular responses.

Limitations include the relatively noisy EEG recordings from the Emotiv headset and the modest spatial accuracy of the EyeTribe tracker, which may constrain real‑time applications. Future work should explore larger, more diverse participant pools, richer emotional stimuli (including mixed or subtle emotions), and deep‑learning architectures that can jointly model EEG and eye‑movement streams for improved robustness and scalability.
