📝 Original Info

- Title: Large-Scale Domain Adaptation via Teacher-Student Learning

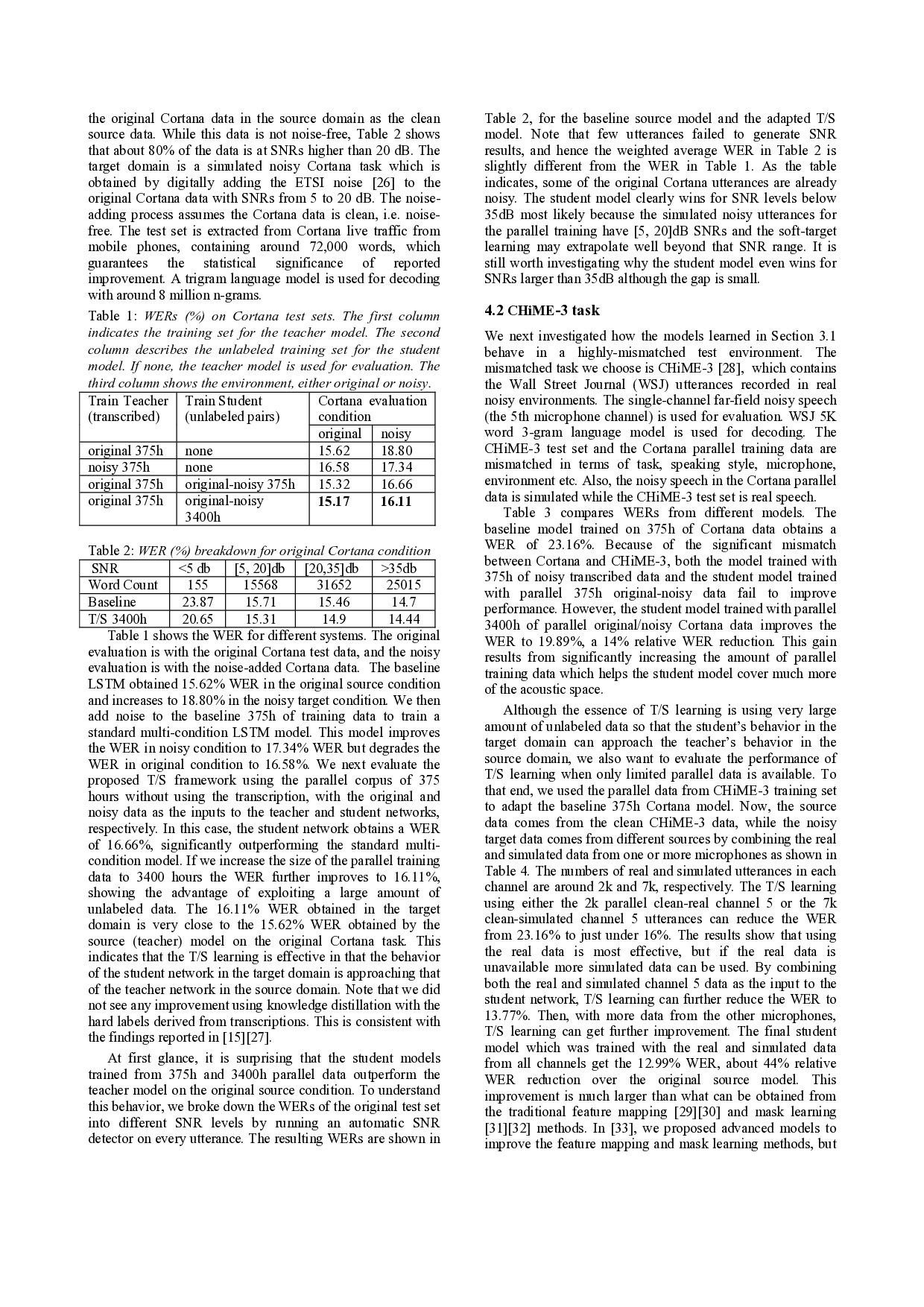

- ArXiv ID: 1708.05466

- Date: 2017-08-21

- Authors: Jinyu Li, Michael L. Seltzer, Xi Wang, Rui Zhao, and Yifan Gong

📝 Abstract

High-accuracy speech recognition requires a large amount of transcribed data for supervised training. In the absence of such data, domain adaptation of a well-trained acoustic model can be performed, but even here, high accuracy usually requires significant labeled data from the target domain. In this work, we propose an approach to domain adaptation that does not require transcriptions but instead uses a corpus of unlabeled parallel data, consisting of pairs of samples from the source domain of the well-trained model and the desired target domain. To perform adaptation, we employ teacher/student (T/S) learning, in which the posterior probabilities generated by the source-domain model can be used in lieu of labels to train the target-domain model. We evaluate the proposed approach in two scenarios, adapting a clean acoustic model to noisy speech and adapting an adults' speech acoustic model to children's speech. Significant improvements in accuracy are obtained, with reductions in word error rate of up to 44% over the original source model without the need for transcribed data in the target domain. Moreover, we show that increasing the amount of unlabeled data results in additional model robustness, which is particularly beneficial when using simulated training data in the target domain.

📄 Full Content

Large-Scale Domain Adaptation via Teacher-Student Learning

Jinyu Li, Michael L. Seltzer, Xi Wang, Rui Zhao, and Yifan Gong

Microsoft AI and Research, One Microsoft Way, Redmond, WA 98052

{jinyli; mseltzer; xwang; ruzhao; ygong}@microsoft.com

Abstract

High-accuracy speech recognition requires a large amount of transcribed data for supervised training. In the absence of such data, domain adaptation of a well-trained acoustic model can be performed, but even here, high accuracy usually requires significant labeled data from the target domain. In this work, we propose an approach to domain adaptation that does not require transcriptions but instead uses a corpus of unlabeled parallel data, consisting of pairs of samples from the source domain of the well-trained model and the desired target domain. To perform adaptation, we employ teacher/student (T/S) learning, in which the posterior probabilities generated by the source-domain model can be used in lieu of labels to train the target-domain model. We evaluate the proposed approach in two scenarios, adapting a clean acoustic model to noisy speech and adapting an adults’ speech acoustic model to children’s speech. Significant improvements in accuracy are obtained, with reductions in word error rate of up to 44% over the original source model without the need for transcribed data in the target domain. Moreover, we show that increasing the amount of unlabeled data results in additional model robustness, which is particularly beneficial when using simulated training data in the target domain.

Index Terms: teacher-student learning, parallel unlabeled data

1. Introduction

The success of deep neural networks [1][2][3][4][5] relies on the availability of a large amount of transcribed data to train millions of model parameters. However, deep models still suffer reduced performance when exposed to test data from a new domain. Because it is typically very time-consuming or expensive to transcribe large amounts of data for a new domain, domain-adaptation approaches have been proposed to bootstrap the training of a new system from an existing well-trained model [6][7][8][9]. These supervised methods still require transcribed data from the new domain and thus their effectiveness is limited by the amount of transcribed data available in the new domain. Although unsupervised adaptation methods can be used by generating labels from a decoder, the performance gap between supervised and unsupervised adaptation is large [7].

In this work, we propose an approach to domain adaptation that does not require transcriptions but instead uses a corpus of unlabeled parallel data, consisting of pairs of samples from the source domain of the well-trained source model and the target domain. There are many important scenarios in which collecting a virtually unlimited amount of parallel data is relatively simple. For example, to collect noisy or reverberant data from a particular set of environments, speech can be captured simultaneously using a close-talking microphone and a microphone located at a distance from the user. Such a collection effort can also be simulated by acoustically replaying a pre-existing corpus of high signal-to-noise ratio speech files in the target environment or by digitally simulating the target environment offline [10][11].
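As a rough illustration of the digital-simulation option, the sketch below builds one parallel pair by adding noise to a clean signal at a chosen signal-to-noise ratio. It is only a minimal sketch under stated assumptions: the function names `mix_at_snr` and `make_parallel_pair`, the synthetic sine-wave "speech", the Gaussian noise, and the 10 dB setting are all hypothetical and not taken from the paper, and reverberation is not modeled at all.

```python
import math
import random

def mix_at_snr(clean, noise, snr_db):
    """Add noise to a clean signal so the mixture has the requested SNR in dB."""
    clean_power = sum(x * x for x in clean) / len(clean)
    noise_power = sum(x * x for x in noise) / len(noise)
    # Choose the scale so that clean_power / (scale^2 * noise_power) = 10^(snr_db / 10).
    scale = math.sqrt(clean_power / (noise_power * 10 ** (snr_db / 10)))
    return [c + scale * n for c, n in zip(clean, noise)]

def make_parallel_pair(clean, noise, snr_db):
    """Pair a source-domain (clean) signal with a simulated target-domain copy."""
    return clean, mix_at_snr(clean, noise, snr_db)

# Hypothetical stand-ins for real audio: a 440 Hz tone and white Gaussian noise.
rng = random.Random(0)
clean = [math.sin(2 * math.pi * 440 * t / 16000) for t in range(1600)]
noise = [rng.gauss(0.0, 1.0) for _ in range(1600)]

source, target = make_parallel_pair(clean, noise, snr_db=10.0)
```

Because both signals in the pair are sample-aligned, frames extracted from `source` and `target` at the same position describe the same underlying speech, which is the property the parallel corpus relies on.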

To perform adaptation without the use of transcriptions, we propose to use teacher/student (T/S) learning. In T/S learning, the data from the source domain are processed by the source-domain model (teacher) to generate the corresponding posterior probabilities or soft labels. These posterior probabilities are used in lieu of the usual hard labels derived from the transcriptions to train the target (student) model with the parallel data from the target domain. With this approach, the network can be trained on a potentially enormous amount of training data and the challenge of adapting a large-scale system shifts from transcribing thousands of hours of audio to the potentially much simpler and lower-cost task of designing a scheme to generate the appropriate parallel data.
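One common way to formalize this kind of training is to minimize the cross-entropy between the teacher's posteriors on a source-domain frame and the student's posteriors on the paired target-domain frame. The excerpt here does not state the exact objective, so the sketch below is an assumption rather than the authors' formulation; the function names and the toy logits are hypothetical.

```python
import math

def softmax(logits):
    """Convert a list of logits into posterior probabilities."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def ts_loss(teacher_logits, student_logits):
    """Mean cross-entropy between teacher and student posteriors over paired frames."""
    loss = 0.0
    for t_logit, s_logit in zip(teacher_logits, student_logits):
        p_teacher = softmax(t_logit)  # soft labels from the source-domain frame
        p_student = softmax(s_logit)  # student output on the paired target-domain frame
        loss -= sum(pt * math.log(ps) for pt, ps in zip(p_teacher, p_student))
    return loss / len(teacher_logits)

def ts_grad_wrt_student_logits(teacher_logit, student_logit):
    """Per-frame gradient of the loss w.r.t. the student logits: p_student - p_teacher."""
    p_teacher = softmax(teacher_logit)
    p_student = softmax(student_logit)
    return [ps - pt for ps, pt in zip(p_student, p_teacher)]

# Toy example: two paired frames, three output classes (values are made up).
teacher_logits = [[2.0, 0.5, -1.0], [0.1, 1.2, 0.3]]
student_logits = [[1.0, 1.0, 0.0], [0.5, 0.2, 0.9]]

print(ts_loss(teacher_logits, student_logits))
print(ts_grad_wrt_student_logits(teacher_logits[0], student_logits[0]))
```

No transcription appears anywhere in the loss: the only supervision is the teacher's output, and the gradient vanishes once the student reproduces the teacher's posteriors on the paired frame.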

The proposed approach is closely related to other approaches for adaptation or retraining that employ knowledge distillation [12]. In these approaches, the soft labels generated by a teacher model are used as a regularization term to train a student model with conventional hard labels. Knowledge distillation was used to train a system on the Aurora 2 digit recognition task [13], using the clean and noisy training sets [14]. In [15] it was shown that for the multi-channel CHiME-4 task [16], soft labels could be derived using enhanced features generated by a beamformer then processed through a network trained with conventional multi-style training [17]. However, it is unclear whether this approach is superior to simply using the enhanced features for the recognition at test time as well.
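To make the contrast with knowledge distillation concrete, the sketch below interpolates a hard-label term with a soft-label term for a single frame. This is a generic form of the distillation loss, not the exact recipe of the cited works; the name `soft_weight`, the function names, and the toy posteriors are assumptions introduced for illustration.

```python
import math

def cross_entropy(target, predicted):
    """Cross-entropy between a target distribution and predicted posteriors."""
    return -sum(t * math.log(p) for t, p in zip(target, predicted) if t > 0.0)

def distillation_loss(hard_label, teacher_post, student_post, soft_weight):
    """Interpolate the hard-label loss with the teacher's soft-label loss."""
    one_hot = [1.0 if k == hard_label else 0.0 for k in range(len(student_post))]
    hard_term = cross_entropy(one_hot, student_post)       # needs a transcription
    soft_term = cross_entropy(teacher_post, student_post)  # needs only the teacher
    return (1.0 - soft_weight) * hard_term + soft_weight * soft_term

# Toy single-frame example with three classes (values are made up).
teacher_post = [0.7, 0.2, 0.1]
student_post = [0.5, 0.3, 0.2]

print(distillation_loss(0, teacher_post, student_post, soft_weight=0.5))
```

With `soft_weight` set to 1.0 the hard-label term drops out entirely, which corresponds to training from the teacher's posteriors alone and therefore needs no transcriptions.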

Knowled

…(Full text truncated)…

This content is AI-processed based on ArXiv data.