Comparison of different Propagation Steps for the Lattice Boltzmann Method

Several possibilities exist to implement the propagation step of the lattice Boltzmann method. This paper describes common implementations, which are compared according to the number of memory transfer operations they require per lattice node update. A memory-bandwidth-based performance model is then used to estimate the maximum attainable performance on different machines. A subset of the discussed implementations of the propagation step was benchmarked on different Intel and AMD-based compute nodes using the framework of an existing flow solver that is specially adapted to simulate flow in porous media. Finally, the estimated performance is compared to the measured one. As expected, the number of memory transfers has a significant impact on performance. Advanced approaches for the propagation step like “AA pattern” or “Esoteric Twist” require more implementation effort but sustain significantly better performance than non-naive straightforward implementations.

💡 Research Summary

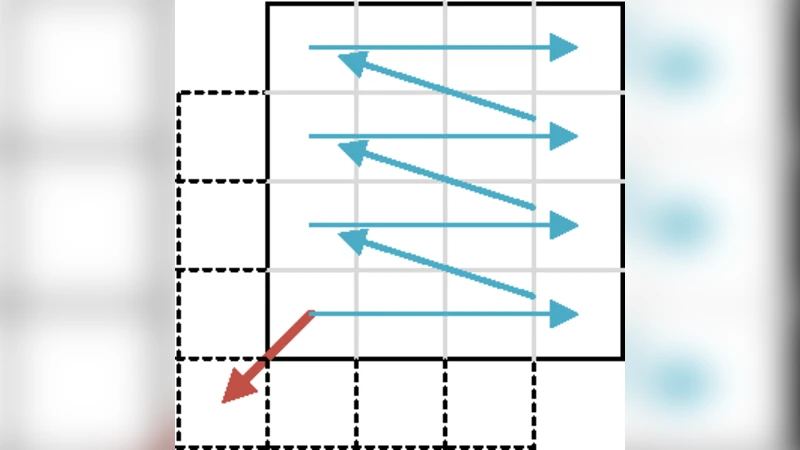

This paper surveys and quantitatively analyzes propagation‑step implementations for the lattice Boltzmann method (LBM). The authors first categorize six approaches: the naïve two‑step (TS) algorithm, which performs collision and propagation separately on two lattices; the one‑step (OS) algorithm, which fuses both operations into a single kernel; the compressed‑grid (CG) technique, which overlays two lattices and shifts the grid each time step; the swap (SWAP) algorithm, which resolves data dependencies by swapping particle distribution functions (PDFs) during a lexicographic sweep; the AA‑pattern, which alternates two kernels so that only local PDFs are accessed on even steps and only neighbor PDFs on odd steps; and the Esoteric Twist, which uses pointer swapping to avoid any explicit data movement.
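The difference between the two‑step and one‑step variants can be illustrated with a minimal sketch. It assumes a small periodic D2Q9 lattice stored as nested Python lists and a placeholder collision that relaxes each PDF toward the node mean (not a real BGK operator). The names `collide`, `make_lattice`, `two_step`, and `one_step` are illustrative and not taken from the paper.

```python
# D2Q9 discrete velocities; the ordering is an assumption of this sketch.
C = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1),
     (1, 1), (-1, 1), (-1, -1), (1, -1)]
Q = len(C)


def collide(pdfs, omega=0.5):
    """Placeholder collision: relax PDFs toward the node mean (not BGK)."""
    mean = sum(pdfs) / len(pdfs)
    return [f + omega * (mean - f) for f in pdfs]


def make_lattice(nx, ny):
    """Arbitrary, non-uniform initial PDFs, indexed as lattice[y][x][i]."""
    return [[[1.0 + 0.01 * (x + 2 * y) + 0.001 * i for i in range(Q)]
             for x in range(nx)] for y in range(ny)]


def two_step(src):
    """TS: collide every node first, then propagate in a second sweep."""
    ny, nx = len(src), len(src[0])
    post = [[collide(src[y][x]) for x in range(nx)] for y in range(ny)]
    dst = [[[0.0] * Q for _ in range(nx)] for _ in range(ny)]
    for y in range(ny):
        for x in range(nx):
            for i, (cx, cy) in enumerate(C):
                # Push PDF i to the neighbor in direction i (periodic wrap).
                dst[(y + cy) % ny][(x + cx) % nx][i] = post[y][x][i]
    return dst


def one_step(src):
    """OS: collide and propagate in a single fused sweep over the nodes."""
    ny, nx = len(src), len(src[0])
    dst = [[[0.0] * Q for _ in range(nx)] for _ in range(ny)]
    for y in range(ny):
        for x in range(nx):
            post = collide(src[y][x])
            for i, (cx, cy) in enumerate(C):
                dst[(y + cy) % ny][(x + cx) % nx][i] = post[i]
    return dst


lattice = make_lattice(nx=4, ny=3)
# Both variants produce the same lattice; they differ only in how often
# the PDFs travel through memory (two sweeps versus one).
assert two_step(lattice) == one_step(lattice)
```

Both functions still use a separate destination lattice. The CG, SWAP, AA‑pattern, and Esoteric Twist schemes described above differ precisely in how they avoid or restructure that second copy, which this sketch does not attempt to show.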

For each method the paper examines two data layouts, Structure‑of‑Arrays (SoA) and Array‑of‑Structures (AoS), and two addressing schemes, direct and indirect (via an index array, IDX). By counting the PDF loads, PDF stores, and auxiliary index loads per lattice node, the authors derive a per‑node memory traffic model, where q denotes the number of discrete velocities. The TS method requires the most traffic (≈6q PDF loads + 6q stores, plus IDX accesses for indirect addressing), while OS reduces this by roughly 50 % (≈3q loads + 3q stores). Variants such as OS‑NT (non‑temporal stores) further eliminate write‑allocate overhead, cutting traffic to 2q PDF transfers for a full array, or 2q PDF transfers plus (q‑1) IDX loads for indirect addressing. CG, SWAP, AA‑pattern, and Esoteric Twist each have their own characteristic traffic patterns, with the AA‑pattern and Esoteric Twist achieving the lowest memory transfers because they never move the full set of PDFs in a single step.
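The two layouts and two addressing schemes can be sketched as index functions. This is a minimal illustration under stated assumptions: a D2Q9 velocity set in an arbitrary ordering, a fully periodic lattice, and an index array that stores q‑1 neighbor entries per node (the rest direction needs no lookup). All function names are hypothetical, and a real indirect‑addressing code would list only the fluid nodes of a sparse geometry.

```python
# D2Q9 discrete velocities; the ordering is an assumption of this sketch.
C = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1),
     (1, 1), (-1, 1), (-1, -1), (1, -1)]
Q = len(C)


def aos_index(node, i, q):
    """Array-of-Structures: the q PDFs of one node are contiguous."""
    return node * q + i


def soa_index(node, i, n_nodes):
    """Structure-of-Arrays: one direction's PDFs of all nodes are contiguous."""
    return i * n_nodes + node


def neighbor_direct(x, y, i, nx, ny):
    """Direct addressing: compute the neighbor node from coordinates."""
    cx, cy = C[i]
    return ((y + cy) % ny) * nx + (x + cx) % nx


def build_index(nx, ny):
    """Precompute q - 1 neighbor entries per node for indirect addressing."""
    idx = []
    for y in range(ny):
        for x in range(nx):
            for i in range(1, Q):
                idx.append(neighbor_direct(x, y, i, nx, ny))
    return idx


def neighbor_indirect(idx, node, i):
    """Indirect addressing: one extra index load per non-rest direction."""
    return node if i == 0 else idx[node * (Q - 1) + (i - 1)]


nx, ny = 4, 3
n_nodes = nx * ny
idx = build_index(nx, ny)

# Stride between the same direction of two consecutive nodes.
aos_stride = aos_index(1, 3, Q) - aos_index(0, 3, Q)              # Q elements
soa_stride = soa_index(1, 3, n_nodes) - soa_index(0, 3, n_nodes)  # 1 element

# Both addressing schemes agree on the neighbor of node (x=2, y=1).
node = 1 * nx + 2
assert neighbor_indirect(idx, node, 5) == neighbor_direct(2, 1, 5, nx, ny)
```

The stride comparison shows why the layout matters for streaming access: in SoA, a sweep over consecutive nodes touches each direction's array contiguously, whereas AoS keeps one node's PDFs together.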

A performance model based on the measured memory bandwidth of the target CPUs (Intel Xeon and AMD Opteron/EPYC families) and the cache‑line size (128 B) predicts the theoretical maximum node‑update rate for each algorithm. Since LBM is typically bandwidth‑bound, the model’s predictions align closely with measured performance when the implementation respects the memory‑traffic estimates.
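A bandwidth‑bound estimate of this kind can be sketched as attainable bandwidth divided by the bytes moved per node update. The sketch below interprets the per‑node counts as numbers of PDF and index transfers and assumes 8‑byte (double precision) PDFs and 4‑byte index entries. The 40 GB/s bandwidth is a made‑up illustrative value, not a measurement from the paper, and the function name is hypothetical.

```python
def max_node_updates_per_second(bandwidth_bytes_per_s, pdf_transfers,
                                idx_transfers=0, pdf_bytes=8, idx_bytes=4):
    """Upper bound on node updates per second for a bandwidth-bound kernel.

    pdf_bytes=8 (double precision) and idx_bytes=4 are assumptions.
    """
    bytes_per_update = pdf_transfers * pdf_bytes + idx_transfers * idx_bytes
    return bandwidth_bytes_per_s / bytes_per_update


q = 9              # D2Q9, as in the benchmark case mentioned in the summary
bandwidth = 40e9   # hypothetical attainable bandwidth in bytes per second

# OS-NT counts as quoted in the summary: 2q PDF transfers for a full array,
# plus (q - 1) index loads when indirect addressing is used.
direct = max_node_updates_per_second(bandwidth, 2 * q)
indirect = max_node_updates_per_second(bandwidth, 2 * q, idx_transfers=q - 1)

print(f"direct:   {direct / 1e6:.1f} million node updates/s")
print(f"indirect: {indirect / 1e6:.1f} million node updates/s")
```

Because the bound is inversely proportional to the bytes per update, halving the number of PDF transfers doubles the predicted ceiling, which is the reasoning behind preferring low‑traffic propagation schemes.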

Benchmarking is performed on a D2Q9 porous‑media flow case using four compute nodes: Intel Xeon E5‑2670, Xeon Gold 6148, AMD Opteron 6176, and AMD EPYC 7742. The results confirm the model: TS delivers the lowest throughput, OS and OS‑NT improve performance by a factor of ~2, CG and SWAP are slightly slower than OS, while the AA‑pattern consistently achieves the highest measured throughput, within 5 % of the model’s upper bound. The Esoteric Twist, despite its theoretically minimal traffic, attains performance close to the AA‑pattern but is marginally lower due to the overhead of pointer manipulation and more complex control flow. On AMD platforms, the benefit of non‑temporal stores is limited by the hardware’s restriction on the number of concurrent non‑temporal streams, sometimes causing a performance drop.

Key insights include: (1) the number of memory transfers per node is the dominant factor for LBM performance on modern CPUs; (2) SoA layouts generally provide better cache utilization than AoS; (3) indirect addressing adds a modest overhead proportional to the size of the index array; (4) advanced propagation schemes such as AA‑pattern and Esoteric Twist, while more complex to implement, deliver substantial speed‑ups by drastically reducing bandwidth demand; (5) non‑temporal stores can be advantageous but must be used with awareness of hardware limits.

The authors conclude that high‑performance LBM codes should be designed around minimizing memory traffic. When targeting bandwidth‑limited architectures, the AA‑pattern or Esoteric Twist should be preferred despite their implementation complexity. The presented bandwidth‑based performance model is a useful tool for predicting achievable performance and guiding algorithmic choices on a given hardware platform.
