On the Importance of Consistency in Training Deep Neural Networks

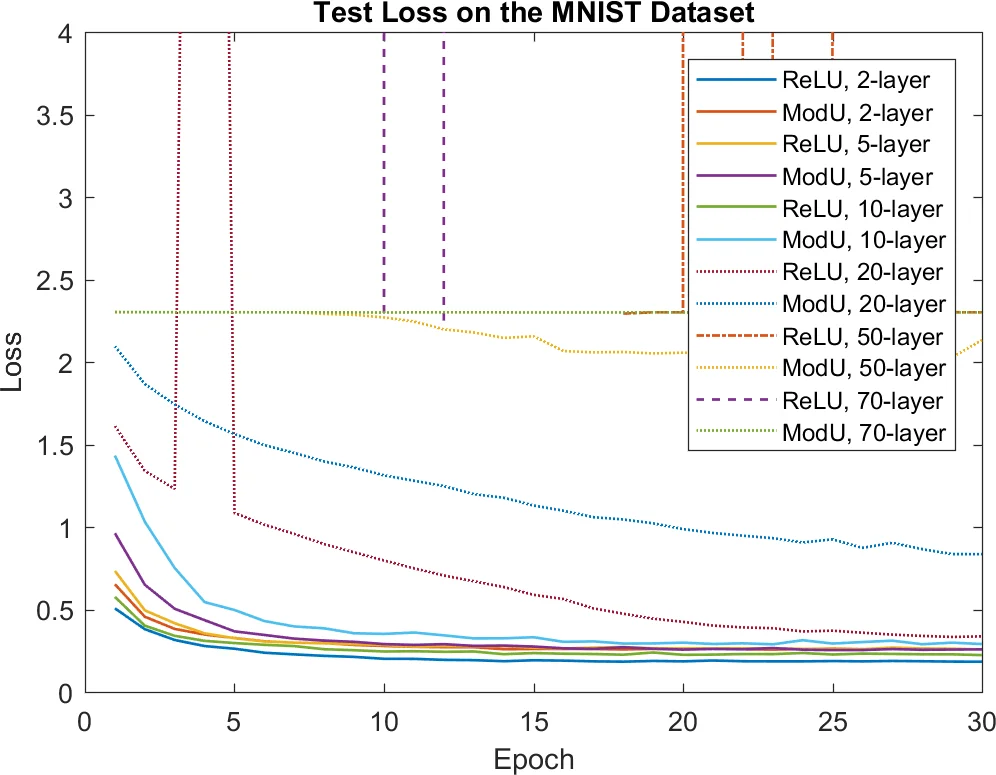

We explain that the difficulties of training deep neural networks come from a syndrome of three consistency issues. This paper describes our efforts in their analysis and treatment. The first issue is the inconsistency of training speed across layers. We propose to address it with an intuitive, simple-to-implement, low-footprint second-order method. The second issue is the scale inconsistency between the layer inputs and the layer residuals. We explain how second-order information makes it convenient to remove this roadblock. The third and most challenging issue is the inconsistency in residual propagation. Based on the fundamental theorem of linear algebra, we provide a mathematical characterization of the famous vanishing gradient problem. From this we derive an important design principle for future optimization methods and neural network design. We conclude this paper with the construction of a novel contractive neural network.

💡 Research Summary

The paper “On the Importance of Consistency in Training Deep Neural Networks” argues that the persistent difficulty of training deep neural networks stems from three intertwined consistency problems: (1) inconsistent training speed across layers, (2) scale mismatch between layer inputs and residuals, and (3) inconsistency in residual (gradient) propagation, commonly known as the vanishing gradient problem. The authors propose a unified solution framework based on second‑order optimization, simple RMS‑based normalization, and an operator‑theoretic analysis that together restore consistency at every stage of training.

Layer‑wise speed consistency.

The authors model each layer as a local linear system with input matrix \(X_i\) and residual \(R_i = \partial z / \partial y_i\). They formulate a per‑layer minimization problem \(\min_P \frac12\|P X_i + R_i\|^2\) and show that, with a damping term \(\lambda\), its solution satisfies the regularized normal equations \(P (X_i X_i^\top + \lambda I) = -R_i X_i^\top\). Since \(\partial z / \partial W_i = R_i X_i^\top\), interpreting \((X_i X_i^\top + \lambda I)^{-1}\) as a pre‑conditioner gives the update \(P = -(\partial z / \partial W_i)\,(X_i X_i^\top + \lambda I)^{-1}\), which is a layer‑wise Levenberg‑Marquardt (or Newton) step. This yields a curvature‑aware descent direction that automatically adapts the effective learning rate for each layer, eliminating the bottleneck caused by a single global step size. The authors also discuss practical implementation. For layers with a few hundred hidden units, direct matrix multiplication and inversion are feasible. For larger layers they propose stochastic chunking, in which hidden units are randomly partitioned into manageable groups so that the matrix operations stay fast without sacrificing accuracy.
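The update above can be sketched in NumPy. This is a minimal illustration under stated assumptions, not the authors' implementation: the function names `layer_step` and `chunked_layer_step`, the array layout (features by batch), and the default value of `lam` are all hypothetical. The chunked variant assumes that "stochastic chunking" means a random partition of the input units with an independent solve per group, which amounts to a block‑diagonal approximation of \(X_i X_i^\top\); the summary does not spell out the exact scheme.

```python
import numpy as np

def layer_step(X, R, lam=1e-3):
    """Solve P (X X^T + lam I) = -R X^T for one layer.

    X: (n_in, batch) layer inputs; R: (n_out, batch) residuals dz/dy.
    Returns P with shape (n_out, n_in). Names and layout are illustrative.
    """
    n_in = X.shape[0]
    grad_W = R @ X.T                       # dz/dW = R X^T
    A = X @ X.T + lam * np.eye(n_in)       # symmetric positive definite for lam > 0
    # A is symmetric, so P A = -grad_W is equivalent to A P^T = -grad_W^T.
    return -np.linalg.solve(A, grad_W.T).T

def chunked_layer_step(X, R, chunk_size, lam=1e-3, rng=None):
    """Assumed form of stochastic chunking: randomly partition the input
    units into groups and solve a small system per group."""
    rng = np.random.default_rng() if rng is None else rng
    n_in = X.shape[0]
    perm = rng.permutation(n_in)
    P = np.zeros((R.shape[0], n_in))
    for start in range(0, n_in, chunk_size):
        idx = perm[start:start + chunk_size]
        P[:, idx] = layer_step(X[idx], R, lam)
    return P
```

Using a linear solve rather than forming the explicit inverse is a numerical choice made here, not something the summary prescribes. In a training loop, `P` would presumably be applied as \(W_i \leftarrow W_i + \eta P\) for some step size \(\eta\), which is likewise an assumption.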

Scale consistency.

Empirical observation shows that early layers receive inputs in (
