Debugging Transactions and Tracking their Provenance with Reenactment

Debugging transactions and understanding their execution are of immense importance for developing OLAP applications, to trace causes of errors in production systems, and to audit the operations of a database. However, debugging transactions is hard for several reasons: 1) after the execution of a transaction, its input is no longer available for debugging, 2) internal states of a transaction are typically not accessible, and 3) the execution of a transaction may be affected by concurrently running transactions. We present a debugger for transactions that enables non-invasive, post-mortem debugging of transactions with provenance tracking and supports what-if scenarios (changes to transaction code or data). Using reenactment, a declarative replay technique we have developed, a transaction is replayed over the state of the DB seen by its original execution including all its interactions with concurrently executed transactions from the history. Importantly, our approach uses the temporal database and audit logging capabilities available in many DBMS and does not require any modifications to the underlying database system nor transactional workload.

💡 Research Summary

The paper addresses the long‑standing challenge of debugging database transactions after they have executed, especially in environments where concurrency anomalies and low isolation levels make bugs hard to reproduce. Traditional transaction debuggers either require a separate development copy of the database (violating the “no‑change” requirement) or treat SQL statements as opaque black boxes, offering no insight into intermediate tuple versions or data flow. Moreover, most DBMS time‑travel facilities only expose committed snapshots, discarding the transient states that exist within a transaction’s execution. The authors identify four core obstacles (C1–C4): (1) debugging must not alter production data; (2) past database states are transient; (3) data flow inside SQL statements is invisible; (4) non‑serializable isolation levels (e.g., Snapshot Isolation) introduce anomalies such as write‑skew that are difficult to reproduce.

To overcome these obstacles, the authors propose a novel “reenactment” technique. Reenactment leverages two widely available DBMS features: audit logging (which records every executed SQL statement together with timestamps, parameters, and transaction identifiers) and temporal tables / time‑travel queries (which allow access to past versions of data). By extracting the exact sequence of DML statements of a target transaction, together with the timestamps of each statement, the system reconstructs the exact snapshot of the database that the transaction saw at each step. It then re‑executes the statements as a pure SQL query, preserving the original isolation level (the paper demonstrates support for both Serializable and Read‑Committed under Snapshot Isolation). Because the reenactment query is expressed entirely in SQL, it can be run on any DBMS that supports audit logs and temporal queries, without any modification to the underlying engine.

Provenance tracking is achieved by instrumenting the reenactment query with additional columns that capture the lineage of each generated tuple: the originating transaction, the input tuple identifiers, and the transformation applied. The result is a provenance graph where nodes represent tuple versions and edges denote derivation relationships. This graph can be visualized interactively, allowing a developer to trace exactly which input rows contributed to a particular output row and how intermediate updates evolved.

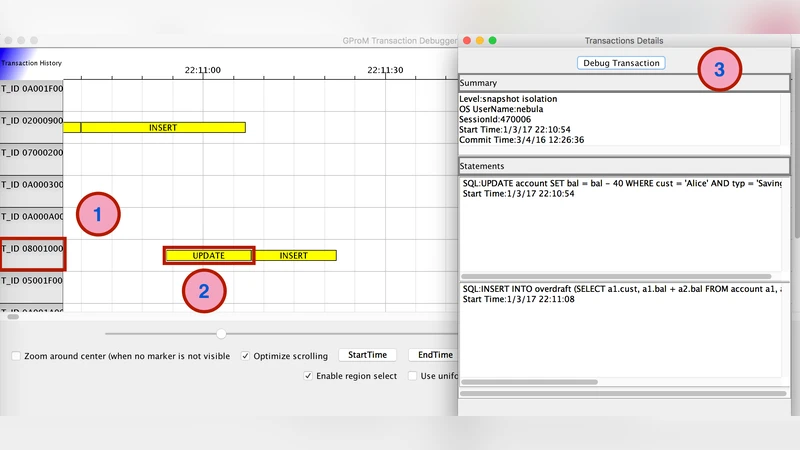

The paper also introduces a graphical debugger built on top of this technology. The main view shows a timeline of transactions extracted from the audit log; selecting a transaction opens a detail pane with metadata (isolation level, commit time, user, session, and the SQL text of each statement). Clicking “Debug Transaction” opens a panel that displays, column‑wise, the initial state of each accessed table and the state after each statement. Rows affected by the transaction are highlighted, and clicking any row pops up its provenance graph. Users can toggle the visibility of unaffected rows or filter tables to reduce visual clutter.

A key feature is support for “what‑if” scenarios. Users may (1) edit data in a table, which the system captures in a temporary version and substitutes in the reenactment query, or (2) modify the transaction’s SQL (add, delete, or change statements). The system then recomputes the reenactment, instantly showing how the changes would affect the outcome. The paper illustrates this with a “promotion” technique: adding a dummy UPDATE that forces the transaction to abort when a concurrent execution would otherwise cause a write‑skew, thereby preventing the anomaly.

Performance evaluation shows that enabling audit logging and time‑travel incurs modest overhead (≈5 % for mixed workloads, ≈20 % for write‑only workloads), which is acceptable given that many production systems already enable these features for compliance reasons. Moreover, because reenactment operates on read‑only snapshots, it never mutates live data, satisfying the non‑intrusive requirement (C1). The approach also reconstructs intermediate tuple versions, addressing C2, and provides full data‑flow visibility, addressing C3. Finally, by faithfully reproducing the interleaving of concurrent transactions under Snapshot Isolation, it enables debugging of anomalies that arise under non‑serializable isolation (C4).

In summary, the authors deliver a practical, DBMS‑agnostic framework that combines audit‑log‑driven reconstruction, temporal query‑based state recovery, and SQL‑level provenance instrumentation to enable post‑mortem, non‑invasive debugging of transactions. The system not only reveals the hidden data‑flow inside SQL statements but also empowers developers to experiment with hypothetical changes, making it valuable for OLAP application development, production error diagnosis, and regulatory auditing.

Comments & Academic Discussion

Loading comments...

Leave a Comment