Photographic Image Synthesis with Cascaded Refinement Networks

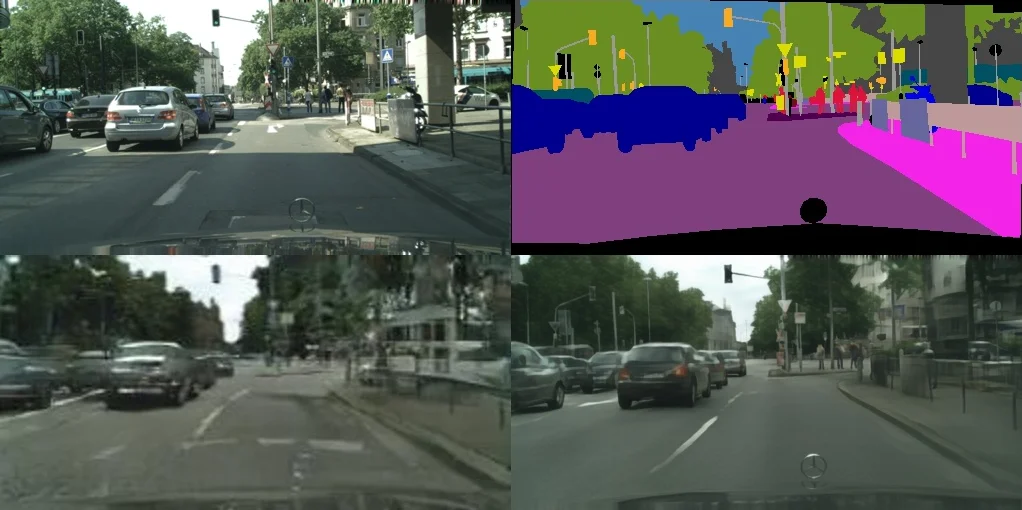

We present an approach to synthesizing photographic images conditioned on semantic layouts. Given a semantic label map, our approach produces an image with photographic appearance that conforms to the input layout. The approach thus functions as a rendering engine that takes a two-dimensional semantic specification of the scene and produces a corresponding photographic image. Unlike recent and contemporaneous work, our approach does not rely on adversarial training. We show that photographic images can be synthesized from semantic layouts by a single feedforward network with appropriate structure, trained end-to-end with a direct regression objective. The presented approach scales seamlessly to high resolutions; we demonstrate this by synthesizing photographic images at 2-megapixel resolution, the full resolution of our training data. Extensive perceptual experiments on datasets of outdoor and indoor scenes demonstrate that images synthesized by the presented approach are considerably more realistic than alternative approaches. The results are shown in the supplementary video at https://youtu.be/0fhUJT21-bs

💡 Research Summary

The paper introduces a novel approach for synthesizing photorealistic images from pixel‑wise semantic label maps, termed the Cascaded Refinement Network (CRN). Unlike most contemporary image‑to‑image translation methods that rely on Generative Adversarial Networks (GANs), CRN is a single feed‑forward convolutional network trained end‑to‑end with a perceptual (feature‑matching) loss. The authors treat the task as an “inverse semantic segmentation” problem: given a semantic layout L (a one‑hot tensor of size m × n × c), the network g(L; θ) must produce a color image I ∈ ℝ^{m×n×3} that respects the layout. Because many different photographs can correspond to the same layout, the problem is inherently one‑to‑many and under‑constrained, making the choice of loss crucial.

Network architecture

CRN consists of a cascade of refinement modules M_i, each operating at a specific resolution. The first module M_0 receives a heavily down‑sampled layout (4 × 8) and outputs an initial feature map F_0. Subsequent modules double the spatial resolution (e.g., 8 × 16, 16 × 32, …) and each takes as input the concatenation of the layout down‑sampled to the current resolution and the previous module’s feature map up‑sampled by bilinear interpolation. No transposed convolutions are used, avoiding checkerboard artifacts. Within a module, three convolutional layers are employed: an input layer, an intermediate layer, and an output layer. Each layer is followed by a 3 × 3 convolution, layer normalization, and a leaky ReLU. The final module’s output is linearly projected (1 × 1 convolution) to three RGB channels. The number of feature channels d_i is large (1024 for the first five modules, then 512, 128, and 32) resulting in a total of about 105 M parameters, which the authors argue is necessary to store the “memory” required for high‑resolution detail.

Training loss

Instead of a pixel‑wise L2 loss, the authors adopt a perceptual loss based on a pre‑trained VGG‑19 network Φ. For a set of layers {Φ_l} (conv1_2 through conv5_2), the loss is L(θ)=∑_l λ_l‖Φ_l(I)−Φ_l(g(L;θ))‖_1. The λ coefficients are initially set to the inverse of the number of elements in each layer and later rescaled after 100 epochs to balance contributions. This loss encourages the synthesized image to match the reference image in both low‑level texture and high‑level semantic structure, while tolerating legitimate variations (e.g., a different car color).

Generating diverse outputs

To address the one‑to‑many nature, the authors modify the network to output 3 × k channels, producing k images in a single forward pass. An additional diversity‑promoting term in the loss encourages the k images to be different from each other, allowing a single layout to yield a collection of plausible photographs.

Experiments

The method is evaluated on Cityscapes (outdoor urban scenes, up to 1024 × 2048) and NYU‑Depth V2 (indoor scenes). Baselines include Pix2Pix (GAN‑based), a GAN‑augmented version of the cascade, and VAE‑based models. Human perceptual studies on Amazon Mechanical Turk show that CRN’s outputs are judged significantly more realistic than any baseline. Quantitative metrics such as SSIM and LPIPS also favor CRN, especially at higher resolutions where GAN‑based methods suffer from blur or mode collapse. The authors demonstrate that adding a new refinement module simply doubles the resolution, confirming the scalability of the architecture.

Strengths and limitations

Key strengths are the elimination of unstable adversarial training, the ability to synthesize high‑resolution images (up to 2 MP) with coherent global structure, and the use of a perceptual loss that balances detail and semantics. The cascade design efficiently propagates global layout information from low‑resolution stages to fine‑grained details at higher stages. However, diversity control remains coarse; the method cannot explicitly condition on attributes like lighting or material beyond what is implicitly learned from the dataset. The reliance on VGG‑19 for perceptual loss may limit the capture of textures not well represented in ImageNet‑trained features. Moreover, the large model size demands substantial GPU memory, which could hinder deployment on resource‑constrained platforms.

Conclusion and future work

The paper proves that a well‑designed feed‑forward network, trained with a perceptual loss, can rival or surpass GAN‑based approaches for semantic‑layout‑to‑image synthesis, even at very high resolutions. Future directions suggested include integrating stochastic latent variables (e.g., conditional VAEs) for finer‑grained diversity, adding explicit style or illumination controls, and exploring more lightweight architectures that retain quality while reducing memory footprints. Overall, the work offers a compelling alternative to adversarial methods for conditional image generation and opens avenues for practical, high‑fidelity rendering from semantic specifications.

Comments & Academic Discussion

Loading comments...

Leave a Comment