LanideNN: Multilingual Language Identification on Character Window

In language identification, a common first step in natural language processing, we want to automatically determine the language of some input text. Monolingual language identification assumes that the given document is written in one language. In multilingual language identification, the document is usually in two or three languages and we just want their names. We aim one step further and propose a method for textual language identification where languages can change arbitrarily and the goal is to identify the spans of each of the languages. Our method is based on Bidirectional Recurrent Neural Networks and it performs well in monolingual and multilingual language identification tasks on six datasets covering 131 languages. The method keeps the accuracy also for short documents and across domains, so it is ideal for off-the-shelf use without preparation of training data.

💡 Research Summary

The paper introduces LanideNN, a character‑level language identification system that can handle both monolingual and multilingual texts, even when languages switch arbitrarily within a document. The core idea is to slide a fixed‑size window of 200 Unicode characters over the input, embed each character into a 200‑dimensional vector, and feed the sequence into a bidirectional recurrent neural network built from Gated Recurrent Units (GRUs). The forward and backward GRU streams each contain 500 hidden units; their hidden states are summed and passed through a soft‑max layer that outputs a probability distribution over 132 classes (131 languages plus an HTML tag class). The language label for each character is simply the class with the highest probability.

Training uses the Adam optimizer (learning rate = 0.0001, batch size = 64) and a cross‑entropy loss. To mitigate over‑fitting, dropout with a rate of 0.5 is applied to the character‑embedding layer. The model is trained for roughly 530 k steps on a single GTX Titan Z GPU, taking about five days, and early stopping is based on development‑set error.

A major contribution is the construction of a large, balanced multilingual corpus. Starting from the Wikipedia‑derived W2C collection (106 languages), the authors augment it with Tatoeba (307 languages), Leipzig corpora (220 languages), EMILLE (14 Indian languages), and Haitian Creole SMS data. After extensive cleaning, deduplication, and line‑level interleaving, they retain 131 languages plus a special HTML tag class. To reflect real‑world language prevalence, they cap the number of characters per language (up to 10 M for the most spoken languages, 5 M for the next tier, and 1 M for the rest). The final training set is split into non‑overlapping training, development, and test partitions. The test set consists of 100 short lines per language (average length 142 characters), shuffled and stripped of line‑break markers so that the model must infer language boundaries without relying on explicit delimiters.

Evaluation is performed on three well‑known monolingual benchmarks compiled by Baldwin and Lui (2010): EuroGov (Western European government texts), TCL (a multilingual news collection with varied encodings), and a Wikipedia dump. LanideNN achieves 97.7 % accuracy on EuroGov, 95.4 % on TCL, and 89.3 % on Wikipedia, outperforming or matching established tools such as Langid.py (97.0 %/98.7 %/91.3 %), CLD2, and TextCat. Notably, LanideNN maintains high performance on the TCL set despite its heterogeneous encodings, because all inputs are converted to Unicode before processing.

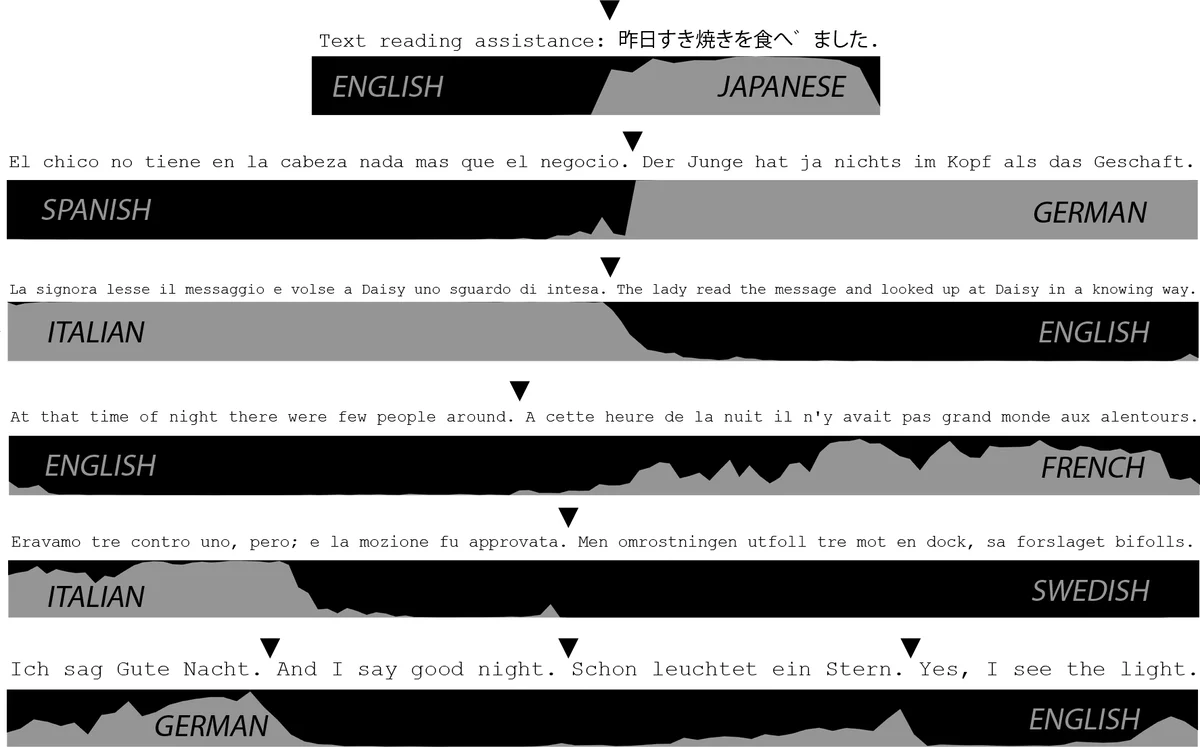

Beyond monolingual classification, the authors demonstrate the system’s ability to label each character, which enables detection of language switches at a fine granularity. Visualizations show that the model quickly learns to assign the same label to consecutive characters belonging to the same language, while correctly flipping labels at true code‑switch points. This capability is especially valuable for studying code‑switching phenomena in social media, email, or chat logs, where word boundaries may be ambiguous or scripts may be mixed.

Strengths of LanideNN include: (1) No need for language‑specific preprocessing or tokenization; raw Unicode characters are sufficient. (2) Robustness to short texts and domain shifts, making it an “off‑the‑shelf” solution for a wide range of applications. (3) Coverage of a large number of languages (131) within a single model, simplifying deployment. (4) Ability to produce per‑character language tags, facilitating downstream tasks such as span extraction or multilingual downstream processing.

Limitations are also acknowledged. The fixed 200‑character, non‑overlapping window can cause boundary artifacts for longer documents, as a language change occurring near a window edge may be missed or mis‑classified. The training data are not publicly released due to licensing constraints, which hampers reproducibility and community benchmarking. Languages with fewer training characters (capped at 1 M) may still suffer from insufficient representation, potentially leading to lower accuracy on low‑resource languages. Finally, the model cannot handle languages that were not present in the training set, limiting its applicability in truly open‑world scenarios.

Future work suggested by the authors includes: (a) Introducing overlapping windows or a hierarchical architecture to capture longer‑range dependencies while preserving fine‑grained labeling. (b) Exploring transformer‑based encoders or leveraging multilingual pretrained models (e.g., mBERT, XLM‑R) to improve representation power and to better handle unseen languages. (c) Applying data‑augmentation or meta‑learning techniques to alleviate the imbalance among language resources. (d) Extending the system to an open‑set setting where unknown languages are detected and flagged rather than forced into one of the known classes.

In conclusion, LanideNN represents a practical, high‑accuracy solution for character‑level language identification across a broad spectrum of languages and domains. Its ability to operate on raw Unicode streams, maintain performance on short and noisy texts, and provide per‑character language tags makes it especially suitable for modern multilingual web content, social media analysis, and any pipeline that requires robust language detection without extensive preprocessing or language‑specific tuning.

Comments & Academic Discussion

Loading comments...

Leave a Comment