Generalized Common Informations: Measuring Commonness by the Conditional Maximal Correlation

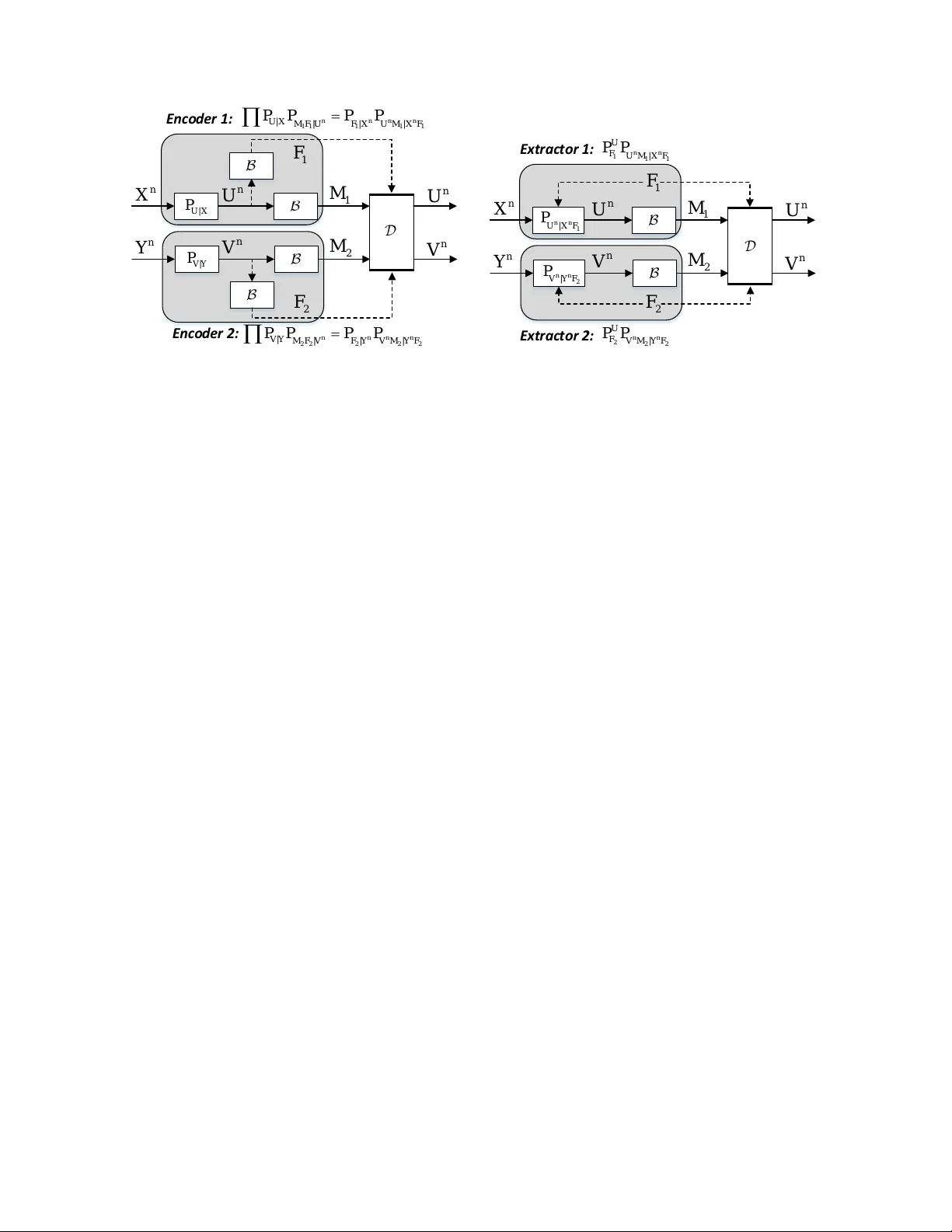

In the literature, different common informations were defined by Gács and Körner, by Wyner, and by Kumar, Li, and El Gamal. In this paper, we define two generalized versions of common informations, named approximate and exact information-c…

Authors: Lei Yu, Houqiang Li, Chang Wen Chen