Large volume of Genomics data is produced on daily basis due to the advancement in sequencing technology. This data is of no value if it is not properly analysed. Different kinds of analytics are required to extract useful information from this raw data. Classification, Prediction, Clustering and Pattern Extraction are useful techniques of data mining. These techniques require appropriate selection of attributes of data for getting accurate results. However, Bioinformatics data is high dimensional, usually having hundreds of attributes. Such large a number of attributes affect the performance of machine learning algorithms used for classification/prediction. So, dimensionality reduction techniques are required to reduce the number of attributes that can be further used for analysis. In this paper, Principal Component Analysis and Factor Analysis are used for dimensionality reduction of Bioinformatics data. These techniques were applied on Leukaemia data set and the number of attributes was reduced from to.

Deep Dive into Using PCA and Factor Analysis for Dimensionality Reduction of Bio-informatics Data.

Large volume of Genomics data is produced on daily basis due to the advancement in sequencing technology. This data is of no value if it is not properly analysed. Different kinds of analytics are required to extract useful information from this raw data. Classification, Prediction, Clustering and Pattern Extraction are useful techniques of data mining. These techniques require appropriate selection of attributes of data for getting accurate results. However, Bioinformatics data is high dimensional, usually having hundreds of attributes. Such large a number of attributes affect the performance of machine learning algorithms used for classification/prediction. So, dimensionality reduction techniques are required to reduce the number of attributes that can be further used for analysis. In this paper, Principal Component Analysis and Factor Analysis are used for dimensionality reduction of Bioinformatics data. These techniques were applied on Leukaemia data set and the number of attributes

(IJACSA) International Journal of Advanced Computer Science and Applications,

Vol. 8, No. 5, 2017

415 | P a g e

www.ijacsa.thesai.org

Using PCA and Factor Analysis for Dimensionality

Reduction of Bio-informatics Data

M. Usman Ali

Department of Computer Science

COMSATS Institute of Information

Technology

Sahiwal, Pakistan

Shahzad Ahmed

Department of Computer Science

COMSATS Institute of Information

Technology

Sahiwal, Pakistan

Javed Ferzund

Department of Computer Science

COMSATS Institute of Information

Technology

Sahiwal, Pakistan

Atif Mehmood

Riphah Institute of Computing and Applied Sciences (RICAS)

Riphah International University

Lahore, Pakistan

Abbas Rehman

Department of Computer Science

COMSATS Institute of Information Technology

Sahiwal, Pakistan

Abstract—Large volume of Genomics data is produced on

daily basis due to the advancement in sequencing technology.

This data is of no value if it is not properly analysed. Different

kinds of analytics are required to extract useful information from

this raw data. Classification, Prediction, Clustering and Pattern

Extraction are useful techniques of data mining. These

techniques require appropriate selection of attributes of data for

getting accurate results. However, Bioinformatics data is high

dimensional, usually having hundreds of attributes. Such large a

number of attributes affect the performance of machine learning

algorithms used for classification/prediction. So, dimensionality

reduction techniques are required to reduce the number of

attributes that can be further used for analysis. In this paper,

Principal Component Analysis and Factor Analysis are used for

dimensionality

reduction

of

Bioinformatics

data.

These

techniques were applied on Leukaemia data set and the number

of attributes was reduced from to.

Keywords—Bioinformatics; Statistics; Microarray; Leukaemia;

Feature Selection; Statistical tests; PCA; Factor Analysis; R tool

I.

INTRODUCTION

Bioinformatics experiments are based on Genome, DNA,

RNA and Chromosomes. Genomics plays an imperative role in

this field. Huge amount of data has been produced in Genomics

with a substantial portion produced in Functional Genomics (in

the form of protein-protein association), Structural Genomics

(in the form of 3-D structure). By using NGS (Next Generation

Sequencing) technique, a lot of work has been done in the field

of Microarray. This technique is helpful to identify human

diseases. NGS is sequencing technique which is used to detect

sequences of proteomics for next generation.

Genetic Diseases are caused by Genetic disorders that are

more complex because of multiple genes interaction. These

disorders are breast cancer, colon cancer, skin cancer, autism,

progeria, and haemophilia. These are caused by mutation in

genes or sometimes inherit from parents. Leukaemia is a

cancer of blood cells that occurs due to genome abnormality.

Microarray includes genes expression data that is present at

large scale.

Bioinformatics data needs to be store in an efficient manner

and include a lot of Attributes (Variables). The major problem

is that, most of tools crash when large data stored in it.

Statistics

plays

superlative

role

in

the

field

of

Bioinformatics, Mathematics and Computer Science. It is used

to extract, organise, analyse and visualise large amount of data.

. For this purpose, a lot of tools like Excel, Weka, Matlab and

R are available. Many Statistical tests are used for the

extraction of relevant information. These are t-test, chi-squared

test (χ2-test), ANOVA (Analysis of Variance), Kruskal-Wallis,

Friedman and PCA (Principle Component Analysis) tests [1]

Statistical t-test is used to check the difference between sample

Mean and hypothesised value. ANOVA is parametric

(distribution) test used to check the difference of dependent

variables with levels of independent variables. Kruskal-Wallis

is non-parametric (distribution free) test in which assumptions

are not including unlike ANOVA. Friedman test is used when

there is one distributed dependent variable and one

independent variable with two or many levels. It is used to

check the difference in reading and math scores and writing.

Chi-squared test is used to compare the observed data with data

according to specific hypothesis. PCA test is used to

Select/Extract relevant and specific information about variables

(attributes) in large dataset. In PCA, correlation is found

between principal components and original data. All of these

tests applied in Statistical tool.

R is open source Statistical tool that is used for loading,

extracting, interpretation and analysis of data. It includes many

operations such as standard deviation, correlation, mean,

variance, median, mode, graphs, plot, charts, and histograms. It

automatically loads libraries and packages. It performs

Machine Learning Classification and Clustering tasks very

quickly and effective

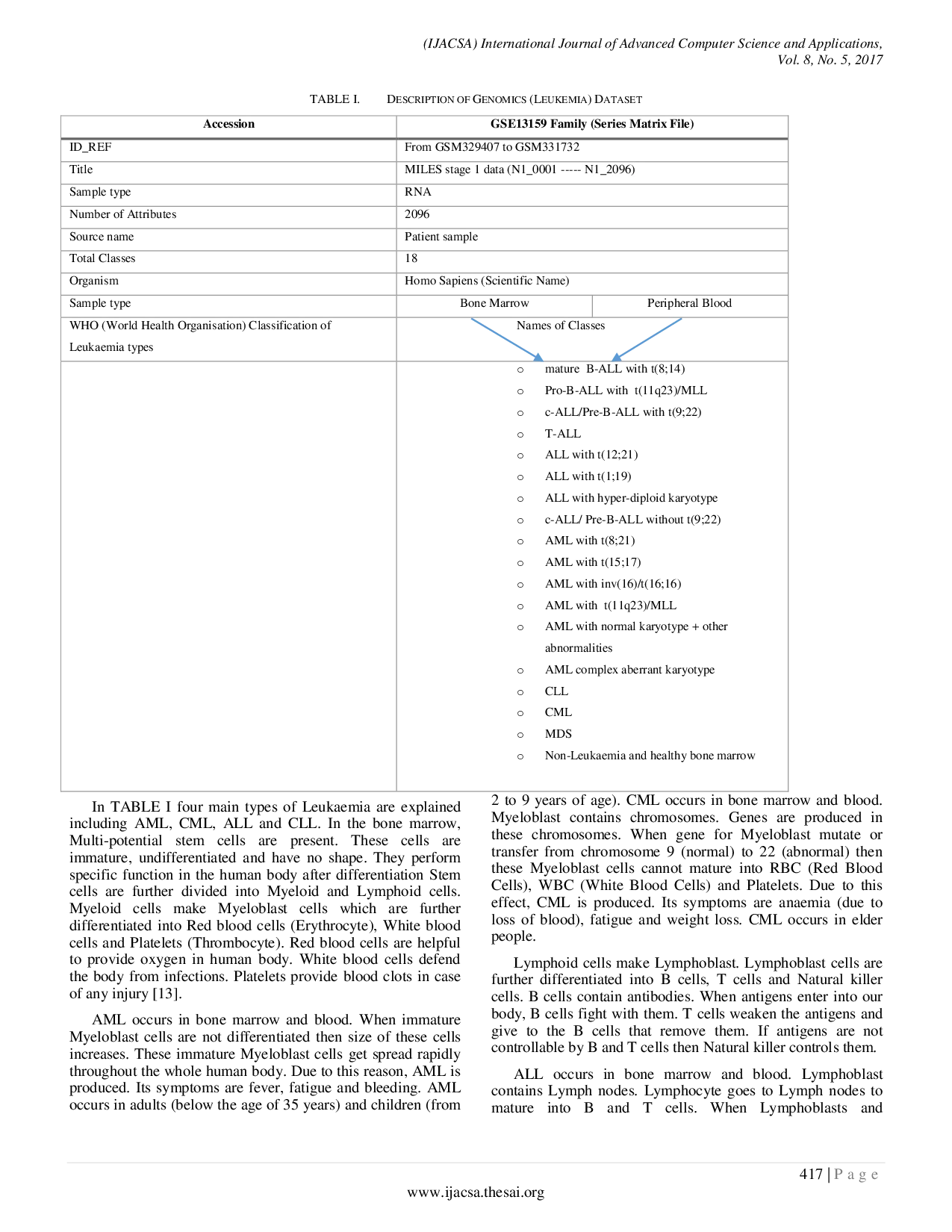

…(Full text truncated)…

This content is AI-processed based on ArXiv data.