Using PCA and Factor Analysis for Dimensionality Reduction of Bio-informatics Data

A large volume of genomics data is produced daily owing to advances in sequencing technology. This data is of no value unless it is properly analysed. Different kinds of analytics are required to extract useful information from the raw data. Classification, prediction, clustering and pattern extraction are useful data mining techniques, and they require an appropriate selection of data attributes to produce accurate results. However, bioinformatics data is high dimensional, usually having hundreds of attributes. Such a large number of attributes degrades the performance of the machine learning algorithms used for classification and prediction. Dimensionality reduction techniques are therefore required to reduce the number of attributes used in further analysis. In this paper, Principal Component Analysis and Factor Analysis are used for dimensionality reduction of bioinformatics data. These techniques were applied to a leukaemia data set and the number of attributes was reduced from to.

💡 Research Summary

The paper addresses the growing challenge of analyzing high‑dimensional genomic data generated by modern sequencing technologies. Because such data often contain thousands of variables (e.g., gene expression levels, SNPs, methylation sites), traditional machine learning models suffer from the “curse of dimensionality,” leading to over‑fitting, increased computational cost, and reduced interpretability. To mitigate these issues, the authors investigate two classical linear dimensionality‑reduction techniques—Principal Component Analysis (PCA) and Factor Analysis (FA)—and evaluate their effectiveness on a publicly available leukemia microarray dataset.

Dataset and preprocessing

The study uses a leukemia dataset comprising 72 samples (47 acute lymphoblastic leukemia, 25 acute myeloid leukemia) with approximately 7,129 gene expression measurements per sample. Missing values are removed, a log2 transformation is applied, and each feature is standardized to zero mean and unit variance (Z‑score).
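The preprocessing steps described above might look roughly like the following sketch. It assumes that "missing values are removed" means dropping any gene with a missing measurement, and it adds a hypothetical `floor` parameter so that the log2 transform is defined for non-positive intensities. Neither detail is confirmed by the summary.

```python
import numpy as np


def preprocess(expr, floor=1.0):
    """Drop incomplete genes, log2-transform, then z-score each gene.

    expr  : array of shape (n_samples, n_genes) with raw intensities.
    floor : hypothetical lower clip applied before log2 (an assumption,
            not a value taken from the paper).
    """
    expr = np.asarray(expr, dtype=float)

    # Assumption: a gene (column) with any missing value is removed.
    complete = ~np.isnan(expr).any(axis=0)
    expr = expr[:, complete]

    # Clip so that log2 never sees zero or negative intensities.
    logged = np.log2(np.clip(expr, floor, None))

    # Z-score per gene; constant genes are dropped to avoid dividing by zero.
    mean = logged.mean(axis=0)
    std = logged.std(axis=0)
    varying = std > 0
    return (logged[:, varying] - mean[varying]) / std[varying]


# Synthetic stand-in for an expression matrix (not the leukemia data).
rng = np.random.default_rng(0)
raw = rng.lognormal(mean=6.0, sigma=1.0, size=(72, 50))
raw[3, 7] = np.nan  # one missing measurement
z = preprocess(raw)
print(z.shape)
```

When the data are later split for cross-validation, computing the mean and standard deviation on the training folds only is the more cautious choice; the version here standardizes the whole matrix at once for brevity.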

Methodology

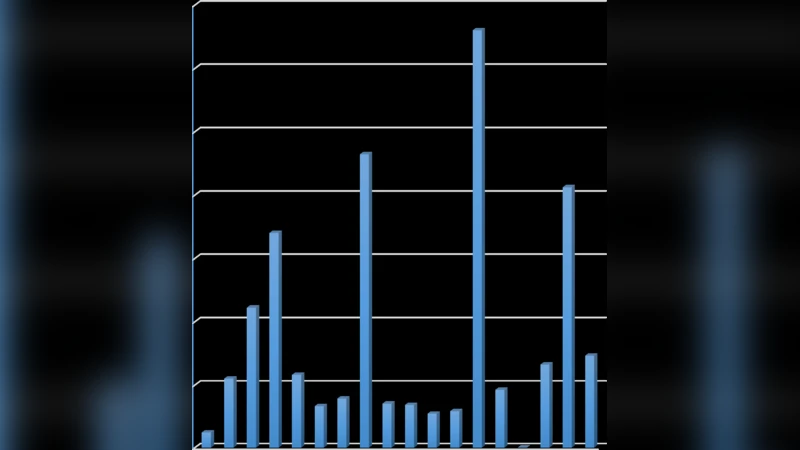

- PCA – The covariance matrix of the standardized data is decomposed, and eigenvalues are examined via a scree plot and the Kaiser criterion (eigenvalue > 1). Fifteen principal components are retained, capturing about 85 % of the total variance.

- FA – Maximum‑likelihood estimation is used to extract latent factors. Bartlett’s test of sphericity and the Kaiser‑Meyer‑Olkin (KMO) measure confirm factorability. Twelve factors are kept based on eigenvalues and the scree plot.
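As a rough illustration of the two reduction steps above, the sketch below uses scikit-learn on a synthetic matrix rather than the leukemia data. The component counts (15 and 12) come from the summary; the matrix size, the random data, and the variable names are assumptions. Bartlett's test and the KMO measure are not covered here.

```python
import numpy as np
from sklearn.decomposition import PCA, FactorAnalysis

# Synthetic stand-in: 72 samples, 200 features (kept small so it runs quickly).
rng = np.random.default_rng(0)
X = rng.standard_normal((72, 200))
# Standardize with ddof=1 so the covariance matrix equals the correlation matrix.
X = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# Full PCA to inspect the eigenvalue spectrum (the values a scree plot shows).
pca_full = PCA().fit(X)
eigenvalues = pca_full.explained_variance_

# Kaiser criterion: count components whose eigenvalue exceeds 1.
kaiser_k = int(np.sum(eigenvalues > 1.0))

# Smallest number of components whose cumulative variance reaches 85 %.
cumulative = np.cumsum(pca_full.explained_variance_ratio_)
k_85 = int(np.searchsorted(cumulative, 0.85) + 1)

# Reduced representations with the counts reported in the summary.
X_pca = PCA(n_components=15).fit_transform(X)
fa = FactorAnalysis(n_components=12, random_state=0).fit(X)
X_fa = fa.transform(X)
loadings = fa.components_.T  # one row per feature, one column per factor

print(kaiser_k, k_85, X_pca.shape, X_fa.shape)
```

On random data the Kaiser count and the 85 % cut-off will not match the paper's figures; the point is only to show where each number comes from.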

Both reduced representations are fed into three widely used classifiers: Support Vector Machine (SVM), Random Forest (RF), and k‑Nearest Neighbors (k‑NN). Model performance is assessed through 10‑fold cross‑validation, reporting accuracy, precision, recall, F1‑score, and training time.
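The evaluation protocol above could be sketched as follows. The data are synthetic with a planted class signal, the classifier hyperparameters are scikit-learn defaults, and the reduction step is fitted inside each fold via a pipeline. The summary does not say whether the authors did the latter, so treat it as an assumption made here to avoid information leaking from the test folds.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_validate
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in with the class sizes from the summary (47 vs 25).
rng = np.random.default_rng(0)
y = np.array([0] * 47 + [1] * 25)
X = rng.standard_normal((72, 500))
X[y == 1, :20] += 1.5  # planted signal so the classes are separable

classifiers = {
    "SVM": SVC(),
    "RF": RandomForestClassifier(random_state=0),
    "k-NN": KNeighborsClassifier(),
}
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
scoring = ["accuracy", "precision", "recall", "f1"]

results = {}
for name, clf in classifiers.items():
    # Scaling and PCA are refit on the training part of every fold.
    pipe = make_pipeline(StandardScaler(), PCA(n_components=15), clf)
    scores = cross_validate(pipe, X, y, cv=cv, scoring=scoring)
    results[name] = {m: float(scores[f"test_{m}"].mean()) for m in scoring}
    results[name]["fit_time_s"] = float(scores["fit_time"].mean())

for name, row in results.items():
    print(name, {k: round(v, 3) for k, v in row.items()})
```

Swapping `PCA(n_components=15)` for `FactorAnalysis(n_components=12)` would give the corresponding FA pipeline under the same assumptions.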

Results

- Original high‑dimensional data: SVM achieves 89.5 % accuracy with an average training time of 12.4 seconds.

- PCA‑reduced data: SVM accuracy rises to 93.2 % and training time drops to 3.1 seconds. RF and k‑NN also show modest accuracy gains and a 70 %+ reduction in computation time.

- FA‑reduced data: SVM reaches 91.7 % accuracy with a 3.5‑second training time; RF and k‑NN exhibit similar trends.
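To put the SVM figures above on a common scale, the short calculation below derives the relative training-time reduction and the accuracy gain in percentage points. It uses only the numbers quoted in this summary; the helper name is illustrative.

```python
def relative_reduction(before, after):
    """Fraction by which `after` is smaller than `before`."""
    return (before - after) / before


# SVM figures quoted above: accuracy in percent, training time in seconds.
svm = {
    "original": {"accuracy": 89.5, "time": 12.4},
    "pca": {"accuracy": 93.2, "time": 3.1},
    "fa": {"accuracy": 91.7, "time": 3.5},
}

base = svm["original"]
summary = {}
for method in ("pca", "fa"):
    summary[method] = {
        "time_cut": relative_reduction(base["time"], svm[method]["time"]),
        "accuracy_gain_pts": svm[method]["accuracy"] - base["accuracy"],
    }

for method, row in summary.items():
    print(f"{method}: time cut {row['time_cut']:.1%}, "
          f"accuracy gain {row['accuracy_gain_pts']:.1f} points")
```

With these inputs the training time falls by 75 % for PCA and by about 72 % for FA, with accuracy gains of 3.7 and 2.2 percentage points respectively.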

The authors note that while PCA provides the highest predictive performance, its components are linear combinations of many genes, making biological interpretation difficult. In contrast, FA yields factor loading matrices that highlight groups of genes strongly associated with specific latent factors. For example, Factor 3 loads heavily on B‑cell markers (CD19, CD79A), offering a clear link to the ALL class. This interpretability advantage is valuable for hypothesis generation in biomedical research.
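The kind of loading inspection described above can be sketched on synthetic data in which five genes share one planted latent driver. The gene identifiers, the data, and the helper `top_genes` are hypothetical; this is not the authors' analysis and does not reproduce the CD19/CD79A finding.

```python
import numpy as np
from sklearn.decomposition import FactorAnalysis

rng = np.random.default_rng(0)
n_samples, n_genes = 72, 40
gene_names = [f"GENE_{i:03d}" for i in range(n_genes)]  # hypothetical names

# Synthetic data: genes 0-4 follow one shared latent variable, the rest are noise.
latent = rng.standard_normal(n_samples)
X = rng.standard_normal((n_samples, n_genes))
X[:, :5] = 3.0 * latent[:, None] + 0.5 * rng.standard_normal((n_samples, 5))
X = (X - X.mean(axis=0)) / X.std(axis=0)

fa = FactorAnalysis(n_components=3, random_state=0).fit(X)
loadings = fa.components_.T  # shape (n_genes, n_factors)


def top_genes(loadings, names, factor, k=5):
    """Return the k genes with the largest absolute loading on one factor."""
    order = np.argsort(-np.abs(loadings[:, factor]))[:k]
    return [(names[i], float(loadings[i, factor])) for i in order]


# Pick the factor with the largest sum of squared loadings and list its genes.
strongest = int(np.argmax((loadings ** 2).sum(axis=0)))
for name, weight in top_genes(loadings, gene_names, strongest):
    print(f"{name}\t{weight:+.2f}")
```

Ranking by absolute loading treats strongly negative and strongly positive associations alike. On real expression data a rotation step (for example varimax) is often applied before reading the loadings, which this sketch omits.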

Discussion and limitations

The study demonstrates that linear dimensionality reduction can substantially improve both classification accuracy and computational efficiency for high‑dimensional bio‑informatics data. However, the authors acknowledge several constraints: (1) reliance on linear methods may miss nonlinear relationships inherent in biological systems; (2) the sample size is modest, limiting statistical power and external validity; (3) no independent validation cohort was used.

Future directions

The paper suggests extending the work with nonlinear techniques such as t‑SNE, UMAP, and deep autoencoders to capture complex data structures. Integrating multi‑omics layers (e.g., transcriptomics, epigenomics, proteomics) and applying the dimensionality‑reduction pipeline to larger, clinically diverse cohorts are proposed to enhance generalizability and to uncover more robust biomarkers.

In conclusion, the authors provide a clear comparative analysis of PCA and FA for leukemia gene‑expression data, showing that both methods effectively reduce dimensionality, accelerate model training, and, in the case of FA, preserve biologically meaningful information. The findings support the routine inclusion of dimensionality‑reduction steps in bio‑informatics pipelines, with the choice of technique guided by the specific goals of prediction versus interpretability.
