The Role of Conversation Context for Sarcasm Detection in Online Interactions

Computational models for sarcasm detection have often relied on the content of utterances in isolation. However, speaker’s sarcastic intent is not always obvious without additional context. Focusing on social media discussions, we investigate two issues: (1) does modeling of conversation context help in sarcasm detection and (2) can we understand what part of conversation context triggered the sarcastic reply. To address the first issue, we investigate several types of Long Short-Term Memory (LSTM) networks that can model both the conversation context and the sarcastic response. We show that the conditional LSTM network (Rocktaschel et al., 2015) and LSTM networks with sentence level attention on context and response outperform the LSTM model that reads only the response. To address the second issue, we present a qualitative analysis of attention weights produced by the LSTM models with attention and discuss the results compared with human performance on the task.

💡 Research Summary

This paper investigates the importance of conversational context for detecting sarcasm in online interactions, focusing on two research questions: (1) does modeling the surrounding dialogue improve sarcasm detection performance, and (2) can we identify which parts of the context trigger a sarcastic reply. To answer these questions, the authors compile two distinct datasets: (a) a subset of the Internet Argument Corpus (Sarcasm Corpus V2) consisting of discussion‑forum posts, and (b) a collection of tweets labeled as sarcastic via hashtags such as #sarcasm or #irony and as non‑sarcastic via sentiment hashtags. The forum data contain longer, multi‑sentence posts and are annotated through crowdsourcing, whereas the Twitter data are shorter, often single‑sentence, and the sarcasm label is directly signaled by the author’s hashtag.

The experimental framework compares three families of models. The first baseline uses a Support Vector Machine (SVM) with handcrafted discrete features: n‑grams, LIWC‑based pragmatic cues, sentiment lexicon counts, and a set of sarcasm indicators (interjections, capitalization, intensifiers, etc.). Two SVM variants are evaluated—one that uses only the reply (SVM r) and another that concatenates the reply with its preceding context (SVM c+r). The second family consists of a plain Long Short‑Term Memory (LSTM) network that reads only the reply (LSTM r). The third family incorporates context through two neural architectures: (i) a conditional LSTM, where the context LSTM is processed first and its final cell state initializes the reply LSTM (as in Rocktäschel et al., 2015), and (ii) a sentence‑level attention LSTM that encodes each sentence as the average of its word embeddings, computes attention scores over sentences in both context and reply, and combines the attended vectors for classification. A hierarchical version that also attends over words is explored but found to overfit given the modest dataset sizes.

Training uses pre‑trained word embeddings (100‑dim skip‑gram vectors for Twitter, 300‑dim Google News vectors for forums) kept fixed, with dropout 0.5, L2 regularization, batch size 16, and an 80/10/10 train/validation/test split.

Results on the forum dataset show that the SVM baselines perform poorly, and adding context even hurts performance. All LSTM‑based models benefit from context. The conditional LSTM achieves the highest scores (F1 = 73.32 % for sarcastic class, 70.56 % for non‑sarcastic), improving over the reply‑only LSTM by 6 % and 3 % respectively. The sentence‑level attention LSTM also reaches competitive performance (F1 ≈ 73.7 % for sarcasm) and, crucially, provides interpretable attention weights. The hierarchical attention model underperforms (F1 ≈ 69.9 % for sarcasm), likely due to over‑parameterization. Similar trends appear in the Twitter experiments: models that ingest the preceding tweet outperform those that consider only the target tweet.

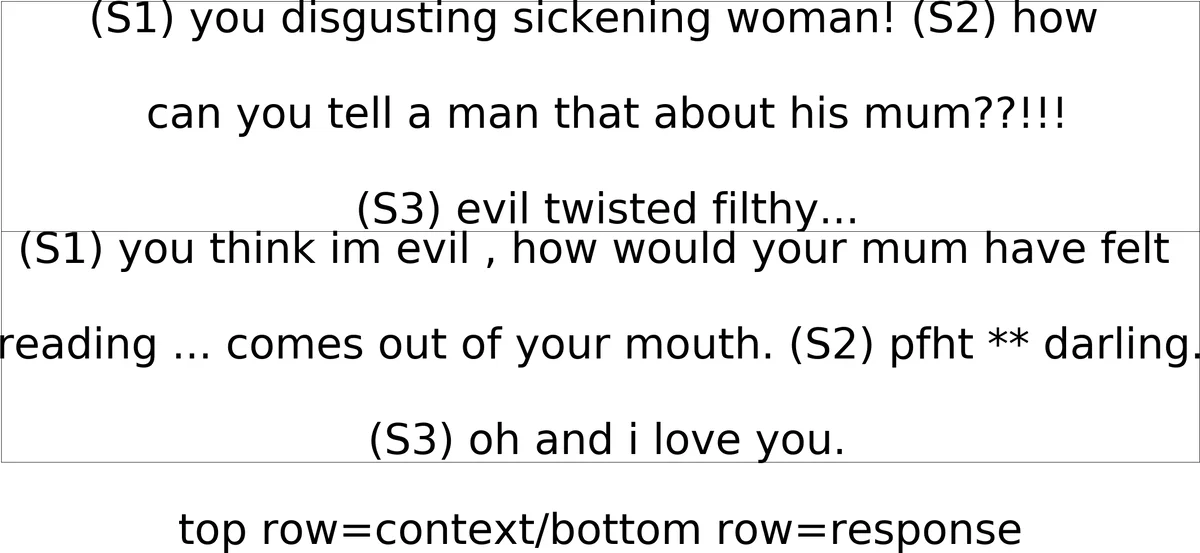

A qualitative analysis inspects the attention distributions. Human annotators typically rely on sentiment incongruity (positive wording in a negative situation) and lexical cues of irony. The attention‑based LSTM often assigns high weights to the very sentences humans deem pivotal, such as those containing rhetorical questions or explicit sarcasm markers (“Are you kidding me?!”). However, occasional mis‑alignments are observed, where the model over‑focuses on intensifiers or capitalized words, leading to false positives. This analysis demonstrates that attention mechanisms can surface plausible explanatory evidence but are not a full substitute for human judgment.

In summary, the paper makes three key contributions: (1) empirical evidence that conversational context substantially boosts sarcasm detection across heterogeneous social media platforms; (2) demonstration that conditional encoding and sentence‑level attention are effective neural strategies for integrating context; and (3) a methodology for interpreting model decisions via attention, with a comparison to human performance. The authors release their code and datasets, encouraging further work on multi‑turn dialogue, larger pre‑trained language models, and multimodal sarcasm detection.

Comments & Academic Discussion

Loading comments...

Leave a Comment