LIG-CRIStAL System for the WMT17 Automatic Post-Editing Task

This paper presents the LIG-CRIStAL submission to the shared Automatic Post- Editing task of WMT 2017. We propose two neural post-editing models: a monosource model with a task-specific attention mechanism, which performs particularly well in a low-resource scenario; and a chained architecture which makes use of the source sentence to provide extra context. This latter architecture manages to slightly improve our results when more training data is available. We present and discuss our results on two datasets (en-de and de-en) that are made available for the task.

💡 Research Summary

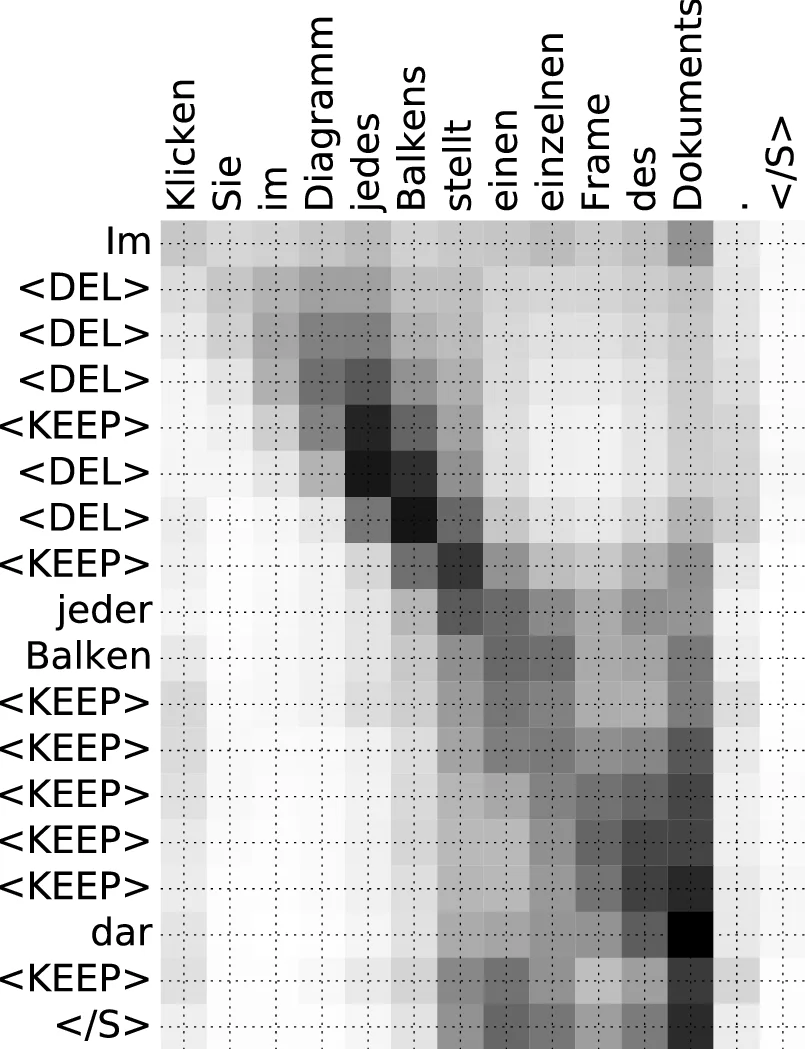

This paper describes the LIG‑CRIStAL participation in the WMT 2017 Automatic Post‑Editing (APE) shared task. The authors propose two neural architectures that operate on edit‑operation sequences rather than on raw words. The first model is a mono‑source system that receives only the machine‑translated (MT) output and predicts a sequence of edit actions—KEEP, DEL, INS(word) and EOS—using a forced (hard) attention mechanism. The forced attention aligns each decoder step t with the i‑th input token, where i is defined as the number of KEEP and DEL tokens already emitted plus one. This deterministic alignment eliminates the need for a learned soft alignment and makes the model especially robust when training data are scarce.

The second architecture is a chained model that incorporates the source sentence (SRC). It consists of two encoder‑decoder components. The first component (SRC→MT) is a conventional NMT model with global soft attention; its attention vectors cᵢ summarize which parts of the source contributed to each MT token. The second component (MT→OP) is the same forced‑attention edit‑operation predictor used in the mono‑source model, but it also receives the cᵢ vectors from the first component as additional context. Both components are trained jointly by minimizing the sum of the translation loss (SRC→MT) and the post‑editing loss (MT→OP).

To train and evaluate the systems, the authors use two language pairs: English→German (IT domain) and German→English (medical domain). For each pair they have a small “real” APE corpus (12 k or 23 k sentence triples) and a larger synthetic corpus (500 k for EN‑DE, up to 4 M for EN‑DE, and a self‑generated 10 M for DE‑EN). Unlike previous work, they avoid large external parallel corpora; instead they create synthetic data by translating a monolingual English corpus (Common Crawl) into SRC and MT using small NMT models trained only on the APE data. This approach is more realistic for industrial settings where massive parallel data are unavailable, but it results in a limited vocabulary on the synthetic side.

Model configurations are modest: bidirectional LSTM encoders of size 128, 128‑dimensional word embeddings, teacher forcing during training, a max‑out layer before the final projection, and pure SGD with an initial learning rate of 1.0. Learning‑rate decay is applied per epoch (0.8) for models trained on real data and more aggressively (0.5 per half‑epoch) for models that also use synthetic data. No sub‑word segmentation is employed, because the edit‑operation vocabulary already includes a large number of insertion tokens.

Results on the English→German task show that the forced‑attention mono‑source model outperforms both the raw MT baseline and a statistical post‑editing (SPE) baseline, achieving TER reductions of about 2–3 points over the MT baseline when trained on 12 k or 23 k real triples. The global‑attention baseline (Libovický et al., 2016) performs worse, confirming that the hard alignment is advantageous in low‑resource conditions. When the synthetic 500 k corpus is added, the chained model yields a modest further TER improvement (≈ 0.5–1.0 points) and a slight BLEU increase, demonstrating that source‑side context can be beneficial when more data are available. However, even the best LIG‑CRIStAL system remains behind the top‑ranked systems that exploit massive parallel data and larger word‑based models.

For the German→English direction, the proposed models largely degenerate to emitting KEEP tokens only, resulting in post‑edited outputs that are almost identical to the MT input. Consequently, TER and BLEU scores are essentially unchanged from the baseline. The authors attribute this to the higher baseline quality (BLEU ≈ 70) and the morphological richness of German, which leaves little room for simple edit operations to improve the translation.

The paper discusses several limitations. First, the edit‑operation distribution is heavily skewed toward KEEP (≈ 70 % of tokens), causing the models to favor a “do‑nothing” strategy. This class imbalance hampers learning of DEL and INS actions, especially when training data are limited. Second, the edit‑operation sequences are generated automatically via a shortest‑path algorithm, which may not reflect the actual sequence of actions a human post‑editor would take. Third, the models operate at the word level, whereas many post‑editing corrections involve character‑level changes (e.g., fixing a typo without deleting the whole word). The authors suggest future work on weighted loss functions or multi‑task training to mitigate class imbalance, collecting finer‑grained human editing logs (keystrokes, mouse clicks) to obtain more realistic edit sequences, and exploring character‑level models despite the increased computational cost.

In summary, the LIG‑CRIStAL contribution demonstrates that a hard‑aligned edit‑operation predictor can achieve solid gains in low‑resource APE scenarios, and that incorporating source‑side attention via a chained architecture can provide incremental benefits when larger synthetic corpora are available. Nonetheless, challenges remain in handling class imbalance, realistic edit‑sequence modeling, and extending the approach to morphologically complex languages or character‑level editing. The paper offers a clear analysis of these issues and outlines concrete directions for future research.

Comments & Academic Discussion

Loading comments...

Leave a Comment