Oseba: Optimization for Selective Bulk Analysis in Big Data Processing

Selective bulk analyses, such as statistical learning on temporal/spatial data, are fundamental to a wide range of contemporary data analysis. However, with increasingly large datasets, such as weather data and marketing transactions, data organization and access become more challenging in selective bulk data processing with current big data processing frameworks such as Spark or key-value stores. In this paper, we propose a method, referred to as Oseba, to optimize selective bulk analysis in big data processing. Oseba maintains a super index for the data organization in memory to support fast lookup by targeting the data involved in each selective analysis program. Oseba is able to save memory as well as computation in comparison to the default data processing frameworks.

💡 Research Summary

The paper addresses a fundamental inefficiency in modern in‑memory big‑data frameworks such as Apache Spark when they are used for “selective bulk analysis” – analytical tasks that only need a subset of the data, for example a specific time window or spatial region. Typical Spark programs apply transformations (e.g., filter, map, reduce) to every RDD partition, even when the analysis only concerns a few partitions. This leads to unnecessary scanning of all partitions, creation of intermediate filter‑RDDs, and consequently large memory footprints and long execution times.

To solve this, the authors propose Oseba, a content‑aware indexing mechanism that enables Spark to directly locate and load only the partitions that actually contain the required data. Oseba consists of two complementary designs. The first is a simple metadata table that records, for each partition, its identifier and the data range it holds (e.g., start and end timestamps). With this table, a binary search can quickly identify the partitions covering a user‑specified range. However, for m partitions the table occupies O(m) space and each lookup costs O(log m), so both the metadata footprint and the search overhead grow with the number of partitions and can become significant at very large scales.
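The metadata-table design can be illustrated with a minimal sketch in plain Python. This is not the authors' implementation: the names `PartitionRange`, `RangeTable`, and `lookup` are hypothetical, timestamps are modeled as integers, and the sketch assumes that partition ranges are sorted and do not overlap.

```python
from bisect import bisect_left, bisect_right
from typing import Iterable, List, NamedTuple


class PartitionRange(NamedTuple):
    """One row of the (hypothetical) metadata table."""
    partition_id: int
    start: int  # first timestamp stored in the partition (inclusive)
    end: int    # last timestamp stored in the partition (inclusive)


class RangeTable:
    """O(m) metadata table with O(log m) range lookup via binary search.

    Assumes the partition ranges do not overlap one another.
    """

    def __init__(self, ranges: Iterable[PartitionRange]) -> None:
        self._ranges = sorted(ranges, key=lambda r: r.start)
        # Precomputed key arrays so each lookup is two binary searches.
        self._starts = [r.start for r in self._ranges]
        self._ends = [r.end for r in self._ranges]

    def lookup(self, lo: int, hi: int) -> List[int]:
        """Return IDs of partitions whose range overlaps [lo, hi]."""
        if lo > hi:
            return []
        first = bisect_left(self._ends, lo)     # first partition ending at or after lo
        last = bisect_right(self._starts, hi)   # one past the last partition starting at or before hi
        return [r.partition_id for r in self._ranges[first:last]]


# 15 partitions, each covering 100 consecutive timestamps.
table = RangeTable(PartitionRange(i, i * 100, i * 100 + 99) for i in range(15))
selected = table.lookup(250, 420)
```

The two binary searches locate the boundaries of the overlapping run of partitions, so the lookup cost itself is logarithmic in the number of partitions, while the table still stores one row per partition.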

The second, more sophisticated design is the Compressed Index with Associated Search List (CIAS). CIAS exploits two observations common to many time‑series or spatial datasets: (1) Spark partitions are usually of uniform size (e.g., 64 MB), and (2) the data points are evenly spaced in the time dimension. By mathematically modeling the relationship between partition IDs and their data ranges, CIAS stores a compact “compressed index” (essentially a formula or a small set of parameters) together with an “associated search list” that enumerates the exact partition IDs needed for any given range. This representation makes the metadata size independent of the number of partitions, while still allowing the relevant IDs to be computed in roughly constant time.
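The idea of replacing a per-partition table with a formula can be sketched as follows. This is only an illustration of the two stated observations, not the actual CIAS structure, whose details are not given here. The class `UniformIndex` and its fields (`t0`, `step`, `records_per_partition`, `num_partitions`) are assumptions made for the example, and timestamps are modeled as integers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UniformIndex:
    """Constant-size index for evenly spaced records in equal-size partitions.

    Hypothetical parameters: record k has timestamp t0 + k * step, and
    partition p holds records p * records_per_partition up to
    (p + 1) * records_per_partition - 1.
    """
    t0: int
    step: int
    records_per_partition: int
    num_partitions: int

    def lookup(self, lo: int, hi: int) -> range:
        """Return the partition IDs holding records with lo <= timestamp <= hi."""
        if lo > hi:
            return range(0)
        total_records = self.records_per_partition * self.num_partitions
        # Index of the first record at or after lo (ceiling division), clamped to 0.
        first_rec = max(0, -(-(lo - self.t0) // self.step))
        # Index of the last record at or before hi, clamped to the dataset end.
        last_rec = min((hi - self.t0) // self.step, total_records - 1)
        if first_rec > last_rec:
            return range(0)
        first_part = first_rec // self.records_per_partition
        last_part = last_rec // self.records_per_partition
        return range(first_part, last_part + 1)


# 15 partitions of 10 records each, one record every 10 time units.
index = UniformIndex(t0=0, step=10, records_per_partition=10, num_partitions=15)
selected = list(index.lookup(250, 420))
```

The index stores four numbers regardless of how many partitions exist, and a lookup is a handful of integer operations. The trade-off is that the formula is only valid while both uniformity assumptions hold.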

In practice, Oseba integrates CIAS into Spark’s RDD metadata. When a user issues a filter operation for a particular period, Oseba consults the CIAS, determines the exact partitions to read, and bypasses the full‑cluster scan. No intermediate filter‑RDDs are materialized, so memory consumption stays low.
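The difference between the two workflows can be sketched with a small pure-Python simulation. It does not use Spark: partitions are modeled as in-memory lists, and the function names `full_scan` and `indexed_scan` are hypothetical. The list of selected partition IDs is assumed to come from an index lookup such as the ones described above, and the population standard deviation is an arbitrary choice for the example.

```python
from statistics import mean, pstdev
from typing import Dict, List, Sequence, Tuple

Record = Tuple[int, float]  # (timestamp, temperature)


def summarize(temps: Sequence[float]) -> Dict[str, float]:
    """Compute the three statistics; requires at least one value."""
    return {"max": max(temps), "mean": mean(temps), "std": pstdev(temps)}


def full_scan(partitions: List[List[Record]], lo: int, hi: int):
    """Default-style workflow: filter every partition, then summarize."""
    # The filtered copy plays the role of an intermediate filter result.
    filtered = [[r for r in part if lo <= r[0] <= hi] for part in partitions]
    temps = [temp for part in filtered for _, temp in part]
    return summarize(temps), len(partitions)


def indexed_scan(partitions: List[List[Record]], ids: Sequence[int], lo: int, hi: int):
    """Index-directed workflow: touch only the partitions named by the index."""
    temps = [temp for pid in ids for ts, temp in partitions[pid] if lo <= ts <= hi]
    return summarize(temps), len(ids)


# Synthetic data: 15 partitions, 10 records each, one record every 10 time units.
partitions = [
    [(p * 100 + i * 10, float((p * 100 + i * 10) % 7)) for i in range(10)]
    for p in range(15)
]
default_stats, default_touched = full_scan(partitions, 250, 420)
oseba_stats, oseba_touched = indexed_scan(partitions, [2, 3, 4], 250, 420)
```

Both functions return the same statistics, but the index-directed version reads 3 partitions instead of 15 and builds no per-partition filtered copy.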

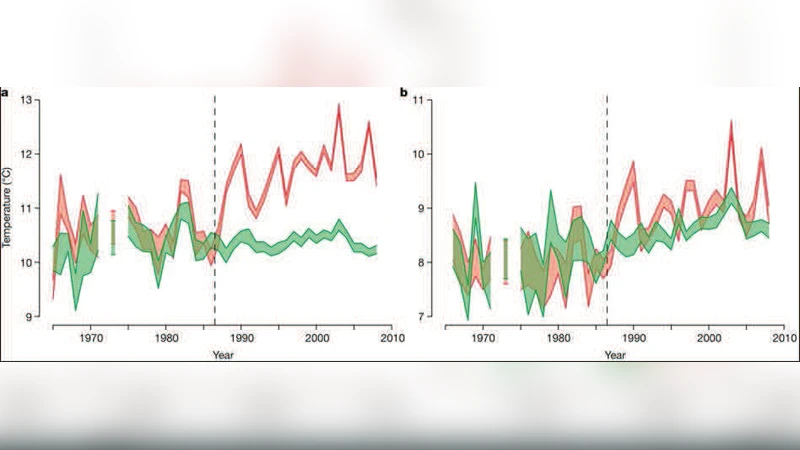

The authors evaluate Oseba on the Marmot cluster (128 nodes, 256 cores) using Spark 1.0.2. They process a 480 MB climate time‑series dataset split into 15 partitions and perform five selective analyses on distinct periods, each computing max, mean, and standard deviation of temperature. Compared with the default Spark workflow, Oseba reduces peak memory usage from roughly 1.8 GB (≈3.8× the raw data size) to a level that remains almost constant throughout the experiment. Execution time also improves: the total runtime drops from over 120 seconds to about 70 seconds, a reduction of more than 40%. The first analysis shows only a modest gain (since the data are still in memory), but subsequent analyses benefit increasingly as the default approach continues to allocate new filter‑RDDs.

Related work is surveyed, including Hadoop/HDFS, Dryad, Pregel, Piccolo, and various key‑value stores. While these systems support large‑scale processing, they either operate on the whole dataset or require fine‑grained item access that incurs additional reliability overhead. Oseba, by contrast, retains Spark’s coarse‑grained, in‑memory processing model while adding a lightweight, content‑aware layer that makes selective bulk analysis efficient.

In conclusion, Oseba demonstrates that maintaining lightweight, range‑based metadata about partitions can dramatically cut both memory overhead and execution time for selective bulk analyses in Spark. The approach is especially suitable for datasets with uniform partition sizes and regular temporal or spatial intervals, which covers many real‑world time‑series and geospatial workloads. Future directions include extending CIAS to handle variable‑size partitions, non‑uniform data distributions, and multi‑dimensional query predicates, as well as building automated metadata management services to generate and update CIAS structures transparently.
