Probabilistic Graphical Models on Multi-Core CPUs using Java 8

In this paper, we discuss software design issues related to the development of parallel computational intelligence algorithms on multi-core CPUs, using the new Java 8 functional programming features. In particular, we focus on probabilistic graphical…

Authors: Andres R. Masegosa, Ana M. Martinez, Hanen Borchani

Probabilistic Graphical Mo dels on Multi-Core CPUs using Ja v a 8 Andr ´ es R. Masegosa ∗ , Departmen t of Computer and Information Science, Norw egian Univ ersity of Science and T ec hnology , T rondheim, Norwa y Ana M. Mart ´ ınez and Hanen Borc hani , Departmen t of Computer Science, Aalb org Universit y , Denmark April 28, 2016 Abstract In this pap er, w e discuss soft ware design issues related to the dev elopmen t of parallel computa- tional in telligence algorithms on multi-core CPUs, using the new Jav a 8 functional programming features. In particular, we fo cus on probabilistic graphical mo dels (PGMs) and present the paral- lelisation of a collection of algorithms that deal with inference and learning of PGMs from data. Namely , maximum lik eliho od estimation, imp ortance sampling, and greedy searc h for solving com binatorial optimisation problems. Through these concrete examples, we tac kle the problem of defining efficient data structures for PGMs and parallel processing of same-size batc hes of data sets using Ja v a 8 features. W e also provide straightforw ard tec hniques to code parallel algorithms that seamlessly exploit m ulti-core processors. The experimental analysis, carried out using our op en source AMIDST (Analysis of MassIve Data ST reams) Jav a to olbox, shows the merits of the prop osed solutions. 1 In tro duction In the last decade, the chip-man ufacturing industry has relied on m ulti-core arc hitectures to b oost the p erformance of computing pro cessors. Previously , improv emen ts of computer pow er were driven b y increasing the op erating frequency of the chips. Ho wev er, this frequency scaling strategy started to produce diminishing gains due to several factors. F or example, the pow er consumption exponen- tially increases with each factorial increase of the op erating frequency (that is, simply b ecause p ow er is consumed by the CMOS circuits every time they c hange their state). This problem cannot b e mitigated b y shrinking the comp onents of the processors due to leak age problems [1, 2]. As a result ∗ Corresponding author: Andr ´ es R. Masegosa (Email: andres.masegosa@idi.ntn u.no). 1 of using sev eral pro cessing cores on a c hip, industry was able to multiply the computing p erformance of the pro cessors while k eeping the clock frequency fixed. Computational in telligence metho ds hav e b een strongly influenced by the emergence of parallel computing architectures. The literature on distributed and parallel algorithms has explo ded in the last years [3 – 8]. Consequen tly , computational in telligence softw are libraries, especially in the field of “Big data”, ha ve emerged to help developers to lev erage the computational p ow er of systems with m ultiple pro cessing units [9, 10]. Classic scientific programming libraries lik e R or Matlab ha v e also tried to adapt to m ulti-core CPUs or computer clusters b y providing sp ecialised libraries [11–13]. Ho wev er, programming computational intelligence metho ds on parallel architectures is not an easy task yet. Apart from the latter dev elopments, there are man y other parallel programming languages suc h as Orca [14], Occam ABCL [15], SNO W [16], MPI [17], P ARLOG [18], and Scala [19]. Nev- ertheless, at the time of writing, none of them seems to be widely accepted b y the computational in telligence communit y . F or example, if we take a lo ok at some reference ven ues suc h as the JMLR Soft ware track 1 , IEEE F uzzy Softw are for Soft Computing series [20], and the Journal of Statistical Soft ware 2 , the v ast ma jority of the prop osed soft w are libraries do not rely on an y of these paral- lel programming languages [ ? ]. Moreo v er, only a few released libraries con tain implementations of computational in telligence techniques that are able to exploit m ulti-core CPUs [21–24]. Enterprise- supp orted scien tific computing libraries constitute an exception [12], b ecause in general programming concurren t and parallel applications is tough, time consuming, error prone, and requires sp ecific programming skills that ma y b e b ey ond the expertise of C. I. researchers. The latest Jav a release (JDK 8.0, March 2014) is an attempt to bring a new API to code trans- paren t and seamless parallel applications to the general public (that is, non-sp ecialized programmers in parallel and concurrent methods) [25]. Note that, from the v ery b eginning, Jav a architects tried to address the problem of programming parallel and concurrent applications. Jav a 1.0 had threads and lo c ks, Jav a 5 included thread p ools and concurren t collections, while Ja v a 7 added the fork/join framew ork. All these functionalities made parallel programming more practical, yet not trivial to accomplish [26]. T o handle this, Ja v a 8 introduces a completely new approach to parallelism based on a functional programming st yle [25] through the use of map-reduce op erations [27]. Although map-reduce op erations existed already as higher order functions in the programming literature, they only started to be widely used with the rise of parallel pro cessing [27]. Roughly sp eaking, map-reduce op erations are usually emplo y ed for parallel pro cessing of collections of elements ( Stream ob jects in Ja v a 8). The map op eration applies a stateless user-defined transformer function (mapp er) to eac h elemen t of the collection, whereas the reduce op eration applies another stateless user-defined func- tion (reducer) that combines the outputs of the mapp er to produce a single output result. Both the 1 http://www.jmlr.org/mloss/ 2 http://www.jstatsoft.org/ 2 mapp er and the reducer can be concisely describ ed using lambda expressions in Jav a 8. The stateless nature of the mapper and the reducer allo ws to safely parallelise their application ov er the different elemen ts of the collection. Note that the term MapReduce commonly refers to tw o different concepts: 1) the Go ogle priv ate parallel engine (out of the scope of this paper); and 2) the MapReduce pro- gramming paradigm, which corresp onds to the description ab o ve and commonly deals with problems suc h as distributed data or serialization [27]. In this work we consider the map-reduce concept from a functional programming p erspective. As we fo cus on m ulti-core instances we do not need to deal with issues related to distributed systems. Man y experts argue that the introduction of these new functional programming features (that is, lambda expressions, map-reduce operations, and streams) is, in man y wa ys, the most profound c hange to Ja v a during the last few years [25, 26]. As a concrete example of the potential of the new Ja v a 8 API, let us assume that we hav e a collection of do cumen ts stored in a List ob ject, called do cs, and w e w ant to pro duce, exploiting a multi-core CPU, a Map ob ject containing all distinct w ords along with the num be r of o ccurrences of e ac h word in the different do cumen ts. As shown in Algorithm 1, this task can b e done using just three lines of code in Jav a 8. On the opposite, using the previous Jav a versions (or many other programming languages), co ding this task would require dozens of lines of co de as well as an explicit and complex management of multiple threads and concurrency . Nevertheless, using Ja v a 8 presen ts the challenge of dealing with a new API and a new programming syntax that requires a learning curve. The syntax in Program 1 includes lambda expressions (such as s -> s ) and map ( flatMap ) and reduce ( collect ) op erations that will b e later defined in Section 2.1. Readers can also find more details ab out the basics of Ja v a 8 and more adv anced functionalities in [26]. Program 1 - W ord o ccurrences in do cumen ts Map wordToCount = docs.parallelStream() .flatMap(doc -> Stream.of(doc.split( "\\s+" ))) .collect(Collectors.toMap(s -> s, s -> 1, Integer::sum)); Moreo ver, readers that are familiar with big data platforms such as Apache Spark 3 [28] or Apache Flink 4 [51] will notice the syn tax similarities b etw een these tw o platforms and Jav a 8. This indeed ma y facilitate knowledge transfer among the men tioned platforms. How ever, it is imp ortan t to note that their purposes are differen t. In fact, Jav a 8 streams target m ulti-core platforms, whereas Spark and Flink are intended to be used for distributed data processing on m ulti-no de clusters. Spark and Flink could also b e used for multi-core platforms but the main problem is that they may in- tro duce o v erheads, whic h consequently discourages their use in suc h settings, as will be empirically 3 Apache Spark: http://spark.apache.org 4 Apache Flink: https://flink.apache.org 3 demonstrated in Section 6.2. It is also w orth highligh ting that Ja v a is quite a mature programming language (almost 20 y ears since the first release), widely used (around 3 billions devices), and has a large communit y (around 9 millions dev elop ers). Although Flink and Spark show ed recen tly a great p oten tial, Ja v a 8 still en tails a m uc h more easy-to-use and robust solution for parallel processing in a m ulti-core en vironment. In addition, luckily , all these platforms can b e used together since Jav a 8 can b e incorp orated on top of Spark or Flink to reduce potential ov erheads in terms of multi-core parallelisation 5 . Probabilistic graphical models (PGMs) [29 – 32] hav e been state-of-the-art to ols for reasoning under uncertain ty in the last t wo decades. They hav e been successfully used in many real-w orld problems in volving high n umber of v ariables with complex dep endency structures. Application examples include text classification, genomics, automatic robot control, fault diagnosis, etc. (see [33] for an extensiv e review on real applications). Mean while, a large set of sp ecialised libraries on PGMs based on sev eral programming languages 6 ha ve also emerged in recent years from man y differen t univ ersities. Ho wev er, to the b est of our knowledge, none of them provides explicit and general supp ort for parallel/concurren t processing. In our opinion, the main reasons for this absence are the relatively recen t interest in concurren t/parallel algorithms as well as the complexit y of coding such algorithms. In this paper we presen t some parts of the design of a softw are to ol for PGMs and show how this design lev erages some of the new Jav a 8 features for dev eloping easy-to-co de parallel algorithms on m ulti-core CPUs. In particular, we discuss the design of a collection of algorithms that are mainly related to the scalable represen tation, inference, and learning of PGMs from data. It is imp ortan t here to highligh t that the discussed soft ware design solutions are generic and not restricted to the scop e of PGMs, since they could b e applied to other computational in telligence softw are libraries. Moreo ver, these solutions hav e b een adopted in the recen t developmen t of our AMIDST (Analysis of MassIv e Data ST reams) Ja v a toolb ox 7 . It is imp ortant to clarify that the aim of this paper is not to demonstrate that our AMIDST pack age is more efficien t than other a v ailable PGM soft wares, but rather to show ho w con venien t it is to use Jav a 8 for coding algorithms running on m ulti-core CPUs. More sp ecifically , this paper aims to help other dev elop ers to understand the new Ja v a 8 features and to reduce the learning curve by sho wing how Ja v a 8 can solv e concrete issues that arise when co ding parallel/concurren t computational in telligence algorithms. The remainder of this pap er is organised as follows: In Section 2 we in tro duce the main concepts related to both Ja v a 8 and PGMs. Section 3 describes some issues related to the design of data structures that supp ort functional programming style while Section 4 examines the parallelisation of 5 Last releases of Apache Flink and Apache Spark start to partially support Jav a 8. 6 See http://www.cs.ubc.ca/ ~ murphyk/Software/bnsoft.html for a non-exhaustiv e list referencing around sevent y different PGM libraries. 7 AMIDST is an op en source to olbox written in Ja v a and is av ailable at http://amidst.github.io/toolbox/ under the Apache Software License 2.0. 4 algorithms for p erforming maxim um likelihoo d estimation, Mon te Carlo inference, and greedy searc h. Next, Section 5 discusses the parallel pro cessing of data sets. Finally , w e present a brief experimental ev aluation showing the adv an tages of using the prop osed design solutions for parallel computation in Section 6 and we conclude in Section 7. All the Jav a source codes of the provided examples can b e do wnloaded from the AMIDST to olb o x website http://amidst.github.io/toolbox/ . 2 Bac kground In this section, we start b y briefly defining the main features related to the functional programming st yle in Ja v a 8. Afterwards, w e introduce Ba yesian net work models. 2.1 F unctional programming style in Ja v a 8 Ja v a 8 in tro duces a new w ay of programming based on metho d references, lam b da expressions, streams, and parallel streams. In what follows, we provide a brief description of these different concepts and illustrate them with running examples. W e also refer the readers to [26] for a deep revision of all these concepts. Consider that w e w ant to determine the num b er of ev en and odd n um b ers in a given list of in tegers. Using prior v ersions of Ja v a, w e might consider to create a class MyIntegerUtils (see Program 2.1). This class defines an inner in terface and three static methods, namely , isEven , isOdd , that tak e an input integer, and filterNumberList , that tak es as inputs a list of integers and a predicate. This predicate might b e later defined as an anonymous class implementing the interface NbrPredicate , as sho wn in Program 2.2. In the follo wing subsections, we will describe how Ja v a 8, using its functional programming fea- tures, gets rid of all this v erbose code and pro vides instead a muc h simpler and shorter syn tax. 2.1.1 Metho d references Metho d r efer enc es allow us to use an a v ailable method of a class and pass it directly as an argument to another metho d through the :: syntax. Th us, the abov e example can be then simplified using the metho d references ::isEven and ::isOdd as shown in Program 2.3. By using metho d references, there is not a need to create an y anonymous classes as previously done in Program 2.2. In this example, the in terface NbrPredicate defines ho w the passed metho ds should look like. That is, it states that the passed metho ds accept an integer as a unique input argumen t and return a b oolean v alue. Both metho ds isEven and isOdd from the MyIntegerUtils class fulfil this condition. 5 Program 2.1 - A class to determine the n um b er of even and o dd num b ers in a list of integers (using older versions of Jav a) public class MyIntegerUtils { public interface NbrPredicate{ boolean test(Integer integer); } public static boolean isEven(Integer i){ return i % 2 == 0; } public static boolean isOdd(Integer i){ return i % 2 == 1; } public static int filterList(List input, NbrPredicate predicate){ int count = 0; for (Integer i : input){ if (predicate.test(i)) count++; } return count; } } Program 2.2 - Anonymous classes implementing the in terface NbrPredicate and an example of use public static void main (String[] args){ NbrPredicate preEven = new NbrPredicate(){ @Override public boolean test(Integer integer){ return MyIntegerUtils.isEven(integer);} } NbrPredicate preOdd = new NbrPredicate(){ @Override public boolean test(Integer integer){ return MyIntegerUtils.isOdd(integer);} } List list= Arrays.asList(0, 3, 5, 6, 8, 10, 12, 15); System.out.println( "The count of even numbers is " + MyIntegerUtils.filterList(list, preEven)); System.out.println( "The count of odd numbers is " + MyIntegerUtils.filterList(list, preOdd)); } 6 Program 2.3 - Use of method references in Jav a 8 List list= Arrays.asList(0,3,5,6,8,10,12,15); System.out.println( "The count of even numbers is" + MyIntegerUtils.filterList(list, MyIntegerUtils::isEven)); System.out.println( "The count of odd numbers is" + MyIntegerUtils.filterList(list, MyIntegerUtils::isOdd)); 2.1.2 Lam b da expressions As an alternativ e to pass method references, Jav a 8 allows a richer use of functions as v alues b y including lambda expressions (or anon ymous functions). An example of a lam b da expression could b e (int n) -> n + 1 and it refers to “a function, that when called with the integer argumen t n , returns the v alue n+1 ”. Although this kind of functionality can of course be obtained b y defining new metho ds and classes, in many cases it allows for concise statemen ts whic h mak e the co de muc h more simple and re adable. Program 2.4 sho ws ho w our previous example lo oks like when using lam b da expressions suc h as i -> i % 2 == 0 , whic h defines a function that when called with the in teger argument i , returns true if the num b er is even or false otherwise. As can be seen, with this simple co de w e can get rid of of the previously defined statics metho ds isEven and isOdd of the MyIntgerUtils class. Let us note that b oth lam b da expressions in Program 2.4 are consistent with the NbrPredicate in terface. Otherwise, we would get a compilation error (w e also ha ve type safet y for lam b da expres- sions). Program 2.4 - Use of lam b da expressions in Ja v a 8 List list= Arrays.asList(0,3,5,6,8,10,12,15); System.out.println( "The count of even numbers is" + MyIntegerUtils.filterList(list, i -> i % 2 == 0)); System.out.println( "The count of odd numbers is" + MyIntegerUtils.filterList(list, i -> i % 2 == 1)); 2.1.3 Streams, intermediate and terminal op erations A java.util.Stream represen ts a sequence of elements on whic h one or more op erations can b e p er- formed. These op erations are defined by the corresp onding op erators which are either: interme diate , that return a new transformed stream (m ultiple intermediate operators can b e sequen tially applied suc h as filter , map , skip , and limit ), or terminal , that end the pro cess of the sequence of elements b y applying some final op eration (for example forEach , findFirst , collect , and count ). In terms of map-r e duc e operations, in termediate operations can b e regarded as map op erations whereas terminal op erations can b e matc hed to r e duc e op erations. 7 In addition, all the Ja v a collections (lists, sets, maps, etc.) contain the metho d stream() that returns a java.util.Stream ob ject cov ering all the elemen ts of the collection. Using Ja v a 8 Streams, the example ab o ve can be rewritten in a muc h more concise and readable manner, as shown in Program 3. Program 3 uses the intermediate op eration filter that accepts a predicate (a function that tak es a giv en ob ject and returns a true/false v alue) and produces a new stream ob ject whic h only co vers those elements that satisfy the given predicate (it discards those elemen ts whic h do not satisfy the predicate). In this particular example, the predicate is defined b y the lam b da expressions. Next, the terminal op eration count() is called on the result to return the num b er of elemen ts in the filtered stream. As can be seen, using streams and lambda expressions, we can replace with t wo lines of co de the following elemen ts: one class, one in terface, three static metho ds, and tw o anonymous classes. Program 3 - Use of streams in Jav a 8 List list= Arrays.asList(0,3,5,6,8,10,12,15); System.out.println( "The count of even numbers is" + list.stream().filter(i -> i % 2 == 0).count()); System.out.println( "The count of odd numbers is" + list.stream().filter(i -> i % 2 == 1).count()); 2.1.4 P arallel Streams Let us imagine that our running example inv olves pro cessing a very large sequence of n um b ers. It is straigh tforward to see that this problem can benefit from parallel pro cessing on m ulti-core processors: the problem can b e independently divided in to sub-problems (that is, coun ting even/odd num b ers for non-o verlapping sub-c hains) whic h can be solv ed simultaneously (in parallel, such that each sub-c hain is iterated in a separate thread/core), and finally combining the differen t sub-solutions to obtain a global solution (summing up all the partial coun ts). Ja v a 8 enables co ding of this parallel task in a trivial and effortless manner because all the stream op erators can either b e applied sequentially (as describ ed ab o v e, on a single thread) or in parallel (where multipl e threads are used). Parallel streams are inv ok ed using the parallelStream() metho d, presen t in all the Jav a collections. Program 4 sho ws a running example of using parallel streams in Ja v a 8. There is not a need to mention that co ding this parallel operation using older Ja v a versions migh t require a far more complex, hard to read and debug, Jav a code. Program 4 - Use of parallel streams in Ja v a 8 List list = Arrays.asList(0,3,5,6,8,10,12,15); System.out.println( "The count of even numbers is" + list.parallelStream().filter(i -> i % 2 == 0).count()); System.out.println( "The count of odd numbers is" + list.parallelStream().filter(i -> i % 2 == 1).count()); 8 2.2 Ba y esian netw orks Ba yesian net works (BNs) [29–31] are widely used PGMs for reasoning under uncertain t y . A B N defined o ver a set of random v ariables X = { X 1 , . . . , X n } can b e graphically represen ted b y a directed acyclic graph (DA G). Each no de, labelled X i in the example graph depicted in Fig. 1, is asso ciated with a factor or conditional probability table p ( X i | P a ( X i )), where P a ( X i ) are the paren ts of X i in the graph. A BN representation defines a joint pr ob ability distribution p ( X ) ov er the v ariables in volv ed which factorizes, according to the chain rule, as follows p ( X ) = n Y i =1 p ( X i | P a ( X i )) . Fig. 1 sho ws an example of a BN mo del o ver five v ariables. A conditional probability dis- tribution is associated with each no de in the netw ork describing its conditional probabilit y distri- bution given its parents in the netw ork, and the join t distribution factorises as p ( X 1 , . . . , X 5 ) = p ( X 1 ) p ( X 2 | X 1 ) p ( X 3 | X 1 ) p ( X 4 | X 2 , X 3 ) p ( X 5 | X 3 ) . X 1 X 2 X 3 X 4 X 5 Figure 1: Example of a BN mo del with fiv e v ariables. A BN is called hybrid if some of its v ariables are discrete while others are contin uous. In the AMIDST modelling framework, we sp ecifically consider c onditional line ar Gaussian (CLG) BNs [30, 34, 35]. In a CLG mo del, the lo cal distributions of the con tin uous v ariables are sp ecified as CLG distributions and the discrete v ariables are required to only hav e discrete parents. 3 Designing Data Structures for PGMs using Ja v a 8 One of the main conclusions drawn when dev eloping the AMIDST to olb o x is that the design of the basic data structures for PGMs should b e carefully set up to enable the use of a functional programming style when dev eloping the subsequent algorithms. As we discuss in this section, many differen t soft ware design proposals could even tually be found for represen ting the same data structure, but not all of them are v alid for later use by the functional programming methods of Ja v a 8 API. The AMIDST to olbox contains tw o main distinct data structures for representing a PGM: a 9 first data structure to represen t the directed acyclic graph (D AG) defining the graphical structure of the model; and a s econd data structure that stores the parameters of the conditional probability distributions. Man y different implemen tations of a D AG can b e imagined and, probably , all of them will hav e their pros and cons. F or example, we can represent a D AG by a b o olean matrix, as in the case of the w ell-known BNT pack age [36], where the ( i, j )-entry is set to tr ue to represent a directed link from the i -th random v ariable to the j -th random v ariable. In this section, w e simply discuss our exp eriences with those differen t represen tations that later on we found to b e more suitable for b eing in tegrated with the Ja v a 8 API. Our main lesson learn t and our suggested design p attern [37] is the follo wing: represent data structures as lists of self-contained and indep enden t elemen ts. W e exemplify this intuition b y discussing the representation of t wo of our main data structures. In the AMIDST toolb ox, a DA G is represented as a list of ParentSet ob jects, one for eac h v ariable defining our PGM mo del (see Section 2.2). Eac h ParentSet ob ject is self-contained by k eeping the follo wing information: a field pointing to the v ariable stored in this v ertex of the DA G; and a list of the parent v ariables of this v ertex. As an example, in Program 5, w e show ho w our D AG represen tation allo ws us to concisely co de a parallel version of t wo standard operations for D AG ob jects, namely , getNumberOfLinks() and getChildrenOf(Variable mainVar) , that can b e quite b eneficial in terms of computational performance for very large DA Gs. Using the aforemen tioned alternativ e matrix-based represen tation for D AGs, it would not b e possible to co de these metho ds in suc h a concise and elegant manner. The method getNumberOfLinks() in Program 5 simply iterates o ver the list of ParentSet ob jects defining the D AG using a Ja v a Stream ob ject. Then, using the mapToInt op erator, it transforms eac h ParentSet ob ject into an integer v alue representing the num ber of parent v ariables of this ob ject. The returned Stream of in teger v alues is finally reduced with the terminal op erator count . The metho d getChildrenOf(Variable mainVar) in Program 5 also iterates ov er the list of ParentSet ob jects. Then, it filters those ParentSet ob jects whic h contain the v ariable mainVar as a parent v ariable. Using the map op erator, it subsequently extracts for eac h filtered ParentSet ob- ject its main v ariable. Finally , it collects all these main v ariables in a list using the terminal op erator collect . As men tioned b efore, the subtype of PGMs considered and implemented in the AMIDST to ol- b o x is BNs, see Section 2.2. Once again, w e found that the b est w ay to represen t the data struc- ture for the parameters of a BN w as to use a list of ConditionalDistribution ob jects. Eac h ConditionalDistribution ob ject is self-contained by k eeping, among other things, a reference to all the v ariables inv olv ed (child and parent v ariables) and a metho d to compute the lo cal sufficien t statistics vector from a given data sample [38]. 10 Program 5 - Determine the n umber of links in a giv en DA G & the set of c hildren of a given v ariable // This method efficiently calculates the number of links in a particular DAG (represented by this) public long getNumberOfLinks(){ return this .getParentSets().parallelStream() .mapToInt(parentSet -> parentSet.getParents().size()).count(); } // This method efficiently returns all the children for a particular Variable public List getChildrenOf(Variable mainVar){ return this .getParentSets().parallelStream() .filter(parentSet -> parentSet.getParents().contains(mainVar)) .map(parentSet -> parentSet.getMainVar()) .collect(Collectors.toList()); } A standard task inv olving this data structure is to compute the global sufficien t statistics vector for a BN mo del. By definition, this global sufficient statistics v ector is comp osed of the concatenation of all the lo cal sufficient statistics v ectors asso ciated with each ConditionalDistribution ob ject of the BN. Program 6 sho ws the code for computing this “comp ound” vector. It starts b y iterating o ver the ConditionalDistribution ob jects of a BN and mapping each one with a new created IndexedElement ob ject. Eac h IndexedElement ob ject contains the lo cal sufficien t statistics vector asso ciated to the mapped ConditionalDistribution ob ject and it is indexed b y the corresp onding ID of its main v ariable (that is why it was imp ortan t to include this v ariable in the data structure and make it self-con tained). Finally , all these newly created IndexedElement ob jects are collected in a list through the terminal op erator Collector . Program 6 - Compute a BN global sufficient statistics v ector // This method returns an instantiation of our defined interface Vector that represents the global vector of sufficient statistics of a BN for a given DataInstance. In order to efficiently accomplish that, both ConditionalDistribution and IndexedElement objects are defined as independent and self-contained public Vector getSufficientStatistics(DataInstance dataInstance){ List list = bayesianNetwork.getConditionalDistributions() .stream() .map(condProb -> new IndexedElement(condProb.getVariable().getIndex(), condProb.getSufficientStatistics(dataSample)) .collect(Collectors.toList()); return new CompoundVector(list); } 11 As can be seen, the final output of this metho d is a Comp oundV e ctor ob ject, which implemen ts our defined interface V e ctor . This Comp oundV e ctor is again designed as a list of independent and self-con tained Indexe dElement ob jects (desired properties for parallelisation). This Comp oundV e ctor class is frequently used in AMIDST as it allows us to parallelise sev eral v ector op erations. 4 P arallelisation of algorithms As commented on in previous sections, Streams in Jav a 8 allow us to parallelise tasks without ha ving to explicitly deal with threads and their synchronisation. Note that, sometimes, not all the tasks should b e parallelised, since an attempt to do so may result in to more inefficien t versions comparing to their sequential coun terparts. Here we include a non-exhaustive list of the main criteria that an algorithm should fulfil in order to be p oten tially parallelisable when implemented in Jav a 8: • It is generally desired that all parallel subtasks, in to whic h the algorithm is decomposed, entail a significan t CPU-intensiv e pro cessing (on the order of 1-10 milliseconds). More sp ecifically , this pro cessing time has to b e large enough to comp ensate for the o verload that may b e in tro duced due to b oth thread creation (and synchronisation if needed) and I/O operations for the case of parallel data pro cessing. • All parallel subtasks m ust run completely independently or only access a non-mo difiable shared data structure. This stateless prop erty is actually a requiremen t for the use of parallel Jav a Streams [26]. • The lo w-latency random access of the elemen ts of the parallel stream represents an additional requiremen t. In Section 5, w e discuss what to do in cases where this requiremen t is not readily a v ailable, as it occurs when the elements are placed on a hard disk drive. W e exemplify all these insights through three different algorithms for PGMs, namely , parallel maxim um likelihoo d estimation, a parallel Mon te Carlo metho d using imp ortance sampling, and a greedy searc h metho d for solving a combinatorial optimisation problem also p erformed in parallel. Man y other parallel algorithms are also included in our AMIDST to olbox. As men tioned before, a k ey adv antage of the new Ja v a 8 API is that it facilitates co ding parallel algorithms using just a few lines of co de. 4.1 P arallel maxim um likelihoo d estimation Maxim um lik eliho od estimation (MLE) [39] is an estimation method of probabilit y functions. Given a particular data set of i.i.d. samples D = { x 1 , . . . , x n } and an underlying statistical model, which in our case is a BN whose probability distributions belong to the exp onen tial family [38], the MLE 12 metho d gives a unified approac h for calculating the parameters θ of the mo del that maximize the logarithm of the lik eliho od function (log-lik eliho od function) as follows θ M LE = arg max n X i =1 log p ( x i | θ ) = P s ( x i ) n , (1) where s ( · ) is a deterministic function that returns, for each data sample, a v ector of sufficient statistics, whic h dep ends on the particular model w e are learning. Note that, the previous equation only holds for fully observed data sets and mo dels that do not con tain latent v ariables. The key p oint is that the MLE algorithm can be expressed as the computation of an indep endent sum op eration o ver a collection of data samples, and satisfies the three aforementioned parallelisation requiremen ts. The MLE computations can therefore b e performed in an em barrassingly parallel manner. In what follows, we sho w in Program 7 ho w MLE can be implemen ted in Ja v a 8 using just a few lines of co de. Program 7 - Parallel maximum likelihoo d estimation // This method computes the MLE for a given DataStream and a BayesianNetwork // The input parameter batchSize can be used to customise the degree of parallelisation SufficientStatistics computeMLE(DataStream data, BayesianNetwork bayesianNetwork){ SufficientStatistics sumSS = data.parallelStream(batchSize) .map(bayesianNetwork::getSufficientStatistics) //see Program 6 .reduce( new ZeroVector(), Vector::sumVector); return sumSS.divideBy(data.getNumberOfInstances()); } The MLE algorithm is based on map and reduce operations. An illustration of this pro cess- ing flo w is given in Fig. 2. Our data set is accessed through the data ob ject. The me thod parellelStream(int batchSize) produces a stream of DataInstance ob jects. The map op erator transforms the stream of DataInstance ob jects to a stream of Vector ob jects. This transformation is made using the bayesianNetwork::getSufficientStatistics metho d previously described in Program 6 which, given a DataInstance ob ject, pro duces a Vector ob ject (it implements the func- tion s ( · ) referenced in Equation 1). Finally , the stream of Vector ob jects containing all the sufficien t statistics is reduced to a single Vector ob ject using the reduction op eration passed as an argument to the reduce op erator. This reduction op eration is Vector::sumVector , that is, a method that returns the sum of tw o giv en v ectors. The first input argument of the reduce op erator is new ZeroVector() whic h represen ts the final accum ulator ob ject. 13 Data Stream parallelStream Stream map reduce Figure 2: Pro cessing flow of the maximum likelihoo d estimation algorithm detailed in Program 7. The k ey element of this parallel MLE algorithm is the use of a stream of fixed-sized data batches created b y in voking the metho d parallelStream(batchSize) . This method is co ded using the functionalit y described in Section 5. As will b e shown in Section 6, the size of the batc h selected pla ys an important role in the parallelisation p erformance. Again, this example illustrates ho w simple it is to co de a parallel v ersion of an algorithm using Ja v a 8. Ev en though, traditionally , it has not been trivial to parallelise this widely used parameter estimation metho d in practice. F or instance, in 2002, Sw ann [40] presented a 34-page pap er to lo wer the cost of using MPI to solv e a maximum likelihoo d problem. 4.2 P arallel Mon te Carlo Mon te Carlo [41] methods are a widely kno wn and computationally intensiv e family of algorithms that has b een heavily used in the last few decades for solving a broad class of physical, mathematical, and statistical problems. Many Mon te Carlo methods can b e efficien tly run b y resorting to parallel implemen tations. How ev er, similar to the MLE algorithm, the supp ort for parallel implementations of Monte Carlo metho ds in PGM toolb o xes is quite limited [42]. In this section, we show how by using Ja v a 8, w e can easily implemen t in a few lines of co de parallel v ersions of a relev an t t yp e of a Mon te Carlo method: the Importance Sampling (IS) [41] algorithm. IS can b e defined as a v ariance reduction Monte Carlo tec hnique. It is based on pro ducing samples, using an auxiliary probabilit y distribution, from in teresting or imp ortant regions of our probabilit y distribution. F or instance, in man y applications, we w ant to calculate E ( f ( X )), that is, the exp ected v alue of a function of interest f ( x ) according to some probabilit y distribution p . Ho wev er, for man y interesting applications in Bay esian inference [41], this expected v alue cannot b e computed exactly (usually b ecause p is intractable). IS algorithms compute the estimates of this exp ected v alue b y generating a large set of samples { x i } i ∈{ 1 ,...,m } from an auxiliary tractable sampling distribution q that ov erweigh ts the interesting region, and then adjusts the estimation to account for the o versampling from this auxiliary distribution with the w eight w = p ( x i ) /q ( x i ) for each sample x i , i ∈ { 1 , . . . , m } . The estimate of the exp ected v alue is computed as follows, ˆ E ( f ( X )) = P m i =1 f ( x i ) w i P m i =1 w i · (2) Hence, an IS algorithm can b e decomposed into the computation of tw o differen t sums and fulfils 14 the aforementioned parallelisation requirements. The Jav a 8 co de of the IS algorithm is sho wn in Program 8.1. In the first part of the co de, we randomly generate a set of samples { x i } i ∈{ 1 ,...,m } and store them in a list, the weightedSampleList ob ject. This sampling pro cess can b e trivially parallelised using Ja v a 8 Streams. A parallel stream of Integer ob jects is created with the desired sampleSize , whic h allo ws generating weigh ted samples in parallel via the operator mapToObj . Note that the samplingModel ob ject already con tains information ab out the sampling distribution. In the second part of this co de, w e iterate o v er the previously generated samples to compute the expected v alue of the function f according to Equation 2. More precisely , eac h of the previously generated samples is mapp ed to a tw o-elemen t list, where the first elemen t corresponds to the sum of the n umerator and the second element corresp onds to the sum of the denominator of Equation 2. The reduce operator simply adds up the tw o-elemen t list in a similar w ay as it was used in Program 7. The code snipp et in Program 8.2 sho ws an example of t wo queries that can be inv ok ed, and illustrates also the use of lam b da expressions. Note that there is a subtle issue in Program 8.1 which needs further discussion. In this code, w e ha v e used the class java.util.Random to generate, in parallel, pseudo-random n umbers in order to produce the w eighted samples. Instances of java.util.Random are thread-safe. How ev er, the concurren t use of the same java.util.Random ob ject across threads will encounter con tention and ma y potentially lead to a p oor performance. Instead, w e could use the class ThreadLocalRandom in a multithreaded environmen t, in order to generate random n umbers lo cally in each of the threads running in parallel, as sho wn in Program 8.3. Program 8.1 - Parallel imp ortance sampling public double getExpectedValue(String varName, Function f){ Random random = new Random(0); // 1. Parallel random generation of samples Stream weightedSampleList = IntStream.range(0, sampleSize).parallel() .mapToObj(i -> samplingModel.getWeightedSample(random)) // 2. map: each sample is mapped into two elements // 3. reduce: numerators and denominators for all samples are summed up List sum = weightedSampleList.parallel() .map(w-> Arrays.asList(f(w.getValue(varName)) * w.getWeight(), w.getWeight())) .reduce(Arrays.asList(0.0,0.0), (a,b)-> Arrays.asList(a.get(0)+ b.get(0), a.get(1)+ b.get(1))); // 4. The resulting values refer to the numerator and denominator in Equation 2, respectively return sum.get(1)/sum.get(0); } 15 Program 8.2 - Examples of queries using Program 8.1 System.out.println( "p(A<0.7) = " + ImportanceSampling.getExpectedValue( "A" , a->{(a<7)? 1.0 : 0.0})); System.out.println( "E(A) = " + ImportanceSampling.getExpectedValue( "B" , b->b); Program 8.3 - Use of ThreadLo calRandom for generating m ultithreaded random n umbers (in ternally generated seed) .mapToObj(i -> SamplingModel.getWeightedSample(ThreadLocalRandom.current())) Although w e are av oiding the collision of threads when accessing a shared resource, ThreadLocalRandom ob jects are initialised with an internally generated seed that cannot otherwise be mo dified using the curren t Jav a API, so the results are not reproducible. Thus, we introduce in Program 8.4 another alternativ e for parallel random num ber generation. W e create our own LocalRandomGenerator that will allo w us to generate pseudo-random num b ers locally in each thread with a particular seed. This approac h migh t lead to some ov erhead, b y creating new instances of Random ob jects. How ev er, it allo ws us to provide a repro ducible alternativ e. In [43] we prov ide an exp erimental analysis that shows high computational p erformance impro ve- men ts with the parallelisation of IS algorithms based on the presen ted design solutions using the AMIDST to olbox. Program 8.4 - A solution for generating parallel random n um b ers // Class definition for reproducible multithreaded random number generation public class LocalRandomGenerator { final Random random; public LocalRandomGenerator( int seed){ random = new Random(seed); } public Random current(){ return new Random(random.nextInt()); } } // Example of use LocalRandomGenerator randomGenerator = new LocalRandomGenerator(seed); ... .mapToObj(i -> SamplingModel.getWeightedSample(randomGenerator.current())) ... 16 4.3 P arallel greedy searc h There are many different domains in which a greedy searc h (GS) algorithm can b e used as a heuristic to find a lo cal optimal solution to a combinatorial optimisation problem. When more exhaustive algorithms are computationally prohibitive, then a local solution can b e a viable approximation to the global one. F or PGMs, GS algorithms are widely used to solve problems suc h as structural learning [44] or wrapper feature subset selection [45]. Program 9.1 - Parallel greedy searc h for wrapper feature subset selection // WrapperFeatureSelection implements the GreedyAlgorithm interface GreedyAlgorithm algorithm = new WrapperFeatureSelection(data, classVariable); Candidate bestCandidate = algorithm.createEmptyCandidate(); bestCandidate.setScore(Double.NEGATIVE_INFINITY); // Sequential part: compare the best candidate for each iteration against the global best candidate do { List> candidates = algorithm.getCandidates(); //Parallel part: get the best candidate for the current iteration (inner candidate) Candidate bestInnerCandidate = candidates.parallelStream() .map(candidate -> algorithm.evaluate(candidate)) .reduce(bestCandidate, (a, b) -> { if (a.getScore() > b.getScore() + threshold) return a; else return b; }); algorithm.updateBestCandidate(bestCandidate); } while (bestInnerCandidate != bestCandidate); In a v ery general con text, a GS algorithm requires 1) a set of candidates, from whic h a solution is gradually created; 2) an ob jectiv e function, whic h ev aluates the fitness of a candidate solution; 3) a selection function, which chooses the b est candidate to add to the solution; and 4) a solution function, which indicates if the curren t solution is the final one. Con trary to the t wo ab o ve-described MLE and IS algorithms, a GS algorithm cannot b e fully parallelised. In fact, a GS algorithm is sequen tial, whic h means that the solution of the next step dep ends on the solution of the current step. How ev er, there is ro om for parallelisation when building the candidate solution at a sp ecific step. F or instance, in man y cases, the selection function consists simply of finding the element in a v ery large set of candidates with the highest score according to the ob jectiv e function. Program 9.1 con tains the Ja v a 8 co de of a general parallel GS algorithm (particularised for wrapp er feature subset selection [45]). In eac h iteration of the main do-while lo op, the bestInnerCandidate is ev aluated 17 and selected in parallel using p ar al lel str e ams , map , and reduce op erators (in this case, the reduce op erator finds the e lemen t in the stream with the maximum v alue). This code can b e instantiated in to different greedy searc h problems by implemen ting the Candidate and Gr e e dyAlgorithm in terfaces defined in Program 9.2. Again, to the b est our kno wledge, there is no PGM to olbox whic h provides this kind of function- alit y . The abov e example shows ho w the implemen tation of a parallel greedy search solver is quite straigh tforward when using Jav a 8 API. Program 9.2 - Interfaces to b e implemented for any greedy searc h problem public interface Candidate { E getObject(); double getScore(); void setScore( double score); } public interface GreedyAlgorithm { Candidate createEmptyCandidate(); List> getCandidates(); Candidate evaluate(Candidate candidate); void updateBestCandidate(Candidate candidate); } 5 P arallel pro cessing of data sets In many settings, data sets can b e defined as a collection of indep enden t items (documents, customer profiles, genetic profiles, patien t health records, etc.), and thereby many data mining and machine learning techniques can be described as algorithms whic h indep enden tly pro cess each of these items, once or multiple times. F or instance, this applies to any algorithm fitting the statistical query mo del [4, 46] or any Ba yesian learning approach ov er i.i.d. data samples and exp onen tial family mo dels [47], as in the case of the MLE algorithm discussed in Section 4.1. The latter settings are quite suitable for coding parallel implementations of these algorithms using Ja v a 8 Streams. When dev eloping the AMIDST to olb o x we heavily used the follo wing design pattern for many data pro cessing algorithms by creating a stream of data item ob jects. Eac h data item is pro cessed using a giv en operation according to the sp ecific algorithm (usually using a map operation) and all in termediate results are com bined using a particular reduction operation. The parallel MLE algorithm prop osed in Section 4.1 is an example of these kinds of algorithms and many other mac hine learning metho ds can b e expressed in terms of map-reduce operations [4]. This parallelisation approach based on Ja v a 8 Streams needs, in principle, the data set to b e stored 18 in RAM to support parallel random access to the different data samples, as discussed in Section 2.1.4. Although the RAM allocation of the data set is doable in many cases, modern data sets are usually to o big to b e held in RAM. When data is placed on a hard disk, it has to b e accessed in a purely sequen tial manner, whic h is in complete con tradiction with the random access requiremen ts of parallel pro cessing. In the next subsection, we discuss the design solution used in AMIDST to approach this problem and set the basis for the dev elopment of parallel algorithms for processing large data samples that do not fit in main memory . In AMIDST, we emplo y this functionalit y for man y different algorithms, as it is the case for the previously discussed MLE algorithm (see Section 4.1). W e also use this solution in AMIDST to pro vide, for example, a parallel implementation of the K-me ans algorithm for data sets that do not fit in memory , whic h is co ded using a few lines of co de (plus some simple external classes). If we lo ok at other parallel implemen tations of the K-me ans algorithm, for example, using the OpenMP [48] framework in C++ 8 , we see that it is far more complex to co de and requires explicit m ulti-thread handling (as well as it assumes that all data fit in main memory!). 5.1 Streams of data batc hes In this section, w e describ e a solution for the ab o v e problem based on the use of streams of data b atches (a small group of data items). This approach loads data batches on demand, then p erforms parallel pro cessing of the data batches by exploiting the fact that, for man y mac hine learning algorithms, the time of pro cessing a data batch, denoted by P , is larger than the time to load this batc h from the hard disk to main memory , denoted b y L . Using this approac h, the first core loads the first batch of data. Next, this first core starts to pro cess it, releases the I/O disk channel, and mean while, the second core starts loading the second batc h of data. In Fig. 3, w e visually illustrate ho w this standard approac h would work on a four-core CPU for a setting where P > 3 ∗ L (the time of pro cessing a data batc h is 3 times larger than the time for loading this batch from disk). In the case of Ja v a 8, this could b e p erformed b y relying on the lazy ev aluation feature of Jav a 8 Streams (see Section 2.1.3). It is not hard to see that this strategy is limited in the lev el of parallelism it can achiev e, whic h basically depends on the ratio b et w een P and L . More precisely , with this approac h, the maximum n umber of cores working at the same time is b P /L + 1 c . This quotient dep ends on the algorithm and the CPU computing pow er, through the v alue of P , as w ell as the I/O speed, thorough the v alue of L . Moreo ver, it also dep ends on the size of the batc h that affects both P and L and represen ts the unique parameter that can b e tuned in order to ac hieve an optimal p erformance. 8 http://users.eecs.northwestern.edu/ ~ ewkliao/Kmeans/index.html 19 3L 4L L + P T ime Line 2L + P Core 1 Core 2 Core 3 Core 4 Processing Load Batch 1 Load% Processing Load Batch 3 Processing Load Batch 4 Processing Load Batch 5 2L + 2P Stage 1 Stage 2 Stage 3 Processing Load Batch 2 Figure 3: Example of the distribution of five data batches with the same demands in terms of loading and processing time, denoted as L and P respectively , in a four-core CPU. The red line indicates the time frame for whic h all data batc hes are being processed at the same time. 5.2 The Spliter ator interface Unfortunately , Ja v a 8 do es not provide native metho ds for creating a stream of batches of the same size from a disk file 9 . How ever, w e can create customized Ja v a Streams b y using the java.util.Spliter ator in terface 10 . A v ery important prop ert y of this interface is that w e do not need to create thr e ad-safe classes implemen ting this interface. Ja v a 8 guaran tees to use y our Spliter ator ob jects in a single thread at a time. This mak es the implemen tation of Spliter ator a more simple op eration. In what follo ws, we show which are the main elements of the Spliter ator ob ject, providing a stream of batches of a fixed size. Our Spliter ator implementation assumes that the data set is placed in a text file where each line of the file con tains a data item (usually a data item is comp osed of a sequence of comma-separated n umbers, but it can also b e, for example, a long string con taining an XML document). Spliter ator is a com bined name whic h refers to t wo main abstractions b ehind Ja v a Streams: iter ating and splitting . These tw o abstractions are sp ecified in the following tw o metho ds of the Spliter ator interface: • boolean tryAdvance(Consumer action) : This metho d p erforms the actual 9 W e could use the class java.nio.file.Files to obtain a parallel stream of lines from a file but the size of the batches can not b e set and grows in arithmetic progression. 10 See https://www.airpair.com/java/posts/parallel- processing- of- io- based- data- with- java- streams/ for further details on this approach. 20 pro cessing of the stream. It consumes one item of the stream and in vok es the passed-in action on it. As shown in Program 10, this method is implemented as follo ws: it first reads a new line from the file, creates a new data item ob ject from this line, and then processes it with the action ob ject. The action ob ject encapsulates the op eration that will b e applied later to the data item in the pro cessing flo w of the Jav a Stream b y using the metho ds map , filter , etc. Program 10 - Iterating abstraction of the Spliterator // This method performs the actual processing of the stream boolean tryAdvance(Consumer action){ // 1. Read one line String line = getBufferedReader().readLine(); if (line!= null ){ // 2. Create a new DataItem object from each line DataItem dataItem = new DataItem(line); // 3. Process the DataItem with the action object action.accept(dataItem); return true ; } else { return false ; } } • Spliterator trySplit() : This method is in charge of splitting off a part of the sequence that the curren t Spliter ator is handling. The result is a new Spliter ator ob ject that will handle the new split sequence. Ja v a 8 uses this metho d to p erform the parallel processing of the stream. A t the b eginning, when a stream op eration is inv ok ed o ver a list of elemen ts, a Spliter ator ob ject is created and handled b y one thread. Then, at some p oint, this thread inv okes the trySplit metho d to create a new Spliter ator ob ject and assign it to a new thread. That new ob ject will no w b e in charge of processing, in parallel, the new split sequence. In our case, when this metho d is in v oked, w e read B consecutiv e lines, with B b eing the size of the data batc h, and create a new Spliter ator ob ject for handling this sequence of B lines. In that w a y , once a new thread is assigned to this new Spliter ator , it will only pro cess this batc h of data. In Program 11, we detail the Jav a code for this metho d in our Spliter ator class. Once the Spliterator class is defined, we can create a fixed-batch-size stream using the class ja v a.util.stream.StreamSupp ort. This is how the metho d parallelStream(int batchSize) of the class DataStream is coded in AMIDST. 21 Program 11 - Spliting abstraction of the Spliterator // This method split off the part of the sequence for this Spliterator public Spliterator trySplit(){ final HoldingConsumer holder = new HoldingConsumer<>(); // 1. Check that the end of the sequence is not reached if (!spliterator.tryAdvance(holder)) return null ; // 2. Populate data batch with batchSize items of the sequence final DataItem[] batch = new DataItem[batchSize]; int j = 0; do { batch[j] = holder.value; } while (++j < batchSize && tryAdvance(holder)); // 3. Create a new Spliterator that is assigned to a new thread return Spliterators.spliterator(batch, 0, j, characteristics()); } 6 Exp erimen tal Ev aluation 6.1 Sequen tial vs P arallel Jav a 8 programming In this section w e giv e some exp erimen tal examples to sho w the computational adv an tages of using parallel streams as well as some of the tradeoffs we need to make when coding parallel algorithms in Ja v a 8. F or this purp ose, w e use a simple algorithm like MLE which, as shown in Section 4.1, can be trivially parallelised using Ja v a streams. Ideally , the higher the n umber of cores is used, the higher the sp eedup is obtained. But, of course, this speedup is at some p oin t limited b y the o v erload in tro duced b y the thread creation. Moreov er, as previously discussed in Section 5.1, algorithms based on parallel pro cessing of data batc hes, as it is the case of parallel MLE, should also consider that there is a limit in the lev el of parallelisation they can ach ieve. This limit is determined b y the expression b P /L + 1 c , where P denotes the time of pro cessing a data batc h and L denotes the time needed to load this batc h from the hard disk to main memory . T o show this trade-off, we ha ve conducted some exp eriments p erforming MLE using a syn thetic data set of one million samples. Samples hav e b een randomly generated using the Bay esian netw ork structure, depicted in Fig. 4, with random parameters. The considered Bay esian netw ork consists of t wo multinomial sup er-p ar ent v ariables (one of them could be considered the class v ariable, denoted C , and the other denoted S P M ); t w o Gaussian sup er-p ar ent v ariables, denoted S P G 1 and S P G 2 ; and 100 multinomial v ariables ( M 1 , . . . , M 100 ) and 100 Gaussian v ariables ( G 1 , . . . , G 100 ) that dep end on all the super-parent v ariables. Our task here is to learn the parameters of this Ba yesian netw ork using MLE. 22 8" SPM M 1 M 100 G 1 G 100 … … C SPG 2 SPG 1 Figure 4: A Ba y esian net w ork structure. Fig. 5(a) shows the time needed to complete the ab o ve task in a machine with 32 cores when fixing the batch size to 1000 samples and v arying the n um b er of cores 11 . As can b e seen, using parallelisation, even with only 2 cores, a significant reduction (b y a half ) of the time required to learn from a million samples. So, for this simple algorithm, where computational complexity of pro cessing a data batc h is linear in the num b er of samples of the batch, the optimal v alue is obtained with only four cores. So, as discussed abov e, it seems that we reach the maximum degree of parallelisation with four cores for a batc h size of 1000 samples. By using more cores we in tro duce some o v erhead. ● ● ● ● ● ● 150 200 250 300 350 Number of cores Time (seconds) 1 2 4 8 16 32 ● ● ● ● ● ● ● 125 130 135 140 145 150 Batch size Time (seconds) 100 200 500 1000 2000 5000 10000 (a) Comparing the n umber of cores (b) Comparing the batc h size (with batchSize=1000) (with all av ailable cores) Figure 5: Exp erimen ts running the MLE algorithm in parallel for differen t n umber of cores and batc h sizes. Fig. 5(b) sho ws the same parallel MLE experiment carried out in all av ailable 32 cores but mo difying the batc h size. In this case, the optimal v alue is obtained with a batc h size of 2000 11 Jav a 8 API do es not allow to fix the num b er of cores to b e used in a parallel stream, but w e can fix the maximum num ber of allowed cores by using the linux command taskset , which ma y introduce some ov erhead. 23 samples. Using a small batch size in troduces a high o v erload in terms of thread creation because the problem is divided into many small sub-problems. Whereas using a batch size that is to o big can underuse the offered capacity of the computer in terms of av ailable cores b ecause the problem is divided in to few big sub-problems. In any case, w e can observe how these differences in times when using different batc h sizes are relativ ely small (approximately 30 seconds in the w orst case) compared to the time differences when no parallelisation is carried out (more than 200 seconds). W e b eliev e that the optimal batch size is unfortunately problem dependent. It can b e a go od idea to p erform, for instance, a parallel cross-v alidation ev aluation in order to determine its v alue. W e ha v e so far p erformed exp erimen ts using real datasets of different sizes and observ ed that the optimal batch size is generally in the order of one thousand [49, 50]. 6.2 Ja v a 8 vs Big data platforms for multi-core parallelisation In this section, we would like to highligh t the high o verheads asso ciated to a big data platform lik e Apac he Flink [51] when used for parallel pro cessing in a m ulti-core computer. As mentioned b efore, Apache Flink is an open source platform for distributed stream and batch data pro cessing. It executes arbitrary data-flow programs in a data-parallel and pip elined manner, enabling the execution of bulk/batch and stream pro cessing programs. F or this comparison, Apac he Flink should behav e similar to Apache Spark [28] as they approach similar data distributed processing problems. F or this experiment, w e co ded in Flink the same parallel MLE algorithm used in the previous section and ran it ov er the same data set and on the same 32-cores serv er. The obtained running time with Flink w as 1388 seconds, that is, around 23 min utes to learn the parameters of the BN net w ork in Fig. 4 from 1 million samples. In Jav a 8, it to ok less than 3 min utes to complete the same task. In this case, Apache Flink’s run time is significantly higher due to the o verhead in tro duced. This o verhead is basically related to all the sp ecial functionalities cov ered in order to ha ve a platform that is able to access distributed data files, to reco v er from failures in the hardw are, to balance the computational load across the no des of the clusters, etc. Therefore, w e may conclude that, in general, suc h big data platforms are esp ecially designed to preferably run on m ulti-no de clusters of commo dit y hardw are, not on a single computer with multi-core processing units. 7 Conclusions In this paper, we ha ve presen ted different design solutions to deal with PGMs on multi-core CPUs using the new Ja v a 8 API. W e ha ve also demonstrated how this new API provides a rich set of functionalities that mak e the coding of concurren t/parallel applications a far simpler task compared to previous Ja v a releases and, probably , to many other programming languages. In addition, w e hav e 24 pro vided some general guidelines on ho w to design data structures, algorithms, and parallelisable data pro cessing metho ds using Jav a 8 features. W e hop e that these guidelines can b e of help to developers and researc hers that aim to build computational in telligence soft ware exploiting m ulti-core CPU arc hitectures. In our opinion, a key asp ect that makes the functional programming Jav a 8 features quite app ealing for developing computational in telligence soft ware is that these functional primitives decouple the problem of what can be parallelised from the problem of how to run an algorithm in parallel. Actually , once the algorithm has b een expressed in terms of map-reduce op erations o ver Ja v a streams, w e cannot con trol whic h part of the computations is performed on a sp ecific core or ho w many cores should be used. This is completely transparent to the user. In that wa y , we are really going beyond multi- core CPU arc hitectures. F or example, researc hers at Oracle (the Jav a o wner) [52] recently prop osed an extension of Jav a Streams, called DistributableStream , for data pro cessing o ver a cluster of computers with a distributed file system such as Hado op or Cassandra. With this approach, the same code that runs in parallel on a m ulti-core CPU using nativ e Ja v a Streams, can also b e run on a distributed cluster using the class DistributableStream . W e en vision that the forthcoming Ja v a releases may giv e supp ort to other kinds of parallel computing arc hitectures introducing more sp ecifications on what can be parallelisable rather than how to p erform parallel computing. Ac kno wledgmen ts This work was p erformed as part of the AMIDST pro ject. AMIDST has received funding from the Europ ean Union’s Sev enth F ramework Programme for researc h, technological dev elopment and demonstration under grant agreement no 619209. References [1] D. J. F rank, “P ow er-constrained cmos scaling limits,” IBM Journal of R ese ar ch and Development , v ol. 46, no. 2.3, pp. 235–244, 2002. [2] P . P . Gelsinger, “Micropro cessors for the new millennium: Challenges, opp ortunities, and new fron tiers,” in IEEE International Solid-State Cir cuits Confer enc e , 2001, pp. 22–25. [3] K. Liu, H. Kargupta, J. Ryan, and K. Bhaduri, “Distributed data mining bibliograph y ,” http: //www.csee.um b c.edu/%7ehillol/DDMBIB, 2006, online; accessed 4 Nov ember 2015. [4] C. Chu, S. K. Kim, Y.-A. Lin, Y. Y u, G. Bradski, A. Y. Ng, and K. Olukotun, “Map-reduce for mac hine learning on m ulticore,” A dvanc es in neur al information pr o c essing systems , v ol. 19, p. 281, 2007. 25 [5] A. Umbark ar and M. Joshi, “Review of parallel genetic algorithm based on computing paradigm and diversit y in search space,” ICT A CT Journal on Soft Computing , v ol. 3, pp. 615–622, 2013. [6] I. Robles, R. Alcal´ a, J. M. Ben ´ ıtez, and F. Herrera, “Evolutionary parallel and gradually dis- tributed lateral tuning of fuzzy rule-based systems,” Evolutionary Intel ligenc e , v ol. 2, no. 1-2, pp. 5–19, 2009. [7] G. Luque, E. Alba, and B. Dorronsoro, “P arallel genetic algorithms,” Par al lel metaheuristics: A new class of algorithms , pp. 107–125, 2005. [8] H. Adeli and K. C. Sarma, Cost optimization of structur es: fuzzy lo gic, genetic algorithms, and p ar al lel c omputing . John Wiley & Sons, 2006. [9] A. Agarwal, O. Chap elle, M. Dud ´ ık, and J. Langford, “A reliable effective terascale linear learning system,” Journal of Machine L e arning R ese ar ch , v ol. 15, pp. 1111–1133, 2014. [10] G. D. F. Morales and A. Bifet, “Samoa: Scalable adv anced massive online analysis,” Journal of Machine L e arning R ese ar ch , vol. 16, pp. 149–153, 2015. [11] D. Eddelbuettel, High-Performanc e and Par al lel Computing with R , 2015. [Online]. Av ailable: h ttp://cran.r- pro ject.org/w eb/views/HighPerformanceComputing.h tml [12] G. Sharma and J. Martin, “Matlab ® : a language for parallel computing,” International Journal of Par al lel Pr o gr amming , vol. 37, no. 1, pp. 3–36, 2009. [13] M. Kane, J. W. Emerson, and S. W eston, “Scalable strategies for computing with massiv e data,” Journal of Statistic al Softwar e , vol. 55, no. 14, pp. 1–19, 2013. [14] F. Neese, “The orca program system,” Wiley Inter disciplinary R eviews: Computational Mole cular Scienc e , vol. 2, no. 1, pp. 73–78, 2012. [Online]. Av ailable: http://dx.doi.org/10.1002/w cms.81 [15] D. May , “Occam,” SIGPLAN Not. , vol. 18, no. 4, pp. 69–79, Apr. 1983. [16] L. Tierney , A. J. Rossini, N. Li, and H. Sevcik o v a, snow: Simple Network of Workstations , 2015, r pack age v ersion 0.4-1. [Online]. Av ailable: h ttp://CRAN.R- pro ject.org/pack age=sno w [17] M. P . F orum, “Mpi: A message-passing in terface standard,” Kno xville, TN, USA, T ech. Rep., 1994. [18] S. Gregory , Par al lel lo gic pr o gr amming in P ARLOG : the language and its implementation , ser. In ternational series in logic programming. W okingham, England Reading, Mass. Addison-W esley Pub. Co. c1987, 1987, includes index. [Online]. Av ailable: h ttp://opac.inria.fr/record=b1086382 26 [19] M. Odersky and al., “An Overview of the Scala Programming Language,” EPFL, Lausanne, Switzerland, T ech. Rep. IC/2004/64, 2004. [20] J. Alcal´ a-Fdez and J. M. Alonso, “Special issue on soft ware to ols for soft computing,” Interna- tional Journal of Computational Intel ligenc e Systems , vol. 6, no. sup1, pp. 1–2, 2013. [21] S. R. Piccolo and L. J. F rey , “Ml-flex: A flexible toolb ox for performing classification analyses in parallel,” The Journal of Machine L e arning R ese ar ch , vol. 13, no. 1, pp. 555–559, 2012. [22] M. Schmidberger, M. Morgan, D. Eddelbuettel, H. Y u, L. Tierney , and U. Mansmann, “State- of-the-art in parallel computing with R,” Journal of Statistic al Softwar e , vol. 47, no. 1, pp. 1–27, 2009. [23] Y. Lo w, J. Gonzalez, A. Kyrola, D. Bickson, C. Guestrin, and J. M. Hellerstein, “Graphlab: A new framew ork for parallel machine learning,” CoRR , vol. abs/1006.4990, 2010. [Online]. Av ailable: h [24] E. P . Xing, Q. Ho, W. Dai, J. K. Kim, J. W ei, S. Lee, X. Zheng, P . Xie, A. Kumar, and Y. Y u, “P etuum: A new platform for distributed machine learning on big data,” in Pr o c e e dings of the 21th ACM SIGKDD International Confer enc e on Know le dge Disc overy and Data Mining, Sydney, NSW, A ustr alia, August 10-13, 2015 , L. Cao, C. Zhang, T. Joac hims, G. I. W ebb, D. D. Marginean tu, and G. Williams, Eds. ACM, 2015, pp. 1335–1344. [Online]. Av ailable: h ttp://doi.acm.org/10.1145/2783258.2783323 [25] R. W arburton, Java 8 L amb das: Pr agmatic F unctional Pr o gr amming . O’Reilly Media, Inc., 2014. [26] R.-G. Urma, M. F usco, and A. Mycroft, Java 8 in Action . Manning Publications, 2014. [27] J. Dean and S. Ghemaw at, “Mapreduce: Simplified data processing on large clusters,” in Pr o c e e dings of the 6th Confer enc e on Symp osium on Op e arting Systems Design & Implementation - V olume 6 , ser. OSDI’04. Berk eley , CA, USA: USENIX Asso ciation, 2004, pp. 10–10. [Online]. Av ailable: h ttp://dl.acm.org/citation.cfm?id=1251254.1251264 [28] M. Zaharia, M. Chowdh ury , M. J. F ranklin, S. Shenker, and I. Stoica, “Spark: Cluster computing with w orking sets,” in Pr o c e e dings of the 2Nd USENIX Confer enc e on Hot T opics in Cloud Computing , ser. HotCloud’10. Berkeley , CA, USA: USENIX Association, 2010, pp. 10–10. [Online]. Av ailable: http://dl.acm.org/citation.cfm?id=1863103.1863113 [29] J. Pearl, Pr ob abilistic R e asoning in Intel ligent Systems: Networks of Plausible Infer enc e . San Mateo, CA.: Morgan Kaufmann Publishers, 1988. 27 [30] S. Lauritzen, Gr aphic al Mo dels . Oxford Univ ersity Press, 1996. [31] F. V. Jensen and T. D. Nielsen, Bayesian Networks and De cision Gr aphs . Springer V erlag, 2007. [32] D. Koller and N. F riedman, Pr ob abilistic gr aphic al mo dels: principles and te chniques . MIT press, 2009. [33] O. P ourret, P . Na ¨ ım, and B. Marcot, Bayesian networks: a pr actic al guide to applic ations . John Wiley & Sons, 2008, v ol. 73. [34] S. L. Lauritzen, “Propagation of probabilities, means, and v ariances in mixed graphical asso ci- ation mo dels,” Journal of the Americ an Statistic al Asso ciation , vol. 87, no. 420, pp. 1098–1108, 1992. [35] S. Lauritzen and F. Jensen, “Stable local computation with conditional Gaussian distributions,” Statistics and Computing , v ol. 11, no. 2, pp. 191–203, 2001. [36] K. Murphy et al. , “The Ba yes net to olbox for Matlab,” Computing scienc e and statistics , vol. 33, no. 2, pp. 1024–1034, 2001. [37] E. Gamma, R. Helm, R. Johnson, and J. Vlissides, Design p atterns: elements of r eusable obje ct- oriente d softwar e . P earson Education, 1994. [38] J. M. Winn and C. M. Bishop, “V ariational message passing,” Journal of Machine L e arning R ese ar ch , v ol. 6, pp. 661–694, 2005. [39] F. W. Sc holz, “Maxim um likelihoo d estimation,” Encyclop e dia of Statistic al Scienc es , pp. 1–11, 2004. [40] C. A. Swann, “Maxim um lik eliho od estimation using parallel computing: An introduction to MPI,” Computational Ec onomics , vol. 19, no. 2, pp. 145–178, 2002. [41] J. Hammersley and D. Handscomb, Monte Carlo Metho ds . Meth uen & Co, 1964. [42] J.-B. T ristan, D. Huang, J. T assarotti, A. C. Poco c k, S. Green, and G. L. Steele, “Augur: Data- parallel probabilistic mo deling,” in A dvanc es in Neur al Information Pr o c essing Systems , 2014, pp. 2600–2608. [43] A. Salmer´ on, D. Ramos-L´ op ez, H. Borchani, and et al., “Parallel imp ortance sampling in condi- tional linear Gaussian netw orks,” in Confer encia de la Aso ciaci´ on Esp a ˜ nola p ar a la Inteligencia A rtificial , v ol. in press, 2015. 28 [44] D. Hec k erman, D. Geiger, and D. M. Chic k ering, “Learning Ba yesian netw orks: The com bination of knowledge and statistical data,” Machine L e arning , vol. 20, no. 3, pp. 197–243, 1995. [45] R. Kohavi and G. H. John, “W rapp ers for feature subset selection,” Artificial intel ligenc e , v ol. 97, no. 1, pp. 273–324, 1997. [46] M. Kearns, “Efficien t noise-tolerant learning from statistical queries,” Journal of the ACM , v ol. 45, no. 6, pp. 983–1006, 1998. [47] T. Bro deric k, N. Boyd, A. Wibisono, A. C. Wilson, and M. I. Jordan, “Streaming v ariational Ba yes,” in A dvanc es in Neur al Information Pr o c essing Systems , 2013, pp. 1727–1735. [48] X. Tian, A. Bik, M. Girk ar, P . Grey , H. Saito, and E. Su, “In tel ® op enmp c++/fortran compiler for hyper-threading tec hnology: Implemen tation and p erformance.” Intel T e chnolo gy Journal , v ol. 6, no. 1, 2002. [49] H. Borchani, A. M. Mart ´ ınez, A. Masegosa, H. Langseth, T. D. Nielsen, A. Salmer´ on, A. F ern´ andez, A. L. Madsen, and R. S´ aiz, “Mo deling concept drift: A probabilistic graphical mo del based approach,” in A dvanc es in Intel ligent Data Analysis XIV , ser. Lecture Notes in Computer Science, E. F romont, T. De Bie, and M. v an Leeu wen, Eds. Springer International Publishing, 2015, v ol. 9385, pp. 72–83. [Online]. Av ailable: h ttp://dx.doi.org/10.1007/978- 3- 319- 24465- 5 7 [50] ——, “Dynamic ba yesian mo deling for risk prediction in credit op erations,” in Pr o c e e dings of the Thirte enth Sc andinavian Confer enc e on Artificial Intel ligenc e (SCAI 2015) , in press. [51] P . Carb one, G. F´ ora, S. Ewen, S. Haridi, and K. Tzoumas, “Light weigh t asynchronous snapshots for distributed dataflows,” CoRR , vol. abs/1506.08603, 2015. [Online]. Av ailable: h [52] X. Su, G. Swart, B. Go etz, B. Oliv er, and P . Sandoz, “Changing engines in midstream: A ja v a stream computational mo del for big data processing,” Pr o c e e dings of the VLDB Endowment , v ol. 7, no. 13, pp. 1343–1354, 2014. 29

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

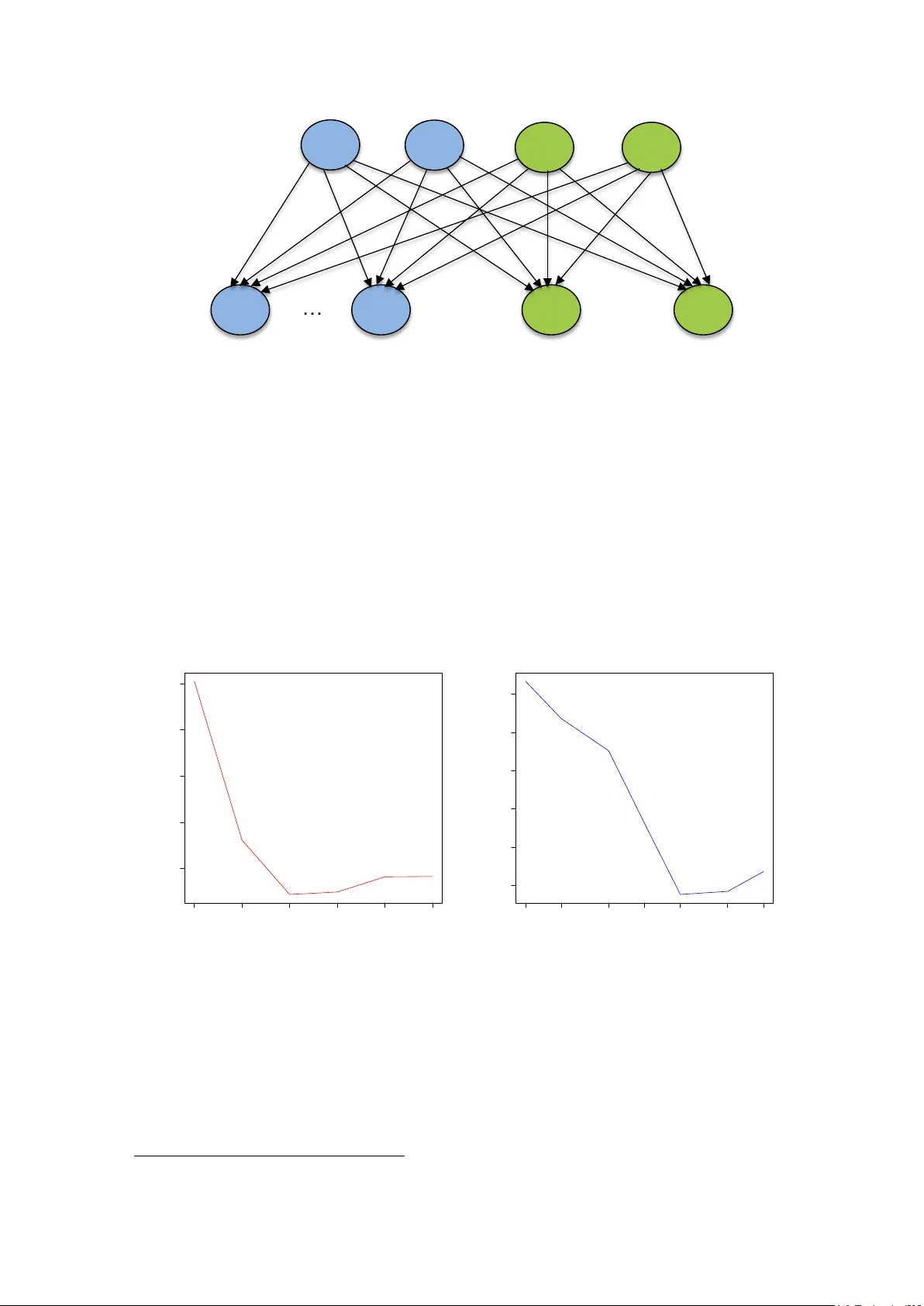

Leave a Comment