Differential Testing for Variational Analyses: Experience from Developing KConfigReader

Differential testing to solve the oracle problem has been applied in many scenarios where multiple supposedly equivalent implementations exist, such as multiple implementations of a C compiler. If the multiple systems disagree on the output for a given test input, we have likely discovered a bug without ever having to specify what the expected output is. Research on variational analyses (or variability-aware or family-based analyses) can benefit from similar ideas. The goal of most variational analyses is to perform an analysis, such as type checking or model checking, over a large number of configurations much faster than an existing traditional analysis could by analyzing each configuration separately. Variational analyses are very suitable for differential testing, since the existing nonvariational analysis can provide the oracle for test cases that would otherwise be tedious or difficult to write. In this experience paper, I report how differential testing has helped in developing KConfigReader, a tool for translating the Linux kernel’s kconfig model into a propositional formula. Differential testing allows us to quickly build a large test base and incorporate external tests that avoided many regressions during development and made KConfigReader likely the most precise kconfig extraction tool available.

💡 Research Summary

This experience paper presents how differential testing—a technique that uses multiple implementations as oracles to solve the test oracle problem—can be systematically applied to the development of variational (or variability‑aware) analyses. The author’s case study is the creation of KConfigReader, a tool that translates the Linux kernel’s kconfig configuration model into a propositional formula suitable for SAT solving.

Variational analyses aim to analyze an entire configuration space (often exponential in size) much faster than brute-force analysis of each configuration separately. Because the result of a correct variational analysis must be equivalent to the result obtained by applying a traditional, non-variational analysis to every configuration, the traditional analysis can serve as the test oracle. The paper argues that this equivalence makes differential testing an ideal fit for variational analyses.
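The equivalence can be written down compactly. The notation here is an assumption introduced for illustration and does not come from the paper: $C$ is the set of configurations, $p$ the variable artifact, $p|_c$ the variant selected by configuration $c$, $A$ the traditional analysis, and $V$ the variational analysis, whose result can be projected onto a single configuration.

$$
\forall c \in C:\quad V(p)\big|_c \;=\; A\big(p|_c\big)
$$

For a kconfig translation, one reading of this condition is that the extracted formula $\varphi$ evaluates to true under $c$ exactly when the reference implementation accepts $c$ as a valid configuration. Differential testing checks the condition on concrete configurations $c$ rather than proving it for all of them.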

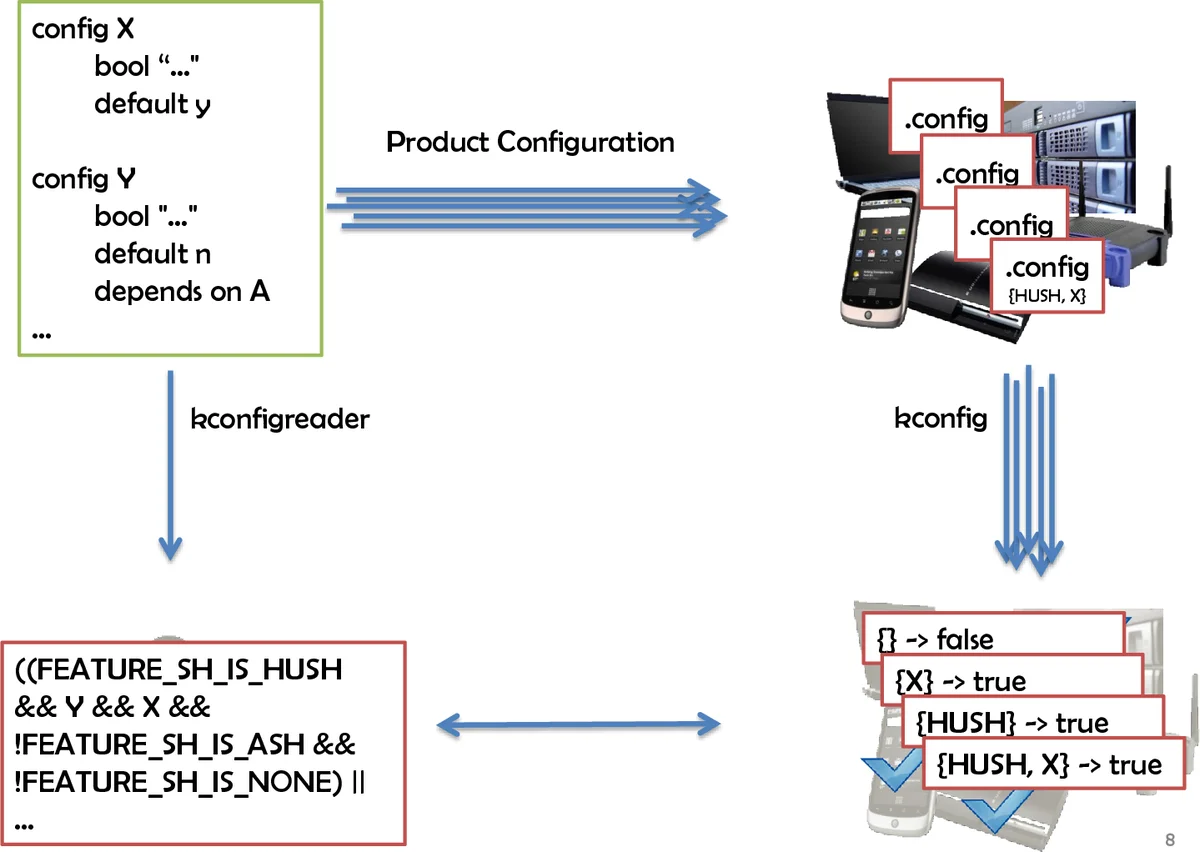

In the KConfigReader project, the author built a test harness that automatically generates all possible configurations for a given small kconfig snippet (up to ten Boolean options, i.e., at most 1,024 configurations). For each configuration the harness creates a .config file, invokes the existing kconfig “conf” utility, and observes whether the utility leaves the file unchanged (meaning the configuration is valid) or repairs it (meaning the configuration is invalid). This process yields a ground-truth table of valid and invalid configurations without any manual specification of expected results.
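The enumeration step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the author's harness: the function names are hypothetical, only Boolean options are handled, and the oracle is passed in as a `repair` function that maps a .config text to the text the oracle would leave behind. In a real setup that function would write the file and run the kconfig `conf` utility; here a hand-written stand-in for the snippet "B depends on A" is used so the example runs on its own.

```python
from itertools import product
from typing import Callable, Dict, List, Tuple

Config = Dict[str, bool]


def all_configs(options: List[str]) -> List[Config]:
    """Enumerate every assignment of the Boolean options (2**n in total)."""
    return [dict(zip(options, values))
            for values in product([False, True], repeat=len(options))]


def render_dot_config(config: Config) -> str:
    """Render a configuration in the usual .config text format."""
    lines = []
    for name, enabled in config.items():
        if enabled:
            lines.append(f"CONFIG_{name}=y")
        else:
            lines.append(f"# CONFIG_{name} is not set")
    return "\n".join(lines) + "\n"


def truth_table(options: List[str],
                repair: Callable[[str], str]) -> List[Tuple[Config, bool]]:
    """A configuration is valid when the oracle leaves its .config unchanged."""
    table = []
    for config in all_configs(options):
        text = render_dot_config(config)
        table.append((config, repair(text) == text))
    return table


# Hypothetical stand-in for the real oracle, hard-coded for "B depends on A":
# it switches B off whenever B is on but A is not.
def fake_repair(text: str) -> str:
    if "CONFIG_B=y" in text and "CONFIG_A=y" not in text:
        return text.replace("CONFIG_B=y", "# CONFIG_B is not set")
    return text


table = truth_table(["A", "B"], fake_repair)
valid = [config for config, ok in table if ok]
```

Keeping the oracle behind a plain function makes it easy to swap the stand-in for a subprocess call without touching the enumeration logic.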

Simultaneously, KConfigReader parses the same kconfig snippet and produces a propositional formula where each variable corresponds to an option. The test harness evaluates this formula under each configuration and checks whether the Boolean result matches the truth table produced by the “conf” oracle. Any mismatch is reported as a test failure, immediately exposing a bug in the translation logic.
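The comparison step amounts to evaluating two predicates on every configuration and collecting the disagreements. The sketch below assumes, for illustration only, that both the extracted formula and the oracle verdict are available as Python callables over a dictionary of option values; KConfigReader's actual output format is not shown in this summary. The `buggy_formula` is a deliberately wrong translation of "B depends on A" to show what a test failure looks like.

```python
from itertools import product
from typing import Callable, Dict, List

Config = Dict[str, bool]
Predicate = Callable[[Config], bool]


def mismatches(options: List[str],
               formula: Predicate,
               oracle: Predicate) -> List[Config]:
    """Return every configuration on which formula and oracle disagree."""
    bad = []
    for values in product([False, True], repeat=len(options)):
        config = dict(zip(options, values))
        if formula(config) != oracle(config):
            bad.append(config)
    return bad


# Hypothetical oracle verdicts for the snippet "B depends on A".
def oracle(c: Config) -> bool:
    return c["A"] or not c["B"]


# A correct translation: B implies A.
def correct_formula(c: Config) -> bool:
    return (not c["B"]) or c["A"]


# A deliberately wrong translation: conjunction instead of implication.
def buggy_formula(c: Config) -> bool:
    return c["A"] and c["B"]


ok = mismatches(["A", "B"], correct_formula, oracle)
failures = mismatches(["A", "B"], buggy_formula, oracle)
```

Each entry in `failures` is a concrete counterexample configuration, which is usually enough to locate the faulty translation rule.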

The author reports that this approach enabled rapid development of a large regression suite, detection of subtle bugs in handling choices, hidden options, tristate values, numeric constraints, and the order‑dependent semantics of kconfig. The differential‑testing infrastructure required only a few scripts to enumerate configurations, invoke the oracle, and compare results, demonstrating that setting up such a framework is relatively straightforward.

Through extensive testing, KConfigReader achieved higher precision than earlier tools such as LVAT and Undertaker, a claim later corroborated by an independent study (El‑Sharkawy et al., 2015). The paper emphasizes that while exhaustive enumeration is feasible only for small configuration spaces, the same principle can be applied to larger spaces by sampling representative configurations or focusing on corner‑case constructs.
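For spaces too large to enumerate, the same check can run over a sample. The following sketch is one possible strategy and not something the paper prescribes: it combines the two corner cases "everything off" and "everything on" with seeded uniform random assignments. All names are hypothetical, and the oracle is again a stand-in predicate rather than a call to `conf`.

```python
import random
from typing import Callable, Dict, List

Config = Dict[str, bool]
Predicate = Callable[[Config], bool]


def sample_configs(options: List[str], k: int, seed: int = 0) -> List[Config]:
    """Two corner cases plus k seeded random assignments (reproducible)."""
    rng = random.Random(seed)
    corners = [{name: False for name in options},
               {name: True for name in options}]
    randoms = [{name: rng.random() < 0.5 for name in options}
               for _ in range(k)]
    return corners + randoms


def disagreements(configs: List[Config],
                  formula: Predicate,
                  oracle: Predicate) -> List[Config]:
    """Configurations from the sample on which formula and oracle differ."""
    return [c for c in configs if formula(c) != oracle(c)]


# 40 options give 2**40 configurations, far too many to enumerate.
options = [f"OPT{i}" for i in range(40)]


# Hypothetical constraint: OPT1 depends on OPT0.
def oracle(c: Config) -> bool:
    return c["OPT0"] or not c["OPT1"]


def formula(c: Config) -> bool:
    return (not c["OPT1"]) or c["OPT0"]


configs = sample_configs(options, k=200)
failures = disagreements(configs, formula, oracle)
```

A fixed seed keeps the regression suite reproducible. A sample that passes gives weaker assurance than exhaustive enumeration, since a bug that only shows up in unsampled configurations goes unnoticed.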

Beyond the specific case, the author generalizes the methodology: any variational analysis that claims soundness and completeness with respect to a traditional analysis can be validated via differential testing. This includes variational parsers, type checkers, data‑flow analyses, model checkers, and more. The key insight is that the existence of a reference implementation eliminates the need for manually written assertions, allowing developers to generate tests automatically, explore obscure language features, and maintain a robust regression suite throughout the evolution of the underlying system (in this case, the Linux kernel’s kconfig language).

In conclusion, the paper advocates for broader adoption of differential testing in the variational‑analysis community, arguing that it dramatically simplifies quality assurance, encourages test‑driven development, and leads to more reliable analysis tools. The author’s experience with KConfigReader serves as concrete evidence that differential testing can turn a traditionally hard‑to‑test domain into one where bugs are discovered early, regressions are caught automatically, and the final tool attains a level of precision that would be difficult to achieve otherwise.
