Distributed Coordinate Descent for Generalized Linear Models with Regularization

Generalized linear model with $L_1$ and $L_2$ regularization is a widely used technique for solving classification, class probability estimation and regression problems. With the numbers of both features and examples growing rapidly in the fields lik…

Authors: Ilya Trofimov, Alex, er Genkin

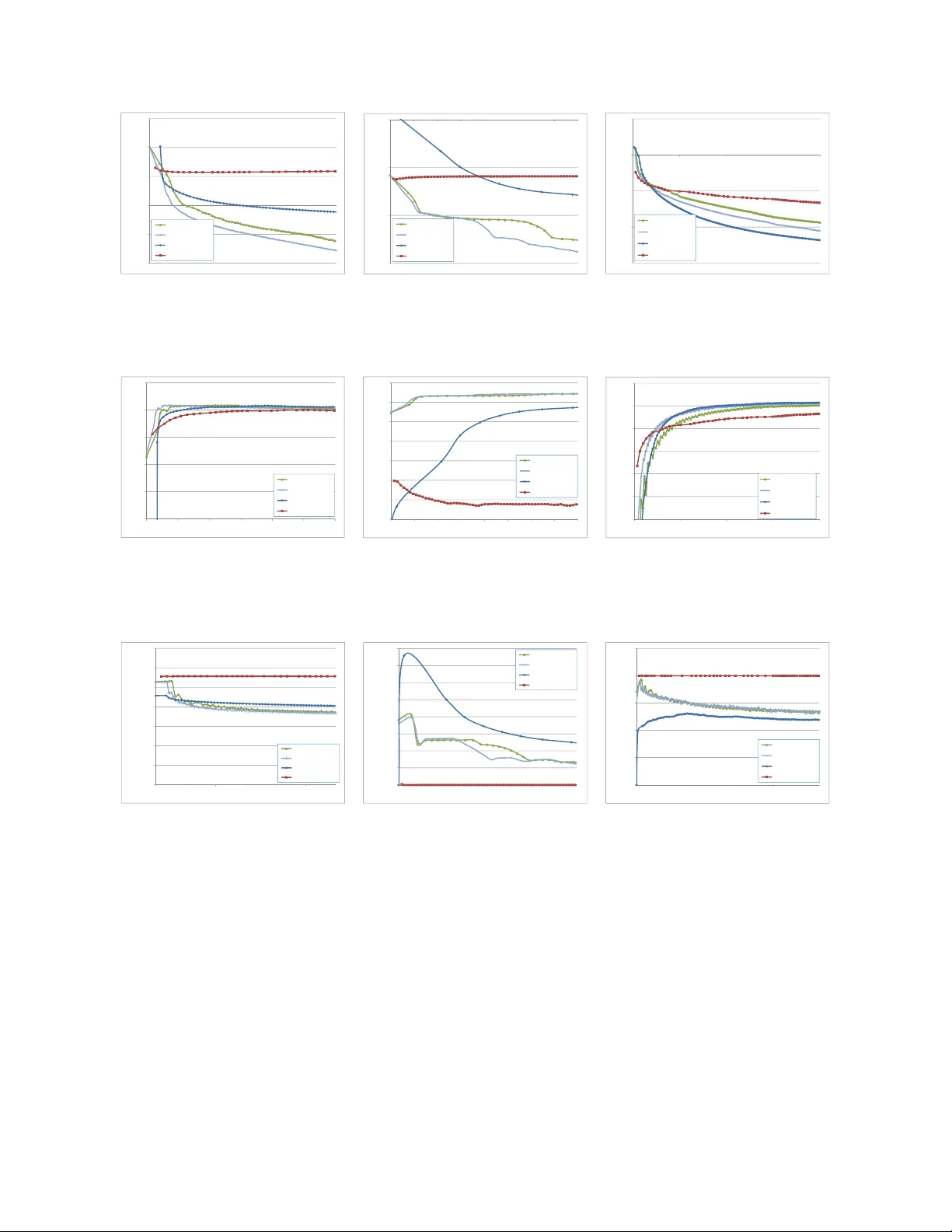

Distributed Co ordinate Descen t for Generalized Linear Mo dels with Regularization Ily a T rofimo v 1 , * and Alexander Genkin 2 , ** 1 Y andex 2 NYU L angone Me dic a l Center Generalized linear mo del with L 1 and L 2 regularization is a widely used tec hnique for solving classification, class probabilit y estimation and regression problems. With the n umbers of b oth features and examples gro wing rapidly in the fields like text min- ing and clic kstream data analysis parallelization and the use of cluster architectures b ecomes imp ortan t. W e present a nov el algorithm for fitting regularized generalized linear mo dels in the distributed environmen t. The algorithm splits data b et ween no des b y features, uses coordinate descen t on eac h no de and line search to merge results globally . Conv ergence proof is pro vided. A mo difications of the algorithm ad- dresses slo w no de problem. F or an imp ortan t particular case of logistic regression w e empirically compare our program with sev eral state-of-the art approaches that rely on differen t algorithmic and data spitting metho ds. Exp erimen ts demonstrate that our approac h is scalable and sup erior when training on large and sparse datasets. Keyw ords : large-scale learning · generalized linear mo del · regularization · sparsit y 1. INTR ODUCTION Generalized linear mo del (GLM) with regularization is the metho d of choice for solving classification, class probabilit y estimation and regression problems in text classification [1], clic kstream data analysis [2], web data mining [3] and compressed sensing [4]. Despite the fact that GLM can build only linear separating surfaces and regressions, with prop er regularization it can achiev e go o d testing accuracy for high dimensional input spaces. F or sev eral problems the testing accuracy has shown to be close to that of nonlinear k ernel * Electronic address: trofim@yandex- team.ru ** Electronic address: alexander.genkin@gmail.com 2 metho ds [5]. A t the same time training and testing of linear predictors is m uch faster. It mak es the GLM a go od choice for large-scale problems. Cho osing the righ t regularizer is problem dep endent. L 2 -regularization is known to shrink co efficien ts to wards zero leaving correlated ones in the mo del. L 1 -regularization leads to a sparse solution and typically selects only one co efficien t from a group of correlated ones. Elastic net regularizer is a linear com bination of L 1 and L 2 . It allows to select a trade-off b et w een them. Other regularizers are less often used: group lasso [6, 7] which includ es and excludes v ariables in groups, non-smo oth bridge [8] and non-con vex SCAD [9] regularizers. Fitting a statistical mo del on a large dataset is time consuming and requires a careful c ho osing of an optimization algorithm. Not all metho ds working on a small scale can b e used for large scale problems. A t present time algorithms dedicated for optimization on a single mac hine are well developed. Fitting commonly used GLMs with L 2 regularization is equiv alen t to minimization of a smo oth con v ex function. On the large scale this problem is typically solv ed by the conjugate gradien t method, “Limited memory BF GS” (L-BF GS) [10, 31], TRON [36]. Coordinate descen t algorithms w orks well in primal [1] and dual [11]. Also this problem can b e effectively solv ed b y v arious online learning algorithms [2, 12]. Using L 1 regularization is harder b ecause it requires to optimize a con vex but non-smo oth function. A broad survey [3] suggests that co ordinate descen t metho ds are the b est choice for L 1 -regularized logistic regression on the large scale. Widely used algorithms that fall in to this family are: BBR [1], GLMNET [13], newGLMNET [14]. Co ordinate descen t metho ds also work w ell for large-scale high dimensional LASSO [8]. Softw are implemen tations of these metho ds start with loading the full training dataset into RAM, which limits the p ossibilit y to scale up. Completely different approach is online learning [2, 15–17]. This kind of algorithms do not require to load training dataset in to RAM and can access it sequentially (i.e. reading from disk). Balakrishnan and Madighan [15], Langford et al. [16] rep ort that online learning p erforms well when compared to batch counterparts (BBR and LASSO). No wada ys w e see the growing num b er of problems where b oth the num b er of examples and the num b er of features are very large. Many problems gro w b ey ond the capabilities of a single mac hine and need to b e handled b y distributed systems. Distributed machine learning is now an area of active researc h. Efficien t computational arc hitectures and optimizations 3 tec hniques allo w to find more precise solutions, pro cess larger training dataset (without subsampling), and reduce the computational burden. Approac hes to distributed training of GLMs naturally fall into t w o groups by the wa y they split data across computing no des: by examples [18] or b y features [19]. W e b eliev e that algorithms that split data b y features can ac hiev e better p erformance and faster training sp eed than those that split b y examples. Our experiments so far confirm that b elief. When splitting data b y examples, online learning comes in handy . A mo del is trained in online fashion on eac h subset, then parameters of are a v eraged and used as a w armstart for the next iteration, and so on [18, 20]. The L-BF GS and conjugate gradient metho ds can b e easily implemented for example-wise splitting [18]. The log-lik eliho od and its gradien t are separable ov er examples. Th us they can b e calculated in parallel on parts of training set and then summed up. P arallel blo c k-co ordinate descen t is a natural algorithmic framework if w e c ho ose to split b y features. The challenge here is ho w to combine steps from coordinate blo c ks, or computing no des, and how to organize comm unication. When features are indep enden t, parallel updates can b e com bined straigh tforwardly , otherwise they may come in to conflict and not yield enough impro vemen t to ob jective; this has b een clearly illustrated in [4]. Bradley et al. [4] prop osed Shotgun algorithm based on randomized co ordinate descent. They studied how man y v ariables can b e up dated in parallel to guaran tee conv ergence. Ho et al. [21] presented distributed implementation of this algorithm compatible with State Synchronous P arallel P arameter Serv er. Ric ht´ arik and T ak´ aˇ c [22] use randomized blo ck-coordinate descen t and also exploit partial separabilit y of the ob jective. The latter relies on sparsity in data, whic h is indeed characteristic to many large scale problems. They present theoretical estimates of sp eed-up factor of parallelization. P eng et al. [19] prop osed a greedy blo ck-coordinate descen t metho d, which selects the next co ordinate to up date based on the estimate of the exp ected improv ement in the ob jectiv e. They found their GRo c k algorithm to b e sup erior o ver parallel FIST A [33] and ADMM [29]. Smith et al. [34] in tro duce aggregation parameter, whic h con trols the level of adding v ersus a veraging of partial solutions of all mac hines. Meier et al. [7] used blo c k-co ordinate descen t for fitting logistic regression with the group lasso p enalt y . They use a diagonal appro ximation of a Hessian and make steps o ver blocks of v ariables in a group follo wed by a line search to ensure con vergence. All groups are pro cessed sequentially; parallel version of the algorithm is not studied there, though the 4 authors men tion this p ossibilit y . In contrast, our approach is to mak e parallel steps on all blo c ks, then use combined up date as a direction and p erform a line search. W e show that sufficient data for the line search ha ve the size O ( n ) , where n is the num b er of training examples, so it can b e p erformed on one machine. Consequently , that’s the amoun t of data sufficient for communication b et ween mac hines. Overall, our algorithm fits in to the framew ork of CGD metho d prop osed b y T seng and Y un [23], whic h allo ws us to prov e con vergence. Our main contributions are the follo wing: • W e prop ose a new parallel co ordinate descen t algorithm for L 1 and L 2 regularized GLMs (Section 3) and pro ve its conv ergence for linear, logistic and probit regression (Section 5) • W e demonstrate ho w to guaran tee sparsity of the solution b y means of trust-region up dates (Section 4) • W e develop a computationally efficient softw are architecture for fitting GLMs with regularizers in the distributed settings (Section 6) • W e sho w ho w our algorithm can b e mo dified to solv e the “slo w no de problem” which is common in distributed mac hine learning (Section 7) • W e empirically show effectiveness of our algorithm and its implementation in compar- ison with several state-of-the art metho ds for the particular case of logistic regression (Section 8) The C++ implemen tation of our algorithm, whic h we call d-GLMNET , is publicly av ailable at https://github.com/IlyaTrofimov/dlr . 2. PR OBLEM SETTING T raining linear classification and regression leads to the optimization problem β ∗ = argmin β ∈ R p f ( β ) , (1) f ( β ) = L ( β ) + R ( β ) . (2) 5 Where L ( β ) = P n i =1 ` ( y i , β T x i ) is the negated log-lik eliho o d and R ( β ) is a regularizer. Here y i are targets, x i ∈ R p are input features, β ∈ R p is the unknown vector of weigh ts for input features. W e will denote b y nnz the num b er of non-zero en tries in all x i . The function ` ( y , ˆ y ) is a example-wise loss whic h w e assume to b e conv ex and t wice differentiable. Many statistical problems can b e expressed in this form: logistic, probit, Poisson regression, linear regression with squared loss, etc. Some penalty R ( β ) is often added to a void ov erfitting and n umerical ill-conditioning. In this w ork we consider the elastic net regularizer R ( β ) = λ 1 k β k 1 + λ 2 2 k β k 2 . W e solve the optimization problem (1) b y means of a blo c k co ordinate descen t algorithm. The first part of the ob jective - L ( β ) is con vex and smo oth. The second part is a regular- ization term R ( β ) , which is con vex but non-smo oth when λ 1 > 0 . Hence one cannot use directly efficient optimization tec hniques like conjugate gradien t metho d or L-BFGS which are often used for logistic regression with L 2 -regularization. Our algorithm is based on building lo cal appro ximations to the ob jective (2). A smo oth part L ( β ) of the ob jective has quadratic approximation n X i =1 ` ( y i , ( β + ∆ β ) T x i ) ≈ L q ( β , ∆ β ) = n X i =1 ` ( y i , β T x i ) + ∂ ` ( y i , β T x i ) ∂ ˆ y ∆ β T x i + 1 2 (∆ β T x i ) ∂ 2 ` ( y i , β T x i ) ∂ ˆ y 2 (∆ β T x i ) = C ( β ) + 1 2 n X i =1 w i ( z i − ∆ β T x i ) 2 . (3) w i = ∂ 2 ` ( y i , β T x i ) ∂ ˆ y 2 , z i = − ∂ ` ( y i , β T x i ) /∂ ˆ y ∂ 2 ` ( y i , β T x i ) /∂ ˆ y 2 and C ( β ) do esn’t dep end on ∆ β C ( β ) = L ( β ) − 1 2 n X i =1 z 2 i w i . The core idea of GLMNET and newGLMNET is iterativ e minimization of the p enalized quadratic appro ximation to the ob jectiv e argmin ∆ β { L q ( β , ∆ β ) + R ( β + ∆ β ) } (4) 6 via cyclic co ordinate descen t. This form (3) of approximation allows to mak e Newton up dates of the vector β without storing the Hessian explicitly . The approximation (4) has a simple closed-form solution with resp ect to a single v ariable ∆ β j ∆ β ∗ j = T ( P n i =1 w i x ij q i , λ 1 ) P n i =1 w i x 2 ij + λ 2 − β j , (5) T ( x, a ) = sgn( x ) max( | x | − a, 0) , q i = z i − ∆ β T x i + ( β j + ∆ β j ) x ij . 3. P ARALLEL COORDINA TE DESCENT In this work w e introduce a nov el architecture for a parallel co ordinate descent in a dis- tributed settings (multiple computational no des). The natural wa y to do it is to split training data set by features (“v ertical” splitting). W e will denote ob jects related to different com- putational nodes b y upp er indexes and use low er indexes for example and feature n umbers. More formally: let us split p input features into M disjoin t sets S k M [ k =1 S k = { 1 , ..., p } , S m ∩ S k = ∅ , k 6 = m. Our approac h is to optimize the quadratic approximation (4) in parallel o v er blo c ks of w eigh ts ∆ β m . The following prop osition explains ho w this idea mo difies the original GLMNET algorithm. Prop osition 1. Optimizing the quadr atic appr oximation (4) in p ar al lel over blo cks of weights ∆ β m is e quivalent to optimizing the quadr atic appr oximation to the obje ctive argmin ∆ β L ( β ) + ∇ L ( β ) T ∆ β + 1 2 ∆ β T e H ( β )∆ β + R ( β + ∆ β ) (6) with blo ck-diagonal e H ( β ) appr oximation of the Hessian ( e H ( β )) j l = ( ∇ 2 L ( β )) j l , if ∃ m : j, l ∈ S m , 0, otherwise. (7) 7 Pr o of. Let ∆ β = P M m =1 ∆ β m , where ∆ β m j = 0 if j / ∈ S m . Then L q ( β , ∆ β m ) = L ( β ) + ∇ L ( β ) T ∆ β m + 1 2 ∆( β m ) T ∇ 2 L ( β )∆ β m = L ( β ) + ∇ L ( β ) T ∆ β m + 1 2 X j,k ∈ S m ( ∇ 2 L ( β )) j k ∆ β m j ∆ β m k . By summing this equation o v er m M X m =1 L q ( β , ∆ β m ) = M X m =1 L ( β ) + ∇ L ( β ) T ∆ β m + 1 2 X j,k ∈ S m ( ∇ 2 L ( β )) j k ∆ β m j ∆ β m k ! = M L ( β ) + ∇ L ( β ) T ∆ β + 1 2 ∆ β T e H ( β )∆ β . (8) F rom the equation (8) and separabilit y of the L 1 and L 2 p enalties it follows that solving the problem in the equation (6) is equiv alent to solving M indep enden t sub-problems argmin ∆ β m ( L q ( β , ∆ β m ) + X j ∈ S m R ( β j + ∆ β m j ) ∆ β m j = 0 if j / ∈ S m ) , m = 1 . . . M (9) and can b e done in parallel o v er M nodes. Doing parallel up dates ov er blocks of w eights is the core of the prop osed d-GLMNET algo- rithm. Also it is p ossible to minimize a more general appro ximation L g en q ( β , ∆ β ) def = L ( β ) + ∇ L ( β ) T ∆ β + 1 2 ∆ β T ( µ ( e H ( β ) + ν I ))∆ β , argmin ∆ β L g en q ( β , ∆ β ) + R ( β + ∆ β ) , (10) where µ ≥ 1 , ν > 0 , without storing the Hessian explicitly . The one-dimensional up date rule mo difies accordingly ∆ β ∗ j = T ( P n i =1 w i x ij r i + ν β j , λ 1 ) µ P n i =1 w i x 2 ij + λ 2 + ν − β j , (11) r i = z i − µ ∆ β T x i + µ ( β j + ∆ β j ) x ij . Applying µ > 1 impro ves sparsit y of the solution in case of L 1 regularization (see Section 4). A ddition of ν I guarantees that matrix is positive definite, whic h is essential for con vergence (see Section 5). W e describe a high-lev el structure of d-GLMNET in the Algorithm 1. Algorithm 2 presen ts our approach for minimization of the lo cal appro ximation (10) with resp ect to ∆ β m . d-GLMNET mak es one cycle of co ordinate descen t ov er input features, while 8 Algorithm 1: Ov erall pro cedure of d-GLMNET. Input : training dataset, λ 1 , λ 2 , feature splitting S 1 , . . . , S M , η 1 ≥ 1 , η 2 ≥ 1 . 1 β ← 0 . 2 µ ← 1 . 3 while not c onver ge d do 4 Do in parallel o ver M no des: 5 Minimize L g en q ( β , ∆ β m ) + R ( β + ∆ β m ) with resp ect to ∆ β m (Algorithm 2). 6 ∆ β ← P M m =1 ∆ β m . 7 Find α ∈ (0 , 1] by the line search pro cedure (Algorithm 3). 8 β ← β + α ∆ β . 9 if α < 1 then 10 µ ← η 1 µ . 11 else 12 µ ← max(1 , µ/η 2 ) . Return: β . Algorithm 2: Solving quadratic sub-problem at node m . 1 ∆ β m ← 0 . 2 foreac h j ∈ S m do 3 Minimize L g en q ( β , ∆ β m ) + R ( β + ∆ β m ) with resp ect to ∆ β m j using (11). Return: ∆ β m . GLMNET and newGLMNET use multiple passes; we found that our approach w orks w ell in practice. Lik e in other Newton-like algorithms a line searc h should b e done to guarantee con ver- gence. Algorithm 3 describ es our line searc h pro cedure. W e found that selecting α init b y minimizing the ob jective (2) (step 4, Algorithm 3) sp eeds up the conv ergence of the Algo- rithm 1. W e used b = 0 . 5 , σ = 0 . 01 , γ = 0 in line searc h procedure for n umerical exp eriments (Section 8). 9 Algorithm 3: Line searc h pro cedure. Data: δ > 0 , 0 < b < 1 , 0 < σ < 1 , 0 ≤ γ < 1 . 1 if α = 1 yields sufficient de cr e ase in the obje ctive (12) then 2 α ← 1 . 3 else 4 Find α init = argmin δ <α ≤ 1 f ( β + α ∆ β ) . 5 Armijo rule: let α b e the largest elemen t of the sequence { α init b j } j =0 , 1 ,... satisfying f ( β + α ∆ β ) ≤ f ( β ) + ασ D , (12) D = ∇ L ( β ) T ∆ β + γ ∆ β T ( µ ( e H ( β ) + ν I ))∆ β + R ( β + ∆ β ) − R ( β ) . 6 end Return: α . 4. ENSURING SP ARSITY Applying µ > 1 is required for providing sparse solution in case of L 1 regularization. Sparsit y ma y suffer from the line searc h. Algorithm 1 starts with β = 0 , so absolute v alues of β tend to increase. Ho wev er there may b e cases when ∆ β j = − β j for some j on step 5 of Algorithm 1, so β j can go bac k to 0 . In that case, if line searc h on step 7 selects α < 1 , then the opp ortunit y for sparsity is lost. Parallel steps ov er blo cks of weigh ts ∆ β m come in conflict and for some datasets Algorithm 3 selects α < 1 almost alwa ys. T o guarantee sparsit y of the solution an algorithm m ust select step size α = 1 often enough. In App endix A we prov e that when µ ≥ Λ max (1 − σ ) λ min the line search is not required at all. Here Λ max , λ min are maximal and minimal eigenv alues of H ( β ) and e H ( β ) resp ectiv ely . Ho wev er it is hard to compute Λ max , λ min for an arbitrary dataset. Also µ ≥ Λ max (1 − σ ) λ min ma y yield very small steps and slow con vergence. F or his reason in d-GLMNET the µ parameter is c hanged adaptiv ely , see Algorithm 1. In n umerical experiments we used η 1 = η 2 = 2 . Note that line search alwa ys yields α = 1 when µ ≥ Λ max (1 − σ ) λ min , th us adaptive algorithm preserv es 1 ≤ µ < η 1 Λ max (1 − σ ) λ min . Alternativ ely problem (10) with µ > 1 can b e in terpreted as minimization of the La- 10 grangian for the constrained optimization problem argmin ∆ β L ( β ) + ∇ L ( β ) T ∆ β + 1 2 ∆ β T ( e H ( β ) + ν I )∆ β + R ( β + ∆ β ) sub ject to: ∆ β T ( e H ( β ) + ν I )∆ β ≤ r , with Lagrange m ultiplier µ − 1 . Steps are constrained to the iteration sp ecific trust region radius r . 5. CONVER GENCE Algorithm d-GLMNET falls in to the general framework of blo c k-co ordinate gradien t descen t (CGD) prop osed by T seng and Y un [23], whic h w e briefly describ e here. CGD is ab out minimization of a sum of a smo oth function and separable con vex function (2); in our case, negated log-likelihoo d and elastic net p enalty . At eac h iteration CGD solves p enalized quadratic appro ximation problem argmin ∆ β L ( β ) + ∇ L ( β ) T ∆ β + 1 2 ∆ β T H ∆ β + R ( β + ∆ β ) , (13) where H is some p ositiv e definite matrix, p ossibly iteration sp ecific. F or conv ergence it also requires that for some e min , e max > 0 for all iterations e min I H e max I . (14) A t eac h iteration updates are done o ver some subset of weigh ts. After that a line searc h b y the Armijo rule should b e conducted. If all weigh ts are up dated every T ≥ 1 consecutiv e iterations then CGD conv erges globally . T seng and Y un [23] pro ve also that if L ( β ) is strictly con vex and weigh ts are up dated by a prop er schedule (particularly by updating all weigh ts at each iteration, whic h is our case) then f ( β ) con verges as least Q-linearly and β con verges at least R-linearly , Firstly , let us show for which loss functions (14) holds. F rom loss function conv exit y follo ws 0 ∇ 2 L ( β ) . If the second deriv ative of loss function is b ounded ∂ 2 ` ( y , ˆ y ) ∂ ˆ y 2 < M , (15) then a T ∇ 2 L ( β ) a = n X i =1 ( a T x i ) ∂ 2 ` ( y i , β T x i ) ∂ ˆ y 2 ( a T x i ) ≤ k a k 2 n X i =1 k x i k 2 M , 11 and w e conclude that for some Λ min , Λ max > 0 Λ min I ∇ 2 L ( β ) + ν I Λ max I . (16) The assumption (15) holds for logistic and probit regressions, and for linear regression with squared loss, see App endix B. Secondly , let us prov e (14) for a blo c k-diagonal approximation H = µ ( e H ( β ) + ν I ) , where e H ( β ) is defined in (7). Denote its diagonal blo c ks by H 1 , ..., H M and represen t an arbitrary v ector a as a concatenation of sub v ectors of corresp onding size: a = (( a 1 ) T , ..., ( a M ) T ) T . Then w e hav e a T H a = M X m =1 ( a m ) T H m a m . Notice that H m = µ ( ∇ 2 L ( β m ) + ν I ) , where ∇ 2 L ( β m ) is a Hessian ov er the subset of features S m . So for each ( ∇ 2 L ( β m ) + ν I ) w e hav e Λ m min I ∇ 2 L ( β m ) + ν I Λ m max I , for m = 1 , . . . , M . That means µ Λ m min k x m k 2 ≤ ( a m ) T H m a m ≤ µ Λ m max k a m k 2 , for m = 1 , . . . , M . (17) Let λ min = min m =1 , ... ,M Λ m min , λ max = max m =1 , ... ,M Λ m max . Assume 1 ≤ µ ≤ µ max , whic h holds for constant or adaptiv ely changing µ (Section 4), and b y summing (17) up o ver m w e obtain the required λ min k a k 2 ≤ a T H a ≤ µ max λ max k a k 2 . 6. AR CHITECTURE F OR DISTRIBUTED TRAINING In this section we describ e details or softw are implementation of parallel co ordinate de- scen t. This implementation w orks in a distributed settings (m ultiple computational no des). When parallel co ordinate descen t is concerned the natural w ay is to split training data set by features (“vertical” splitting). Splitting of input features leads to splitting the matrix X of features in to M parts X m . The node m stores the part X m of training dataset corresp onding to a subset S m of input features. Let β = (( β 1 ) T , . . . , ( β M ) T ) T . 12 Algorithm 4: Distributed coordinate descent. Input : training dataset, λ 1 , λ 2 , feature splitting S 1 , . . . , S M . 1 while not c onver ge d do 2 Do in parallel o ver M no des: 3 Read part of training dataset X m sequen tially . 4 Find up dates ∆ β m and X m ∆ β m for w eights in P m ⊆ S m . 5 Sum up v ectors X m ∆ β m using MPI_AllReduce: 6 X ∆ β ← P M m =1 X m ∆ β m . 7 Find step size α using line searc h (Algorithm 3). 8 β m ← β m + α ∆ β m . 9 X β ← X β + αX ∆ β . Return: β . Algorithm 4 presen ts a high-lev el structure of our approac h. Eac h no de mak es a step ∆ β m o ver its blo ck of v ariables S m . Then all these steps are summed up and multiplied by a prop er step size m ultiplier. Algorithm 4 has sev eral k ey features: 1. The weigh ts vector β is stored in the distributed manner across all no des; a no de m stores β m . 2. The program stores in RAM only vectors y , X β 1 , X ∆ β , β m , ∆ β m . Th us the memory fo otprin t at node m is 3 n + 2 | S m | . 3. The program maintains vector X β synchronized across all no des after eac h iteration. Sync hronization is done by means of summation X m ∆ β m on step 6. The total com- m unication cost is M n . 4. At eac h iteration a subset P m ⊆ S m of w eigh ts is up dated. In Section 7 w e describ e t wo subset selection strategies. 1 F or a particular case of logistic regression one can store v ector exp( X β ) instead of X β to sp eed-up computations. 13 5. V arious types of co ordinate-wise up dates can b e implemen ted on step 4. Our up date is describ ed in Section 3. This is done b y one pass ov er the training dataset part X m . 6. The program reads training dataset sequentially from disk instead of RAM. It may slo w down the program in case of smaller datasets, but it makes the program more scalable. Also it conforms to the typical pattern of a m ulti-user Map/Reduce cluster system: large disks, many jobs started b y differen t users are running simultaneously . Eac h job might pro cess large data but it is allow ed to use only a small part of RAM at eac h no de. 7. Doing a linear searc h on step 7 requires calculating the log-lik eliho o d L ( β + α ∆ β ) and R ( β + α ∆ β ) for arbitrary α ∈ (0 , 1] . Since the v ector X β is synchronized b et ween no des, the log-likelihoo d can b e easily calculated. Eac h no de calculates the regularizer R ( β m ) separately and then the v alues are summed up via MPI_AllReduce 2 . This could be done for separable regularizers lik e L 1 , L 2 , group lasso, SCAD, e.t.c. 8. W e use Single Program Multiple Data (SPMD) programming st yle, i.e., all computa- tional no des execute the same co de and there’s no selected master. Some op erations (lik e linear searc h on step 7) are redundan tly executed on all no des. T ypically most datasets are stored in “by example” form, so a transformation to “b y fea- ture” form is required for distributed co ordinate descen t. F or large datasets this op eration is hard to do on a single no de. W e use a Map/Reduce cluster [24] for this purpose. Partitioning of the training dataset o ver no des is done b y means of a Reduce op eration in the streaming mo de. W e did not implement parallel co ordinate descen t completely in the Map/Reduce programming mo del since it is ill-suited for iterative mac hine learning algorithms [18, 25]. Using other programming mo dels like Spark [26] looks promising. 7. AD APTIVE SELECTION OF SUBSET TO UPD A TE Algorithm 4 is flexible in selecting the subset of w eights P m ⊆ S m , which are up dated on step 4. The simplest strategy is to up date alwa ys all weigh ts P m = S m . In this case 2 W e used an implemen tation from the V owpal W abbit pro ject https : //g ithub.com/J ohnLang f or d/v owpal _ wabbit 14 the algorithm becomes deterministic; each no de p erforms predefined computation b efore sync hronization. This is a case of a Bulk Sync hronous P arallel (BSP) programming mo del. Programs based on BSP mo del share a common w eak p oin t: the whole program p erformance is limited b y the slow est work er b ecause of the sync hronization step. Performance c haracter- istics of no des in a cluster may b e different due to several reasons: comp etition for resources with other tasks, different hardware, bugs in optimization settings e.t.c [24]. In context of distributed data pro cessing this problem is kno wn as a “slow no de problem”. In Map/Reduce clusters the slow no de problem is t ypically solved by “bac kup tasks” [24], when the scheduler starts copies of the slow est task in a job on alternative no des. The completion of any copy is sufficien t for the whole job completion. Ho wev er this mechanism isn’t applicable for algorithms maintaining state, whic h is our case: algorithm d-GLMNET main tains state as vectors X β , β m . Man y mac hine learning systems try to ov ercome this w eak p oin t by moving to asyn- c hronous computations. Ho et al. [21] dev elop a Stale Sync hronous P arallel P arameter Serv er (SSPPS), which allo ws the fastest and the slow est no de to hav e some gap not exceed- ing a fixed num b er of iterations. The F ugue system [27] allows fast no des to mak e extra optimization on its subset of the training dataset while w aiting for slow ones. The Y!LD A [28] architecture for learning topic models on the large scale keeps global state in a parameter serv er, and eac h no de updates it async hronously . Our program resolv es “slo w no de” problem b y selecting the subset P m adaptiv ely . The program has an additional thread c hecking ho w many no des hav e already done up date o ver all w eigh ts in S m (Algorithm 4, step 4). If the fraction of suc h no des is greater then κM with some 0 < κ < 1 , then all no des break the optimization and proceed to sync hronization (Algorithm 4, step 6). Up dates of w eights in S m are don e cyclically , so on the next iteration a no de resumes optimization starting from the next weigh t in S m . F ast no des are allow ed to mak e more then one cycle of optimization, i.e. make t wo or more up dates of eac h w eight. W e used κ = 0 . 75 in all n umerical exp erimen ts. W e call this mec hanism “Asynchronous Load Balancing” (ALB) and the algorithm mo di- fied in this w ay - d-GLMNET-ALB . A p ossible drawbac k of this mec hanism is that a very slow no de ma y not be able to up date every w eigh t even after many iterations. How ever, w e hav e nev er observ ed suc h an extreme situation in practice. d-GLMNET-ALB algorithm con v erges globally , just lik e d-GLMNET . How ever, linear conv er- 15 gence cannot b e established b ecause d-GLMNET-ALB up dates blo cks of weigh ts in an non- deterministic order, so it doesn’t fit sc hedule requiremen ts sp ecified in [23]. 8. NUMERICAL EXPERIMENTS In this section w e ev aluate the p erformance of d-GLMNET for an important particular case: logistic regression with L 1 and L 2 regularization. Firstly , w e demonstrate the effect of adaptiv e µ in the Hessian appro ximation. This strat- egy was used only for exp eriments with L 1 regularization. With L 2 regularization we used constan t µ = 1 . Secondly , we p erform comparison with three state-of-the-art approaches: ADMM, distributed “online learning via truncated gradient” (for L 1 regularization), com- bination of distributed online learning with L-BF GS (for L 2 regularization). W e briefly describ e these approac hes b elo w. F or L 1 regularization, the sparsit y of the solution is an- other matter of interest. W e also ev aluated the eff ect of the “Async hronous Load Balancing” (ALB) tec hnique. Thirdly , w e show how the p erformance of d-GLMNET-ALB impro ves with the increase of n umber of computing no des. 8.1. Comp eting algorithms The first approac h for comparison is an adaptation of ADMM for L 1 -regularized logistic regression. W e implemented the algorithm from [29, sections 8.3.1, 8.3.3] 3 . It uses a sharing tec hnique [29, section 7.3] to distribute computations among no des. The sharing tec hnique requires dataset b eing split by features. Like our algorithm, it stores weigh ts β in a dis- tributed manner. W e used MPI_AllReduce to sum up Ax k and implemented a lo okup-table prop osed in [29, section 8.3.3] to sp eed up z − up date. Doing an x − up date inv olves solving a large scale LASSO. W e used a Sho oting [8] to do it since it is well suited for large and sparse datasets. Shooting algorithm is based on co ordinate descent. That is wh y this mo dification of ADMM can b e viewed as another wa y to do distributed co ordinate descent. F or each dataset we selected a parameter ρ ∈ [4 − 3 , . . . , 4 3 ] yielding best ob jective after 10 iterations and used it for final p erformance ev aluation. 3 The update rule for ¯ z k in [29, section 8.3.3] has an error. Instead of ( ρ/ 2) should b e ( ρN / 2) . The ADMM algorithm p erformed po orly b efore w e fixed it. 16 T able 1. Datasets summary . dataset size #examples (train/test/v alidation) #features nnz a vg nonzeros epsilon 12 Gb 0 . 4 × 10 6 / 0 . 05 × 10 6 / 0 . 05 × 10 6 2000 8 . 0 × 10 8 2000 w ebspam 21 Gb 0 . 315 × 10 6 / 0 . 0175 × 10 6 / 0 . 0175 × 10 6 16 . 6 × 10 6 1 . 2 × 10 9 3727 y andex_ad 56 Gb 57 × 10 6 / 2 . 35 × 10 6 / 2 . 35 × 10 6 35 × 10 6 5 . 7 × 10 9 100 A combination of distributed online learning with L-BFGS was presen ted in [18]. An Algorithm 2 from [18] describ es a whole combined approac h. The first part of it prop oses to compute a w eighted av erage of classifiers trained at M no des indep enden tly via online learning. The second part warmstarts L-BF GS with the result of the first part. This com bi- nation has fast initial conv ergence (due to online learning) and fast lo cal conv ergence (due to quasi-Newtonian up dates of L-BFGS). As we p oin ted out earlier, L-BFGS it not applicable for solving logistic regression with L 1 -regularization. Th us for exp eriments with L 1 regularization w e used only distributed online learning, namely “online learning via truncated gradien t” [16] . F or exp erimen ts with L 2 regularization w e ran full Algorithm 2 from [18]. Both of these algorithms require training dataset partitioning by examples o ver M no des. W e used the online learning and L-BF GS implementation from the op en source Vowpal Wabbit (VW) pro ject 4 . W e didn’t use feature hashing since it may decrease the qualit y of the classifier. As far as hyperparameters for online learning are concerned, we tested join tly learning rates (raging from 0 . 1 to 0 . 5 ) and p o wers of learning rate decay (raging from 0.5 to 0.9). Then we selected the b est com bination for eac h dataset (yielding the b est ob jectiv e) and used it for further tests. W e w ould like to note that the d-GLMNET and d-GLMNET-ALB don’t ha ve an y hyperparam- eters (except a regularization coefficient) and they are easier for practical usage. 8.2. Datasets and exp erimen tal settings W e used three datasets for numerical exp erimen ts: • epsilon - Syn thetic dataset. 4 https://github.com/JohnLangford/vowpal_wabbit , v ersion 7.5 17 T able 2. Computational load of the algorithms. Algorithm Iteration Complexit y Memory F o otprint Communication Cost Online learning via truncated gradien t O ( nnz ) 2 M p 2 M p L-BF GS O ( nnz ) 2 r M p a M p d-GLMNET O ( nnz ) 3 M n + 2 p M n ADMM O ( nnz ) 5 M n + p M n a The r parameter sp ecifies memory usage in L-BFGS. W e used default v alue r = 15 . • webspam - W ebspam classification problem. • yandex_ad - The clic k prediction problem - the goal is to predict the probability of click on the ad. This is a non-public dataset created from th e user logs of the commercial searc h engine (Y andex). T w o of these sets - “epsilon” and “webspam” are publicly a v ailable from the Pascal Large Scale Learning Challenge 2008 5 . W e randomly split the original test sets into new test and v alidation sets. The datasets are summarized in the T able 1. Numerical exp erimen ts w ere carried out at cluster of multicore blade servers having Intel(R) Xeon(R) CPU E5-2660 2.20GHz, 32 GB RAM, connected by Gigabit Ethernet. W e used 16 no des of a cluster in all numerical exp erimen ts. Each no de ran one instance of the algorithms. W e used Map/Reduce cluster to partition dataset b y features o v er nodes. This was done b y a Reduce op eration in streaming mo de using feature num b er as a key . Since Reduce op eration assigns partitions to nodes b y a hash of a k ey , the splitting of input features S 1 , . . . , S M w as pseudo-random. All algorithms requiring dataset partitioning b y feature used the same partitioning. T able 2 presents computational load on all no des for eac h of the algorithms. F or eac h dataset we selected L 1 and L 2 regularization coefficients from the range { 2 − 6 , . . . , 2 6 } yielding the b est classification quality on the v alidation set. Then w e ran the 5 http://largescale.ml.tu- berlin.de/ . W e used prepro cessing and train/test splitting from http://www.csie.ntu.edu.tw/~cjlin/libsvmtools/datasets/binary.html . 18 0.0001 0.001 0.01 0.1 1 0 1000 2000 3000 4000 5000 6000 7000 Rel ative Objective Suboptimality Time, sec d ‐ GLMNET , μ = 1 d ‐ GLMNET , adaptive μ (a) relativ e ob jectiv e sub optimalit y vs. time 0.12 0.13 0.14 0.15 0.16 0.17 0.18 0.19 0 1000 2000 3000 4000 5000 6000 700 0 auPRC Time, sec d ‐ GLMNET , μ = 1 d ‐ GLMNET , ada ptive μ (b) testing quality (area under precision-recall curve) vs. time 0.0E+0 5.0E+5 1.0E+6 1.5E+6 2.0E+6 2.5E+6 0 1000 2000 3000 4000 5000 6000 7000 Non ‐ zero weight s count Time, sec d ‐ GLMNET , μ = 1 d ‐ GLMNET , adaptive μ (c) n umber of non-zero w eights vs. time Figure 1. Constan t µ = 1 vs. adaptiv e µ for y andex_ad dataset, L 1 regularization. soft ware implemen tations of all algorithms ( d-GLMNET , ADMM, distributed “online learning via truncated gradient”, L-BFGS) with the b est h yp erparameters on all datasets. T o mak e ev aluation less dep endent on the current situation on the cluster, w e rep eated learning 9 times with eac h algorithm and selected a run with the median execution time. T o study conv ergence profile of each algorithm we recorded the relativ e ob jective sub opti- malit y and testing quality v ersus time. The optimal v alue of the ob jectiv e function f ∗ w as appro ximately ev aluated by running many iterations of liblinear 6 program for “epsilon” and “w ebspam” datasets and d-GLMNET for the biggest “y andex_ad” dataset. Then relative ob jective sub optimality was calculated as ( f − f ∗ ) /f ∗ , where f is the curren t v alue of the ob jective function. W e used area under precision-recall curv e (auPRC) as a testing quality measure (the definition is giv en in App endix C). Finally , we ev aluated the influence of the num b er of computing no des on the sp eed of d-GLMNET-ALB . Fig. 7 and 8 presen t execution times of the algorithm with v arious num b ers of no des relativ e to one no de. Time w as recorded when the algorithm came within 2 . 5% of the optimal ob jectiv e function v alue f ∗ . 8.3. Results and discussion Firstly we sho w how adaptiv ely c hanging µ parameter affects on d-GLMNET algorithm using “y andex_ad” dataset as an example. Fig. 1 compares tw o cases : constan t µ = 1 6 http://www.csie.ntu.edu.tw/~cjlin/liblinear/ 19 0.01 0.1 1 10 100 1000 0 200 400 600 800 1000 1200 Rela tive Ob jective Suboptimality Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (a) w ebspam 0.001 0.01 0.1 1 0 500 1000 1500 2000 2 500 3000 3500 4000 Rela tive Objective Suboptimality Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (b) y andex_ad 0.001 0.01 0.1 1 10 0 500 1000 1500 2000 Rela tive Objective Suboptimality Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (c) epsilon Figure 2. L 1 regularization: relative ob jectiv e sub optimalit y vs. time. 0.995 0.996 0.997 0.998 0.999 1 0 200 400 600 800 1000 1200 auPRC Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (a) w ebspam 0.12 0.13 0.14 0.15 0.16 0.17 0.18 0.19 0 500 1000 1500 2000 2500 3000 3500 4000 auPRC Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (b) y andex_ad 0.93 0.935 0.94 0.945 0.95 0.955 0.96 0 500 1000 1500 2000 auPRC Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (c) epsilon Figure 3. L 1 regularization: testing quality (area under precision-recall curv e) vs. time. 1.0E+0 1.0E+1 1.0E+2 1.0E+3 1.0E+4 1.0E+5 1.0E+6 1.0E+7 0 200 400 600 800 1000 1200 Non ‐ zero weights coun t, log ‐ scaled Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (a) w ebspam 0.0E+0 5.0E+5 1.0E+6 1.5E+6 2.0E+6 2.5E+6 3.0E+6 3.5E+6 4.0E+6 0 1000 2000 3000 4000 Non ‐ zero weights count Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (b) y andex_ad 0 500 1000 1500 2000 2500 0 500 1000 1500 2000 Non ‐ zero weights count Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB ADMM VW (c) epsilon Figure 4. L 1 regularization: num b er of non-zero weigh ts vs. time. and adaptive µ . Adaptiv ely changing µ sligh tly impro ves sp eed of con vergence and testing accuracy but dramatically impro ves the sparsit y . T o ev aluate and compare the sp eed of the algorithms we created scatter plots “Relativ e ob jective sub optimalit y vs. time” (Fig. 2 and 5) and “T esting quality vs. time” (Fig. 3 and 6). With L 1 regularization, Fig. 2, 3 sho ws that d-GLMNET algorithm has the same or faster sp eed of ob jectiv e function optimization and improving testing accuracy on “webspam” and “y andex_ad” datasets then comp eting algorithms. The ADMM algorithm generally p erforms 20 0.001 0.01 0.1 1 10 0 200 400 600 800 1000 1200 1400 Rela tive Ob jective Suboptimality Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB VW (a) w ebspam 0.00001 0.0001 0.001 0.01 0.1 1 0 1000 2000 3000 4000 5000 6000 Relati ve Objective Suboptimality Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB VW (b) y andex_ad 0.0001 0.001 0.01 0.1 1 10 0 200 400 600 800 1000 1200 Relati ve Objective Suboptimality Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB VW (c) epsilon Figure 5. L 2 regularization: relative ob jectiv e sub optimalit y vs. time. 0.995 0.996 0.997 0.998 0.999 1 0 200 400 600 800 1000 1200 1400 auPRC Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB VW (a) w ebspam 0.14 0.15 0.16 0.17 0.18 0.19 0 10 00 2000 3000 4000 5000 6000 auPRC Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB VW (b) y andex_ad 0.93 0.935 0.94 0.945 0.95 0.955 0.96 0 200 400 600 800 1000 1200 auPRC Time, sec d ‐ GLMNET d ‐ GLMNET ‐ ALB VW (c) epsilon Figure 6. L 2 regularization: testing quality (area under precision-recall curv e) vs. time. w ell and it is sligh tly b etter than d-GLMNET and d-GLMNET-ALB on “epsilon” dataset. The Vowpal Wabbit program has the same or w orse testing accuracy for all datasets but it p oorly optimizes the ob jective. Exp erimen ts with L 1 regularization sho wed that “Async hronous Load Balancing” alwa ys impro ved or left the same the p erformance of the d-GLMNET . Also for runs with L 1 -regularization we created a scatter plot “Number of non-zero w eights vs. time” (Fig. 4). The sparsity of solutions b y d-GLMNET is b etter then that of ADMM for “w ebspam” and “y andex_ad” datasets but sligh tly w orse for “epsilon” dataset. Sparsit y pro duced b y Vowpal Wabbit is inconsistent: to o sparse or to o dense when compared to other algorithms. With L 2 regularization, d-GLMNET optimizes the ob jective function faster (Fig. 5) and ac hieves b etter testing accuracy (Fig. 6) on sparse datasets with large num b er of features - “w ebspam” and “y andex_ad”. Ho w ever on dense dataset “epsilon”, where the n um b er of features is relatively small, L-BF GS w armstarted b y online learning is better. Again d-GLMNET-ALB is faster then it’s synchronous counterpart. Ev aluation of the sp eed of the d-GLMNET-ALB algorithm with differen t num b ers of com- 21 (a) w ebspam (b) y andex_ad (c) epsilon Figure 7. L 1 regularization: relative speedup of the d-GLMNET-ALB algorithm for the different n umber of no des: blue line. Linear sp eedup for reference (fictional): red line. (a) w ebspam (b) y andex_ad (c) epsilon Figure 8. L 2 regularization: relative speedup of the d-GLMNET-ALB algorithm for the different n umber of no des: blue line. Linear sp eedup for reference (fictional): red line. puting no des (Fig. 7 and 8) sho ws that on each dataset the sp eedup ac hieved with the increased n um b er of no des is limited. This happ ens b ecause of tw o reasons. First, blo c k- diagonal approximation of the Hessian b ecomes less accurate while splitting dataset o v er larger n umber of no des, up dates from no des comes in conflict more often, so the algorithm mak es smaller steps. Second, comm unication cost increases. 9. CONCLUSIONS AND FUTURE W ORK In this pap er we presen ted a no vel architecture for training generalized linear mo dels with regularization in the distributed setting based on parallel coordinate descent. W e implemen ted a nov el parallel co ordinate descent algorithm d-GLMNET and its mo dification d-GLMNET-ALB , whic h is immune to the “slow no de problem”. W e prop osed a trust-region 22 up date whic h yields a sparse solution in case of L 1 regularization. In a series of n umer- ical experiments w e demonstrated that our algorithms and soft w are implemen tation are w ell suited for training logistic regression with L 1 and L 2 regularization on the large scale. Exp erimen ts sho w that d-GLMNET is sup erior ov er sev eral state-of-the-art algorithms when training on sparse high-dimensional datasets. It p ossesses a faster con v ergence sp eed an d en- jo ys sp eedup when using m ultiple computing no des. This is essen tial for large-scale machine learning problems where long training time is often an issue. d-GLMNET can also b e extended to regularizers other than L 1 and L 2 . Optimizing quadratic appro ximation (4) o ver one w eigh t ∆ β j via any one-dimensional optimization algorithm is simple enough; it can b e done either exactly of appro ximately for an y separable regularizer: bridge, SCAD, e.t.c. Suc hard et al. [30] show ed that training GLMs on multicore giv es significant sp eedup. Com bining computations on m ulticore on each no de with the distributed architecture is a promising direction for further dev elopmen t. A CKNOWLEDGMENTS W e would like to thank John Langford for the advices on V owpal W abbit and Ily a Muc hnik for his contin uous supp ort. APPENDIX A: A V OIDING LINE SEAR CH Prop osition 2. When µ ≥ Λ max (1 − σ ) λ min the Armijo rule (12) with γ = 0 wil l b e satisfie d for α = 1 . Pr o of. Let g ( t ) = L ( β + t ∆ β ) . Then g 0 ( t ) = ∇ L ( β + t ∆ β ) T ∆ β , g 00 ( t ) = ∆ β T ∇ 2 L ( β + t ∆ β )∆ β . 23 W e obtain the upp er b ound for L ( β + ∆ β ) L ( β + ∆ β ) = g (1) = g (0) + Z 1 0 g 0 ( t ) dt ≤ g (0) + Z 1 0 g 0 (0) + t max z ∈ [0 , 1] | g 00 ( z ) | dt = L ( β ) + ∇ L ( β ) T ∆ β + 1 2 max z ∈ [0 , 1] | ∆ β T ∇ 2 L ( β + z ∆ β )∆ β | ≤ L ( β ) + ∇ L ( β ) T ∆ β + 1 2 Λ max k ∆ β k 2 . In the last inequalit y w e used ∇ 2 L ( β ) Λ max I which follows from (16). Then f ( β + ∆ β ∗ ) − f ( β ) = L ( β + ∆ β ∗ ) − L ( β ) + R (∆ β + β ∗ ) − R ( β ) ≤ ∇ L ( β ) T ∆ β + 1 2 Λ max k ∆ β k 2 + R ( β + ∆ β ∗ ) − R ( β ) = D + 1 2 Λ max k ∆ β k 2 . (A1) Where w e used D from Armijo rule (12) for a particular case γ = 0 D = ∇ L ( β ) T ∆ β + R ( β + ∆ β ∗ ) − R ( β ) . Since ∆ β ∗ minimizes (10) ∇ L ( β ) T ∆ β + R ( β + ∆ β ∗ ) + 1 2 (∆ β ∗ )( µ ( e H ( β ) + ν I ))∆ β ∗ ≤ R ( β ) , then D has the upp er b ound ∇ L ( β ) T ∆ β + R ( β + ∆ β ∗ ) − R ( β ) ≤ − 1 2 (∆ β ∗ )( µ ( e H ( β )) + ν I )∆ β ∗ D ≤ − 1 2 (∆ β ∗ ) µ ( e H ( β ) + ν I )∆ β ∗ . By noticing that λ min I e H ( β ) + ν I we obtain for µ ≥ Λ max (1 − σ ) λ min : 1 2 Λ max k ∆ β ∗ k 2 ≤ 1 2 (1 − σ ) µλ min k ∆ β ∗ k 2 ≤ 1 2 (1 − σ )(∆ β ∗ ) T ( µ ( e H + ν I ))∆ β ∗ ≤ − (1 − σ ) D . (A2) Substituting (A2) into (A1) yields f ( β + ∆ β ∗ ) − f ( β ) ≤ D − (1 − σ ) D = σ D , whic h pro ves that Armijo rule is satisfied for α = 1 . 24 APPENDIX B: LOSS FUNCTIONS SECOND DERIV A TIVE UPPER BOUNDS • Squared loss : ` ( y , ˆ y ) = 1 2 ( y − ˆ y ) 2 , ∂ 2 ` ( y , ˆ y ) ∂ ˆ y 2 = 1 . • Logistic loss : ` ( y , ˆ y ) = log (1 + exp( − y ˆ y )) . F or logistic loss ∂ 2 ` ( y , ˆ y ) ∂ ˆ y 2 = p ( ˆ y )(1 − p ( ˆ y )) , where p ( ˆ y ) = 1 / (1 + e − ˆ y ) and consequently ∂ 2 ` ( y , ˆ y ) ∂ ˆ y 2 ≤ 1 4 . • Probit loss : ` ( y , ˆ y ) = − log (Φ( y ˆ y )) , where Φ( · ) is a CDF of a normal distribution. Denote p ( ˆ y ) = 1 √ 2 π exp ( − ˆ y 2 / 2) . It is sufficient to giv e the pro of only for y = 1 b ecause ∂ 2 ` ( − 1 , ˆ y ) ∂ ˆ y 2 = ∂ 2 ` (1 , − ˆ y ) ∂ ˆ y 2 . W e hav e ∂ 2 ` ( y , ˆ y ) ∂ ˆ y 2 = ˆ y p ( ˆ y ) Φ( ˆ y ) + p 2 ( ˆ y ) Φ 2 ( ˆ y ) . When ˆ y ≥ 0 second deriv ativ e has upper b ound ˆ y p ( ˆ y ) Φ( ˆ y ) + p 2 ( ˆ y ) Φ 2 ( ˆ y ) ≤ 2 ˆ y p ( ˆ y ) + 4 p 2 ( ˆ y ) ≤ 2 p (1) + 4 p (0) , b ecause Φ( ˆ y ) ≥ Φ(0) = 1 / 2 and ˆ y p ( ˆ y ) reac hes maximum in ˆ y = 1 . When ˆ y ∈ ( − 1 , 0) second deriv ative is b ounded. The case ˆ y ≤ − 1 is a bit more complex. F rom [35] we ha ve | ˆ y | p ( ˆ y ) 1 + ˆ y 2 < Φ( ˆ y ) < p ( ˆ y ) | ˆ y | , then 1 Φ( ˆ y ) < 1 + ˆ y 2 | ˆ y | p ( ˆ y ) , ˆ y Φ( ˆ y ) < ˆ y | ˆ y | p ( ˆ y ) , and finally ∂ 2 ` ( y , ˆ y ) ∂ ˆ y 2 = ˆ y p ( ˆ y ) Φ( ˆ y ) + p 2 ( ˆ y ) Φ 2 ( ˆ y ) < ˆ y | ˆ y | + 1 + ˆ y 2 | ˆ y | 2 = − ˆ y 2 + 1 + 2 ˆ y 2 + ˆ y 4 ˆ y 2 = 2 + 1 ˆ y 2 ≤ 3 . Th us for all cases ˆ y ≥ 0 , ˆ y ∈ ( − 1 , 0) , ˆ y ≤ − 1 second deriv ative has the upper b ound. APPENDIX C: AREA UNDER PRECISION-RECALL CUR VE (AUPR C) Area under Precision-Recall curve is a classification qualit y measure. Consider n examples with binary class lab els y i ∈ {− 1 , +1 } and a classifier predictions with a real-v alued outcomes 25 p i ∈ [0 , 1] . Giv en a threshold a precision (Pr) and recall (Rc) are defined as follo ws P r ( a ) = |{ i | p i ≥ a & y i = +1 }| |{ i | p i ≥ a }| , Rc ( a ) = |{ i | p i ≥ a & y i = +1 }| |{ i | y i = +1 }| . Precision-Recall curv e is obtained b y v arying a ∈ [0 , 1] . The area under this curv e is consid- ered a classification quality measure. It is more sensitiv e than a commonly used ROC A UC in case of highly im balanced classes [32]. 1. Genkin, A., Lewis, D. D., Madigan, D. (2007). Large-scale Ba yesian logistic regression for text categorization. T ec hnometrics, 49(3), 291-304. 2. McMahan, H. B., Holt, G., Sculley , D., Y oung, M., Ebner, D., Grady , J., Nie, Davydo v, E., Golo vin, D., Chikkerur, S., Liu, D., W attenberg, M., Hrafnkelsson, A.M., Boulos, T., Kubica, J. (2013). A d clic k prediction: a view from the trenc hes. In Pro ceedings of the 19th A CM SIGKDD in ternational conference on Knowledge discov ery and data mining (pp. 1222-1230). ACM. 3. Y uan, G. X., Chang, K. W., Hsieh, C. J., Lin, C. J. (2010). A comparison of optimization metho ds and soft ware for large-scale l1-regularized linear classification. The Journal of Machine Learning Researc h, 11, 3183-3234. 4. Bradley , J. K., Kyrola, A., Bickson, D., & Guestrin, C. (2011). Parallel coordinate descen t for l1-regularized loss minimization. arXiv preprin t 5. Y uan, G. X., Ho, C. H., & Lin, C. J. (2012). Recen t adv ances of large-scale linear classification. Pro ceedings of the IEEE, 100(9), 2584-2603. 6. Y uan, M., & Lin, Y. (2006). Mo del selection and estimation in regression with grouped v ariables. Journal of the Ro yal Statistical So ciet y: Series B (Statistical Metho dology), 68(1), 49-67. 7. Meier, L., V an De Geer, S., & Buhlmann, P . (2008). The group lasso for logistic regression. Journal of the Ro yal Statistical So ciet y: Series B (Statistical Metho dology), 70(1), 53-71. 8. F u, W. J. (1998). P enalized regressions: the bridge v ersus the lasso. Journal of computational and graphical statistics, 7(3), 397-416. 9. F an, J., & Li, R. (2001). V ariable selection via nonconcav e penalized likelihoo d and its oracle prop erties. Journal of the American statistical Asso ciation, 96(456), 1348-1360. 26 10. Sutton, C., & McCallum, A. (2006). An introduction to conditional random fields for relational learning. In tro duction to statistical relational learning, 93-128. 11. Y u, H. F., Huang, F. L., & Lin, C. J. (2011). Dual co ordinate descent metho ds for logistic regression and maxim um entrop y mo dels. Mac hine Learning, 85(1-2), 41-75. 12. Karampatziakis, N., & Langford, J. (2010). Online imp ortance weigh t a ware up dates. arXiv preprin t 13. F riedman, J., Hastie, T., & Tibshirani, R. (2010). Regularization paths for generalized linear mo dels via co ordinate descen t. Journal of statistical softw are, 33(1), 1. 14. Y uan, G. X., Ho, C. H., & Lin, C. J. (2012). An improv ed glmnet for l1-regularized logistic regression. The Journal of Mac hine Learning Research, 13(1), 1999-2030. 15. Balakrishnan, S., & Madigan, D. (2008). Algorithms for sparse linear classifiers in the massiv e data setting. The Journal of Mac hine Learning Research, 9, 313-337. 16. Langford, J., Li, L., & Zhang, T. (2009). Sparse online learning via truncated gradient. In A dv ances in neural information pro cessing systems (pp. 905-912). 17. McMahan, H. B. (2011). F ollo w-the-Regularized-Leader and Mirror Descent: Equiv alence The- orems and L1 Regularization. In AIST A TS (pp. 525-533). 18. Agarwal, A., Chap elle, O., Dudik, M., & Langford, J. (2014). A reliable effective terascale linear learning system. The Journal of Mac hine Learning Research, 15(1), 1111-1133. 19. Peng, Z., Y an, M., & Yin, W. (2013). P arallel and distributed sparse optimization. In Signals, Systems and Computers, 2013 Asilomar Conference on (pp. 659-646). IEEE. 20. Zinkevic h, M., W eimer, M., Li, L., & Smola, A. J. (2010). P arallelized stochastic gradien t descen t. In Adv ances in neural information pro cessing systems (pp. 2595-2603). 21. Ho, Q., Cipar, J., Cui, H., Lee, S., Kim, J. K., Gibb ons, P . B., & Xing, E. P . (2013). More effectiv e distributed ml via a stale synchronous parallel parameter server. In A dv ances in neural information pro cessing systems (pp. 1223-1231). 22. Rich t´ arik, P ., & T ak´ aˇ c, M. (2015). P arallel coordinate descen t metho ds for big data optimiza- tion. Mathematical Programming, 1-52. 23. T seng, P ., & Y un, S. (2009). A co ordinate gradien t descent metho d for nonsmo oth separable minimization. Mathematical Programming, 117(1-2), 387-423. 24. Dean J, Ghema w at S. MapReduce : Simplified Data Pro cessing on Large Clusters. In: OSDI’ 04. San F rancisco; 2004. 27 25. Low, Y., Gonzalez, J. E., Kyrola, A., Bickson, D., Guestrin, C. E., & Hellerstein, J. (2014). Graphlab: A new framework for parallel machine learning. arXiv preprint 26. Zaharia, M., Chowdh ury , M., F ranklin, M. J., Shenker, S., & Stoica, I. (2010). Spark: Cluster Computing with W orking Sets. HotCloud, 10, 10-10. 27. Kumar, A., Beutel, A., Ho, Q., & Xing, E. P . (2014). F ugue: Slo w-work er-agnostic distributed learning for big mo dels on big data. 28. Smola, A., & Naray anam urthy , S. (2010). An architecture for parallel topic mo dels. Pro ceedings of the VLDB Endo wment, 3(1-2), 703-710. 29. Boyd, S., Parikh, N., Chu, E., Peleato, B., & Eckstein, J. (2011). Distributed optimization and statistical learning via the alternating direction metho d of m ultipliers. F oundations and T rends in Mac hine Learning, 3(1), 1-122. 30. Suchard, M. A., Simpson, S. E., Zorych, I., Ryan, P ., & Madigan, D. (2013). Massiv e paralleliza- tion of serial inference algorithms for a complex generalized linear mo del. ACM T ransactions on Mo deling and Computer Simulation (TOMACS), 23(1), 10. 31. No cedal, J. (1980). Up dating quasi-Newton matrices with limited storage. Mathematics of com- putation, 35(151), 773-782. 32. Jesse, D., & Mark, G. (2006). The relationship b et ween precision-recall and ROC curv es, 233- 240. In Pro ceedings of the 23rd In ternational Conference on Mac hine Learning. Association for Computing Mac hinery , New Y ork, NY. 33. Beck, A., & T eb oulle, M. (2009). A fast iterativ e shrink age-thresholding algorithm for linear in verse problems. SIAM journal on imaging sciences, 2(1), 183-202. 34. Smith, V., F orte, S., Jordan, M. I., & Jaggi, M. (2015). L1-Regularized Distributed Optimiza- tion: A Communication-Efficien t Primal-Dual F ramew ork. arXiv preprint 35. http://schools- wikipedia.org/wp/n/Normal_distribution.htm 36. Zhuang, Y., Chin, W. S., Juan, Y. C., & Lin, C. J. (2015). Distributed Newton Metho ds for Regularized Logistic Regression. In A dv ances in Knowledge Discov ery and Data Mining (pp. 690-703). Springer In ternational Publishing.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment