Variational Inference for Sparse and Undirected Models

Undirected graphical models are applied in genomics, protein structure prediction, and neuroscience to identify sparse interactions that underlie discrete data. Although Bayesian methods for inference would be favorable in these contexts, they are ra…

Authors: John Ingraham, Debora Marks

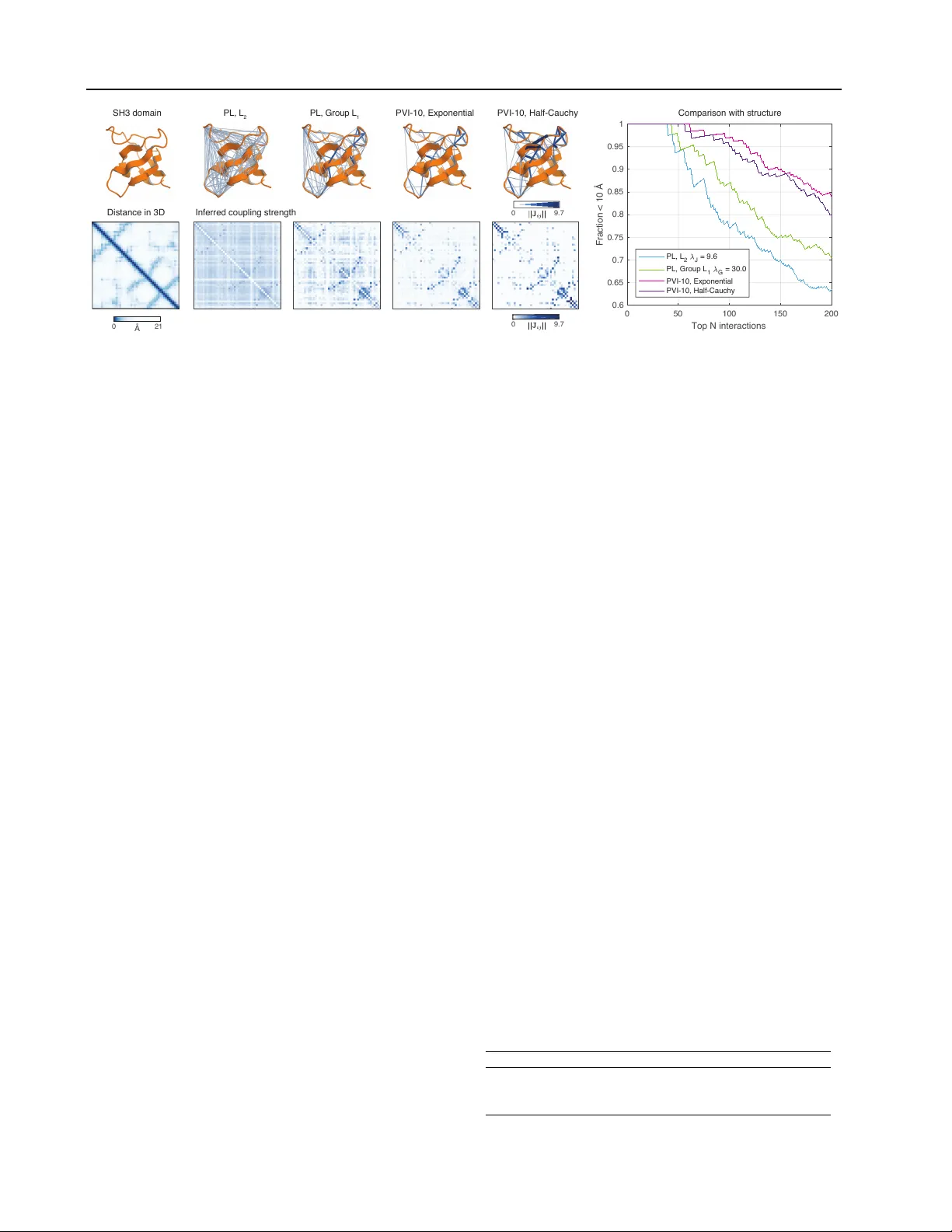

V ariational Infer ence f or Sparse and Undir ected Models John Ingraham 1 Debora Marks 1 Abstract Undirected graphical models are applied in ge- nomics, protein structure prediction, and neuro- science to identify sparse interactions that under - lie discrete data. Although Bayesian methods for inference would be fav orable in these contexts, they are rarely used because they require dou- bly intractable Monte Carlo sampling. Here, we dev elop a framework for scalable Bayesian in- ference of discrete undirected models based on two new methods. The first is Persistent VI, an algorithm for v ariational inference of discrete undirected models that a v oids doubly intractable MCMC and approximations of the partition func- tion. The second is Fadeout, a reparameteri- zation approach for variational inference under sparsity-inducing priors that captures a posteri- ori correlations between parameters and hyper- parameters with noncentered parameterizations. W e find that, together , these methods for varia- tional inference substantially impro v e learning of sparse undirected graphical models in simulated and real problems from physics and biology . 1. Introduction Hierarchical priors that fav or sparsity hav e been a central dev elopment in modern statistics and machine learning, and find widespread use for variable selection in biology , engineering, and economics. Among the most widely used and successful approaches for inference of sparse models has been L 1 regularization, which, after introduction in the context of linear models with the LASSO ( Tibshirani , 1996 ), has become the standard tool for both directed and undirected models alike ( Murphy , 2012 ). Despite its success, howe v er , L 1 is a pragmatic compro- mise. As the closest con ve x approximation of the idealized 1 Harvard Medical School, Boston, Massachusetts. Correspon- dence to: John Ingraham < ingraham@fas.harvard.edu > , Debora Marks < debbie@hms.harvard.edu > . Pr oceedings of the 34 th International Conference on Machine Learning , Sydney , Australia, PMLR 70, 2017. Copyright 2017 by the author(s). L 0 norm, L 1 regularization cannot model the hypothesis of sparsity as well as some Bayesian alternatives ( T ipping , 2001 ). T wo Bayesian approaches stand out as more ac- curate models of sparsity than L 1 . The first, the spike and slab ( Mitchell & Beauchamp , 1988 ), introduces discrete la- tent variables that directly model the presence or absence of each parameter . This discrete approach is the most di- rect and accurate representation of a sparsity hypothesis ( Mohamed et al. , 2012 ), but the discrete latent space that it imposes is often computationally intractable for models where Bayesian inference is difficult. The second approach to Bayesian sparsity uses the scale mixtures of normals ( Andrews & Mallows , 1974 ), a fam- ily of distributions that arise from integrating a zero mean- Gaussian ov er an unkno wn v ariance as p ( θ ) = Z ∞ 0 1 √ 2 π σ exp − θ 2 2 σ 2 p ( σ ) dσ. (1) Scale-mixtures of normals can approximate the discrete spike and slab prior by mixing both large and small val- ues of the v ariance σ 2 . The implicit prior of L 1 regulariza- tion, the Laplacian, is a member of the scale mixture family that results from an exponentially distributed variance σ 2 . Thus, mixing densities p ( σ 2 ) with sube xponential tails and more mass near the origin more accurately model sparsity than L 1 and are the basis for approaches often referred to as “Sparse Bayesian Learning” ( Tipping , 2001 ). Both the Student- t of Automatic Relev ance Determination (ARD) ( MacKay et al. , 1994 ) and the Horseshoe prior ( Carvalho et al. , 2010 ) incorporate these properties. Applying these fav orable, Bayesian approaches to sparsity has been particularly challenging for discrete, undirected models like Boltzmann Machines. Undirected models pos- sess a representational advantage of capturing ‘collectiv e phenomena’ with no directions of causality , but their like- lihoods require an intractable normalizing constant ( Mur- ray & Ghahramani , 2004 ). For a fully observed Boltzmann Machine with x ∈ { 0 , 1 } D the distribution 1 is p ( x | J ) = 1 Z ( J ) exp X i 0 for neighboring spins) and (ii) a Sherrington-Kirkpatrick spin glass diluted on an Erd ¨ os- Renyi random graph with av erage degree 2. W e sampled synthetic data for each system with the Swendsen-W ang algorithm (Appendix) ( Swendsen & W ang , 1987 ). Results On both the ferromagnet and the spin glass, we found that Persistent VI with a noncentered Horseshoe prior (Fadeout) gave estimates with systematically lo wer reconstruction error of the couplings J (Figure 4 ) versus a variety of standard methods in the field (Appendix). 4.2. Biology: Reconstructing 3D Contacts in Pr oteins from Sequence V ariation Potts model The Potts model generalizes the Ising model to non-binary categorical data. The f actor graph is the same (Figure 3 ), except each spin x i can adopt q different cate- gories with x ∈ { 1 , . . . , q } D and each J ij is a q × q matrix as p ( x | h , J ) = 1 Z ( h , J ) exp ( X i h i ( x i ) + X i

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment