Proximity Without Consensus in Online Multi-Agent Optimization

We consider stochastic optimization problems in multi-agent settings, where a network of agents aims to learn parameters which are optimal in terms of a global objective, while giving preference to locally observed streaming information. To do so, we…

Authors: Alec Koppel, Brian M. Sadler, Alej

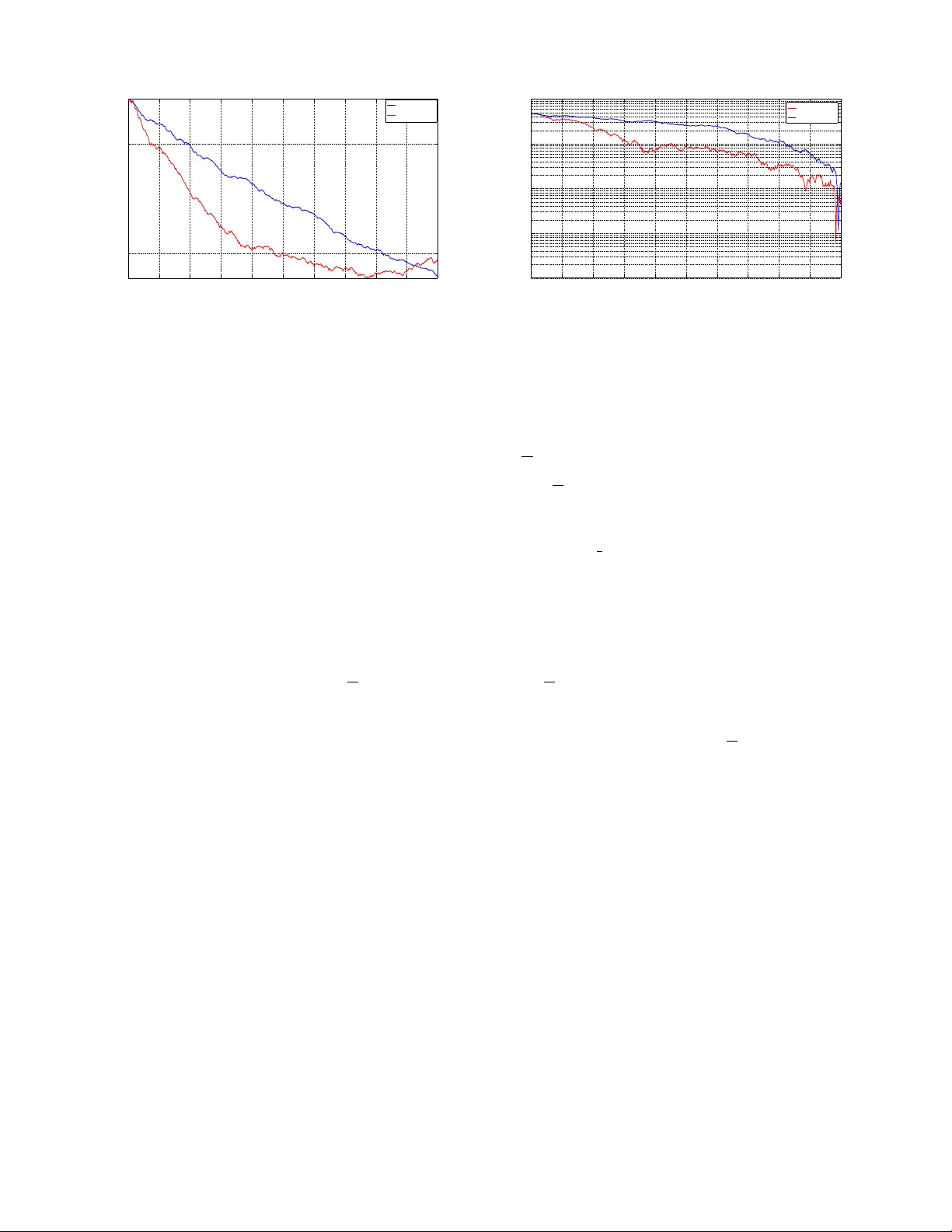

Alec Koppel*, Brian M. Sadler†, and Alejandro Ribeiro*

Abstract

We consider stochastic optimization problems in multi-agent settings, where a network of agents aims to learn parameters which are optimal in terms of a global objective, while giving preference to locally observed streaming information. To do so, we depart from the canonical decentralized optimization framework where agreement constraints are enforced, and instead formulate a problem where each agent minimizes a global objective while enforcing network proximity constraints. This formulation includes online consensus optimization as a special case, but allows for the more general hypothesis that there is data heterogeneity across the network. To solve this problem, we propose using a stochastic saddle point algorithm inspired by Arrow and Hurwicz. This method yields a decentralized algorithm for processing observations sequentially received at each node of the network. Using Lagrange multipliers to penalize the discrepancy between them, only neighboring nodes exchange model information. We establish that under a constant step-size regime the time-average suboptimality and constraint violation are contained in a neighborhood whose radius vanishes with increasing number of iterations. As a consequence, we prove that the time-average primal vectors converge to the optimal objective while satisfying the network proximity constraints. We apply this method to the problem of sequentially estimating a correlated random field in a sensor network, as well as an online source localization problem, both of which demonstrate the empirical validity of the aforementioned convergence results.

I. INTRODUCTION

We consider online multi-agent optimization problems, where a group of interconnected agents aim to minimize a global objective $f = \sum_i f_i$ which may be written as a sum of local objectives $f_i$ available at different nodes $i$ of a network $\mathcal{G} = (V, \mathcal{E})$. The problem is online because information upon which the local objectives depend is sequentially and locally received by each agent. We consider the setting where agents aim to keep their decision variables close to one another, but not necessarily coincident, in order to minimize this global objective while giving preference to possibly distinct local signals. The motivation for this problem comes from the fact that consensus optimization methods implicitly operate on the hypothesis that the distribution of observations at each node is identical, which does not hold for a variety of problems in signal processing [3] and robotics [4].

Distributed optimization has a rich history in networked control systems [5]–[8], wireless systems [9], [10], sensor networks [11], [12], and machine learning [13], [14]. Prior approaches to this problem require each agent to keep a local copy of the global decision variable, and approximately enforce an agreement constraint between the local copies at each iteration. To do so, various strategies have been proposed: information mixing, in which agents combine local gradient steps with a weighted average of their neighbors' variables [15]–[17]; dual reformulations, where each agent ascends in the dual domain [18], [19]; and primal-dual methods, which combine primal descent with dual ascent [4], [20]–[25]. Stochastic optimization methods, which allow for sequential processing of observations as they are received, are mostly based upon stochastic approximation [26], [27], and have been successfully applied to extend multi-agent problems to the online domain [16], [24].

In distributed optimization problems, agent agreement may not always be the primary goal.
In large-scale settings where one aims to leverage parallel processing architectures to alleviate computational bottlenecks, agreement constraints are suitable. In contrast, if there are different priors on information received at distinct subsets of agents, then requiring the network to reach a common decision may degrade local predictive accuracy. Specifically, if the observations at each node are independent but not identically distributed, consensus yields the wrong solution. Moreover, there are tradeoffs in complexity and communications, and it may be that only a subset of nodes requires a solution.

In this paper, we seek to solve problems in which each agent aims to minimize a global cost $\sum_i f_i$ subject to a network proximity constraint, which allows agents the leeway to select actions which are good with respect to a global cost while not ignoring the structure of locally observed information. This setting may correspond to a multi-target tracking problem in a sensor network or a collaborative learning task in a robotic network where each robot is operating in a distinct domain. We design multi-agent optimization strategies where agents reach a common understanding of global information, while still retaining their local perspectives.

Work in this paper is supported by NSF CCF-1017454, NSF CCF-0952867, ONR N00014-12-1-0997, ARL MAST CTA, and ASEE SMART. Part of the results in this paper appeared in [1], [2].
*Department of Electrical and Systems Engineering, University of Pennsylvania, 200 South 33rd Street, Philadelphia, PA 19104. Email: {akoppel, aribeiro}@seas.upenn.edu.
†U.S. Army Research Laboratory, Computation and Information Sciences Directorate, 2800 Powder Mill Road, Adelphi, MD 20783. Email: brian.m.sadler6.civ@mail.mil

We propose a stochastic variant of the saddle point method [20], [21] to solve online multi-agent optimization problems with network proximity constraints.
Our main technical contribution is to demonstrate that saddle point iterates converge in expectation to a primal-dual optimal pair of this problem when a constant algorithm step-size is chosen. We begin the paper in Section II with a discussion of decentralized stochastic optimization problems with network proximity constraints and present an example of decentralized estimation of a correlated random field in a sensor network to illustrate key concepts. The saddle point algorithm is developed in Section III by drawing parallels with deterministic and stochastic optimization, and exploiting factorization properties of the Lagrangian. The method relies on the definition of an augmented stochastic Lagrangian associated with the instantaneous global cost function, and operates by alternating primal descent and dual ascent steps. In Section IV, we establish the convergence properties of the proposed method to a primal-dual optimal pair in the constant step-size regime. We demonstrate the proposed method's practical utility on a spatially correlated random field estimation problem in a sensor network in Section V, and apply this tool to a decentralized online source localization problem in Section VI for a variety of problem instances. Finally, we conclude in Section VII.

II. PROBLEM FORMULATION

We consider agents $i$ of a symmetric and connected network $\mathcal{G} = (V, \mathcal{E})$ with $|V| = N$ nodes and $|\mathcal{E}| = M$ edges and denote as $n_i := \{ j : (i,j) \in \mathcal{E} \}$ the neighborhood of agent $i$. Each of the agents is associated with a (non-strongly) convex loss function $f_i : \mathcal{X} \times \Theta_i \to \mathbb{R}$ that is parameterized by a decision variable $x_i \in \mathcal{X} \subset \mathbb{R}^p$ and a random variable $\theta_i \in \Theta_i$ with a proper distribution. Throughout, we assume $\mathcal{X}$ is a compact convex subset of $\mathbb{R}^p$ associated with the $p$-dimensional parameter vector of agent $i$.
The functions $f_i(x_i, \theta_i)$ for different $\theta_i$ are interpreted as observations of a statistical model, with a possible goal for agent $i$ being the computation of the optimal local estimate,

$$x_i^{L} := \operatorname*{argmin}_{x_i \in \mathcal{X}} F_i(x_i) := \operatorname*{argmin}_{x_i \in \mathcal{X}} \mathbb{E}_{\theta_i}\big[ f_i(x_i, \theta_i) \big]. \tag{1}$$

In the online settings considered here the functions $f_i(x_i, \theta_i)$ are termed instantaneous because they are observed at particular points in time associated with realizations of the random variable $\theta_i$; see Section III. We refer to $F_i(x_i) := \mathbb{E}_{\theta_i}[f_i(x_i, \theta_i)]$ as the local average function at node $i$. When we consider the network as a whole we can define the stacked vector $\mathbf{x} = [x_1, \ldots, x_N]$, which is an element of the product set $\mathcal{X}^N \subset \mathbb{R}^{Np}$, and the aggregate function $F(\mathbf{x}) := \sum_{i=1}^{N} \mathbb{E}_{\theta_i}[f_i(x_i, \theta_i)]$. It then follows that the set of problems in (1) is equivalent to the aggregate problem

$$\mathbf{x}^{L} = \operatorname*{argmin}_{\mathbf{x} \in \mathcal{X}^N} F(\mathbf{x}) := \operatorname*{argmin}_{\mathbf{x} \in \mathcal{X}^N} \sum_{i=1}^{N} \mathbb{E}_{\theta_i}\big[ f_i(x_i, \theta_i) \big]. \tag{2}$$

For convenience, we further define the stacked instantaneous function as $f(\mathbf{x}, \boldsymbol{\theta}) = \sum_i f_i(x_i, \theta_i)$. That (1) and (2) describe the same problem is true because there is no coupling between the variables $x_i$ at different agents. In many situations, however, the parameters $x_i^{L}$ that different agents want to estimate are related. It then makes sense to couple decisions of different agents as a means of letting agents exploit each other's observations.

Consensus optimization problems work on the hypothesis that all agents are interested in learning the same decision parameters $x_i$ for all $i \in V$. In this case, we modify (2) by introducing consensus constraints of the form

$$x_i = x_j, \quad \text{for all } j \in n_i. \tag{3}$$

For a connected network this constraint makes all variables $x_i$ equal, hence the definition as a consensus problem.
This hypothesis only makes sense in cases where agents observe information drawn from a common distribution, which may be overly restrictive. In general, parameters of nearby nodes are expected to be close but are not necessarily all equal, as is the situation in, e.g., the estimation of a field that is smooth but not uniform. To model this situation we introduce a convex local proximity function with real-valued range of the form $h_{ij}(x_i, x_j)$ and a tolerance $\gamma_{ij} \geq 0$. These are used to couple the decisions of agent $i$ to those of its neighbors $j \in n_i$ through the definition of the optimal estimates as the solution of the constrained optimization problem

$$\mathbf{x}^{*} := \operatorname*{argmin}_{\mathbf{x} \in \mathcal{X}^N} \sum_{i=1}^{N} \mathbb{E}_{\theta_i}\big[ f_i(x_i, \theta_i) \big] \quad \text{s.t. } h_{ij}(x_i, x_j) \leq \gamma_{ij}, \ \text{for all } j \in n_i. \tag{4}$$

In the formulation in (4) we assume that the proximity function $h_{ij}(x_i, x_j)$ that couples node $i$ to node $j$ is equivalent to the proximity function $h_{ji}(x_j, x_i)$ that couples node $j$ to node $i$, i.e., that for all $x_i$ and $x_j$ we have $h_{ij}(x_i, x_j) = h_{ji}(x_j, x_i)$. This implies that the constraints $h_{ij}(x_i, x_j) \leq \gamma_{ij}$ and $h_{ji}(x_j, x_i) \leq \gamma_{ji}$ are redundant. We keep them separate to maintain symmetry of the algorithm derived in Section III. We also define the stacked constraint function $h : \mathcal{X}^N \to \mathbb{R}^M$.

The consensus constraints in (3) are a particular example of a proximity function $h_{ij}(x_i, x_j)$, but so is the norm constraint $\| x_i - x_j \|^2 \leq \gamma_{ij}$. This latter choice makes the estimates $x_i^{*}$ and $x_j^{*}$ of neighboring nodes close to each other but not equal. Implicitly, this allows node $i$ to incorporate the (relevant) information of neighboring nodes without the detrimental effect of incorporating the information of faraway nodes that is only weakly correlated with the estimator of node $i$.
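To make the role of the tolerance concrete, the following is a minimal sketch of a quadratic proximity function and a feasibility check over the edges of a small graph. The helper names (`proximity`, `is_feasible`), the three-node path graph, and the numerical values are illustrative assumptions, not taken from the paper; the quadratic form matches the choice $h_{ij}(x_i, x_j) = (1/2)\|x_i - x_j\|^2$ used in the random field example.

```python
import numpy as np

def proximity(x_i, x_j):
    """Quadratic proximity h_ij(x_i, x_j) = 0.5 * ||x_i - x_j||^2 (one admissible choice)."""
    d = np.asarray(x_i, dtype=float) - np.asarray(x_j, dtype=float)
    return 0.5 * float(d @ d)

def is_feasible(x, edges, gamma):
    """Check h_ij(x_i, x_j) <= gamma_ij on every listed edge; x has shape (N, p)."""
    return all(proximity(x[i], x[j]) <= gamma[(i, j)] for (i, j) in edges)

# Hypothetical 3-node path graph with 2-dimensional decision variables.
x = np.array([[0.0, 0.0], [0.3, 0.4], [1.0, 1.0]])
edges = [(0, 1), (1, 2)]
loose = {(0, 1): 0.5, (1, 2): 0.5}   # positive tolerance: nearby but unequal estimates allowed
tight = {(0, 1): 0.0, (1, 2): 0.0}   # zero tolerance: only consensus points are feasible

feasible_loose = is_feasible(x, edges, loose)
feasible_tight = is_feasible(x, edges, tight)
consensus_ok = is_feasible(np.ones((3, 2)), edges, tight)
```

With $\gamma_{ij} = 0$ the feasible set of this quadratic constraint collapses to the consensus set of (3), which is the sense in which consensus is a special case.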
The goal of this paper is to develop an algorithm to solve (4) in distributed online settings where nodes do not know the distribution of the random variable $\theta_i$ but observe local instantaneous functions $f_i(x_i, \theta_i)$ sequentially. An important observation here is that the workhorse distributed gradient descent [15]–[17] and dual decomposition methods [18], [19] cannot be used to solve (4) because they work only when the constraints $h_{ij}(x_i, x_j)$ are linear. We will see that a stochastic saddle point method can be distributed when the functions $h_{ij}(x_i, x_j)$ are not necessarily linear, and converges to the solution of (4) when local instantaneous functions $f_i(x_i, \theta_i)$ are independently sampled over time. Before developing this algorithm, we discuss a representative example to clarify ideas.

Example (LMMSE Estimation of a Random Field). A Gauss-Markov random field is one in which the value of the field at the location of sensor $i$, denoted by $x_i$, is of interest. Consider a sequential estimation problem in which the nodes of the sensor network acquire noisy linear transformations of the field's value at their respective positions. Formally, let $\theta_{i,t} \in \mathbb{R}^q$ be the observation collected by sensor $i$ at time $t$. Observations $\theta_{i,t}$ are noisy linear transformations $\theta_{i,t} = H_i x_i + w_{i,t}$ of a signal $x_i \in \mathbb{R}^p$ contaminated with Gaussian noise $w_{i,t} \sim \mathcal{N}(0, \sigma^2 I)$ independently distributed across nodes and time. Ignoring neighboring observations, the minimum mean square error local estimation problem at node $i$ can then be written in the form of (1) with $f_i(x_i, \theta_i) = \| H_i x_i - \theta_i \|^2$. The quality of these estimates can be improved using the correlated information of adjacent nodes but would be hurt by trying to make estimates uniformly equal across the network. This problem specification can be captured by the mathematical formulation

$$\mathbf{x}^{*} := \operatorname*{argmin}_{\mathbf{x} \in \mathcal{X}^N} \sum_{i=1}^{N} \mathbb{E}_{\theta_i}\Big[ \| H_i x_i - \theta_i \|^2 \Big] \quad \text{s.t. } \tfrac{1}{2} \| x_i - x_j \|^2 \leq \gamma_{ij}, \ \text{for all } j \in n_i. \tag{5}$$

The constraint $(1/2) \| x_i - x_j \|^2 \leq \gamma_{ij}$ makes the estimate $x_i^{*}$ of node $i$ close to the estimates $x_j^{*}$ of neighboring nodes $j \in n_i$ but not so close to the estimates $x_k^{*}$ of nonadjacent nodes $k \notin n_i$. The problem formulation in (5) is a particular case of (4) with $f_i(x_i, \theta_i) = \| H_i x_i - \theta_i \|^2$ and $h_{ij}(x_i, x_j) = (1/2) \| x_i - x_j \|^2$.

III. ALGORITHM DEVELOPMENT

Recall that a decentralized algorithm is one in which node $i$ has access to local functions $f_i(x_i, \theta_i)$ and local constraints $h_{ij}(x_i, x_j) \leq \gamma_{ij}$ and exchanges information with neighbors $j \in n_i$ only. Recall also that the algorithm is further said to be online if the distribution of $\theta_i$ is unknown and agent $i$ has access to independent observations $\theta_{i,t}$ that are acquired sequentially. Our goal is to develop an online decentralized algorithm to solve (4). To achieve this we consider the approximate Lagrangian relaxation of (4), which we state as

$$\mathcal{L}(\mathbf{x}, \boldsymbol{\lambda}) = \sum_{i=1}^{N} \bigg[ \mathbb{E}_{\theta_i}\big[ f_i(x_i, \theta_i) \big] + \frac{1}{2} \sum_{j \in n_i} \Big( \lambda_{ij} \big( h_{ij}(x_i, x_j) - \gamma_{ij} \big) - \frac{\delta \epsilon_t}{2} \lambda_{ij}^2 \Big) \bigg], \tag{6}$$

where $\lambda_{ij} \in \mathbb{R}_+$ is a nonnegative Lagrange multiplier associated with the proximity constraint between node $i$ and node $j$. Observe that (6) does not define the Lagrangian of the optimization problem (4), but instead defines an augmented Lagrangian due to the presence of the last term on the right-hand side. This last term $-(\delta \epsilon_t / 2) \lambda_{ij}^2$, with scalar parameters $\delta$ and $\epsilon_t$, is a regularizer on the dual variable, whose utility arises in controlling the accumulation of constraint violation of the algorithm over time. See Section IV for details.

We propose applying a stochastic saddle point algorithm to (6) which operates by alternating primal stochastic gradient descent and dual stochastic gradient ascent steps.
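To do so, consider the stochastic approximation of the augmented Lagrangian evaluated at observed realizations $\theta_{i,t}$ of the random variables $\theta_i$, which we define as

$$\hat{\mathcal{L}}_t(\mathbf{x}, \boldsymbol{\lambda}) = \sum_{i=1}^{N} \bigg[ f_i(x_i, \theta_{i,t}) + \frac{1}{2} \sum_{j \in n_i} \Big( \lambda_{ij} \big( h_{ij}(x_i, x_j) - \gamma_{ij} \big) - \frac{\delta \epsilon_t}{2} \lambda_{ij}^2 \Big) \bigg]. \tag{7}$$

Define the stacked dual variable as $\boldsymbol{\lambda} := [\lambda_1; \cdots; \lambda_M] \in \mathbb{R}^M$. Moreover, denote the network aggregate random variable as $\boldsymbol{\theta} = [\theta_1; \cdots; \theta_N]$.

Algorithm 1 SSPM: Stochastic Saddle Point Method
Require: initialization $\mathbf{x}_0$ and $\boldsymbol{\lambda}_0 = \mathbf{0}$, step-size $\epsilon_t$, regularizer $\delta$
1: for $t = 1, 2, \ldots, T$ do
2:   loop in parallel agent $i \in V$
3:     Send primal and dual variables $x_{i,t}$, $\lambda_{ij,t}$ to neighbors $j \in n_i$
4:     Receive variables $x_{j,t}$, $\lambda_{ji,t}$ from neighbors $j \in n_i$
5:     Update local parameter $x_{i,t}$ with (10):
       $x_{i,t+1} = \mathcal{P}_{\mathcal{X}} \Big[ x_{i,t} - \epsilon_t \Big( \nabla_{x_i} f_i(x_{i,t}; \theta_{i,t}) + \sum_{j \in n_i} (\lambda_{ij,t} + \lambda_{ji,t}) \nabla_{x_i} h_{ij}(x_{i,t}, x_{j,t}) \Big) \Big]$
6:   end loop
7:   loop in parallel communication link $(i,j) \in \mathcal{E}$
8:     Update dual variables at network link $(i,j)$ [cf. (11)]:
       $\lambda_{ij,t+1} = \Big[ (1 - \epsilon_t \delta) \lambda_{ij,t} + \epsilon_t \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+$
9:   end loop
10: end for

Particularized to the stochastic Lagrangian stated in (7), the stochastic saddle point method takes the form

$$\mathbf{x}_{t+1} = \mathcal{P}_{\mathcal{X}^N} \Big[ \mathbf{x}_t - \epsilon_t \nabla_{\mathbf{x}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t) \Big], \tag{8}$$

$$\boldsymbol{\lambda}_{t+1} = \Big[ \boldsymbol{\lambda}_t + \epsilon_t \nabla_{\boldsymbol{\lambda}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t) \Big]_+, \tag{9}$$

where $\nabla_{\mathbf{x}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t)$ and $\nabla_{\boldsymbol{\lambda}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t)$ are the primal and dual stochastic gradients of the augmented Lagrangian with respect to $\mathbf{x}$ and $\boldsymbol{\lambda}$, respectively. These stochastic gradients are approximations of the gradients of (6) evaluated at the current realization of the random variable $\boldsymbol{\theta}$. The notation $\mathcal{P}_{\mathcal{X}^N}(\mathbf{x})$ denotes component-wise orthogonal projection of the individual primal variables $x_i$ onto the given convex compact set $\mathcal{X}$, and $[\cdot]_+$ denotes the projection onto the $M$-dimensional nonnegative orthant $\mathbb{R}^M_+$.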
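The two projections in (8)-(9) can be sketched in a few lines. The example below is only illustrative: it assumes that $\mathcal{X}$ is a Euclidean ball of a given radius (the paper only requires $\mathcal{X}$ to be compact and convex), and the function names and input values are hypothetical.

```python
import numpy as np

def project_rows_onto_ball(x, radius):
    """Component-wise projection: each row x_i is mapped onto {v : ||v|| <= radius}.

    Assumes the local set X is a Euclidean ball, which is one possible compact convex set.
    """
    norms = np.linalg.norm(x, axis=1, keepdims=True)
    scale = np.minimum(1.0, radius / np.maximum(norms, 1e-12))
    return x * scale

def project_nonnegative(lam):
    """Projection [.]_+ onto the nonnegative orthant."""
    return np.maximum(lam, 0.0)

def saddle_point_step(x, lam, grad_x, grad_lam, eps, radius):
    """One projected primal-descent / dual-ascent step in the spirit of (8)-(9)."""
    x_next = project_rows_onto_ball(x - eps * grad_x, radius)
    lam_next = project_nonnegative(lam + eps * grad_lam)
    return x_next, lam_next

# Hypothetical inputs: two agents with 2-dimensional variables, two multipliers.
x = np.array([[3.0, 4.0], [0.3, 0.4]])
lam = np.array([0.5, 0.1])
x_next, lam_next = saddle_point_step(
    x, lam,
    grad_x=np.zeros_like(x),
    grad_lam=np.array([-10.0, 1.0]),
    eps=0.1, radius=1.0,
)
```

In this toy call the first row lies outside the unit ball and is rescaled onto its boundary, while the first multiplier would become negative after the ascent step and is clipped at zero.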
As an abuse of notation, we also use $[\cdot]_+$ to denote scalar positive projection where appropriate. The method stated in (8)-(9) can be implemented with decentralized computations across the network, as we state in the following proposition.

Proposition 1 Let $x_{i,t}$ be the $i$th component of the primal iterate $\mathbf{x}_t$ and $\lambda_{ij,t}$ the $(i,j)$th component of the dual iterate $\boldsymbol{\lambda}_t$. The primal variable update in (8) is equivalent to the set of $N$ parallel local variable updates

$$x_{i,t+1} = \mathcal{P}_{\mathcal{X}} \Big[ x_{i,t} - \epsilon_t \Big( \nabla_{x_i} f_i(x_{i,t}; \theta_{i,t}) + \sum_{j \in n_i} (\lambda_{ij,t} + \lambda_{ji,t}) \nabla_{x_i} h_{ij}(x_{i,t}, x_{j,t}) \Big) \Big]. \tag{10}$$

Likewise, the dual variable updates in (9) are equivalent to the $M$ parallel updates

$$\lambda_{ij,t+1} = \Big[ (1 - \epsilon_t \delta) \lambda_{ij,t} + \epsilon_t \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+. \tag{11}$$

Proof: See Appendix A.

With primal variables $x_{i,t}$ and Lagrange multipliers $\lambda_{ij,t}$ maintained and updated by node $i$, Proposition 1 implies that the saddle point method in (8)-(9) can be translated into a decentralized protocol in which: (i) the primal and dual variables of distinct agents across the network are decoupled from one another; (ii) the updates require exchanges of information among neighboring nodes only. This protocol is summarized in Algorithm 1.

Indeed, in the primal update in (10) agent $i$ can compute the stochastic gradient $\nabla_{x_i} f_i(x_{i,t}; \theta_{i,t})$ of its objective function by making use of its local observations $\theta_{i,t}$ and its decision variable $x_{i,t}$ at the previous time slot $t$. To compute the gradients of the constraint functions $\nabla_{x_i} h_{ij}(x_{i,t}, x_{j,t})$ the primal variables $x_{j,t}$ of neighboring nodes $j \in n_i$ are needed on top of the local variables $x_{i,t}$, but these can be communicated from neighbors. To implement (10) agent $i$ also needs access to the Lagrange multipliers $\lambda_{ij,t}$ associated with the network proximity constraints $h_{ij}(x_i, x_j)$ and the multipliers $\lambda_{ji,t}$ associated with the network proximity constraints $h_{ji}(x_j, x_i)$.
The multipliers $\lambda_{ij,t}$ are locally available at $i$ and the multipliers $\lambda_{ji,t}$ can be communicated from neighbors.

To implement the dual update in (11) agent $i$ needs access to its own dual variable $\lambda_{ij,t}$ as well as the local decision variables $x_{i,t}$. It also needs access to the primal variables $x_{j,t}$ of neighbors $j \in n_i$ to compute the local dual gradient, which is given as the constraint slack $h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij}$. As in the primal, these neighboring variables can be communicated from neighbors. We can then implement (10) after nodes exchange primal and dual variables $x_{i,t}$ and $\lambda_{ij,t}$, proceed to implement (11) with the same exchanged primal variables $x_{j,t}$, and conclude with the exchange of the updated primal and dual variables $x_{i,t+1}$ and $\lambda_{ij,t+1}$ that are needed to implement the primal iteration at time $t+1$. These local operations, repeated in synchrony by all nodes, are equivalent to the centralized operations in (8)-(9). We analyze the iterations in (8)-(9), which implies convergence of the equivalent iterations in (10)-(11), in the following section. We close here with an example and a remark.

Example (LMMSE Estimation of a Random Field). Revisit the random field estimation problem of Section II that we summarize in the problem formulation in (5). Recalling the identifications $f_i(x_i, \theta_i) = \| H_i x_i - \theta_i \|^2$ and $h_{ij}(x_i, x_j) = (1/2) \| x_i - x_j \|^2$, it follows that the local primal update in (10) takes the form

$$x_{i,t+1} = \mathcal{P}_{\mathcal{X}} \bigg[ x_{i,t} - \epsilon_t \Big[ 2 H_i^T \big( H_i x_{i,t} - \theta_{i,t} \big) + \frac{1}{2} \sum_{j \in n_i} \big( \lambda_{ij,t} + \lambda_{ji,t} \big) \big( x_{i,t} - x_{j,t} \big) \Big] \bigg]. \tag{12}$$

Likewise, the specific form of the dual update in (11) is

$$\lambda_{ij,t+1} = \Big[ (1 - \epsilon_t \delta) \lambda_{ij,t} + \epsilon_t \Big( \tfrac{1}{2} \| x_{i,t} - x_{j,t} \|^2 - \gamma_{ij} \Big) \Big]_+. \tag{13}$$

The empirical utility of the decentralized estimation scheme in (12)-(13) is studied in Section V. Alternative functional forms for the network proximity constraints are studied for a source localization problem in Section VI.
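The updates (12)-(13) can be simulated centrally on a toy problem. The following is a minimal sketch under several assumptions that are not from the paper: a three-node path graph, scalar fields with $H_i = I$, a common tolerance `gamma` on all edges, a Euclidean ball of radius `radius` as the set $\mathcal{X}$, and illustrative values of `delta` and `sigma`. The function name `sspm_lmmse` is hypothetical, and the function returns the last iterate rather than the time averages that the analysis of Section IV concerns.

```python
import numpy as np

def sspm_lmmse(H, x_true, neighbors, gamma, T, delta=0.01, sigma=0.1, radius=10.0, seed=0):
    """Centralized simulation sketch of updates (12)-(13), step-size eps = 1/sqrt(T)."""
    rng = np.random.default_rng(seed)
    N, p = x_true.shape
    eps = 1.0 / np.sqrt(T)
    x = np.zeros((N, p))
    # One multiplier per ordered pair (i, j) with j a neighbor of i, initialized at zero.
    lam = {(i, j): 0.0 for i in range(N) for j in neighbors[i]}
    for _ in range(T):
        x_new = np.empty_like(x)
        for i in range(N):
            # Noisy linear observation theta_{i,t} = H_i x_i + w_{i,t}.
            theta = H[i] @ x_true[i] + sigma * rng.standard_normal(H[i].shape[0])
            grad = 2.0 * H[i].T @ (H[i] @ x[i] - theta)
            for j in neighbors[i]:
                grad = grad + 0.5 * (lam[(i, j)] + lam[(j, i)]) * (x[i] - x[j])
            step = x[i] - eps * grad
            norm = np.linalg.norm(step)
            # Projection onto the ball of the given radius (assumed form of X).
            x_new[i] = step if norm <= radius else step * (radius / norm)
        # Dual ascent uses the primal iterate x_t from before the primal update.
        for (i, j) in lam:
            slack = 0.5 * float(np.sum((x[i] - x[j]) ** 2)) - gamma
            lam[(i, j)] = max(0.0, (1.0 - eps * delta) * lam[(i, j)] + eps * slack)
        x = x_new
    return x, lam

# Hypothetical toy instance: the field takes the values 0, 1, 2 along a path graph.
H = [np.eye(1) for _ in range(3)]
x_true = np.array([[0.0], [1.0], [2.0]])
neighbors = {0: [1], 1: [0, 2], 2: [1]}
x_loose, lam_loose = sspm_lmmse(H, x_true, neighbors, gamma=10.0, T=2000)
x_tight, lam_tight = sspm_lmmse(H, x_true, neighbors, gamma=0.02, T=2000)
```

With the loose tolerance the constraints never activate, the multipliers stay at zero, and each node tracks its own local estimate. With the tight tolerance the multipliers become positive and pull neighboring estimates toward each other without forcing them to coincide.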
Remark 1 If the proximity constants are $\gamma_{ij} = \gamma_{ji}$ and the initial Lagrange multipliers satisfy $\lambda_{ij,0} = \lambda_{ji,0}$, it follows from (11) that $\lambda_{ij,t} = \lambda_{ji,t}$ for all subsequent times $t$. This is as it should be because the constraints $h_{ij}(x_i, x_j) \leq \gamma_{ij}$ and $h_{ji}(x_j, x_i) \leq \gamma_{ji}$ are redundant. If these multipliers are equal for all times, the primal update in (10) does not necessitate exchange of dual variables. This does not save communication cost as it is still necessary to exchange primal variables $x_{i,t}$.

IV. CONVERGENCE ANALYSIS

We turn to establishing that the saddle point algorithm defined by (8)-(9) converges to the primal-dual optimal point of the problem stated in (4) when a constant algorithm step-size is used. In particular, we establish bounds on the objective function error sequence $F(\mathbf{x}_t) - F(\mathbf{x}^*)$ and the network-aggregate constraint violation, where $\mathbf{x}^*$ is defined by (4). As a consequence, the time-average primal vector converges to the optimal objective function $F(\mathbf{x}^*)$ at a rate of $O(1/\sqrt{T})$, while incurring constraint violation on the order of $O(T^{-1/4})$, where $T$ is the total number of iterations.

To establish these results, we note some facts of the problem setting, and then introduce a few standard assumptions. First, observe that the dual stochastic gradient is independent of random variables $\theta_{i,t}$ [cf. (11)], and hence for all $t$,

$$\nabla_{\boldsymbol{\lambda}} \mathcal{L}(\mathbf{x}_t, \boldsymbol{\lambda}_t) = \nabla_{\boldsymbol{\lambda}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t). \tag{14}$$

Also pertinent to analyzing the performance of the stochastic saddle point method is the fact that the primal stochastic gradient of the Lagrangian is an unbiased estimator of the true primal gradient. Let $\mathcal{F}_t$ be a sigma algebra that measures the history of the algorithm up until time $t$, i.e., a collection that contains at least the variables $\{ \mathbf{x}_u, \boldsymbol{\lambda}_u, \boldsymbol{\theta}_u \}_{u=1}^{t} \subseteq \mathcal{F}_t$.
That the primal stochastic gradient is an unbiased estimate of the true primal gradient means that

$$\mathbb{E}\Big[ \nabla_{\mathbf{x}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t) \,\Big|\, \mathcal{F}_t \Big] = \nabla_{\mathbf{x}} \mathcal{L}(\mathbf{x}_t, \boldsymbol{\lambda}_t). \tag{15}$$

Furthermore, the compactness of the sets $\mathcal{X}$ permits the bounding of the magnitude of the iterates $x_{i,t}$ by a constant $R/N$, which in turn implies that the network-wide iterates may be bounded in magnitude as

$$\| \mathbf{x}_t \| \leq R \quad \text{for all } t. \tag{16}$$

To prove convergence of the stochastic saddle point method, some conditions are required of the network, loss functions, and constraints, which we state below.

Assumption 1 (Network connectivity) The network $\mathcal{G}$ is symmetric and connected with diameter $D$.

Assumption 2 (Smoothness) The stacked instantaneous objective is Lipschitz continuous in expectation with constant $L_f$, i.e., for distinct primal variables $\mathbf{x}, \tilde{\mathbf{x}} \in \mathcal{X}^N$ and all $\boldsymbol{\theta}$, we have

$$\mathbb{E}\big[ \| f(\mathbf{x}, \boldsymbol{\theta}) - f(\tilde{\mathbf{x}}, \boldsymbol{\theta}) \| \big] \leq L_f \| \mathbf{x} - \tilde{\mathbf{x}} \|. \tag{17}$$

Moreover, the stacked constraint function $h(\mathbf{x})$ is Lipschitz continuous with modulus $L_h$. That is, for distinct primal variables $\mathbf{x}, \tilde{\mathbf{x}} \in \mathcal{X}^N$, we may write

$$\| h(\mathbf{x}) - h(\tilde{\mathbf{x}}) \| \leq L_h \| \mathbf{x} - \tilde{\mathbf{x}} \|. \tag{18}$$

Assumption 1 ensures that the graph is connected and the rate at which information diffuses across the network is finite. This condition is standard in distributed algorithms. Assumption 2 states that the stacked objective and constraints are sufficiently smooth, and have bounded gradients. These conditions are common in analysis of descent methods.

Assumption 2 taken with the bound on the primal iterates [cf. (16)] permits the bounding of the expected primal and dual gradients of the Lagrangian by constant terms and terms that depend on the magnitude of the dual variable.
In particular, we compute the mean-square-magnitude of the primal gradient of the stochastic augmented Lagrangian as

$$\begin{aligned} \mathbb{E}\big[ \| \nabla_{\mathbf{x}} \hat{\mathcal{L}}_t(\mathbf{x}, \boldsymbol{\lambda}) \|^2 \big] &\leq N \max_i \mathbb{E}\, \| \nabla_{x_i} f_i(x_i, \theta) \|^2 + M \| \boldsymbol{\lambda} \|^2 \max_{(i,j) \in \mathcal{E}} \| \nabla_{x_i} h_{ij}(x_i, x_j) \|^2 \\ &\leq N L_f^2 + M L_h^2 \| \boldsymbol{\lambda} \|^2 \\ &\leq (N + M) L^2 (1 + \| \boldsymbol{\lambda} \|^2), \end{aligned} \tag{19}$$

where we have applied the triangle and Cauchy-Schwarz inequalities in the first expression and considered the worst-case bounds. The second inequality makes use of the smoothness properties defined in (17)-(18) and the fact that the constraint $h_{ij}(x_i, x_j)$ is independent of $\theta$. On the right-hand side of (19) we have defined $L := \max(L_f, L_h)$ to simplify the expression. We further may derive a bound on the expected magnitude of the dual stochastic gradient of the augmented Lagrangian by making use of Assumption 2. In particular, we may write

$$\begin{aligned} \mathbb{E}\big[ \| \nabla_{\boldsymbol{\lambda}} \hat{\mathcal{L}}_t(\mathbf{x}, \boldsymbol{\lambda}) \|^2 \big] &\leq M \max_{(i,j) \in \mathcal{E}} \big( h_{ij}(x_i, x_j) - \gamma_{ij} \big)^2 + \delta^2 \epsilon_t^2 \| \boldsymbol{\lambda} \|^2 \\ &\leq M L_h^2 \| \mathbf{x} \|^2 + \delta^2 \epsilon_t^2 \| \boldsymbol{\lambda} \|^2 \\ &\leq M L_h^2 R^2 + \delta^2 \epsilon_t^2 \| \boldsymbol{\lambda} \|^2. \end{aligned} \tag{20}$$

The first inequality makes use of the triangle inequality and a worst-case bound on the constraint slack, whereas the second uses the Lipschitz continuity of the constraint (Assumption 2), and the last is an application of the compactness of the primal domain $\mathcal{X}^N$. We proceed with a remark and then turn to establishing our main result.

Remark 2 Rather than bound the primal and dual gradients of the Lagrangian by constants, as is conventionally done in the analysis of primal-dual algorithms, we instead consider upper estimates in terms of the magnitude of the dual variable $\boldsymbol{\lambda}$. In doing so, we alleviate the need for the dual variable to be restricted to a compact subset of the nonnegative orthant $\mathbb{R}^M_+$. The use of unbounded Lagrange multipliers allows us to mitigate the growth of constraint violation over time using the dual regularization term $(\delta \epsilon_t / 2) \| \boldsymbol{\lambda} \|^2$ in (6).
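One way to see what the dual regularizer does to the multipliers is to iterate the scalar update (11) under a persistent, constant constraint slack. The sketch below is only illustrative: holding the slack constant is an assumption made for the illustration (in the algorithm the slack changes with the primal iterates), and the function name and numerical values are hypothetical. With $\delta > 0$ the recursion contracts toward the fixed point $\text{slack}/\delta$, whereas with $\delta = 0$ the multiplier grows linearly in the number of iterations.

```python
def dual_trajectory(slack, eps, delta, T):
    """Iterate the scalar dual update (11) for T steps with a constant slack (assumed)."""
    lam = 0.0
    for _ in range(T):
        lam = max(0.0, (1.0 - eps * delta) * lam + eps * slack)
    return lam

# Hypothetical values: constant violation of 0.5, step-size 0.1, 2000 iterations.
lam_regularized = dual_trajectory(slack=0.5, eps=0.1, delta=0.5, T=2000)
lam_unregularized = dual_trajectory(slack=0.5, eps=0.1, delta=0.0, T=2000)
```

Here the regularized multiplier settles near $0.5 / 0.5 = 1$, while the unregularized one reaches roughly $0.1 \times 0.5 \times 2000 = 100$, so the multiplier remains controlled without being confined to a compact set.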
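The following lemma is used in the proof of the main theorem, and bounds the Lagrangian difference $\hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}) - \hat{\mathcal{L}}_t(\mathbf{x}, \boldsymbol{\lambda}_t)$ by a telescopic quantity involving the primal and dual iterates, as well as the magnitude of the primal and dual gradients.

Lemma 1 Denote as $(\mathbf{x}_t, \boldsymbol{\lambda}_t)$ the sequence generated by the saddle point algorithm in (8) and (9) with step-size $\epsilon_t$. If Assumptions 1-2 hold, the instantaneous Lagrangian difference sequence $\hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}) - \hat{\mathcal{L}}_t(\mathbf{x}, \boldsymbol{\lambda}_t)$ satisfies the decrement property

$$\begin{aligned} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}) - \hat{\mathcal{L}}_t(\mathbf{x}, \boldsymbol{\lambda}_t) \leq{} & \frac{1}{2 \epsilon_t} \Big( \| \mathbf{x}_t - \mathbf{x} \|^2 - \| \mathbf{x}_{t+1} - \mathbf{x} \|^2 + \| \boldsymbol{\lambda}_t - \boldsymbol{\lambda} \|^2 - \| \boldsymbol{\lambda}_{t+1} - \boldsymbol{\lambda} \|^2 \Big) \\ & + \frac{\epsilon_t}{2} \Big( \| \nabla_{\mathbf{x}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t) \|^2 + \| \nabla_{\boldsymbol{\lambda}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t) \|^2 \Big). \end{aligned} \tag{21}$$

Proof: See Appendix B.

Lemma 1 exploits the fact that the stochastic augmented Lagrangian is convex-concave with respect to its primal and dual variables to obtain an upper bound for the difference $\hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}) - \hat{\mathcal{L}}_t(\mathbf{x}, \boldsymbol{\lambda}_t)$ in terms of the difference between the primal and dual iterates to a fixed primal-dual pair $(\mathbf{x}, \boldsymbol{\lambda})$ at the next and current time, as well as the square magnitudes of the primal and dual gradients. This property is the basis for establishing the convergence of the primal iterates to their constrained optimum given by (4) in terms of objective function evaluation and constraint violation, when a specific constant algorithm step-size is chosen, as we state next.

Theorem 1 Denote $(\mathbf{x}_t, \boldsymbol{\lambda}_t)$ as the sequence generated by the saddle point algorithm in (8)-(9) and suppose Assumptions 1-2 hold. Suppose the algorithm is run for $T$ iterations with a constant step-size selected as $\epsilon_t = \epsilon = 1/\sqrt{T}$. Then the time aggregation of the objective function error sequence $F(\mathbf{x}_t) - F(\mathbf{x}^*)$, with $\mathbf{x}^*$ defined as in (4), grows sublinearly with the final iteration index $T$ as

$$\sum_{t=1}^{T} \big[ F(\mathbf{x}_t) - F(\mathbf{x}^*) \big] \leq O(\sqrt{T}). \tag{22}$$

Moreover, the time aggregation of the constraint violation of the algorithm grows sublinearly in the final time $T$ as

$$\sum_{(i,j) \in \mathcal{E}} \Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+ \leq O(T^{3/4}). \tag{23}$$

Proof: We first consider the expression in (21), and expand the left-hand side using the definition of the augmented Lagrangian in (7). Doing so yields the following expression,

$$\begin{aligned} & \sum_{i=1}^{N} \big[ f_i(x_{i,t}, \theta_{i,t}) - f_i(x_i, \theta_{i,t}) \big] + \frac{\delta \epsilon_t}{2} \big( \| \boldsymbol{\lambda}_t \|^2 - \| \boldsymbol{\lambda} \|^2 \big) \\ & \quad + \sum_{(i,j) \in \mathcal{E}} \Big[ \lambda_{ij} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) - \lambda_{ij,t} \big( h_{ij}(x_i, x_j) - \gamma_{ij} \big) \Big] \\ & \leq \frac{1}{2 \epsilon_t} \Big( \| \mathbf{x}_t - \mathbf{x} \|^2 - \| \mathbf{x}_{t+1} - \mathbf{x} \|^2 + \| \boldsymbol{\lambda}_t - \boldsymbol{\lambda} \|^2 - \| \boldsymbol{\lambda}_{t+1} - \boldsymbol{\lambda} \|^2 \Big) \\ & \quad + \frac{\epsilon_t}{2} \Big( \| \nabla_{\mathbf{x}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t) \|^2 + \| \nabla_{\boldsymbol{\lambda}} \hat{\mathcal{L}}_t(\mathbf{x}_t, \boldsymbol{\lambda}_t) \|^2 \Big), \end{aligned} \tag{24}$$

after gathering like terms. Compute the expectation of (24) conditional on $\mathcal{F}_0$ and substitute the bounds for the mean-square-magnitude of the primal and dual gradients of the stochastic augmented Lagrangian given in (19) and (20), respectively, into the right-hand side to obtain

$$\begin{aligned} & F(\mathbf{x}_t) - F(\mathbf{x}) + \frac{\delta \epsilon_t}{2} \big( \| \boldsymbol{\lambda}_t \|^2 - \| \boldsymbol{\lambda} \|^2 \big) \\ & \quad + \sum_{(i,j) \in \mathcal{E}} \Big[ \lambda_{ij} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) - \lambda_{ij,t} \big( h_{ij}(x_i, x_j) - \gamma_{ij} \big) \Big] \\ & \leq \frac{1}{2 \epsilon_t} \Big( \| \mathbf{x}_t - \mathbf{x} \|^2 - \| \mathbf{x}_{t+1} - \mathbf{x} \|^2 + \| \boldsymbol{\lambda}_t - \boldsymbol{\lambda} \|^2 - \| \boldsymbol{\lambda}_{t+1} - \boldsymbol{\lambda} \|^2 \Big) \\ & \quad + \frac{\epsilon_t}{2} \Big( (N + M) L^2 (1 + \| \boldsymbol{\lambda}_t \|^2) + M L_h^2 R^2 + \delta^2 \epsilon_t^2 \| \boldsymbol{\lambda}_t \|^2 \Big), \end{aligned} \tag{25}$$

where we have also used the fact that the constraint functions $h_{ij}(x_i, x_j)$ appearing in the third term on the left-hand side are independent of $\theta$, and noted that the right-hand side of (25) is equal to its expectation. Observe that $\mathbf{x} \in \mathcal{X}^N$ is an arbitrary feasible point, which implies that $h_{ij}(x_i, x_j) \leq \gamma_{ij}$ for all $(i,j) \in \mathcal{E}$.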
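Making use of this property to annihilate the last term on the left-hand side of (25) and subtracting $(\delta \epsilon_t / 2) \| \boldsymbol{\lambda}_t \|^2$ from both sides yields

$$\begin{aligned} & F(\mathbf{x}_t) - F(\mathbf{x}) + \sum_{(i,j) \in \mathcal{E}} \Big[ \lambda_{ij} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) - \frac{\delta \epsilon_t}{2} \lambda_{ij}^2 \Big] \\ & \leq \frac{1}{2 \epsilon_t} \Big( \| \mathbf{x}_t - \mathbf{x} \|^2 - \| \mathbf{x}_{t+1} - \mathbf{x} \|^2 + \| \boldsymbol{\lambda}_t - \boldsymbol{\lambda} \|^2 - \| \boldsymbol{\lambda}_{t+1} - \boldsymbol{\lambda} \|^2 \Big) \\ & \quad + \frac{\epsilon_t}{2} \Big[ K + \big( (N + M) L^2 + \delta^2 \epsilon_t^2 - \delta \big) \| \boldsymbol{\lambda}_t \|^2 \Big], \end{aligned} \tag{26}$$

after reordering terms and defining the constant $K := (N + M) L^2 + M L_h^2 R^2$. Now sum the expression (26) over times $t = 1, \ldots, T$ for a fixed $T$, and select the constant $\delta$ to satisfy $(N + M) L^2 + \delta^2 \epsilon_t^2 \leq \delta$ for a constant step-size $\epsilon_t = \epsilon$ to drop the term involving $\| \boldsymbol{\lambda}_t \|^2$ from the right-hand side as

$$\sum_{t=1}^{T} \big[ F(\mathbf{x}_t) - F(\mathbf{x}) \big] + \sum_{(i,j) \in \mathcal{E}} \lambda_{ij} \Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big] - \frac{\delta \epsilon T}{2} \| \boldsymbol{\lambda} \|^2 \leq \frac{1}{2 \epsilon} \Big( \| \mathbf{x}_1 - \mathbf{x} \|^2 + \| \boldsymbol{\lambda}_1 - \boldsymbol{\lambda} \|^2 \Big) + \frac{\epsilon T K}{2}. \tag{27}$$

In (27), we exploit the telescopic property of the summand over differences in the magnitude of primal and dual iterates to a fixed primal-dual pair $(\mathbf{x}, \boldsymbol{\lambda})$, which appears as the first term on the right-hand side of (26). By assuming the dual variable is initialized as $\boldsymbol{\lambda}_1 = \mathbf{0}$ and subtracting the resulting $(1 / 2\epsilon) \| \boldsymbol{\lambda} \|^2$ term from both sides, the expression in (27) becomes

$$\sum_{t=1}^{T} \big[ F(\mathbf{x}_t) - F(\mathbf{x}) \big] + \sum_{(i,j) \in \mathcal{E}} \lambda_{ij} \Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big] - \Big( \frac{\delta \epsilon T}{2} + \frac{1}{2 \epsilon} \Big) \| \boldsymbol{\lambda} \|^2 \leq \frac{1}{2 \epsilon} \| \mathbf{x}_1 - \mathbf{x} \|^2 + \frac{\epsilon T K}{2}. \tag{28}$$

At this point, we note that the left-hand side of the expression in (28) consists of three terms. The first is the accumulation over time of the global loss, which is a sum of all local losses at each node as defined in (2). The second term is the inner product of an arbitrary Lagrange multiplier $\boldsymbol{\lambda}$ with the time aggregation of constraint violation, and the last is a term which depends on the magnitude of this multiplier. We may use these latter terms to define an "optimal" Lagrange multiplier to control the growth of the long-term constraint violation of the algorithm.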
To do so, define the augmented dual function $\tilde{g}(\lambda)$ using the latter two terms on the left-hand side of (28):

$$\tilde{g}(\lambda) = \sum_{(i,j)\in\mathcal{E}} \lambda_{ij} \Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big] - \Big( \frac{\delta \epsilon T}{2} + \frac{1}{2\epsilon} \Big) \|\lambda\|^2. \tag{29}$$

Computing the gradient of (29) and solving the resulting stationary equation over the range $\mathbb{R}^M_+$ yields

$$\tilde{\lambda}_{ij} = \frac{1}{\epsilon T \delta + 1/\epsilon} \Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+ \tag{30}$$

for all $(i,j) \in \mathcal{E}$. Substituting the selection $\lambda = \tilde{\lambda}$ defined by (30) into (28) results in the following expression:

$$\sum_{t=1}^{T} [F(x_t) - F(x)] + \sum_{(i,j)\in\mathcal{E}} \frac{\Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+^2}{2 (\epsilon T \delta + 1/\epsilon)} \le \frac{1}{2\epsilon} \|x_1 - x\|^2 + \frac{\epsilon T K}{2}. \tag{31}$$

Now select the constant step-size $\epsilon = 1/\sqrt{T}$ and substitute the result into (31), using the formula for $K$ defined following expression (26), to obtain

$$\sum_{t=1}^{T} [F(x_t) - F(x)] + \sum_{(i,j)\in\mathcal{E}} \frac{\Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+^2}{2 \sqrt{T} (\delta + 1)} \le \frac{\sqrt{T}}{2} \Big( \|x_1 - x\|^2 + (N+M) L^2 + M L_h^2 R^2 \Big). \tag{32}$$

The expression in (32) allows us to derive both the convergence of the global objective and the feasibility of the stochastic saddle point iterates. We first consider the objective error sequence $F(x_t) - F(x^*)$. To do so, subtract the last term on the left-hand side of (32) from both sides, and note that the resulting term is non-positive. This observation allows us to omit the constraint slack term in (32), which, taken with the selection $x = x^*$ [cf. (4)], yields

$$\sum_{t=1}^{T} [F(x_t) - F(x^*)] \le \frac{\sqrt{T}}{2} \Big( \|x_1 - x^*\|^2 + K \Big) = O(\sqrt{T}), \tag{33}$$

which is as stated in (22). Now we turn to establishing the sublinear growth of the constraint violation in $T$, using the expression in (32).
First, observe that the objective function error sequence is bounded in magnitude as

$$|F(x_t) - F(x^*)| \le L_f \|x_t - x^*\| \le 2 L_f R, \tag{34}$$

so that $\sum_{t=1}^{T} [F(x_t) - F(x^*)] \ge -2 T L_f R$. Substituting this bound into the first term on the left-hand side of (32) with $x = x^*$ and moving the result to the right-hand side yields

$$\sum_{(i,j)\in\mathcal{E}} \frac{\Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+^2}{2 \sqrt{T} (\delta + 1)} \le \frac{\sqrt{T}}{2} \Big( \|x_1 - x^*\|^2 + K \Big) + 2 T L_f R, \tag{35}$$

which, after multiplying both sides by $2 \sqrt{T} (\delta + 1)$ and computing square roots, yields

$$\sum_{(i,j)\in\mathcal{E}} \Big[ \sum_{t=1}^{T} \big( h_{ij}(x_{i,t}, x_{j,t}) - \gamma_{ij} \big) \Big]_+ \le \Big[ 2 \sqrt{T} (\delta + 1) \Big( \frac{\sqrt{T}}{2} \big( \|x_1 - x^*\|^2 + K \big) + 2 T L_f R \Big) \Big]^{1/2} = O(T^{3/4}), \tag{36}$$

as claimed in (23).

Theorem 1 establishes that the stochastic saddle point method, when run with a fixed algorithm step-size, yields an objective function error sequence whose accumulation over time is bounded by a quantity that grows strictly slower than $T$, the final iteration index. Moreover, the time-accumulation of the constraint violation incurred by the algorithm also grows strictly slower than $T$. Thus, for larger $T$, the time-average difference between $F(x_t)$ and $F(x^*)$ goes to null, as does the average constraint violation. Theorem 1 also allows us to establish convergence of the average iterates to a specific level of accuracy dependent on the total number of iterations $T$, as we subsequently state.

[Figure 1: (a) Local objective $\mathbb{E}[f_i(x_{i,t}, \theta_i)]$ vs. iteration $t$; (b) Standard error $\|x_{i,t} - x^*\|$ vs. iteration $t$. Curves: LMMSE and Saddle pt.]

Fig. 1: Saddle point algorithm applied to the problem of estimating a correlated random field. Nodes are deployed uniformly in a square region of size $200 \times 200$ square meters in a grid formation, and node estimators are correlated according to the distance-based model $\rho(x_i, x_j) = e^{-\|l_i - l_j\|}$, where $x_i$ and $x_j$ are the decisions of nodes $i$ and $j$, and $l_i$ and $l_j$ are their respective locations. Individual sensors learn global information while remaining close to nodes whose information they deem important. Exploiting the correlation structure of the field yields a reduction in the local estimation error and distance to the optimal LMMSE estimate.

Corollary 1 Let $\bar{x}_T = (1/T) \sum_{t=1}^{T} x_t$ be the vector formed by averaging the primal iterates $x_t$ over times $t = 1, \dots, T$. Under Assumptions 1 - 2, with constant algorithm step-size $\epsilon_t = 1/\sqrt{T}$, the objective function evaluated at $\bar{x}_T$ satisfies

$$F(\bar{x}_T) - F(x^*) \le O(1/\sqrt{T}). \tag{37}$$

Moreover, the constraint violation evaluated at the average vector $\bar{x}_T$ satisfies

$$\sum_{(i,j)\in\mathcal{E}} \Big[ h_{ij}(\bar{x}_{i,T}, \bar{x}_{j,T}) - \gamma_{ij} \Big]_+ = O(T^{-1/4}). \tag{38}$$

Proof: Consider the expressions in Theorem 1. In particular, to prove (37), we consider the expression in (22), divide it by $T$, and use the convexity of the expected objective $F$ and of the constraint functions $h_{ij}(x_i, x_j)$, which says that the average of function values upper bounds the function evaluated at the average vector, i.e.

$$F(\bar{x}_T) \le \frac{1}{T} \sum_{t=1}^{T} F(x_t), \qquad h_{ij}(\bar{x}_{i,T}, \bar{x}_{j,T}) \le \frac{1}{T} \sum_{t=1}^{T} h_{ij}(x_{i,t}, x_{j,t}). \tag{39}$$

Applying the relation (39) to the expressions in (22) and (23) divided by $T$ yields (37) and (38), respectively.

Corollary 1 shows that the average saddle point primal iterates $\bar{x}_T$ converge to within a margin $O(1/\sqrt{T})$ of the optimal objective $F(x^*)$ in terms of objective function evaluation, where $T$ is the number of iterations.
Moreover, the primal average vector also achieves the bound $O(T^{-1/4})$ on the network proximity constraint violation. To summarize, when a constant step-size is used, with increasing $T$ we have the following relations: the instantaneous iterates converge on average, whereas the average iterates converge to the optimal objective. This pattern also holds in terms of the algorithm's feasibility performance: the time-average constraint violation approaches null with increasing $T$, as does the constraint violation of the average vector. In the next sections, we numerically analyze the performance of the saddle point method for a couple of decentralized sequential estimation problems, illustrating its practical utility.

V. RANDOM FIELD ESTIMATION

Consider the task of estimating a spatially correlated random field in a specified region $\mathcal{A} \subset \mathbb{R}^p$ by making use of the sensor network that we discussed in Sections II and III. Interconnected sensors collect observations $\theta_{i,t}$ which are noisy linear transformations of the signal $x \in \mathbb{R}^p$ they would like to estimate, and which are related through the observation model $\theta_{i,t} = H_i x + w_{i,t}$ with Gaussian noise $w_{i,t} \sim \mathcal{N}(0, \sigma^2 I_q)$ i.i.d. across time and node with $\sigma^2 = 2$. The random field is parameterized by the correlation matrix $R_x$, which is assumed to follow a spatial correlation structure of the form $\rho(x_i, x_j) = e^{-\|l_i - l_j\|}$, where $l_i \in \mathcal{A}$ and $l_j \in \mathcal{A}$ are the respective locations of sensor $i$ and sensor $j$ in the deployed region; see, e.g., [28]. Observe that now each node has a unique signal-to-noise ratio based upon its location, and that information received at more distant nodes is less important; however, their contribution to the aggregate objective $F(x)$ still incentivizes global coordination. This task may be thought of as an online maximum likelihood estimation problem where the estimators of distinct sensors depend on one another.
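The observation and correlation models above can be sketched in a few lines. This is only an illustration under stated assumptions: scalar signals and observations, and hypothetical helper names (`correlation`, `correlation_matrix`, `observe`) and sensor locations that do not come from the paper.

```python
import math
import random

def correlation(l_i, l_j):
    """Distance-based correlation rho = exp(-||l_i - l_j||)."""
    return math.exp(-math.dist(l_i, l_j))

def correlation_matrix(locations):
    """Pairwise correlations for a list of sensor locations."""
    return [[correlation(a, b) for b in locations] for a in locations]

def observe(H_i, x, sigma2, rng):
    """Scalar observation theta = H_i * x + w with w ~ N(0, sigma2)."""
    return H_i * x + rng.gauss(0.0, math.sqrt(sigma2))

# Illustrative locations (assumed, not taken from the experiments).
locations = [(0.0, 0.0), (1.0, 0.0), (0.0, 2.0)]
R = correlation_matrix(locations)

rng = random.Random(0)                     # fixed seed for repeatability
theta = observe(H_i=1.0, x=1.0, sigma2=2.0, rng=rng)
```

Sensors that are farther apart receive a smaller correlation value, which is what later makes the proximity tolerance pair-dependent.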
To solve this problem, we deploy $N = 50$ sensors in a grid formation, where neighboring nodes are a constant distance apart from one another in a $200 \times 200$ meter square region. We make use of the saddle point algorithm [cf. (10) - (11)], whose updates for the random field estimation problem are given by the explicit expressions in (12) and (13), respectively. We select $\gamma_{ij} = \rho(x_i, x_j)$. Besides the local and global losses, which on average converge to a neighborhood of the constrained optima depending on the final iteration index $T$ when a constant step-size is used (Theorem 1), we also study the standard error to the LMSE estimator $x^*$, i.e. $\|x_{i,t} - x^*\|$. To compute $x^*$ for a single time slot, stack observations $\theta = [\theta_1; \cdots; \theta_N]$ and observation models $H = [H_1; \cdots; H_N]$. Then the least mean squared error (LMSE) estimator for a single time slot of this problem is $x^* = \big( H R_x H^T + \tfrac{1}{\sigma^2} I \big)^{-1} \tfrac{1}{\sigma^2} I \theta$. To compute the benchmark LMSE $x^*$ for a given run, we stack signals $\theta_{i,t}$ for all nodes $i$ and times $t$ at a centralized location into one large linear system and substitute the sample variance $\hat{\sigma}^2$ in the prior computation.

We consider problem instances where observations and signal estimates are scalar ($p = q = 1$), the scalar $H = 1$, and the a priori signal $x = 1$ is set to a vector of ones, and run the algorithm for $T = 500$ iterations with a hybrid step-size strategy which is constant for the first $t_0$ iterations and then attenuates, i.e. $\epsilon_t = \min(\epsilon, \epsilon t_0 / t)$ with $t_0 = 100$ and $\epsilon = 10^{-2}$. We further select the dual regularization parameter $\delta = 10^{-5}$. The noise level is set to $\sigma^2 = 10$. We compare the performance of the algorithm with that of a simple LMMSE estimator strategy which does not take advantage of the correlation structure of the sensor network. In Figure 1, we plot the results of this numerical experiment.
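A minimal sketch of the hybrid step-size rule, assuming the schedule reads $\epsilon_t = \min(\epsilon, \epsilon t_0 / t)$, which is the reading consistent with a step-size that is constant for the first $t_0$ iterations and attenuates afterwards. The function name `hybrid_step_size` is hypothetical.

```python
def hybrid_step_size(t, eps=1e-2, t0=100):
    """Step size that equals eps for t <= t0 and decays as eps * t0 / t after.

    Assumes the schedule eps_t = min(eps, eps * t0 / t) with t >= 1.
    """
    if t < 1:
        raise ValueError("iteration index t must be at least 1")
    return min(eps, eps * t0 / t)

# Step sizes over a T = 500 iteration run with the stated defaults.
schedule = [hybrid_step_size(t) for t in range(1, 501)]
```

The constant phase keeps early progress fast, while the $1/t$ tail damps the stochastic-gradient noise late in the run.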
Figure 1a shows the local objective $\mathbb{E}_{\theta_i}[f_i(x_{i,t}, \theta_i)]$ of an arbitrarily chosen node $i \in V$ versus iteration $t$. We observe that the numerical behavior of the global objective is similar to that of the local objective, and it is thus omitted. We see that when nodes incorporate the correlation structure of the random field into their estimation strategy via the quadratic proximity constraint with $\gamma_{ij}$ chosen according to the correlation of node $i$ and its neighbors $j \in n_i$, the estimation performance improves. In particular, to achieve the benchmark $\mathbb{E}_{\theta_i}[f_i(x_i, \theta_i)] \le 0.1$, we require $t = 247$ versus $t = 411$ iterations, respectively, for the case that the correlation structure is exploited via the saddle point algorithm as compared with a simple LMMSE scheme. We observe that for small $t$ the gain is substantial, but for large $t$ the performance is comparable to the LMMSE strategy.

This improved estimation performance is corroborated in the plot of the standard error $\|x_{i,t} - x^*\|$ to the optimal estimator as compared with iteration $t$ in Figure 1b. We see that to achieve the benchmark $\|x_{i,t} - x^*\| \le 0.1$, the saddle point algorithm requires $t = 157$ iterations as compared with $t = 414$ for the LMMSE estimator, more than twice as many. We have observed that the benefit of using the saddle point method as compared with simple LMMSE is more substantial in problem instances where the signal-to-noise ratio is low and the region $\mathcal{A}$ is larger. In the next section, we study the use of the saddle point method for solving the problem of deploying sensors in a spatial region to locate the position of a source signal.

VI. SOURCE LOCALIZATION

We now consider the use of the stochastic saddle point method given in (8) - (9) to solve an online source localization problem. In particular, we consider an array of $N$ sensors, where $l_i \in \mathbb{R}^p$ denotes the position of sensor $i$ in some deployed environment $\mathcal{A} \subset \mathbb{R}^p$.
Each node seeks to learn the location of a source signal $x \in \mathbb{R}^p$ through its access to noisy range observations of the form $r_{i,t} = \|x - l_i\| + \varepsilon_{i,t}$, where $\varepsilon_t = [\varepsilon_{1,t}; \cdots; \varepsilon_{N,t}]$ is some unknown noise vector. The goal of each sensor $i$ in the network is, given access to sequentially observed range measurements $r_{i,t}$, to learn the position of the source $x$, assuming it is aware of its location $l_i$ in the deployed region. Range-based source localization has been studied in a variety of fields, from wireless communications to geophysics [29], [30]. Rather than considering a range-based least squares problem, which is nonconvex and may be solved approximately using semidefinite relaxations [31], we consider the squared range-based least squares (SR-LS) problem, stated as

$$x^* := \operatorname*{argmin}_{x \in \mathbb{R}^p} \sum_{i=1}^{N} \mathbb{E}_{r_i} \Big( \|l_i - x\|^2 - r_i^2 \Big)^2. \tag{40}$$

Although this problem is also nonconvex, it may be solved approximately in a lower-complexity manner as a quadratic program (see [32], Section II-B and references therein). To do so, expand the square in the first term in the objective stated in (40) and consider the modified argument inside the expectation $(\alpha - 2 l_i^T x + \|l_i\|^2 - r_i^2)^2$ with the constraint $\|x\|^2 = \alpha$. Proceeding as in [32], Section II-B, approximate this transformation by a convex unconstrained problem by defining the matrix $A \in \mathbb{R}^{N \times (p+1)}$ whose $i$th row, associated with sensor $i$, is given as $A_i = [-2 l_i^T, 1]$, and the vector $b \in \mathbb{R}^N$ with $i$th entry $b_i = r_i^2 - \|l_i\|^2$, and by relaxing the constraint $\|x\|^2 = \alpha$. Further define $y = [x; \alpha] \in \mathbb{R}^{p+1}$, which allows (40) to be approximated as

$$y^* := \operatorname*{argmin}_{y \in \mathbb{R}^{p+1}} \sum_{i=1}^{N} \mathbb{E}_{b_i} \|A_i y - b_i\|^2, \tag{41}$$

which is a least mean-square error problem. We note that the techniques in [32] to solve this problem exactly do not apply to the online setting [33].
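To make the relaxation concrete, the sketch below builds the rows $A_i = [-2 l_i^T, 1]$ and entries $b_i = r_i^2 - \|l_i\|^2$ and solves the resulting least squares problem in a batch, centralized fashion. This is not the online decentralized method of the paper. It assumes noise-free ranges, in which case $y = [x; \|x\|^2]$ satisfies $A y = b$ exactly, and the sensor positions, source position, and helper names (`srls_row`, `solve_least_squares`) are illustrative.

```python
import math

def srls_row(l_i, r_i):
    """Row A_i = [-2 l_i^T, 1] and entry b_i = r_i^2 - ||l_i||^2."""
    A_i = [-2.0 * c for c in l_i] + [1.0]
    b_i = r_i ** 2 - sum(c * c for c in l_i)
    return A_i, b_i

def solve_least_squares(A, b):
    """Minimize ||A y - b||^2 via the normal equations (A^T A) y = A^T b,
    solved by Gauss-Jordan elimination with partial pivoting."""
    n = len(A[0])
    # Augmented matrix [A^T A | A^T b].
    M = [[sum(row[i] * row[j] for row in A) for j in range(n)]
         + [sum(row[i] * bi for row, bi in zip(A, b))] for i in range(n)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(n):
            if r != col:
                f = M[r][col] / M[col][col]
                M[r] = [a - f * c for a, c in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]

# Illustrative planar setup (assumed values).
sensors = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0), (3.0, 7.0)]
source = (4.0, 6.0)
ranges = [math.dist(source, l) for l in sensors]      # noise-free ranges

rows = [srls_row(l, r) for l, r in zip(sensors, ranges)]
A = [a for a, _ in rows]
b = [bi for _, bi in rows]

y = solve_least_squares(A, b)
x_hat, alpha_hat = y[:2], y[2]   # source estimate and relaxed ||x||^2
```

With noisy ranges the relaxed variable `alpha_hat` generally no longer equals the squared norm of `x_hat`, which is the price of dropping the constraint.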
We propose solving (41) in decentralized settings, which more effectively allow each sensor to operate based on real-time observations. To do so, each sensor keeps a local copy $y_i$ of the global source estimate $y$, which it updates using only local information and message exchanges with neighboring sensors. However, each sensor would still like to attain the greater estimation accuracy associated with aggregating range observations over the entire network. We proceed to illustrate how this may be achieved by using the proximity constrained optimization in Section II.

[Figure 2: (a) Local objective $\mathbb{E} \|A y_{j,t} - b_j\|^2$ vs. iteration $t$; (b) Standard error $\|x_{i,t} - x^*\|$ vs. iteration $t$; (c) Constraint violation $\sum_{j \in n_i} g(y_{i,t}, y_{j,t})$ vs. iteration $t$. Curves: SP-Proximity, SP-Consensus, DOGD.]

Fig. 2: Comparison of proximity and consensus algorithms on the source localization problem stated in (40) using the convex approximation (41) for an $N = 64$ node grid network deployed as an $8 \times 8$ square in a $1000 \times 1000$ meter region, for the case that the noise perturbing observations by node $i$ is zero-mean Gaussian with a variance proportional to its distance to the source as $\sigma^2 = 2 \|l_i - x^*\|$, where $l_i$ is the location of node $i$. We run the saddle point method with proximity constraints [cf. (45) - (46)] given in (44) using dual regularization $\delta = 10^{-7}$, as compared with the saddle point method which executes a consensus constraint (3), as well as Distributed Online Gradient Descent (DOGD) [35], which is a weighted averaging gradient method. For the former two, we use the hybrid step-size strategy $\epsilon_t = \min(\epsilon, \epsilon t_0 / t)$ with $t_0 = 100$ and $\epsilon = 10^{-1.5}$, and for DOGD we use constant step-size $10^{-1.5}$. We observe that the proximity-constrained saddle point method yields the best performance in terms of objective convergence and standard error, although it incurs higher levels of constraint violation.

In application domains such as wireless communications or acoustics [3], the quality of the observed range measurements is better for sensors which are in closer proximity to the source. Motivated by this fact, we consider the case where sensor $i$ weights the importance of neighboring sensors $j \in n_i$ by aiming to keep its estimate $x_i$ within an $\ell_2$ ball centered at its neighbor's estimate $x_j$, whose radius is given by the pairwise minimum of the estimated distance to the source. This goal may be achieved via the quadratic constraint

$$\|x_i - x_j\|^2 \le \min \{ \|x_i - l_i\|^2, \|x_j - l_j\|^2 \} \quad \text{for all } j \in n_i, \tag{42}$$

which, while nonconvex, may be convexified by rearranging the right-hand side of (42) and replacing the resulting maximum by the log-sum-exp function (see [34], Chapter 2). Thus we obtain

$$(1/2) \|x_i - x_j\|^2 + \log \Big( e^{\|x_i - l_i\|^2} + e^{\|x_j - l_j\|^2} \Big) \le 0, \tag{43}$$

which is a convex constraint, since the latter term is a composition of a monotone function with a convex function. Taking (41) together with the constraint (43), and noting that a constraint on $x_i$ is equivalent to a constraint on the first $p$ entries of $y_i$ after appending a $0$ as the $(p+1)$-th entry of $l_i$, we may write

$$\min_{y \in \mathbb{R}^{N(p+1)}} \sum_{i=1}^{N} \mathbb{E}_{b_i} \|A_i y_i - b_i\|^2 \quad \text{s.t.} \quad (1/2) \|y_i - y_j\|^2 + \log \Big( e^{\|y_i - l_i\|^2} + e^{\|y_j - l_j\|^2} \Big) \le 0, \tag{44}$$

where the constraint for node $i$ is with respect to all of its neighbors $j \in n_i$. Observe that the problem in (44) is of the form (4).
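As an illustration of the constraint function on the left-hand side of (43), the sketch below evaluates it and its analytic gradient with respect to the first argument, which is the quantity a primal gradient step on the Lagrangian would need. The helper names are hypothetical, and the gradient is derived directly from the expression as written (the factor 2 comes from differentiating the squared norm inside the exponent); it is not copied from the paper's update.

```python
import math

def sub(u, v):
    return [a - b for a, b in zip(u, v)]

def sq_norm(v):
    return sum(c * c for c in v)

def g(y_i, y_j, l_i, l_j):
    """(1/2)||y_i - y_j||^2 + log(exp(||y_i - l_i||^2) + exp(||y_j - l_j||^2))."""
    a_i = sq_norm(sub(y_i, l_i))
    a_j = sq_norm(sub(y_j, l_j))
    m = max(a_i, a_j)                       # shift to avoid overflow in exp
    lse = m + math.log(math.exp(a_i - m) + math.exp(a_j - m))
    return 0.5 * sq_norm(sub(y_i, y_j)) + lse

def grad_g_yi(y_i, y_j, l_i, l_j):
    """Gradient of g with respect to y_i."""
    a_i = sq_norm(sub(y_i, l_i))
    a_j = sq_norm(sub(y_j, l_j))
    m = max(a_i, a_j)
    # Softmax weight of the y_i term inside the log-sum-exp.
    w_i = math.exp(a_i - m) / (math.exp(a_i - m) + math.exp(a_j - m))
    return [(yi - yj) + 2.0 * w_i * (yi - li)
            for yi, yj, li in zip(y_i, y_j, l_i)]

value = g([1.0, 2.0], [0.5, 1.0], [0.0, 0.0], [1.0, 1.0])
grad = grad_g_yi([1.0, 2.0], [0.5, 1.0], [0.0, 0.0], [1.0, 1.0])
```

Shifting by the larger exponent matters in practice: with squared distances on the order of a $1000 \times 1000$ meter region, a direct call such as `math.exp(1e6)` raises `OverflowError`, whereas the shifted form stays finite.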
Define $g(y_i, y_j)$ as the constraint function on the left-hand side of (43). Then the primal update of the saddle point method stated in (8), specialized to this problem setting for node $i$, is

$$y_{i,t+1} = y_{i,t} - \epsilon_t \Big[ 2 A_{i,t}^T \big( A_{i,t} y_{i,t} - b_{i,t} \big) + \sum_{j \in n_i} \lambda_{ij,t} \Big( \frac{e^{\|y_{i,t} - l_i\|^2} (y_{i,t} - l_i)}{e^{\|y_{i,t} - l_i\|^2} + e^{\|y_{j,t} - l_j\|^2}} + (y_{i,t} - y_{j,t}) \Big) \Big], \tag{45}$$

where we omit the use of set projections for simplicity, while the dual update [cf. (9)] executed at the link layer of the sensor network is

$$\lambda_{ij,t+1} = \Big[ (1 - \delta \epsilon_t) \lambda_{ij,t} + \epsilon_t \, g(y_{i,t}, y_{j,t}) \Big]_+. \tag{46}$$

We turn to analyzing the empirical performance of the saddle point updates (45) - (46) to solve localization problems in a decentralized manner, such that nodes more strongly weight the importance of sensors in closer proximity to the source in the sense of (43). Besides the local objective $\mathbb{E}_{b_i} \|A_i y_i - b_i\|^2$, which we know converges to its constrained optimal value, we also study the standard error to the source signal $x^*$, denoted as $\|x_{i,t} - x^*\|$. Recall that we recover $x_{i,t}$ from $y_{i,t}$ by taking its first $p$ elements.

[Figure 3: (a) Local objective $\mathbb{E} \|A y_{j,t} - b_j\|^2$ vs. iteration $t$; (b) Standard error $\|x_{i,t} - x^*\|$ vs. iteration $t$; (c) Constraint violation $\sum_{j \in n_i} g(y_{i,t}, y_{j,t})$ vs. iteration $t$. Curves: $N = 16$, $N = 64$, $N = 400$.]

Fig. 3: Comparison of the saddle point method with proximity constraints [cf. (45) - (46)] with dual regularization $\delta = 10^{-7}$ and hybrid step-size strategy $\epsilon_t = \min(\epsilon, \epsilon t_0 / t)$ with $t_0 = 100$ and $\epsilon = 10^{-1.5}$ on the source localization problem stated in (40) using the convex approximation (41). We fix the network topology as a grid and vary the number of sensors as $N = 16$, $N = 64$, and $N = 400$, which are deployed in a square region of size $1000 \times 1000$ square meters. The noise perturbing observations by sensor $i$ is zero-mean Gaussian with a variance proportional to its distance to the source as $\sigma^2 = 0.5 \|l_i - x^*\|$, where $l_i$ is the location of node $i$. Observe that in larger networks, the rate at which nodes are able to localize the source is slower in terms of objective convergence and standard error to the true source. Moreover, we see that the level of constraint violation is larger with increasing $N$.

We further consider the magnitude of the constraint violation for this problem, which, when considering the proximity constrained problem in (44), is given by

$$\sum_{j \in n_i} g(y_{i,t}, y_{j,t}) = \sum_{j \in n_i} \Big[ (1/2) \|y_{i,t} - y_{j,t}\|^2 + \log \Big( e^{\|y_{i,t} - l_i\|^2} + e^{\|y_{j,t} - l_j\|^2} \Big) \Big], \tag{47}$$

and, when implementing consensus methods, is given by

$$\sum_{j \in n_i} h(y_{i,t}, y_{j,t}) = \sum_{j \in n_i} \|y_{i,t} - y_{j,t}\| \tag{48}$$

for a randomly chosen sensor in the network. Throughout the rest of this section, we fix the dual regularization parameter $\delta = 10^{-7}$ and study the performance of the saddle point method with proximity constraints as compared with two methods which attempt to satisfy consensus constraints. We further analyze the saddle point method in (45) - (46) for a variety of network sizes to understand the practical effect of the number of sensors on the learning rate, and for different spatial deployment strategies which induce different network topologies.

A. Consensus Comparison

In this subsection, we compare the saddle point method on a proximity constrained problem with methods which implement variations of the consensus protocol.
In particular, we run the saddle point method for the localization problem given in (45) - (46) with proximity constraints, as compared with the same primal-dual scheme when the consensus constraint in (3) is used. We further compare these instantiations of the saddle point method with distributed online gradient descent (DOGD) [35], which is a scheme in which each node selects its next iterate by taking a weighted average of its neighbors' iterates and stepping along the negative of the local stochastic gradient. For each of these methods, we run the localization procedure for a total of $1000$ iterations for $\tilde{T} = 100$ different runs, where each node initializes its local variable $y_{i,0}$ uniformly at random from the unit interval, and plot the sample mean of the results. We consider problem instances of (40) where the number of sensors is fixed at $N = 64$, and the sensors are spatially deployed in a grid formation as an $8 \times 8$ square in a planar ($p = 2$) region of size $1000 \times 1000$. Moreover, the noise perturbing the observations at node $i$ is zero-mean Gaussian with a variance proportional to its distance to the source as $\sigma^2 = 2 \|l_i - x^*\|$, where $l_i$ is the location of node $i$, and the true source signal $x^*$ is located at the average location of the sensors. For the saddle point methods, we find a hybrid step-size strategy to be most effective, and hence set $\epsilon_t = \min(\epsilon, \epsilon t_0 / t)$ with $t_0 = 100$ and $\epsilon = 10^{-1.5}$. For DOGD, we find the best performance to correspond to using a constant outer step-size $\epsilon = 10^{-1.5}$, along with a halving step-size scheme in the inner recursive averaging loop [35].

We plot the results of these methods for this problem instance in Figure 2 for an arbitrarily chosen node $i \in V$. Observe that the saddle point method which implements network proximity constraints yields the best performance in terms of objective convergence.
In particular, by $t = 500$ iterations, in Figure 2a we observe that the saddle point algorithm implemented with proximity constraints (SP-Proximity) achieves objective convergence to a neighborhood, i.e. $\mathbb{E}_{b_i} \|A_i y_{i,t} - b_i\|^2 \le 1$. In contrast, the saddle point method with consensus constraints (SP-Consensus) and DOGD respectively experience numerical oscillations and divergent behavior after a burn-in period of $t = 100$. This trend is confirmed in the plot of the standard error to the optimizer $\|x_{i,t} - x^*\|$ of the original problem (40) in Figure 2b. We see that SP-Proximity yields convergence to a neighborhood between $10^{-1}$ and $1$ by $t = 200$ iterations, whereas SP-Consensus and DOGD experience numerical oscillations and do not appear to localize the source signal $x^*$.

[Figure 4: (a) Local objective $\mathbb{E} \|A y_{j,t} - b_j\|^2$ vs. iteration $t$; (b) Standard error $\|x_{i,t} - x^*\|$ vs. iteration $t$; (c) Constraint violation $\sum_{j \in n_i} g(y_{i,t}, y_{j,t})$ vs. iteration $t$. Curves: Grid, Uniform, Normal.]

Fig. 4: Numerical results on the localization problem stated in (40) using the convex approximation (41) for the saddle point method with proximity constraints [cf. (45) - (46)] with hybrid step-size strategy $\epsilon_t = \min(\epsilon, \epsilon t_0 / t)$ with $t_0 = 100$ and $\epsilon = 10^{-1.5}$. We run the algorithm for a variety of spatial deployment strategies, which induce different network topologies. We consider a square region of size $1000 \times 1000$ square meters, and deploy nodes in grid formations, uniformly at random, and according to a two-dimensional Normal distribution. In the latter two cases, sensors which are a distance of $50$ meters or less apart are connected by an edge. The noise perturbing observations by sensor $i$ is zero-mean Gaussian with a variance proportional to its distance to the source as $\sigma^2 = 0.5 \|l_i - x^*\|$, where $l_i$ is the location of node $i$. We see that while the Normal configuration yields the worst localization performance, it achieves the lowest levels of constraint violation. In contrast, the Uniform and Grid configurations are both effective spatial deployment strategies to localize the source in terms of local objective convergence and standard error.

While SP-Proximity exhibits superior behavior in terms of objective and standard error convergence, it incurs larger levels of constraint violation than its consensus counterparts, as may be observed in Figure 2c. To be specific, SP-Proximity experiences constraint violation [cf. (47)] on average an order of magnitude larger than SP-Consensus and DOGD [cf. (48)] for the first $t = 400$ iterations. After this benchmark, the magnitude of the constraint violation of the different methods converges to around $5$. Thus, we see that achieving smaller constraint violation and implementing consensus constraints may lead to inferior source localization accuracy.

B. Impact of Network Size

In this subsection, we study the effect of the size of the deployed sensor network on the ability of the proximity-constrained saddle point method to effectively localize the source signal. We fix the topology of the deployed sensors as a grid network, and again set the source signal $x^*$ to be the average of node positions in a planar ($p = 2$) spatial region $\mathcal{A}$ of size $1000 \times 1000$ meters. We set the noise distribution which perturbs the range measurements of node $i$ to be zero-mean Gaussian with variance $0.5 \|l_i - x^*\|$.
We run the algorithm stated in (45) - (46) with hybrid step-size strategy $\epsilon_t = \min(\epsilon, \epsilon t_0 / t)$ with $t_0 = 100$ and $\epsilon = 10^{-1.5}$ for a total of $T = 1000$ iterations for $\tilde{T} = 100$ total runs, where each node initializes its local variable $y_{i,0}$ uniformly at random from the unit interval, and plot the sample mean results for problem instances of (44) when the network size is varied as $N = 16$, $N = 64$, $N = 400$, which correspond respectively to $4 \times 4$, $8 \times 8$, and $20 \times 20$ grid sensor formations.

We plot the results of this numerical setup in Figure 3 for a randomly chosen sensor in the network. Observe in Figure 3a, which shows the convergence behavior in terms of the local objective $\mathbb{E}_{b_i} \|A_i y_{i,t} - b_i\|^2$ versus iteration $t$, that the rate at which sensors are able to localize the source is comparable across the different network sizes; however, the convergence accuracy is higher in smaller networks. In particular, by $t = 1000$, we observe that the objective converges to the respective values $0.03$, $0.08$, and $0.14$ for the $N = 16$, $N = 64$, and $N = 400$ node networks.

This relationship between convergence accuracy and the number of sensors in the network is corroborated in the plot of the standard error $\|x_{i,t} - x^*\|$ to the true source $x^*$ in Figure 3b. We see that the standard error across the different networks converges to within a radius of $1$ of the optimum, and that the rate at which convergence is exhibited is comparable across the different network sizes. In particular, by $t = 400$, we observe the standard error benchmarks $0.41$, $0.74$, and $0.9$ for the $N = 16$, $N = 64$, and $N = 400$ node networks. A similar pattern may be gleaned from Figure 3c, in which we plot the magnitude of the constraint violation $\sum_{j \in n_i} g(y_{i,t}, y_{j,t})$ as given in (47) versus iteration $t$. Observe that for the networks with $N = 16$, $N = 64$, and $N = 400$ sensors, respectively, we have the constraint violation benchmarks $2.1$, $4$, and $4.74$ by $t = 300$.
Moreover, the rate at which benchmarks are achieved is comparable across the different network sizes, such that the primary difference in the dual domain is the asymptotic magnitude of constraint violation, but not the dual variable convergence rate.

C. Effect of Spatial Deployment

We turn to studying the impact of the way in which sensors are spatially deployed on their ability to localize the source signal, which implicitly is an analysis of the impact of the network topology on the empirical convergence behavior. To do so, we consider a problem instance in which the source signal $x^*$ is located at the average of sensor positions in the network in a planar ($p = 2$) spatial region $\mathcal{A}$ of size $1000 \times 1000$ meters. Moreover, the noise distribution which perturbs the range measurements received at node $i$ is fixed as zero-mean Gaussian with variance $0.5 \|l_i - x^*\|$, implying that nodes which are closer to the source receive observations with higher SNR. Each node initializes its local variable $y_{i,0}$ uniformly at random from the unit interval, and then executes the saddle point method stated in (45) - (46) for a total of $T = 1000$ iterations for $\tilde{T} = 100$ total runs.

We consider the sample mean results of the $\tilde{T} = 100$ runs for problem instances of (44) when the sensor deployment strategy is either a grid formation, uniformly at random, or according to a two-dimensional Gaussian distribution. In the latter two cases, sensors which are closer than a distance of $50$ meters are connected. Since in general random networks of these types will not be connected, we repeatedly generate such networks until we obtain the first one which has a Fiedler number (second-smallest eigenvalue of the graph Laplacian matrix) comparable to that of the grid network; the Fiedler number is a standard measure of network connectivity (see [36], Ch. 1 for details). We display the results of this localization experiment in Figure 4.
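The rejection-sampling idea behind the random deployments can be sketched as follows. This is a simplified stand-in, not the procedure used in the experiments: it accepts the first sampled network that is connected (equivalently, whose Fiedler number is strictly positive) instead of comparing Fiedler numbers against the grid network, and the region size, node count, and function names are illustrative assumptions chosen so that the loop terminates quickly.

```python
import math
import random
from collections import deque

def geometric_graph(points, radius):
    """Adjacency lists connecting every pair of points within `radius`."""
    n = len(points)
    adj = [[] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(points[i], points[j]) <= radius:
                adj[i].append(j)
                adj[j].append(i)
    return adj

def is_connected(adj):
    """Breadth-first search from node 0; connected iff every node is reached."""
    seen = {0}
    queue = deque([0])
    while queue:
        u = queue.popleft()
        for v in adj[u]:
            if v not in seen:
                seen.add(v)
                queue.append(v)
    return len(seen) == len(adj)

def sample_connected_deployment(n, side, radius, rng, max_tries=1000):
    """Resample uniform deployments until the induced graph is connected."""
    for _ in range(max_tries):
        pts = [(rng.uniform(0.0, side), rng.uniform(0.0, side)) for _ in range(n)]
        adj = geometric_graph(pts, radius)
        if is_connected(adj):
            return pts, adj
    raise RuntimeError("no connected deployment found within max_tries")

rng = random.Random(0)
# Illustrative sizes (assumed): 16 nodes in a 100 x 100 region, 50 m radius.
points, adj = sample_connected_deployment(n=16, side=100.0, radius=50.0, rng=rng)
```

Matching a target Fiedler number would additionally require an eigenvalue computation on the graph Laplacian, which is omitted here to keep the sketch dependency-free.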
In Figure 4a, we plot the local objective as compared with iteration $t$ across these different sensor deployment strategies. We see that sensor localization performance is best in terms of objective convergence in the grid network, followed by network topologies generated from uniform and Normal spatial deployment strategies. In particular, by $t = 400$, the grid, Uniform, and Normal sensor networks achieve the objective ($\mathbb{E}_{b_i} \|A_i y_{i,t} - b_i\|^2$) benchmarks $0.26$, $0.45$, and $0.83$. This trend is complicated by our analysis of these sensor networks' ability to learn the true source $x^*$ as measured by the standard error $\|x_{i,t} - x^*\|$ versus iteration $t$ in Figure 4b. In particular, to achieve the benchmark $\|x_{i,t} - x^*\| \le 1$ we see that the Uniform topology requires $t = 26$ iterations, whereas the grid network requires $t = 557$ iterations, and the Normal network does not achieve this error bound by $t = 1000$. However, we observe that the grid network experiences more stable convergence behavior in terms of its error sequence, as compared with the other two networks.

In Figure 4c, we display the constraint violation [cf. (47)] incurred by the proximity-constrained saddle point method when we vary the sensor deployment strategy. Observe that the grid and Uniform network topologies incur comparable levels of constraint violation, whereas the sensor network induced by choosing spatial locations according to a two-dimensional Gaussian distribution is able to maintain closer levels of network proximity by nearly an order of magnitude.

VII. CONCLUSION

We developed multi-agent stochastic optimization problems where the hypothesis that all agents are trying to learn common parameters may be violated. In doing so, agents make decisions which give preference to locally observed information while incorporating the relevant information of others.
This problem class incorporates sequential estimation problems in multi-agent settings where observations are independent but not identically distributed. We formulated this task as a decentralized stochastic program with convex proximity constraints which incentivize distinct nodes to make decisions which are close to one another. We considered an augmented Lagrangian relaxation of the problem, to which we applied a stochastic variant of the saddle point method of Arrow and Hurwicz. We established that under a constant step-size regime the time-average suboptimality and constraint violation are contained in a neighborhood whose radius vanishes with increasing number of iterations (Theorem 1). As a consequence, we obtain in Corollary 1 that the average primal vectors converge to the optimum while satisfying the network proximity constraints. Numerical analysis on a random field estimation problem in a sensor network illustrated the benefits of using the saddle point method with proximity constraints as compared with a simple LMMSE estimator scheme. We find that these benefits are more pronounced in problem instances with lower SNR and larger spatial regions in which sensors are deployed. We further considered a source localization problem in a sensor network, where sensors collect noisy range estimates whose SNR degrades with their distance to the true source signal. In this problem setting, the proximity-constrained saddle point method outperforms methods which attempt to enforce consensus constraints.

APPENDIX A: PROOF OF PROPOSITION 1

Begin by observing that since we assume that $h_i(x_i, x_j) = h_j(x_j, x_i)$ for all $x_i \in \mathcal{X}$ and $x_j \in \mathcal{X}$, it must be that the gradients are also equal and that, in particular,

$$\nabla_{x_i} h_i(x_{i,t}, x_{j,t}) = \nabla_{x_i} h_j(x_{j,t}, x_{i,t}). \tag{49}$$

To compute the primal stochastic gradient of the Lagrangian in (6), observe that in the instantaneous Lagrangian in (7) only a few summands depend on $x_i$. In the first sum, only the one associated with the local objective $f_i(x_i, \theta_{i,t})$ depends on $x_i$. In the second sum, the terms that depend on $x_i$ include the local constraints $h_i(x_i, x_j) - \gamma_{ij}$ and the neighboring constraints $h_j(x_j, x_i) - \gamma_{ji}$. Taking gradients of these terms and recalling the equality in (49) yields

$$\nabla_{x_i} \hat{\mathcal{L}}_t(x_t, \lambda_t) = \nabla_{x_i} f_i(x_{i,t}; \theta_{i,t}) + \sum_{j \in n_i} (\lambda_{ij,t} + \lambda_{ji,t})^T \nabla_{x_i} h_i(x_{i,t}, x_{j,t}). \tag{50}$$

Writing (8) componentwise and substituting $\nabla_{x_i} \hat{\mathcal{L}}_t(x_t, \lambda_t)$ for its expression in (50), the result in (10) follows. To prove (11), we just need to compute the gradient of the stochastic Lagrangian $\hat{\mathcal{L}}_t(x_t, \lambda_t)$ with respect to the Lagrange multiplier associated with edge $(i, j)$. By noting that only one summand in (7) depends on this multiplier, we conclude that

$$\nabla_{\lambda_{ij}} \hat{\mathcal{L}}_t(x_t, \lambda_t) = h_i(x_{i,t}, x_{j,t}) - \gamma_{ij} - \epsilon_t \delta \lambda_{ij,t}. \tag{51}$$

After gathering terms in (51) and substituting the result into (9), we obtain (11).

APPENDIX B: PROOF OF LEMMA 1

Consider the squared 2-norm of the difference between the iterate $x_{t+1}$ at time $t + 1$ and an arbitrary feasible point $x \in \mathcal{X}^N$, and use (8) to express $x_{t+1}$ in terms of $x_t$,

$$\|x_{t+1} - x\|^2 = \|\mathcal{P}_{\mathcal{X}^N}[x_t - \epsilon_t \nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)] - x\|^2. \tag{52}$$

Since $x \in \mathcal{X}^N$, the distance between the projected vector $\mathcal{P}_{\mathcal{X}^N}[x_t - \epsilon_t \nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)]$ and $x$ is no larger than the distance before projection. Use this fact in (52) and expand the square to write

$$\begin{aligned} \|x_{t+1} - x\|^2 &\leq \|x_t - \epsilon_t \nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t) - x\|^2 \\ &= \|x_t - x\|^2 - 2\epsilon_t \nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)^T (x_t - x) + \epsilon_t^2 \|\nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2. \end{aligned} \tag{53}$$

We reorder terms of the above expression such that the gradient inner product is on the left-hand side, yielding

$$\nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)^T (x_t - x) \leq \frac{1}{2\epsilon_t}\left(\|x_t - x\|^2 - \|x_{t+1} - x\|^2\right) + \frac{\epsilon_t}{2} \|\nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2. \tag{54}$$

Observe now that since the functions $f_{i,t}(x_i, \theta)$ and $h_{ij}(x_i, x_j)$ are convex, the online Lagrangian is a convex function of $x$ [cf. (6)]. Thus, it follows from the first-order convexity condition that

$$\hat{\mathcal{L}}_t(x_t, \lambda_t) - \hat{\mathcal{L}}_t(x, \lambda_t) \leq \nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)^T (x_t - x). \tag{55}$$

Substituting the upper bound in (54) for the right-hand side of the inequality in (55) yields

$$\hat{\mathcal{L}}_t(x_t, \lambda_t) - \hat{\mathcal{L}}_t(x, \lambda_t) \leq \frac{1}{2\epsilon_t}\left(\|x_t - x\|^2 - \|x_{t+1} - x\|^2\right) + \frac{\epsilon_t}{2} \|\nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2. \tag{56}$$

We set this analysis aside and proceed to repeat the steps in (52)-(56) for the distance between the iterate $\lambda_{t+1}$ at time $t + 1$ and an arbitrary multiplier $\lambda$,

$$\|\lambda_{t+1} - \lambda\|^2 = \|[\lambda_t + \epsilon_t \nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)]_+ - \lambda\|^2, \tag{57}$$

where we have substituted (9) to express $\lambda_{t+1}$ in terms of $\lambda_t$. Using the non-expansive property of the projection operator in (57) and expanding the square, we obtain

$$\begin{aligned} \|\lambda_{t+1} - \lambda\|^2 &\leq \|\lambda_t + \epsilon_t \nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t) - \lambda\|^2 \\ &= \|\lambda_t - \lambda\|^2 + 2\epsilon_t \nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)^T (\lambda_t - \lambda) + \epsilon_t^2 \|\nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2. \end{aligned} \tag{58}$$

Reorder terms in the above expression such that the gradient-iterate inner product term is on the left-hand side as

$$\nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)^T (\lambda_t - \lambda) \geq \frac{1}{2\epsilon_t}\left(\|\lambda_{t+1} - \lambda\|^2 - \|\lambda_t - \lambda\|^2\right) - \frac{\epsilon_t}{2} \|\nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2. \tag{59}$$

Note that the online Lagrangian [cf. (6)] is a concave function of its Lagrange multipliers, which implies that instantaneous Lagrangian differences for fixed $x_t$ satisfy

$$\hat{\mathcal{L}}_t(x_t, \lambda_t) - \hat{\mathcal{L}}_t(x_t, \lambda) \geq \nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)^T (\lambda_t - \lambda). \tag{60}$$

By using the lower bound stated in (59) for the right-hand side of (60), we may write

$$\hat{\mathcal{L}}_t(x_t, \lambda_t) - \hat{\mathcal{L}}_t(x_t, \lambda) \geq \frac{1}{2\epsilon_t}\left(\|\lambda_{t+1} - \lambda\|^2 - \|\lambda_t - \lambda\|^2\right) - \frac{\epsilon_t}{2} \|\nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2. \tag{61}$$

We now turn to establishing a telescopic property of the instantaneous Lagrangian by combining the expressions in (56) and (61). To do so, observe that the term $\hat{\mathcal{L}}_t(x_t, \lambda_t)$ appears in both inequalities. Thus, subtracting inequality (61) from (56) and reordering terms yields

$$\hat{\mathcal{L}}_t(x_t, \lambda) - \hat{\mathcal{L}}_t(x, \lambda_t) \leq \frac{1}{2\epsilon_t}\left(\|x_t - x\|^2 - \|x_{t+1} - x\|^2 + \|\lambda_t - \lambda\|^2 - \|\lambda_{t+1} - \lambda\|^2\right) + \frac{\epsilon_t}{2}\left(\|\nabla_x \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2 + \|\nabla_\lambda \hat{\mathcal{L}}_t(x_t, \lambda_t)\|^2\right),$$

which is as stated in (21).

REFERENCES

[1] A. Koppel, B. M. Sadler, and A. Ribeiro, “Proximity without consensus in online multi-agent optimization,” in Proc. Int. Conf. Acoust. Speech Signal Process., March 20-25 2016.
[2] A. Koppel, B. M. Sadler, and A. Ribeiro, “Decentralized online optimization with heterogeneous data sources,” in Proc. IEEE Global Conf. Signal and Info. Processing (submitted), December 7-9 2016.
[3] A. Sayed, A. Tarighat, and N. Khajehnouri, “Network-based wireless location: challenges faced in developing techniques for accurate wireless location information,” Signal Processing Magazine, IEEE, vol. 22, no. 4, pp. 24–40, July 2005.
[4] A. Koppel, G. Warnell, E. Stump, and A. Ribeiro, “D4l: Decentralized dynamic discriminative dictionary learning,” IEEE Trans. Signal and Info. Process. over Networks, vol. (submitted), June 2018, available at http://www.seas.upenn.edu/~aribeiro/wiki.
[5] F. Bullo, J. Cortés, and S. Martínez, Distributed Control of Robotic Networks: A Mathematical Approach to Motion Coordination Algorithms, ser. Princeton Series in Applied Mathematics. Princeton University Press, 2009. [Online].
Available: https://books.google.com/books?id=wRNcSTuK4sMC
[6] Y. Cao, W. Yu, W. Ren, and G. Chen, “An overview of recent progress in the study of distributed multi-agent coordination,” Industrial Informatics, IEEE Transactions on, vol. 9, no. 1, pp. 427–438, 2013.
[7] C. Lopes and A. Sayed, “Diffusion least-mean squares over adaptive networks: Formulation and performance analysis,” Signal Processing, IEEE Transactions on, vol. 56, no. 7, pp. 3122–3136, July 2008.
[8] A. Jadbabaie, J. Lin et al., “Coordination of groups of mobile autonomous agents using nearest neighbor rules,” Automatic Control, IEEE Transactions on, vol. 48, no. 6, pp. 988–1001, 2003.
[9] A. Ribeiro, “Optimal resource allocation in wireless communication and networking,” EURASIP Journal on Wireless Communications and Networking, vol. 2012, no. 1, pp. 1–19, 2012.
[10] A. Ribeiro, “Ergodic stochastic optimization algorithms for wireless communication and networking,” Signal Processing, IEEE Transactions on, vol. 58, no. 12, pp. 6369–6386, Dec 2010.
[11] M. Rabbat and R. Nowak, “Decentralized source localization and tracking [wireless sensor networks],” in Acoustics, Speech, and Signal Processing, 2004. Proceedings. (ICASSP ’04). IEEE International Conference on, vol. 3, May 2004, pp. iii–921–4 vol.3.
[12] I. Schizas, A. Ribeiro, and G. Giannakis, “Consensus in ad hoc wsns with noisy links - part i: distributed estimation of deterministic signals,” IEEE Trans. Signal Process., vol. 56, no. 1, pp. 350–364, Jan. 2008.
[13] V. Cevher, S. Becker, and M. Schmidt, “Convex optimization for big data: Scalable, randomized, and parallel algorithms for big data analytics,” Signal Processing Magazine, IEEE, vol. 31, no. 5, pp. 32–43, Sept 2014.
[14] K. Tsianos, S. Lawlor, and M. Rabbat, “Consensus-based distributed optimization: Practical issues and applications in large-scale machine learning,” in Communication, Control, and Computing (Allerton), 2012 50th Annual Allerton Conference on, Oct 2012, pp. 1543–1550.
[15] D. Jakovetic, J. M. F. Xavier, and J. M. F. Moura, “Fast distributed gradient methods,” CoRR, vol. abs/1112.2972, Apr. 2011.
[16] S. Ram, A. Nedic, and V. Veeravalli, “Distributed stochastic subgradient projection algorithms for convex optimization,” J Optimiz. Theory App., vol. 147, no. 3, pp. 516–545, Sep. 2010.
[17] K. Yuan, Q. Ling, and W. Yin, “On the convergence of decentralized gradient descent,” ArXiv e-prints 1310.7063, Oct. 2013.
[18] M. Rabbat, R. Nowak, and J. Bucklew, “Generalized consensus computation in networked systems with erasure links,” in IEEE 6th Workshop Signal Process. Adv. in Wireless Commun Process., Jun. 5-8 2005, pp. 1088–1092.
[19] F. Jakubiec and A. Ribeiro, “D-map: Distributed maximum a posteriori probability estimation of dynamic systems,” IEEE Trans. Signal Process., vol. 61, no. 2, pp. 450–466, Feb. 2013.
[20] K. Arrow, L. Hurwicz, and H. Uzawa, Studies in linear and non-linear programming, ser. Stanford Mathematical Studies in the Social Sciences. Stanford University Press, Stanford, Dec. 1958, vol. II.
[21] A. Nedic and A. Ozdaglar, “Subgradient methods for saddle-point problems,” J Optimiz. Theory App., vol. 142, no. 1, pp. 205–228, Aug. 2009.
[22] A. Koppel, F. Y. Jakubiec, and A. Ribeiro, “A saddle point algorithm for networked online convex optimization,” in Proc. Int. Conf. Acoust. Speech Signal Process., May 4-9 2014, pp. 8292–8296.
[23] A. Koppel, F. Jakubiec, and A. Ribeiro, “A saddle point algorithm for networked online convex optimization,” IEEE Trans. Signal Process., p. 15, Oct 2015.
[24] A. Koppel, F. Y. Jakubiec, and A. Ribeiro, “Regret bounds of a distributed saddle point algorithm,” in Proc. Int. Conf. Acoust. Speech Signal Process., April 19-24 2015.
[25] Q. Ling and A. Ribeiro, “Decentralized dynamic optimization through the alternating direction method of multipliers,” IEEE Transactions on Signal Processing, vol. 62, no. 5, pp. 1185–1197, 2014.
[26] H. Robbins and S. Monro, “A stochastic approximation method,” Ann. Math. Statist., vol. 22, no. 3, pp. 400–407, 09 1951.
[27] J. Tsitsiklis, D. Bertsekas, and M. Athans, “Distributed asynchronous deterministic and stochastic gradient optimization algorithms,” IEEE Trans. Autom. Control, vol. 31, no. 9, pp. 803–812, 1986.
[28] M. Dong, L. Tong, and B. M. Sadler, “Information retrieval and processing in sensor networks: deterministic scheduling vs. random access,” in Information Theory, 2004. ISIT 2004. Proceedings. International Symposium on, June 2004, pp. 79–.
[29] R. Kozick and B. Sadler, “Accuracy of source localization based on squared-range least squares (sr-ls) criterion,” in Computational Advances in Multi-Sensor Adaptive Processing (CAMSAP), 2009 3rd IEEE International Workshop on, Dec 2009, pp. 37–40.
[30] P. Roux, M. Corciulo, M. Campillo, and D. Dubuq, “Source localization analysis using seismic noise data acquired in exploration geophysics,” AGU Fall Meeting Abstracts, p. C2249, Dec. 2011.
[31] K. Cheung, W.-K. Ma, and H. So, “Accurate approximation algorithm for toa-based maximum likelihood mobile location using semidefinite programming,” in Acoustics, Speech, and Signal Processing, 2004. Proceedings. (ICASSP ’04). IEEE International Conference on, vol. 2, May 2004, pp. ii–145–8 vol.2.
[32] A. Beck, P. Stoica, and J. Li, “Exact and approximate solutions of source localization problems,” Signal Processing, IEEE Transactions on, vol. 56, no. 5, pp. 1770–1778, May 2008.
[33] J. J. Moré, “Generalizations of the trust region problem,” Optimization Methods and Software, vol. 2, pp. 189–209, 1993.
[34] S. Boyd and L. Vanderberghe, Convex Programming. New York, NY: Wiley, 2004.
[35] K. I. Tsianos and M. G. Rabbat, “Distributed strongly convex optimization,” CoRR, vol. abs/1207.3031, Jul. 2012.
[36] F. R. K. Chung, Spectral Graph Theory. American Mathematical Society, 1997.
