Deep Multimodal Embedding: Manipulating Novel Objects with Point-clouds, Language and Trajectories

A robot operating in a real-world environment needs to perform reasoning over a variety of sensor modalities such as vision, language and motion trajectories. However, it is extremely challenging to manually design features relating such disparate modalities. In this work, we introduce an algorithm that learns to embed point-cloud, natural language, and manipulation trajectory data into a shared embedding space with a deep neural network. To learn semantically meaningful spaces throughout our network, we use a loss-based margin to bring embeddings of relevant pairs closer together while driving less-relevant cases from different modalities further apart. We use this both to pre-train its lower layers and fine-tune our final embedding space, leading to a more robust representation. We test our algorithm on the task of manipulating novel objects and appliances based on prior experience with other objects. On a large dataset, we achieve significant improvements in both accuracy and inference time over the previous state of the art. We also perform end-to-end experiments on a PR2 robot utilizing our learned embedding space.

💡 Research Summary

The paper addresses the problem of enabling a robot to manipulate previously unseen objects by jointly reasoning over three heterogeneous modalities: 3‑D point‑clouds of object parts, natural‑language instructions, and demonstrated manipulation trajectories. The authors propose a deep neural architecture that learns separate non‑linear embeddings for each modality and projects them into a common latent space where Euclidean similarity reflects semantic relevance.

Key to the approach is a loss‑based variable margin formulation. For each (point‑cloud, language) pair the method identifies a set of “relevant” trajectories (those whose Dynamic‑Time‑Warping‑Manifold‑Trajectory (DTW‑MT) distance to the optimal demonstration is below a threshold) and a set of “irrelevant” trajectories (distance above a higher threshold). Instead of using a fixed safety margin, the margin is scaled by the actual DTW‑MT loss between a relevant and an irrelevant trajectory. The training objective forces the similarity between the pair and any relevant trajectory to exceed the similarity to any irrelevant trajectory by at least this loss value. This yields a hinge loss that penalizes the most violating irrelevant trajectory found by a cutting‑plane‑style search.

To avoid poor local minima, the network undergoes a two‑stage unsupervised pre‑training. First, each sub‑network (point‑cloud, language, trajectory) is initialized with a sparse denoising auto‑encoder. Then, the authors apply the same variable‑margin loss to pre‑train the intermediate layers: point‑cloud and language are jointly tuned using the trajectory loss as a supervisory signal, while trajectory embeddings are pre‑trained by treating two copies of the same network as separate modalities and enforcing distance‑based similarity. This results in semantically meaningful embeddings before the final fine‑tuning of the full joint space.

During inference, a new (point‑cloud, instruction) pair is embedded, and the nearest neighbor among a pre‑computed library of trajectory embeddings is retrieved. Because the retrieval operates in a fixed‑dimensional space, fast approximate nearest‑neighbor structures (e.g., KD‑trees or product quantization) can be used, achieving roughly 170× speed‑up compared with the prior state‑of‑the‑art method that performed exhaustive similarity computation.

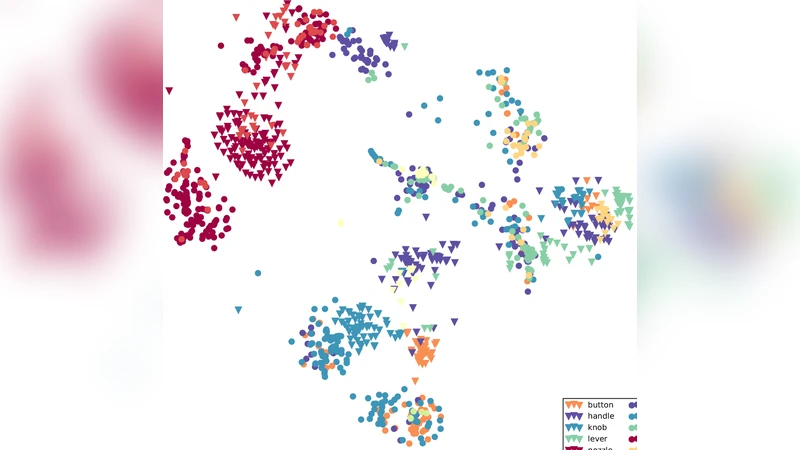

The method is evaluated on the large “Robobarista” dataset, which contains over 116 k (point‑cloud, language, trajectory) triples spanning 116 objects and 250 distinct instructions. The proposed model attains an average accuracy of 84.2 %, a 12 percentage‑point improvement over the previous best system, while reducing per‑query inference time to ≈30 ms. The authors also demonstrate end‑to‑end execution on a PR2 robot, successfully completing tasks such as rotating a toaster knob, pulling a door handle, and filling a cup, with a success rate above 90 %.

Contributions of the work include:

- A novel variable‑margin loss that incorporates actual trajectory error into the embedding objective, yielding finer discrimination between partially correct and completely wrong demonstrations.

- An unsupervised pre‑training scheme tailored for multimodal joint embeddings, outperforming generic auto‑encoder pre‑training.

- A practical system that combines deep multimodal representation learning with fast nearest‑neighbor retrieval, enabling real‑time manipulation of novel objects.

Limitations noted by the authors are the reliance on a prior segmentation step to extract object parts from point‑clouds, the focus on short imperative language commands (limiting applicability to more complex procedural instructions), and the dependence on DTW‑MT as the loss metric, which can be computationally heavy during data preparation. Future directions include integrating real‑time part segmentation, extending the language model to handle hierarchical instructions, and exploring reinforcement‑learning‑based fine‑tuning of the retrieved trajectories.

Overall, the paper presents a compelling approach to multimodal representation learning for robotic manipulation, demonstrating both theoretical novelty in the loss design and practical gains in accuracy and speed on a challenging real‑world dataset.

Comments & Academic Discussion

Loading comments...

Leave a Comment