GRASS: Generative Recursive Autoencoders for Shape Structures

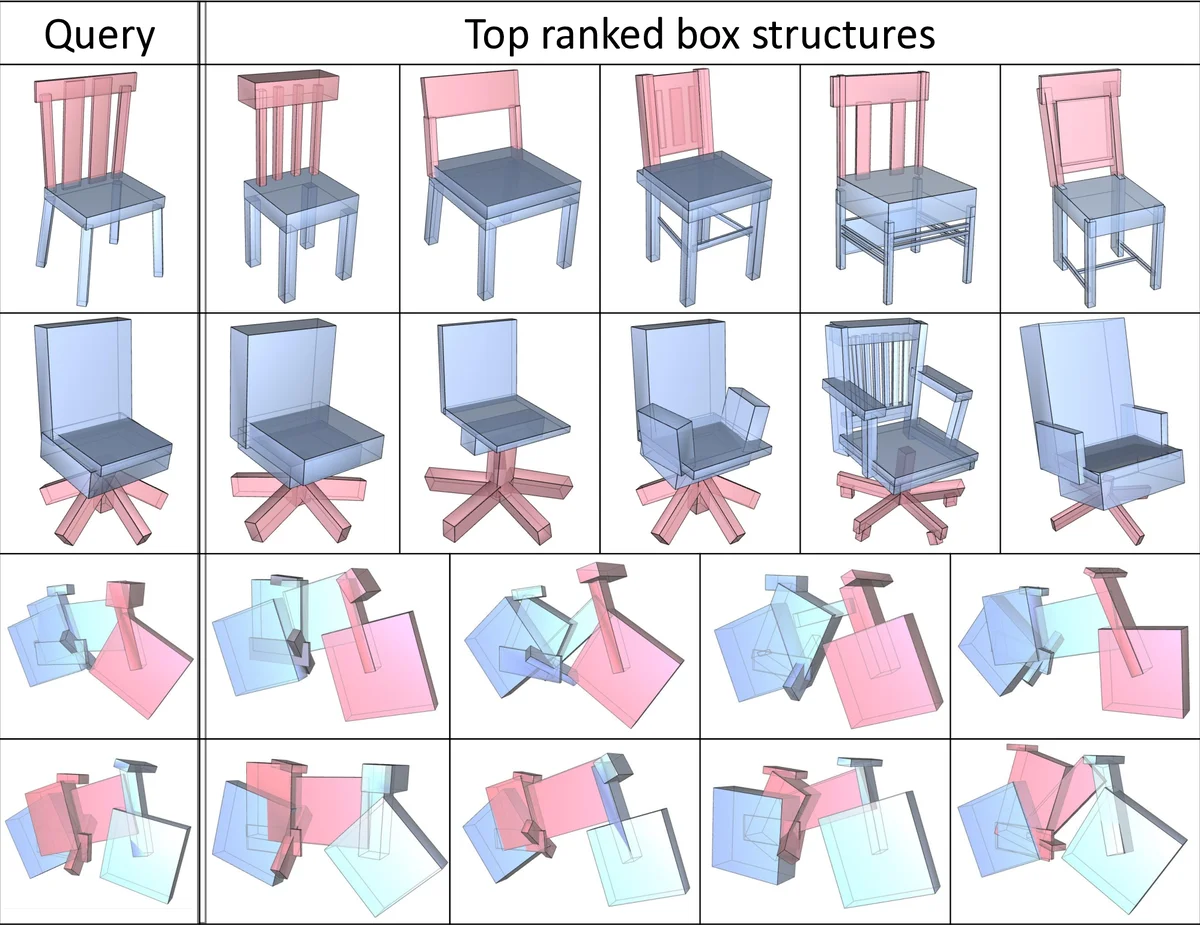

We introduce a novel neural network architecture for encoding and synthesis of 3D shapes, particularly their structures. Our key insight is that 3D shapes are effectively characterized by their hierarchical organization of parts, which reflects fundamental intra-shape relationships such as adjacency and symmetry. We develop a recursive neural net (RvNN) based autoencoder to map a flat, unlabeled, arbitrary part layout to a compact code. The code effectively captures hierarchical structures of man-made 3D objects of varying structural complexities despite being fixed-dimensional: an associated decoder maps a code back to a full hierarchy. The learned bidirectional mapping is further tuned using an adversarial setup to yield a generative model of plausible structures, from which novel structures can be sampled. Finally, our structure synthesis framework is augmented by a second trained module that produces fine-grained part geometry, conditioned on global and local structural context, leading to a full generative pipeline for 3D shapes. We demonstrate that without supervision, our network learns meaningful structural hierarchies adhering to perceptual grouping principles, produces compact codes which enable applications such as shape classification and partial matching, and supports shape synthesis and interpolation with significant variations in topology and geometry.

💡 Research Summary

The paper introduces GRASS (Generative Recursive Autoencoders for Shape Structures), a novel deep learning framework that learns to encode, model, and synthesize the hierarchical part structures of man‑made 3D objects. The authors observe that the most informative representation of a shape is not a voxel grid or point cloud, but a hierarchy of parts defined by adjacency and symmetry relationships. To capture this, each part is abstracted as an oriented bounding box (OBB) and encoded into a fixed‑length vector (e.g., 32‑D).

GRASS consists of three stages.

Stage 1 – Recursive Autoencoder. A recursive neural network (RvNN) processes an arbitrary set of OBBs in a bottom‑up fashion. Two types of internal nodes are defined: (i) assembly nodes, which merge two adjacent parts, and (ii) symmetry nodes, which group multiple parts generated by a common symmetry (reflection, rotation, or translation). The encoder repeatedly collapses pairs (or symmetry groups) of OBB codes into a parent code until a single root code remains. The decoder performs the inverse operation, expanding the root code back into the original OBB layout and the corresponding hierarchy. The reconstruction loss combines geometric errors of the OBB parameters with a penalty for mismatched tree structures, which pushes the network to discover meaningful groupings without any supervision.
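The bottom‑up encoding pass described for Stage 1 can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the weights are random and untrained, the tree is assumed to be given already (the paper's network discovers it), and the names `RecursiveEncoder`, `BOX_DIM`, `SYM_DIM`, and `CODE_DIM`, along with their values, are hypothetical. A symmetry node is assumed to hold one representative child plus a vector of symmetry parameters.

```python
import math
import random

BOX_DIM = 12   # assumed OBB parameterization: center (3), extents (3), two axes (6)
SYM_DIM = 8    # assumed length of the symmetry-parameter vector
CODE_DIM = 16  # illustrative code length, not the paper's value


def make_layer(n_in, n_out, rng):
    """Create random weights and a zero bias for one fully connected layer."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.uniform(-scale, scale) for _ in range(n_in)]
               for _ in range(n_out)]
    return weights, [0.0] * n_out


def apply_layer(layer, x):
    """Compute tanh(W x + b) for a single input vector x."""
    weights, bias = layer
    return [math.tanh(sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(weights, bias)]


class RecursiveEncoder:
    """Untrained stand-in for the RvNN encoder: one layer per node type."""

    def __init__(self, seed=0):
        rng = random.Random(seed)
        self.box_layer = make_layer(BOX_DIM, CODE_DIM, rng)
        self.adj_layer = make_layer(2 * CODE_DIM, CODE_DIM, rng)
        self.sym_layer = make_layer(CODE_DIM + SYM_DIM, CODE_DIM, rng)

    def encode(self, node):
        """Recursively collapse a tree of tuples into one fixed-length code."""
        kind = node[0]
        if kind == "box":    # leaf: ("box", obb_parameters)
            return apply_layer(self.box_layer, node[1])
        if kind == "adj":    # assembly: ("adj", left_subtree, right_subtree)
            left, right = self.encode(node[1]), self.encode(node[2])
            return apply_layer(self.adj_layer, left + right)
        if kind == "sym":    # symmetry: ("sym", child_subtree, sym_parameters)
            child = self.encode(node[1])
            return apply_layer(self.sym_layer, child + list(node[2]))
        raise ValueError(f"unknown node kind: {kind!r}")


# Toy chair: a seat assembled with a symmetry group of legs.
leg = ("box", [0.1] * BOX_DIM)
seat = ("box", [0.5] * BOX_DIM)
legs = ("sym", leg, [0.0] * SYM_DIM)
chair = ("adj", seat, legs)
root_code = RecursiveEncoder().encode(chair)
```

Whatever the size of the input tree, `encode` returns a vector of length `CODE_DIM`, which is the property that lets one fixed‑dimensional code stand for structures of varying complexity.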

Stage 2 – Learning a Plausible Structure Manifold. After the autoencoder is trained, the distribution of root codes for a given object class (e.g., chairs) is modeled with a Generative Adversarial Network (GAN). The generator maps random noise to a low‑dimensional latent vector that lies on the manifold of “realistic” root codes; the discriminator distinguishes these from true root codes produced by the autoencoder. An auxiliary VAE‑GAN reconstruction term ensures that generated codes can be decoded into valid hierarchies. This stage yields a compact, continuous shape space where sampling, interpolation, and blending are straightforward.
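To make the adversarial setup in Stage 2 concrete, here is a sketch of the standard GAN cross‑entropy losses, with the non‑saturating generator variant. The summary does not give the paper's exact objective, so treat this as the textbook formulation rather than the authors' loss. The function names are hypothetical. `real_probs` stands for the discriminator's outputs on root codes produced by the autoencoder, and `fake_probs` for its outputs on generated codes.

```python
import math


def _safe_log(p, eps=1e-12):
    """Natural log, clamped so that p = 0 does not raise an error."""
    return math.log(max(p, eps))


def discriminator_loss(real_probs, fake_probs):
    """Cross-entropy loss: push D(real) toward 1 and D(fake) toward 0."""
    real_term = -sum(_safe_log(p) for p in real_probs) / len(real_probs)
    fake_term = -sum(_safe_log(1.0 - p) for p in fake_probs) / len(fake_probs)
    return real_term + fake_term


def generator_loss(fake_probs):
    """Non-saturating generator loss: push D(fake) toward 1."""
    return -sum(_safe_log(p) for p in fake_probs) / len(fake_probs)
```

In a training loop the two losses would be minimized alternately. The generator's loss falls as the discriminator is fooled more often, which is what pulls sampled codes toward the set of plausible root codes.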

Stage 3 – Part Geometry Synthesis. A hierarchy decoded from a Stage 2 code consists only of OBBs. To obtain detailed geometry, a second trained module (a 3D‑CNN or 3D‑GAN) translates an OBB’s embedding, together with global and local context, into a voxel representation of the actual part. Because this network conditions on both the whole‑object code and the local parent‑child relationship, the same OBB can generate different detailed shapes depending on its position in the hierarchy, enabling context‑aware synthesis.
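The conditioning idea in Stage 3 can be illustrated without the network itself. The sketch below assumes that context is supplied by simple concatenation of the part, parent, and root codes, and that the network emits one occupancy probability per voxel. Both are assumptions made for illustration, and `conditioning_vector` and `binarize` are hypothetical helper names.

```python
def conditioning_vector(part_code, parent_code, root_code):
    """Concatenate local context (part, parent) with global context (root)."""
    return list(part_code) + list(parent_code) + list(root_code)


def binarize(occupancy, resolution, threshold=0.5):
    """Reshape flat occupancy probabilities into a resolution^3 grid of 0/1."""
    r = resolution
    if len(occupancy) != r ** 3:
        raise ValueError("expected resolution**3 occupancy values")
    return [[[1 if occupancy[(x * r + y) * r + z] >= threshold else 0
              for z in range(r)]
             for y in range(r)]
            for x in range(r)]
```

Two parts with identical codes but different parents produce different conditioning vectors, so a network fed this input can synthesize different geometry for the same box.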

The authors evaluate GRASS on several tasks. Unsupervised training automatically discovers symmetry groups and adjacency clusters that align with human perceptual grouping principles. The fixed‑length root codes are used for shape classification via k‑NN, outperforming baseline voxel‑CNNs despite being far more compact. Interpolating between two root codes produces smooth, topology‑changing morphs (e.g., a chair gradually turning into a stool). Moreover, the framework can blend two hierarchies by swapping sub‑trees, yielding novel designs that inherit structural traits from both parents.
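Two of these applications operate directly on root codes and are easy to sketch. The example below assumes Euclidean distance for k‑NN and straight linear interpolation between codes. The summary does not state the exact distance or interpolation scheme used in the paper, and the function names are hypothetical.

```python
import math
from collections import Counter


def knn_classify(query, codes, labels, k=3):
    """Label a root code by majority vote among its k nearest neighbors."""
    ranked = sorted(range(len(codes)), key=lambda i: math.dist(query, codes[i]))
    votes = Counter(labels[i] for i in ranked[:k])
    return votes.most_common(1)[0][0]


def interpolate(code_a, code_b, t):
    """Linearly blend two root codes; t=0 gives code_a and t=1 gives code_b."""
    return [(1.0 - t) * a + t * b for a, b in zip(code_a, code_b)]
```

Each interpolated code would then be passed through the decoder to recover a hierarchy. A change in topology along the path shows up as a change in the decoded tree, not in the code's dimensionality.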

The key contributions are: (1) the first fully generative neural model for structured 3D shape representations; (2) an extended RvNN architecture capable of handling both adjacency‑based assembly and multiple symmetry types within a single recursive framework; and (3) a demonstration that a fixed‑dimensional code can simultaneously encode geometry and hierarchy, enabling downstream applications such as classification, partial matching, interpolation, and part‑level synthesis.

Limitations include the reliance on OBB abstraction, which may not capture highly curved or non‑symmetric parts accurately, and potential instability in learning symmetry group orderings. Future work could replace OBBs with richer parametric primitives, integrate graph neural networks for non‑hierarchical relationships, and explore higher‑resolution geometry generation. Overall, GRASS represents a significant step toward generative models that respect the intrinsic combinatorial structure of man‑made objects, moving beyond voxel‑centric approaches and opening new possibilities for data‑driven design and analysis.
