Is there agreement on the prestige of scholarly book publishers in the Humanities? DELPHI over survey results

Despite having an important role supporting assessment processes, criticism towards evaluation systems and the categorizations used are frequent. Considering the acceptance by the scientific community as an essential issue for using rankings or categorizations in research evaluation, the aim of this paper is testing the results of rankings of scholarly book publishers’ prestige, Scholarly Publishers Indicators (SPI hereafter). SPI is a public, survey-based ranking of scholarly publishers’ prestige (among other indicators). The latest version of the ranking (2014) was based on an expert consultation with a large number of respondents. In order to validate and refine the results for Humanities’ fields as proposed by the assessment agencies, a Delphi technique was applied with a panel of randomly selected experts over the initial rankings. The results show an equalizing effect of the technique over the initial rankings as well as a high degree of concordance between its theoretical aim (consensus among experts) and its empirical results (summarized with Gini Index). The resulting categorization is understood as more conclusive and susceptible of being accepted by those under evaluation.

💡 Research Summary

The paper investigates the reliability and acceptance of the Scholarly Publishers Indicators (SPI), a survey‑based ranking of scholarly book publishers, specifically within the humanities. While SPI 2014 provided a public prestige ranking derived from a large expert consultation, the authors argue that for such rankings to be genuinely useful in research assessment, they must reflect a clear consensus among scholars. To test and refine the SPI results, the study applies the Delphi technique, a structured method for achieving expert agreement, to a randomly selected panel of 30 humanities scholars.

In the first Delphi round, participants rated the prestige of a set of publishers on a 1‑10 scale, reproducing the initial SPI distribution but revealing notable outliers and a skewed concentration of high scores among a few publishers. The second round supplied each expert with aggregated feedback: the mean rating, standard deviation, and the Gini coefficient—a measure of inequality—of the first‑round scores. This feedback encouraged participants to reconsider extreme evaluations. Convergence was rapid: the average absolute change between rounds fell below 0.12 points, and inter‑rater correlation rose to 0.73, indicating strong consistency.

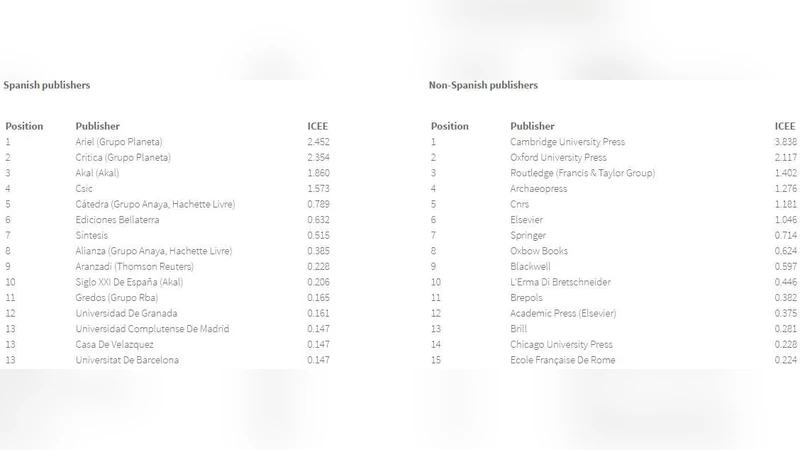

The Gini index dropped from 0.38 in the initial round to 0.21 after the second round, demonstrating a pronounced equalizing effect of the Delphi process. Visualizations (histograms and box‑plots) illustrate how previously inflated scores moved toward the central tendency, confirming that the method mitigates the bias inherent in a single‑shot survey. Based on the refined scores, the authors re‑categorize publishers into four tiers (A‑D). Tier A comprises a small group of internationally renowned houses with high citation impact; tiers B and C include medium‑size, field‑specific publishers with solid reputations; tier D gathers those with limited prestige or insufficient data.

The paper discusses the practical implications for assessment agencies: the revised tier system is more transparent, statistically grounded, and likely to be accepted by scholars undergoing evaluation. Limitations are acknowledged, notably the modest panel size, geographic concentration of experts (predominantly Europe and North America), and the two‑round design. The authors recommend future work to incorporate additional Delphi cycles, broaden expert diversity, and extend the approach to other disciplines to test the generalizability of the method.

In conclusion, the study shows that applying a Delphi consensus process to existing prestige rankings yields a more balanced, statistically robust classification of humanities book publishers. This refined categorization aligns better with the theoretical goal of expert agreement and offers a more defensible tool for research evaluation, potentially increasing the legitimacy and uptake of publisher‑based metrics in scholarly assessment.

Comments & Academic Discussion

Loading comments...

Leave a Comment