Designing labeled graph classifiers by exploiting the Renyi entropy of the dissimilarity representation

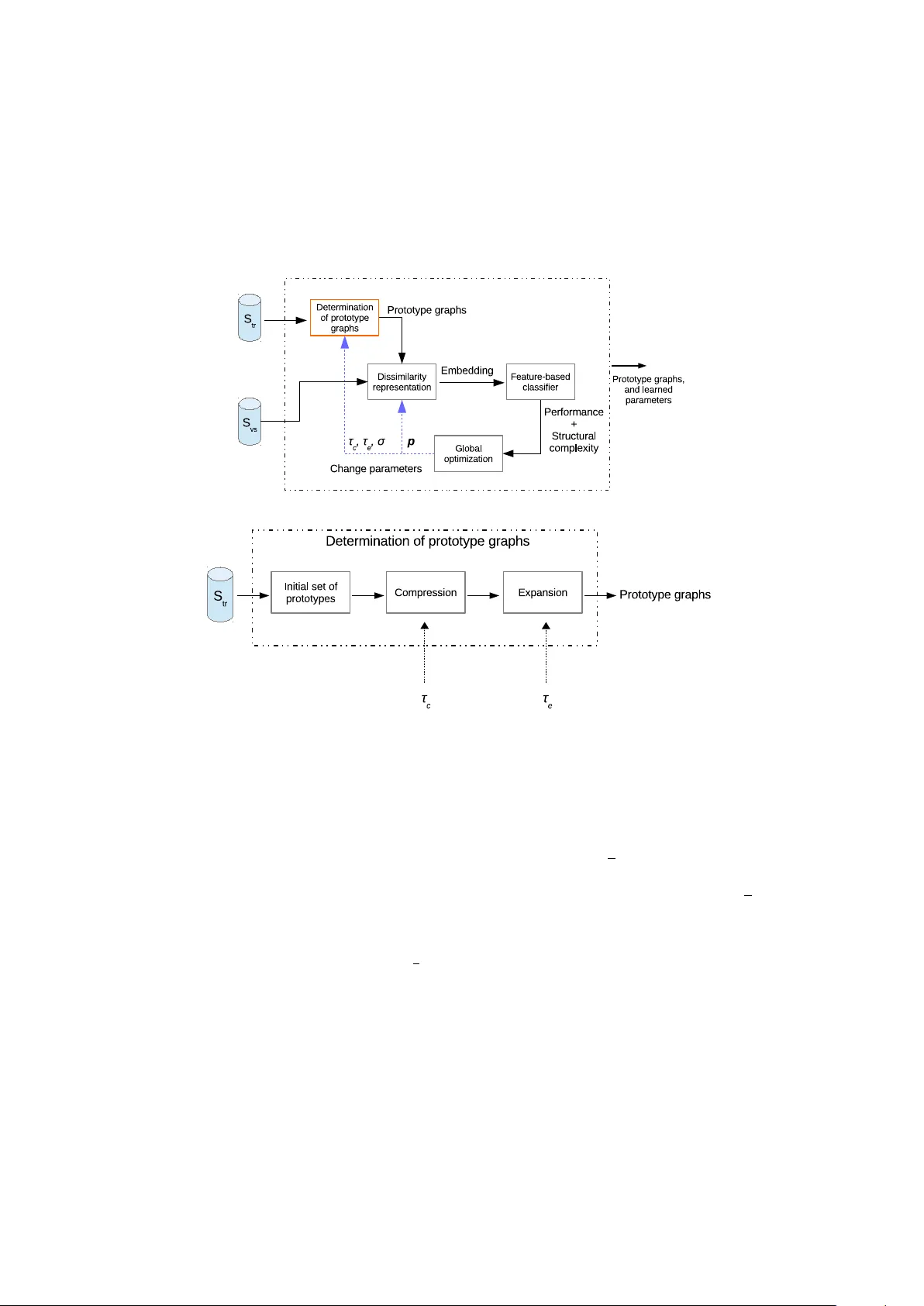

Representing patterns as labeled graphs is becoming increasingly common in the broad field of computational intelligence. Accordingly, a wide repertoire of pattern recognition tools, such as classifiers and knowledge discovery procedures, is nowaday…

Authors: Lorenzo Livi