Entropy? Honest!

Here we deconstruct, and then in a reasoned way reconstruct, the concept of “entropy of a system,” paying particular attention to where the randomness may be coming from. We start with the core concept of entropy as a COUNT associated with a DESCRIPTION; this count (traditionally expressed in logarithmic form for a number of good reasons) is in essence the number of possibilities (specific instances, or “scenarios”) that MATCH that description. Very natural (and virtually inescapable) generalizations of the idea of description are the probability distribution and its quantum-mechanical counterpart, the density operator. We track the process of dynamically updating entropy as a system evolves. Three factors may cause entropy to change: (1) the system’s INTERNAL DYNAMICS; (2) unsolicited EXTERNAL INFLUENCES on it; and (3) the approximations one has to make when one tries to predict the system’s future state. That prediction task is usually hampered by hard-to-quantify aspects of the original description, limited data storage and processing resources, and possibly algorithmic inadequacy. Factors 2 and 3 introduce randomness into one’s predictions and accordingly degrade them. When forecasting, as long as the entropy bookkeeping is conducted in an HONEST fashion, this degradation will ALWAYS lead to an entropy increase. To clarify the above point we introduce the notion of HONEST ENTROPY, which coalesces much of what is of course already done, often tacitly, in responsible entropy-bookkeeping practice. This notion, we believe, will help to fill an expressivity gap in scientific discourse. With its help we shall prove that ANY dynamical system, not just our physical universe, strictly obeys Clausius’s original formulation of the second law of thermodynamics IF AND ONLY IF it is invertible. Thus this law is a TAUTOLOGICAL PROPERTY of invertible systems!
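
The abstract's third factor (approximations forced by limited storage and processing) can be illustrated with a toy predictor. The sketch below is a minimal illustration under stated assumptions, not the paper's construction: the description is a probability vector over eight states, and the "limited-memory" approximation is block averaging, implemented by a hypothetical `coarse_grain` helper. Block averaging is a doubly stochastic operation, so the Shannon entropy of the approximate description can never be lower than that of the exact one.

```python
import math

def shannon_bits(p):
    """Shannon entropy, in bits, of a probability vector (zero entries contribute 0)."""
    return -sum(x * math.log2(x) for x in p if x > 0)

def coarse_grain(p, block):
    """Hypothetical limited-memory predictor: keep only the total probability of
    each run of `block` consecutive states, then spread that total uniformly
    back over the run when a prediction is needed."""
    out = []
    for start in range(0, len(p), block):
        cell = p[start:start + block]
        avg = sum(cell) / len(cell)
        out.extend([avg] * len(cell))
    return out

# Fine-grained description: sharply peaked over 8 states.
fine = [0.70, 0.10, 0.05, 0.05, 0.04, 0.03, 0.02, 0.01]
# Approximate description the predictor can afford to store: 2 cells of 4 states.
coarse = coarse_grain(fine, block=4)

print(f"fine: {shannon_bits(fine):.3f} bits, coarse: {shannon_bits(coarse):.3f} bits")
```

Honest bookkeeping here means reporting the entropy of `coarse`, the description actually used for prediction, rather than that of `fine`, which the predictor no longer has.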

💡 Research Summary

The paper “Entropy? Honest!” offers a fresh, philosophically grounded reinterpretation of entropy and introduces the notion of “Honest Entropy.” It begins by defining entropy as the count of all microscopic configurations that satisfy a given macroscopic description. This definition coincides with Boltzmann’s S = k ln W, where W is that count, but the author stresses that the logarithm is a convenient mathematical device (for unit consistency, additivity, and scale reduction) rather than an intrinsic physical requirement. The description itself can be generalized to a probability distribution or, in quantum mechanics, to a density operator, thereby unifying classical and quantum treatments under a single counting framework.
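
The counting definition, and the convenience role assigned to the logarithm, can be checked on a toy system. This is a minimal sketch, assuming microstates are configurations of four coins and the description is "exactly two heads"; the helper names are hypothetical. It shows that log W equals the Shannon entropy of a uniform distribution over the W matching states, and that the logarithm turns the multiplied counts of independent systems into added entropies.

```python
import math
from itertools import product

def count_matching(states, description):
    """W: the number of microstates that satisfy a description (given as a predicate)."""
    return sum(1 for s in states if description(s))

def shannon_bits(p):
    """Shannon entropy in bits; zero probabilities contribute nothing."""
    return -sum(x * math.log2(x) for x in p if x > 0)

# Microstates: every configuration of 4 coins (1 = heads).
states = list(product([0, 1], repeat=4))

# Description: "exactly two heads".
W = count_matching(states, lambda s: sum(s) == 2)

# Counting entropy in bits, i.e. S = k ln W with k = 1/ln 2.
S_count = math.log2(W)

# The same description recast as a uniform distribution over the W matching states.
S_dist = shannon_bits([1 / W] * W)

# Additivity: two independent copies have W * W joint microstates,
# and the logarithm turns that product into a sum.
S_pair = math.log2(W * W)
```

Measuring in bits is only a choice of the constant k; using `math.log` instead would give the same quantities in nats.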

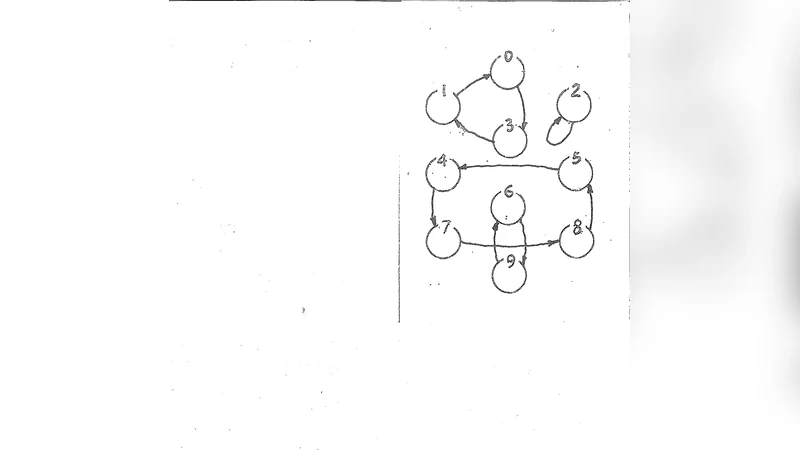

The author then identifies three distinct mechanisms that can change a system’s entropy over time: (1) internal dynamics, (2) unsolicited external influences, and (3) approximations made when predicting future states. For internal dynamics, the decisive question is whether the evolution is invertible. In an invertible (reversible) evolution, the mapping between microstates is one-to-one, so the count of compatible microstates, and thus the entropy, remains unchanged. In a non-invertible evolution, distinct microstates can be mapped onto the same successor, so the count can shrink. Familiar irreversible processes such as friction and mixing do not create new microstates; in this framework their entropy increase reflects fine-grained detail that is no longer tracked, i.e., the third mechanism. External influences inject energy or information that the observer cannot fully characterize; honest bookkeeping records this uncertainty as an entropy increase. Approximation errors arise from limited memory, limited computational resources, incomplete data, or algorithmic inadequacy. Because the model cannot capture the exact microscopic state, it must resort to a coarser, probabilistic description, which again raises entropy.
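
The first two mechanisms can be made concrete with set-valued descriptions. The sketch below assumes a toy state space {0, ..., 7}, a description given as the set of states it allows, and entropy taken as log2 of that set's size; the specific maps, the `kicks` model of an unknown external influence, and all helper names are hypothetical illustrations rather than the paper's definitions.

```python
import math

N = 8  # toy state space {0, ..., 7}

def entropy_bits(description):
    """Entropy of a set-valued description: log2 of the number of matching states."""
    return math.log2(len(description))

def evolve(description, step):
    """Deterministic internal dynamics: the new description is the image of the old one."""
    return {step(s) for s in description}

def perturb(description, kicks):
    """Honest treatment of an unknown external kick (hypothetical model): any of the
    listed shifts may have happened, so every possible outcome is kept."""
    return {(s + k) % N for s in description for k in kicks}

D = {0, 1, 2, 3}                                   # "the state is below 4"

invertible = evolve(D, lambda s: (3 * s + 1) % N)  # a bijection on {0..7}, since 3 is odd
non_invertible = evolve(D, lambda s: s // 2)       # merges pairs of states
kicked = perturb(D, kicks=(0, 1))                  # unknown shift by 0 or 1

for name, d in [("start", D), ("invertible", invertible),
                ("non-invertible", non_invertible), ("kicked", kicked)]:
    print(name, sorted(d), round(entropy_bits(d), 3))
```

The invertible step relabels the four allowed states without changing their number, the non-invertible step merges them into two, and the honestly recorded kick enlarges the description to five states.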

“Honest Entropy” is defined as a bookkeeping practice that records, in a fully transparent way, the randomness introduced by the latter two mechanisms. The observer explicitly separates known from unknown information, represents the latter by a probability distribution, and incorporates it into the entropy calculation. Because every source of uncertainty is recorded rather than discarded, external influences and approximations can only add to the entropy, so under invertible internal dynamics the recorded entropy never decreases. This contrasts with informal or “lazy” treatments in which hidden variables are ignored, which can produce apparent entropy reductions that seem to violate the second law.
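
The known/unknown split can be sketched probabilistically. Under the assumption (ours, for illustration) that an unknown external influence applies one of several invertible relabelings of the states with known probabilities, honest bookkeeping predicts the probability-weighted mixture of the relabeled distributions. By concavity of Shannon entropy, that mixture never has lower entropy than the original. The "lazy" update, which keeps only the most likely outcome, is a hypothetical stand-in for the informal treatments mentioned in the text.

```python
import math

def shannon_bits(p):
    """Shannon entropy in bits; zero probabilities contribute nothing."""
    return -sum(x * math.log2(x) for x in p if x > 0)

def permute(p, perm):
    """Move the probability of state i to state perm[i] (an invertible relabeling)."""
    out = [0.0] * len(p)
    for i, x in enumerate(p):
        out[perm[i]] += x
    return out

def honest_update(p, influences):
    """Honest bookkeeping: the external influence is one of several permutations,
    occurring with probabilities q; the prediction is their weighted mixture."""
    out = [0.0] * len(p)
    for q, perm in influences:
        for i, x in enumerate(permute(p, perm)):
            out[i] += q * x
    return out

p = [0.5, 0.3, 0.2, 0.0]
influences = [(0.8, [0, 1, 2, 3]),   # hypothetical: nothing happens
              (0.2, [1, 2, 3, 0])]   # hypothetical: cyclic shift by one state

honest = honest_update(p, influences)
lazy = permute(p, influences[0][1])  # keeps only the most likely outcome, discards the rest

print(round(shannon_bits(p), 3), round(shannon_bits(lazy), 3), round(shannon_bits(honest), 3))
```

The lazy update reports no change in entropy even though the observer genuinely no longer knows which relabeling occurred; the honest update records that ignorance as an increase.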

The central theorem proved in the paper states that, with entropy accounted for honestly, a system obeys Clausius’s formulation of the second law (the entropy of an isolated system never decreases) if and only if its dynamics is invertible. Invertible dynamics maps compatible microstates one-to-one, so the count is preserved, and the honestly recorded randomness from external influences and approximations can only increase it; non-invertible dynamics, by contrast, can merge distinct states and so lower the count. In other words, the second law is not a deep physical principle but a tautological consequence of invertibility when entropy is accounted for honestly. The paper thus reframes the second law as a definitionally true property of invertible systems rather than an empirical law.
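
The "if and only if" can be checked by brute force in the simplest setting. This sketch is restricted to deterministic internal dynamics on a three-state set with set-valued descriptions and no external influences; it is an exhaustive check on a toy case, not the paper's proof. For each of the 27 possible maps it tests whether the count of allowed states ever drops, and compares the result with whether the map is a bijection.

```python
from itertools import combinations, product

def never_decreases(f, n):
    """True if, for every nonempty description D of {0..n-1}, the image f(D)
    has at least as many states as D (the count-based entropy never drops)."""
    for r in range(1, n + 1):
        for D in combinations(range(n), r):
            if len({f[s] for s in D}) < len(D):
                return False
    return True

def is_invertible(f, n):
    """A map on a finite set is invertible iff it is a bijection."""
    return len(set(f)) == n

n = 3
maps = list(product(range(n), repeat=n))  # all maps, encoded as f[s] = successor of s
agree = all(never_decreases(f, n) == is_invertible(f, n) for f in maps)
print(len(maps), agree)
```

The reasoning behind the check is short: if f(s) = f(t) for some s ≠ t, the description {s, t} shrinks to a single state, while an injective map preserves the size of every description.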

To clarify common misconceptions, the author enumerates seven “myths” about entropy: (1) the belief that Shannon information entropy is fundamentally different from thermodynamic entropy; (2) the idea that entropy is a material property attached to a physical object; (3) the notion that entropy always increases monotonically, ignoring statistical fluctuations; (4) the claim that a deck of cards has an intrinsic entropy measurable by inspection; (5) the assumption that logarithms are essential to the concept of entropy; (6) the view that entropy is directly measurable like temperature or pressure; (7) the conviction that the second law is a universal physical law independent of information‑theoretic considerations. Each myth is dissected, showing how honest entropy resolves the underlying confusion by explicitly tracking the source of randomness.
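
Myths (2), (4), and (6) share a point that a short calculation makes vivid: the number attached to a deck of cards depends on the description, not on the deck. The sketch assumes each description is the set of orderings it allows, all equally weighted; the helper name is hypothetical.

```python
import math

def log2_factorial(n):
    """log2(n!) via lgamma, avoiding the need to build the huge integer n!."""
    return math.lgamma(n + 1) / math.log(2)

# One physical deck, three descriptions; each description is taken to be the set
# of orderings it allows, all equally weighted.
unknown_order = log2_factorial(52)    # nothing known: 52! orderings remain
top_card_seen = log2_factorial(51)    # top card observed: 51! orderings remain
fully_recorded = math.log2(1)         # full order written down: 1 ordering remains

print(f"{unknown_order:.2f} bits, {top_card_seen:.2f} bits, {fully_recorded} bits")
```

Nothing about the cards changes between the three lines; only the description does, which is why inspecting the deck alone cannot yield "its" entropy.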

A concise historical survey follows, tracing the evolution of the entropy concept from Clausius, whose work beginning in 1850 led him to introduce and name “entropy” in 1865 by analogy with “energy,” through Boltzmann (1877), who linked it to the logarithm of the number of microstates, to Gibbs (1902), who generalized it to ensembles as S = −k Σᵢ pᵢ ln pᵢ, and finally to von Neumann (1927), who incorporated quantum uncertainty via the density operator ρ, with S = −k Tr(ρ ln ρ). The author argues that each step can be viewed as a successive refinement of the same counting principle, culminating naturally in the honest-entropy framework.
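
The nesting of these formulas can be checked numerically. The sketch below is restricted, by assumption, to a single qubit with a real symmetric density matrix, so the eigenvalues have a closed form; a general implementation would diagonalize ρ with a numerical library. It shows that the von Neumann entropy equals the Gibbs entropy of ρ's eigenvalues, reduces to Gibbs on the diagonal when ρ is diagonal, and that Gibbs with a uniform distribution gives Boltzmann's ln W. Helper names are hypothetical and k = 1.

```python
import math

def gibbs_entropy(p, k=1.0):
    """S = -k * sum_i p_i ln p_i (zero probabilities contribute nothing)."""
    return -k * sum(x * math.log(x) for x in p if x > 0)

def eigenvalues_2x2_symmetric(a, b, d):
    """Eigenvalues of the real symmetric matrix [[a, b], [b, d]]."""
    mean = (a + d) / 2
    radius = math.hypot((a - d) / 2, b)
    return [mean + radius, mean - radius]

def von_neumann_entropy_2x2(a, b, d, k=1.0):
    """S(rho) = -k Tr(rho ln rho), evaluated through the eigenvalues of rho."""
    return gibbs_entropy(eigenvalues_2x2_symmetric(a, b, d), k)

# Diagonal density matrix: von Neumann reduces to Gibbs on the diagonal entries.
diag = von_neumann_entropy_2x2(0.7, 0.0, 0.3)
# Pure state |+><+| = [[0.5, 0.5], [0.5, 0.5]]: zero entropy, even though its diagonal is uniform.
pure = von_neumann_entropy_2x2(0.5, 0.5, 0.5)
# Maximally mixed qubit: entropy ln 2.
mixed = von_neumann_entropy_2x2(0.5, 0.0, 0.5)
```

The pure-state case shows why the quantum step is a genuine refinement: reading only the diagonal of ρ would suggest ln 2, while the full density operator records that the state is completely known.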

The paper concludes by suggesting practical applications of honest entropy across scientific, engineering, and societal domains. In predictive modeling, explicitly accounting for uncertainty prevents hidden information loss and safeguards against apparent violations of the second law. In data compression, cryptography, economic modeling, and biological systems, honest entropy provides a rigorous “information accounting” tool that can improve transparency, reliability, and robustness.

Overall, the work reframes entropy as an information-theoretic bookkeeping device, demonstrates that when randomness is recorded honestly, entropy can never decrease in an invertible system (whereas non-invertible dynamics can lower it), and positions the second law of thermodynamics as a definitionally true statement about invertible dynamics. This perspective offers a meta-level insight that bridges physics, information theory, and philosophy of science.
