LiftUpp: Support to develop learner performance

Various motivations exist to move away from the simple assessment of knowledge towards the more complex assessment and development of competence. However, such a change places high demands on the supporting e-infrastructure, which must collect and analyse data intelligently. In this paper, we discuss these challenges and how they are being addressed by LiftUpp, a system that is now used in 70% of UK dental schools and is finding wider applications in physiotherapy, medicine and veterinary science. We describe how data is collected for workplace-based development in dentistry using a dedicated iPad app, which enables an integrated approach to linking and assessing workflows, skills and learning outcomes. Furthermore, we detail how the various forms of collected data can be fused, visualized and integrated with conventional forms of assessment. This enables curriculum integration, improved real-time student feedback, support for administration, and informed instructional planning. Together these facets contribute to better support for the development of learners’ competence in situated learning settings, as well as an improved learner experience. Finally, we discuss several directions for future research on intelligent teaching systems that the design of LiftUpp makes possible.

💡 Research Summary

The paper presents LiftUpp, a comprehensive digital platform designed to support workplace‑based assessment and competence development in health‑professional education, with a primary focus on dentistry. Recognising that modern graduate outcomes demand not only knowledge but demonstrable performance in real‑world contexts, the authors argue that traditional assessment systems are insufficient and that new e‑infrastructure is required to collect, fuse, and analyse complex data streams.

LiftUpp’s architecture consists of a central “core” that stores learning outcomes (both internal curriculum and external accreditation requirements), exam questions, and detailed workflow items that represent the steps of clinical procedures. This core is linked one‑to‑many with three functional modules: an assessment‑building module (supporting exam design, psychometrics, QA, and feedback), an iPad‑based data‑collection module, and a web‑portal for administration, analytics, and visualisation. By mapping every assessment artefact to learning outcomes, the system enables automatic verification of accreditation compliance and semi‑automatic generation of exams that satisfy those outcomes.
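Because every question is mapped to learning outcomes, both the compliance check and exam assembly reduce to set operations. The sketch below is an illustration under stated assumptions, not LiftUpp's implementation: the `ExamQuestion` type, the outcome IDs, and the use of greedy set cover for "semi-automatic generation" are all hypothetical choices made here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExamQuestion:
    qid: str
    outcomes: frozenset  # IDs of the learning outcomes this question is mapped to (hypothetical)


def uncovered_outcomes(required, bank):
    """Return the required outcomes that no question in the bank is mapped to."""
    covered = set().union(*(q.outcomes for q in bank))
    return set(required) - covered


def assemble_exam(required, bank):
    """Greedy set cover (an assumed strategy): repeatedly pick the question that
    covers the most still-uncovered outcomes until every outcome is covered."""
    remaining = set(required)
    candidates = list(bank)
    chosen = []
    while remaining:
        best = max(candidates, key=lambda q: len(q.outcomes & remaining), default=None)
        if best is None or not best.outcomes & remaining:
            raise ValueError(f"question bank cannot cover: {sorted(remaining)}")
        chosen.append(best)
        remaining -= best.outcomes
        candidates.remove(best)
    return chosen


# Hypothetical outcome and question IDs, for illustration only.
bank = [
    ExamQuestion("q1", frozenset({"LO1", "LO2"})),
    ExamQuestion("q2", frozenset({"LO2"})),
    ExamQuestion("q3", frozenset({"LO3"})),
]
gaps = uncovered_outcomes({"LO1", "LO2", "LO3", "LO4"}, bank)  # {"LO4"}
exam = assemble_exam({"LO1", "LO2", "LO3"}, bank)              # q1, then q3
```

Greedy set cover is not guaranteed to find the smallest exam, but it is simple and explains why each question was chosen; a real system would also weigh difficulty, blueprint quotas, and item quality.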

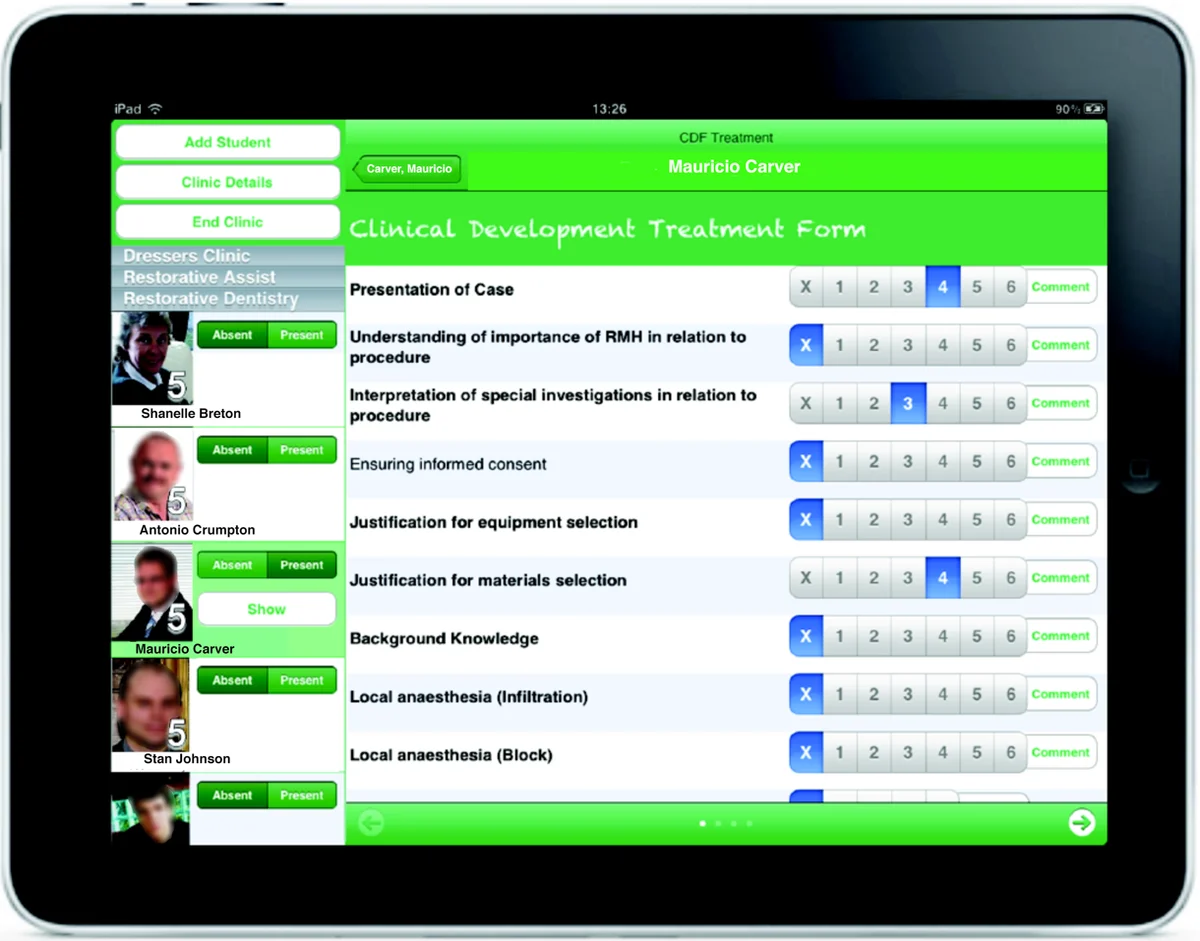

Data collection is performed in clinical settings using a purpose‑built iPad app. Observers (clinical staff) record student performance on a six‑point “developmental indicator” scale for each workflow item. The scale is grounded in evidence that links higher points to greater learner independence, and its reliability has been validated. The workflow model orders observations in the natural sequence of a procedure, allowing observers to capture up to 18 data points per session with minimal disruption. Over a typical dental programme, a student accumulates roughly 5,000 observations across 30 skills, providing a rich longitudinal record. The app is location‑aware, works offline, and automatically synchronises data to the core once connectivity is restored. After each session, students receive personalised feedback on the device and must acknowledge receipt, ensuring a closed feedback loop.
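The offline-first behaviour described above can be sketched as a local queue that always accepts observations and flushes them, in workflow order, when a connection is available. This is a minimal illustration, not the app's code: the `Observation` fields, the `upload` callable standing in for the sync endpoint, and the workflow item names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Observation:
    student_id: str
    session_id: str
    step: int            # position of the workflow item within the procedure
    workflow_item: str
    indicator: int       # developmental indicator: 1 (least) to 6 (most independent)
    recorded_at: datetime

    def __post_init__(self):
        if not 1 <= self.indicator <= 6:
            raise ValueError("indicator must lie on the 1-6 scale")


class OfflineQueue:
    """Buffers observations on the device and uploads them once a connection exists."""

    def __init__(self, upload):
        self._upload = upload          # hypothetical sync call: list[Observation] -> None
        self._pending = []

    @property
    def pending_count(self):
        return len(self._pending)

    def record(self, obs):
        self._pending.append(obs)      # always succeeds, even with no connectivity

    def sync(self, online):
        """Upload pending observations in workflow order; return how many were sent."""
        if not online or not self._pending:
            return 0
        batch = sorted(self._pending, key=lambda o: (o.session_id, o.step))
        try:
            self._upload(batch)
        except ConnectionError:
            return 0                   # keep everything queued for the next attempt
        self._pending.clear()
        return len(batch)


# Illustrative usage: a list stands in for the central core.
core = []
queue = OfflineQueue(core.extend)
t = datetime(2024, 1, 1, tzinfo=timezone.utc)
queue.record(Observation("s1", "sess1", 2, "local anaesthesia", 4, t))
queue.record(Observation("s1", "sess1", 1, "medical history", 5, t))
sent_offline = queue.sync(online=False)   # 0: still offline, nothing lost
sent_online = queue.sync(online=True)     # 2: both observations uploaded in step order
```

Clearing the queue only after a successful upload means a dropped connection never loses data; the trade-off is that a partially accepted batch could be re-sent, so the receiving side would need to de-duplicate.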

The platform fuses these workplace observations with traditional assessment data (written exams, OSCEs, portfolios). Because every data point is linked to learning outcomes, the system can generate dashboards that display “sessional consistency” – the proportion of a student’s sessions in which performance remains stable at a target level. This moves assessment from simple counting of procedures to a nuanced view of sustained competence. The visualisation tools also support curriculum designers by highlighting gaps between taught content and assessed outcomes, and by providing item‑level performance analytics for continuous improvement of exam questions.
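Both analytics can be sketched in a few lines. The summary does not give an exact formula for sessional consistency, so the rule used here (a session counts if every indicator in it reaches the target) is an assumption; the item analysis uses two standard classical-test-theory statistics, facility (proportion correct) and the point-biserial correlation with the total score, which may differ from what LiftUpp actually reports.

```python
from collections import defaultdict
from statistics import mean, pstdev


def sessional_consistency(observations, target=5):
    """observations: (session_id, indicator) pairs for one student on one skill.
    Assumed rule: a session is consistent if every indicator in it is >= target."""
    by_session = defaultdict(list)
    for session_id, indicator in observations:
        by_session[session_id].append(indicator)
    if not by_session:
        return 0.0
    consistent = sum(1 for scores in by_session.values() if min(scores) >= target)
    return consistent / len(by_session)


def item_analysis(responses):
    """responses: student -> {item: 0 or 1}. Returns item -> (facility, r_pb), where
    facility is the proportion correct and r_pb the point-biserial correlation between
    the item and the total score (None when undefined)."""
    students = list(responses)
    totals = [sum(responses[s].values()) for s in students]
    sd = pstdev(totals)
    results = {}
    for item in responses[students[0]]:
        marks = [responses[s][item] for s in students]
        p = mean(marks)
        if sd == 0 or p in (0, 1):
            results[item] = (p, None)
            continue
        m1 = mean(t for t, m in zip(totals, marks) if m == 1)   # mean total, correct
        m0 = mean(t for t, m in zip(totals, marks) if m == 0)   # mean total, incorrect
        results[item] = (p, (m1 - m0) / sd * (p * (1 - p)) ** 0.5)
    return results


session_log = [("a", 5), ("a", 6), ("b", 4), ("b", 6), ("c", 5)]
sc = sessional_consistency(session_log)   # sessions a and c qualify: 2/3
responses = {
    "s1": {"q1": 1, "q2": 1},
    "s2": {"q1": 1, "q2": 0},
    "s3": {"q1": 0, "q2": 0},
    "s4": {"q1": 1, "q2": 1},
}
stats = item_analysis(responses)
```

Here the total score includes the item itself, which slightly inflates `r_pb`; a corrected item-total correlation would subtract the item's mark from each total first.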

Beyond its current capabilities, LiftUpp is positioned as a test‑bed for artificial intelligence in education (AIED). The large, time‑stamped, multi‑source dataset could feed deep‑learning sequence models (e.g., LSTMs, Transformers) or Bayesian networks to predict future performance, recommend the next optimal clinical task, and flag students at risk of failing to achieve competence. The authors note that, unlike many existing AIED systems, which rely on knowledge tracing of discrete knowledge components, LiftUpp captures observable behaviours, offering a more realistic foundation for AI‑driven tutoring in clinical environments.
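Before reaching for LSTMs or Bayesian networks, a useful baseline is to smooth each skill's indicator history and act on the smoothed level. The sketch below deliberately swaps in a much simpler technique, an exponentially weighted moving average (EWMA), to illustrate the "flag at-risk skills and recommend the next task" loop; the threshold, minimum observation count, smoothing factor, and skill names are all hypothetical.

```python
def ewma(values, alpha=0.3):
    """Exponentially weighted moving average; recent observations weigh more."""
    level = values[0]
    for v in values[1:]:
        level = alpha * v + (1 - alpha) * level
    return level


def triage(history, threshold=4.0, min_obs=3, alpha=0.3):
    """history: skill -> chronologically ordered developmental indicators (1-6).
    Returns (skills flagged as at risk, skill suggested for the next session)."""
    levels = {skill: ewma(scores, alpha)
              for skill, scores in history.items() if len(scores) >= min_obs}
    at_risk = sorted(skill for skill, level in levels.items() if level < threshold)
    next_skill = min(levels, key=levels.get) if levels else None
    return at_risk, next_skill


# Hypothetical skill names and scores for one student.
history = {
    "extraction": [3, 3, 4, 4],
    "restoration": [4, 5, 5, 6],
    "scaling": [2],            # too few observations to judge yet
}
at_risk, next_skill = triage(history)
```

Skills with too few observations are skipped rather than flagged; a sequence model trained on cohort data could replace `ewma` while keeping the same triage interface.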

Challenges identified include inter‑rater reliability of observational scores, the granularity limits of a six‑point scale, and the need to protect student and patient privacy under GDPR. The paper suggests ongoing work on rater calibration, scale refinement, and privacy‑preserving analytics (e.g., differential privacy).
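To make "privacy-preserving analytics" concrete, the sketch below releases a cohort's mean developmental indicator using the Laplace mechanism for differential privacy. It assumes each student contributes exactly one value (e.g. their own mean score), that the cohort size is public, and that scores are clipped to the 1–6 scale, so changing one student's value moves the mean by at most (6 − 1)/n. It is illustrative only: naive floating-point noise sampling has known weaknesses, so a production system would use a vetted DP library.

```python
import random


def dp_mean(values, lower=1.0, upper=6.0, epsilon=1.0, rng=None):
    """Release the mean of bounded scores with epsilon-DP via the Laplace mechanism.
    Assumes one value per student and a public cohort size n."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if not values:
        raise ValueError("need at least one value")
    rng = rng if rng is not None else random.Random()
    clipped = [min(max(v, lower), upper) for v in values]   # enforce the bounds
    n = len(clipped)
    sensitivity = (upper - lower) / n   # max change in the mean if one value changes
    scale = sensitivity / epsilon
    # The difference of two i.i.d. exponentials with rate 1/scale is Laplace(0, scale).
    noise = rng.expovariate(1 / scale) - rng.expovariate(1 / scale)
    return sum(clipped) / n + noise


cohort_scores = [4.2, 5.1, 3.8, 5.6]
noisy_mean = dp_mean(cohort_scores, epsilon=0.5, rng=random.Random(7))
```

Smaller `epsilon` gives stronger privacy but noisier releases; for small cohorts the noise can swamp the signal, which is one reason aggregate reporting thresholds are often combined with DP.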

In conclusion, LiftUpp demonstrates that a well‑engineered digital infrastructure can collect high‑frequency, high‑fidelity workplace data, integrate it with curriculum outcomes, and provide real‑time feedback and analytics that support both learners and educators. Its deployment across 70% of UK dental schools and its expansion into physiotherapy, medicine, and veterinary science illustrate its scalability. The system lays the groundwork for future AI‑enhanced educational interventions, promising more personalised, data‑driven pathways to competence in health‑professional training.
