Enhanced Factored Three-Way Restricted Boltzmann Machines for Speech Detection

In this letter, we propose enhanced factored three way restricted Boltzmann machines (EFTW-RBMs) for speech detection. The proposed model incorporates conditional feature learning by multiplying the dynamical state of the third unit, which allows a m…

Authors: Pengfei Sun, Jun Qin

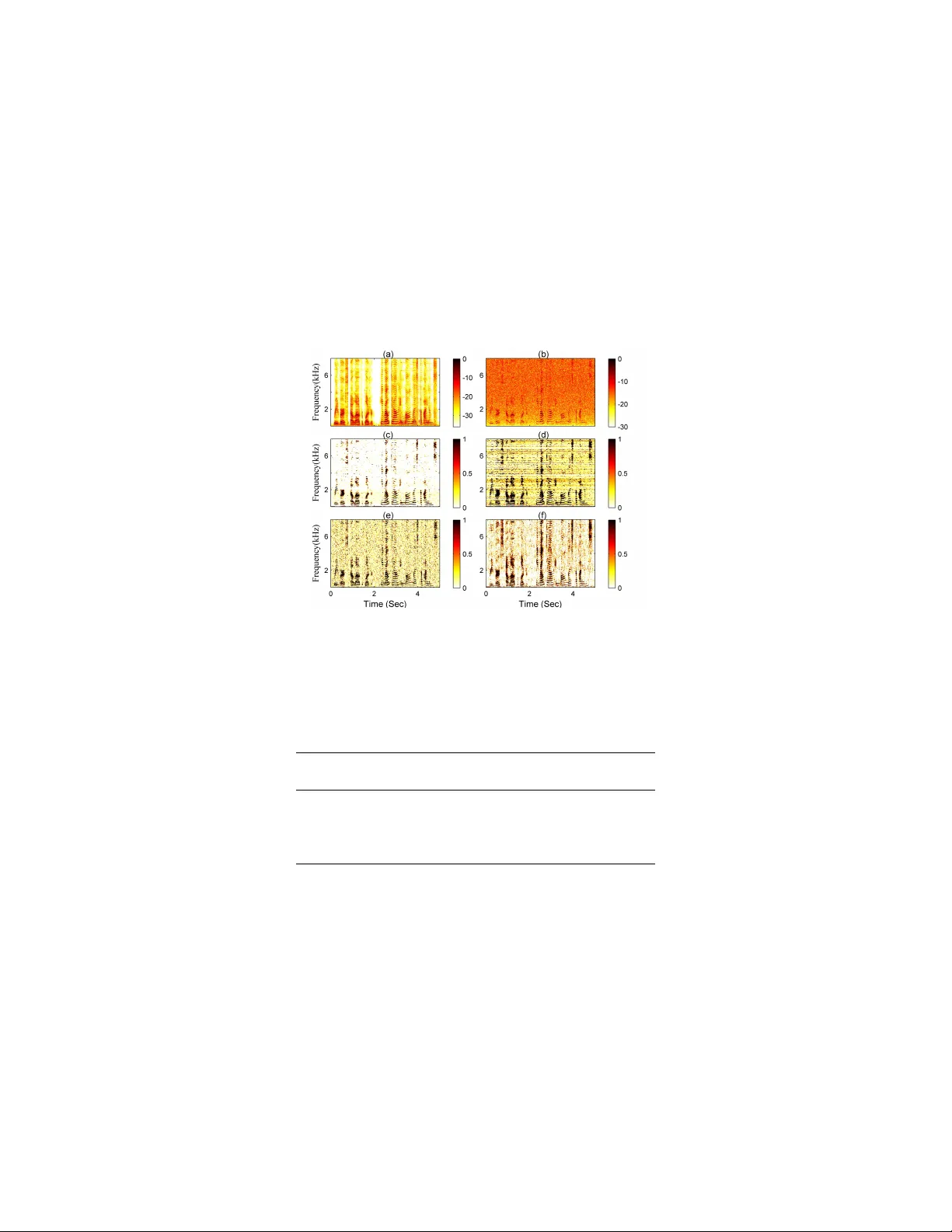

UNDER CONSIDERATION AT PATTERN RECOGNITION LETTERS, APRIL 2017

Pengfei Sun, Student Member, and Jun Qin, Member, IEEE

Abstract

In this letter, we propose enhanced factored three-way restricted Boltzmann machines (EFTW-RBMs) for speech detection. The proposed model incorporates conditional feature learning by introducing a multiplicative input branch, which allows modulation over visible-hidden node pairs. Instead of directly feeding previous frames of the speech spectrum into this third unit, a specific algorithm, including weighting coefficients and threshold shrinkage, is applied to obtain more correlated and representative inputs. To reduce the parameters of the three-way network, low-rank factorization is used to decompose the interaction tensor, on which a non-negative constraint is also imposed to address the sparsity characteristic. This enhanced model effectively strengthens the structured feature learning that helps to recover the speech components from a noisy background. Validations based on the area under the ROC curve (AUC) and signal distortion ratio (SDR) show that our EFTW-RBMs outperform several existing 1D and 2D (i.e., time and time-frequency domain) speech detection algorithms in various noisy environments.

Index Terms: speech detection, three-way restricted Boltzmann machines, recursive, sparsity.

I. INTRODUCTION

Speech detection (SD) greatly improves the separation of speech sources from background interference [1]. Nowadays, SD techniques attract intense attention in general speech processing frameworks, including automatic speech recognition (ASR) [2], speech enhancement [3], and speech coding [1]. Recently, deep neural network (DNN) based 1D SD algorithms have shown great advantages over conventional voice activity detectors [4], [5]. The obvious benefits of such approaches lie in their easy integration into ASR, robust performance, and feature fusion capability. Zhang and Wu [4] introduced a deep belief network and used stacked Bernoulli-Bernoulli restricted Boltzmann machines (RBMs) to conduct 1D SD. The idea of incorporating temporal context correlation to strengthen dynamical detection is widely used in network structure design [6], [7]. Other DNN based 1D SD strategies focus either on improving the front-end acoustic feature inputs (e.g., acoustic models and statistical models) [8], [9], or on exploiting the supervised network structure in terms of sample training [10]. These DNN based approaches rely on comprehensive network training and are then applied to assign binary labels to speech activity in the time domain. However, 1D SD methods integrate frequency features and cannot reveal information in the joint time-frequency domain, which is generally more expressive of speech activity than the binary values of 1D SD approaches.

August 11, 2018 DRAFT

In this study, we propose enhanced factored three-way RBMs (EFTW-RBMs) for both 1D and 2D SD. The main idea is to use backward sampling from the hidden layer that represents the speech features. Well-trained RBMs can effectively reconstruct the speech components, which are then normalized to give the speech presence probability (SPP) values. The proposed EFTW-RBMs model introduces a multiplicative third branch to exploit the strong correlations in consecutive speech frames, and can therefore capture the speech structures more effectively. A continuously updated memorized input is provided by applying weighting coefficients $\alpha$ and a threshold function $S_T$, which retains global frames based on the locally updated three-way RBMs. Low-rank factorization and non-negative regularization are applied for the network training.

II. PROPOSED METHOD

A. Gated Restricted Boltzmann Machines

In a previous study [11], multiplicative gated RBMs are described by an energy function that captures correlations among the components of $\mathbf{x}$, $\mathbf{y}$ and $\mathbf{h}$:

$$E(\mathbf{y},\mathbf{h};\mathbf{x}) = -\sum_{ijk} w_{ijk}\,\frac{x_i}{\sigma_i}\,\frac{y_j}{\sigma_j}\,h_k - \sum_k w^h_k h_k + \sum_j \frac{(y_j - w^y_j)^2}{2\sigma_j^2} \tag{1}$$

where $i$, $j$ and $k$ index input, visible and hidden units, respectively. Bold symbols denote variables, and lowercase symbols denote observations. $x_i$ and $y_j$ are Gaussian units, and $h_k$ is the binary state of the hidden unit $k$. $\sigma_i$ and $\sigma_j$ are the standard deviations associated with $x_i$ and $y_j$, respectively. The components $w_{ijk}$ of a three-way tensor connect units $x_i$, $y_j$ and $h_k$. The terms $w^h_k$ and $w^y_j$ represent the biases of the hidden and visible units, respectively. The energy function assigns a probability to the joint configuration as

$$p(\mathbf{y},\mathbf{h}\mid\mathbf{x}) = \frac{1}{Z(\mathbf{x})}\exp\big(-E(\mathbf{y},\mathbf{h};\mathbf{x})\big) \tag{2}$$

$$Z(\mathbf{x}) = \sum_{\mathbf{h},\mathbf{y}} \exp\big(-E(\mathbf{y},\mathbf{h};\mathbf{x})\big) \tag{3}$$

where the normalization term $Z(\mathbf{x})$ is summed over $\mathbf{y}$ and $\mathbf{h}$, which defines the conditional distribution $p(\mathbf{y},\mathbf{h}\mid\mathbf{x})$. Since there are no connections between neurons in the same layer, inference of the $k$th hidden unit and the $j$th visible unit can be performed as

$$p(h_k = 1 \mid \mathbf{y};\mathbf{x}) = S(\Delta E_k) \tag{4}$$

$$p(y_j = y \mid \mathbf{h};\mathbf{x}) = \mathcal{N}(y \mid \Delta E_j, \sigma_j^2) \tag{5}$$

where $\mathcal{N}(\cdot \mid \mu, \sigma^2)$ denotes the Gaussian probability density function with mean $\mu$ and standard deviation $\sigma$, and $S(\cdot)$ is the sigmoid activation function. $\Delta E_k$ and $\Delta E_j$ are the overall inputs of the $k$th hidden unit and the $j$th visible unit, respectively [12]. The gated RBMs allow the hidden units to model the transition between successive frames.

B. Factored Three-Way Restricted Boltzmann Machines

To reduce the parameters of three-way RBMs, the interaction tensor $w_{ijk}$ can be factored into decoupled matrices [11].
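As a brief worked illustration of the saving (a sketch, not part of the letter): in the factorization of [11], each tensor entry is written as a sum over $F$ factors, so the parameter count grows with the sum of the layer sizes instead of their product. The numbers below assume $I = J = 257$ input and visible units, $K = 30$ hidden units and $F = 60$ factors; the last three values are those reported in the experimental setup, while taking $I$ equal to the number of visible units is an assumption made here for illustration.

$$
\begin{aligned}
w_{ijk} &= \sum_{f=1}^{F} w^{x}_{if}\, w^{y}_{jf}\, w^{h}_{kf} \\
\text{full tensor: } I \cdot J \cdot K &= 257 \cdot 257 \cdot 30 = 1\,981\,470 \\
\text{three factor matrices: } (I + J + K)\,F &= 544 \cdot 60 = 32\,640
\end{aligned}
$$

Under these assumed sizes the factored model has roughly 60 times fewer interaction parameters than the full tensor.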
In our study, in order to reconstruct the inputs to obtain the weighting coefficients $\alpha$, a symmetrical structure is proposed, as shown in Fig. 1. As a result, the bias term $w^x_i$ is added to the input unit $x_i$ to balance the structure of the visible unit $y_j$, and the energy function (1) can be rewritten for FTW-RBMs as

$$-E(\mathbf{y},\mathbf{h};\mathbf{x}) = -\sum_i \frac{(x_i - w^x_i)^2}{2\sigma_i^2} - \sum_j \frac{(y_j - w^y_j)^2}{2\sigma_j^2} + \sum_k w^h_k h_k + \sum_{f=1}^{F}\sum_{ijk} w^x_{if}\, w^y_{jf}\, w^h_{kf}\,\frac{x_i}{\sigma_i}\,\frac{y_j}{\sigma_j}\,h_k \tag{6}$$

where the $I \times J \times K$ parameter tensor $w_{ijk}$ is replaced by three matrices (i.e., $w^x_{if}$, $w^y_{jf}$, and $w^h_{kf}$) with sizes $I \times F$, $J \times F$ and $K \times F$, in which $f$ is the factor index. Accordingly, it can be reorganized into

$$-E(\mathbf{y},\mathbf{h};\mathbf{x}) = \sum_k w^h_k h_k - \sum_j \frac{(y_j - w^y_j)^2}{2\sigma_j^2} - \sum_i \frac{(x_i - w^x_i)^2}{2\sigma_i^2} + \sum_f \left(\sum_i w^x_{if}\frac{x_i}{\sigma_i}\right)\left(\sum_j w^y_{jf}\frac{y_j}{\sigma_j}\right)\left(\sum_k w^h_{kf} h_k\right) \tag{7}$$

We denote the factor responses by $f^x_f = \sum_{i=1}^{I} w^x_{if}\frac{x_i}{\sigma_i}$, $f^y_f = \sum_{j=1}^{J} w^y_{jf}\frac{y_j}{\sigma_j}$, and $f^h_f = \sum_{k=1}^{K} w^h_{kf} h_k$. The three factor layers shown in Fig. 1 have the same size $F$, and the factor terms (i.e., $W^x\mathbf{x}$, $W^y\mathbf{y}$, and $W^h\mathbf{h}$) correspond to three linear filters applied to the input, visible, and hidden units, respectively. To perform k-step Gibbs sampling in the factored model, the overall inputs of each unit in the three layers are calculated as

$$\Delta E_k = \sum_f w^h_{kf} \left(\sum_i w^x_{if}\frac{x_i}{\sigma_i}\right)\left(\sum_j w^y_{jf}\frac{y_j}{\sigma_j}\right) + w^h_k \tag{8}$$

$$\Delta E_j = \sum_f w^y_{jf} \left(\sum_i w^x_{if}\frac{x_i}{\sigma_i}\right)\left(\sum_k w^h_{kf} h_k\right) + w^y_j \tag{9}$$

$$\Delta E_i = \sum_f w^x_{if} \left(\sum_j w^y_{jf}\frac{y_j}{\sigma_j}\right)\left(\sum_k w^h_{kf} h_k\right) + w^x_i \tag{10}$$

In (8)-(10), the factor layers are multiplied element-wise (the $\otimes$ in Fig. 1) through the same index $f$. These are then substituted into (4)-(5) to determine the probability distributions for each of the visible and hidden units. For input units, the symmetrical form $\mathcal{N}(x \mid \Delta E_i, \sigma_i^2)$ is used.
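The overall inputs in (8)-(10) share one pattern: two factor responses are multiplied element-wise and projected back through the third factor matrix, plus a bias. The following is a minimal pure-Python sketch of that pattern, not the authors' implementation. The function names, the list-of-rows matrix layout and the toy values are all hypothetical and chosen only for illustration.

```python
import math

def factor_response(W, v):
    """Filter response f_f = sum_u W[u][f] * v[u] for every factor f."""
    n_factors = len(W[0])
    return [sum(W[u][f] * v[u] for u in range(len(v))) for f in range(n_factors)]

def unit_input(W, bias, fa, fb):
    """Overall input sum_f W[u][f] * fa[f] * fb[f] + bias[u], the shape shared by (8)-(10)."""
    return [sum(W[u][f] * fa[f] * fb[f] for f in range(len(fa))) + bias[u]
            for u in range(len(bias))]

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def scaled(v, sigma):
    """Divide each unit by its standard deviation, as in x_i / sigma_i."""
    return [vi / si for vi, si in zip(v, sigma)]

def hidden_probabilities(Wx, Wy, Wh, bh, x, y, sx, sy):
    """p(h_k = 1 | y; x) = S(Delta E_k), combining (4) and (8)."""
    fx = factor_response(Wx, scaled(x, sx))
    fy = factor_response(Wy, scaled(y, sy))
    return [sigmoid(a) for a in unit_input(Wh, bh, fx, fy)]

def visible_mean(Wx, Wy, Wh, by, x, h, sx):
    """Mean Delta E_j of the Gaussian in (5), computed with (9)."""
    fx = factor_response(Wx, scaled(x, sx))
    fh = factor_response(Wh, h)
    return unit_input(Wy, by, fx, fh)

def input_mean(Wx, Wy, Wh, bx, y, h, sy):
    """Mean Delta E_i of the symmetrical Gaussian for the input units, computed with (10)."""
    fy = factor_response(Wy, scaled(y, sy))
    fh = factor_response(Wh, h)
    return unit_input(Wx, bx, fy, fh)

# Toy sizes (illustrative only): I = J = 2 units, K = 1 hidden unit, F = 2 factors.
Wx = [[1.0, 0.0], [0.0, 1.0]]   # I x F
Wy = [[1.0, 0.0], [0.0, 1.0]]   # J x F
Wh = [[1.0, 1.0]]               # K x F
x, y, h = [1.0, 2.0], [3.0, 4.0], [1.0]
ones = [1.0, 1.0]               # unit standard deviations

p_h = hidden_probabilities(Wx, Wy, Wh, [0.0], x, y, ones, ones)
y_mean = visible_mean(Wx, Wy, Wh, [0.0, 0.0], x, h, ones)
x_mean = input_mean(Wx, Wy, Wh, [0.0, 0.0], y, h, ones)
```

With these toy values the hidden input is $1 \cdot 3 + 2 \cdot 4 = 11$, so `p_h[0]` equals `sigmoid(11)`, and the two means reduce to `[1.0, 2.0]` and `[3.0, 4.0]`. Sampling from the resulting Bernoulli and Gaussian distributions is left out to keep the sketch deterministic.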
The FTW-RBMs model learns speech patterns in the hidden units by pairwise matching of input filter responses and visible filter responses; this procedure aims to find a set of filters that reflect the correlations of consecutive speech frames in the training data.

C. Enhanced Input Units

In the FTW-RBMs model described in Section II-B, consecutive frames of noisy speech are fed into the trained networks as input and visible units, respectively. The SD results, reflected by the SPP distribution, are obtained from the reconstructed visible units. The multiplicative structure of FTW-RBMs helps to amplify the speech features but unavoidably introduces high sensitivity to transient noise components. To obtain a more robust network, our EFTW-RBMs model selectively retains long-term speech features by proposing an enhanced input as shown in Fig. 2, in which three steps (i.e., S1, S2, and S3) are implemented in each data batch training loop.

Fig. 1. The schematic of symmetrical three-way RBMs. The three factor layers have the same size, and $\otimes$ refers to element-wise multiplication.

In S1, $X_t \in \mathbb{R}^{n \times (m + n_t)}$ and $Y_t \in \mathbb{R}^{n \times m}$ are fed into the input and visible units ($t$ indexes the training batches in the time domain), where $n_t$ is the number of globally retained frames. $X_t = [\hat{X}_{t-1}\; Y_{t-1}]$, in which $Y_{t-1}$ are the visible units in the $(t-1)$th training loop, and $\hat{X}_{t-1} \in \mathbb{R}^{n \times n_t}$ is selected from the previous input units $X_{t-1}$. The backward sampling in terms of the input branch generates the weighting coefficients $\alpha$, which are given as

$$\alpha = \mathcal{N}(\mathbf{x}_t \mid \Delta E_i, \sigma_i^2) \tag{11}$$

Equation (11) represents the normalized reconstruction of the input data batch, in which both the hidden layer and the visible layer are involved. As a result, $\alpha$ reflects the correlation between consecutive training units and is also subject to the inverse constraint imposed by the visible unit $\mathbf{y}_t$.

In S2, the element-wise product $\alpha X_t$ is used as the input for network training. The reconstructed input units are denoted $X_t'$. Accordingly, we define $\lambda_i = \min_{j \le m} \lVert X_t'(:, i) - Y_t(:, j) \rVert$, $i \le (n_t + m)$. $\lambda_i$ indicates the distance between the $i$th column vector of the reconstructed input $X_t'$ and the matrix $Y_t$. The smaller $\lambda_i$ is, the closer the vector is to $Y_t$. To retain the global speech features, a criterion is proposed to obtain $\hat{X}_t$ by selecting column vectors from $X_t$:

$$\hat{X}_t = \{\, X_t(:, i) \mid i \in S_{n_t}(D) \,\} \tag{12}$$

where $S_{n_t}(\cdot)$ is a threshold function that returns the indices of the $n_t$ smallest elements in $D = \{\lambda_i\}$.

In step S3, the input units $X_{t+1} = [\hat{X}_t\; Y_t]$ for the $(t+1)$th data batch are prepared for the next training loop.

The enhanced input units in the proposed EFTW-RBMs include a dynamical bias through the weighting coefficients $\alpha$, whereas the backward sampling approach imposes the constraints of the visible units. The threshold function also helps to retain the global features of speech, similar to the long short-term memory method [6]. When implementing SD, the noisy speech is segmented into consecutive data batches as input and visible units, and the proposed EFTW-RBMs model can then generate the reconstructed visible layer by backward sampling, which can be normalized to give the SPP.

Fig. 2. The schematic of EFTW-RBMs. A batch training loop includes three steps, referred to as S1, S2, and S3. The arrows indicate the data flow direction. In the network, arrow with up direction means forward proper

D. Probabilistic inference and learning rules

To train EFTW-RBMs, one needs to maximize the average log-probability $L = \log p(\mathbf{y} \mid \mathbf{x})$ of a set of training pairs $\{(\mathbf{x}, \mathbf{y})\}$. The derivative of the negative log-probability with respect to the parameters $\theta$ is given as

$$-\frac{\partial L}{\partial \theta} = \left\langle \frac{\partial E(\mathbf{y},\mathbf{h};\mathbf{x})}{\partial \theta} \right\rangle_{\mathbf{h}} - \left\langle \frac{\partial E(\mathbf{y},\mathbf{h};\mathbf{x})}{\partial \theta} \right\rangle_{\mathbf{h},\mathbf{y}} \tag{13}$$

where $\langle \cdot \rangle_v$ denotes the expectation with respect to the variable $v$. In practice, a Markov chain is run for a few steps to approximate the averages in Eq. (13). By differentiating (6) with respect to the parameters, we get

$$-\frac{\partial E}{\partial w^h_{kf}} = h_k \sum_i x_i w^x_{if} \sum_j y_j w^y_{jf} \tag{14}$$

$$-\frac{\partial E}{\partial w^y_{jf}} = y_j \sum_i x_i w^x_{if} \sum_k h_k w^h_{kf} \tag{15}$$

$$-\frac{\partial E}{\partial w^x_{if}} = x_i \sum_j y_j w^y_{jf} \sum_k h_k w^h_{kf} \tag{16}$$

$$-\frac{\partial E}{\partial w^h_k} = h_k, \qquad -\frac{\partial E}{\partial w^x_i} = x_i, \qquad -\frac{\partial E}{\partial w^y_j} = y_j \tag{17}$$

To encourage nonnegativity in the three factor matrices $w^h_{kf}$, $w^x_{if}$, and $w^y_{jf}$, a quadratic barrier function is incorporated to modify the log-probability. As a result, the objective function is the regularized likelihood [13]

$$L_{reg} = L(\mathbf{y};\mathbf{x}) - \frac{\beta}{2} \sum\sum f(w) \tag{18}$$

where the sums run over the entries $w$ of the factor matrices and

$$f(x) = \begin{cases} x^2, & x < 0 \\ 0, & x \ge 0 \end{cases}$$

Based on (18), the parameter update can be written as

$$w \leftarrow w + \eta\left( \langle \cdot \rangle_{\mathbf{h}} - \langle \cdot \rangle_{\mathbf{h},\mathbf{y}} - \beta \lceil w \rceil^{-} \right) \tag{19}$$

where $\lceil w \rceil^{-}$ denotes the negative part of the weight. The numbers of hidden units and factors should be chosen in a range where their exact values do not strongly influence the result.
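To make the effect of the barrier term concrete, the update in (19) can be sketched for a single factor matrix as below. This is a minimal illustration, not the authors' code. `pos_stats` and `neg_stats` are hypothetical names for the data-clamped and Gibbs-chain averages of the gradients in (14)-(16), and the numerical values are made up. The learning rate 0.001 and $\beta = 0.5$ are the values quoted in the experimental setup.

```python
def negative_part(w):
    """Return w when it is negative and 0 otherwise (the term written as the negative part in (19))."""
    return w if w < 0.0 else 0.0

def barrier(w):
    """Quadratic barrier f(w) from (18): w^2 for negative weights, 0 otherwise."""
    return w * w if w < 0.0 else 0.0

def update_weights(W, pos_stats, neg_stats, eta, beta):
    """One step of (19): w <- w + eta * (pos - neg - beta * negative_part(w))."""
    return [[w + eta * (p - n - beta * negative_part(w))
             for w, p, n in zip(w_row, p_row, n_row)]
            for w_row, p_row, n_row in zip(W, pos_stats, neg_stats)]

# Illustrative values only: one positive and one negative weight.
W = [[0.2, -0.4]]
pos_stats = [[0.5, 0.1]]   # averages with the data clamped
neg_stats = [[0.3, 0.1]]   # averages from the Gibbs chain
W_new = update_weights(W, pos_stats, neg_stats, eta=0.001, beta=0.5)
penalty_before = sum(barrier(w) for row in W for w in row)
penalty_after = sum(barrier(w) for row in W_new for w in row)
```

In this toy case the positive weight moves only with the likelihood gradient, from 0.2 to about 0.2002. The negative weight has a zero likelihood gradient and is pushed toward zero purely by the barrier, from -0.4 to about -0.3998, so `penalty_after` is smaller than `penalty_before`.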
The procedure of EFTW-RBMs for SD is as follows:

Algorithm 1: EFTW-RBMs for SD

Training

- for iteration ≤ $N_{epoch}$ do
  - for iteration ≤ $N_{batch}$ do
    - $\mathbf{y}_t = Y_t$; $\mathbf{x}_t = [\hat{X}_{t-1}\; Y_t]$;
    - sample $\mathbf{h}_t \sim p(\mathbf{h} \mid \mathbf{y}_t, \mathbf{x}_t)$ by (4)(8);
    - calculate $\langle \partial E / \partial \theta \rangle_{\mathbf{h}}$ by (14)-(17);
    - for iteration ≤ $N_{step}$ do
      - sample $\mathbf{h}_{t,n} \sim p(\mathbf{h} \mid \mathbf{y}_{t,n}, \mathbf{x}_{t,n})$ by (4)(8);
      - sample $\mathbf{y}_{t,n} \sim p(\mathbf{y} \mid \mathbf{x}_{t,n}, \mathbf{h}_{t,n})$ by (5)(9);
    - calculate $\langle \partial E / \partial \theta \rangle_{\mathbf{h},\mathbf{y}}$ by (14)-(17);
    - update the parameter set $\{w^h_{kf}, w^y_{jf}, w^x_{if}, w^h_k, w^y_j, w^x_i\}$ by (13) and (19);
    - sample $\alpha_t \sim p(\mathbf{x} \mid \mathbf{y}_{t,n}, \mathbf{h}_{t,n})$ by (10);
    - update $\hat{X}_t$ by (12);

SPP estimation

- for iteration ≤ $N_{frames}$ do
  - sample $\mathbf{h}_t \sim p(\mathbf{h} \mid \mathbf{y}_t)$ by (8);
  - sample $\alpha_t \sim p(\mathbf{x} \mid \mathbf{y}_t, \mathbf{h}_t)$ by (10);
  - update $\mathbf{x}_t$ by (12);
  - sample $\mathbf{y}_t \sim p(\mathbf{y} \mid \mathbf{h}_t, \mathbf{x}_t)$ by (9);
  - $P(:, t) = \mathbf{y}_t$;

Output: SPP matrix $P$

III. EXPERIMENTAL EVALUATION

In our evaluation experiments, the clean speech corpus, consisting of 600 training sentences and 120 test sentences, is obtained from the IEEE wide band speech database [14]. Three typical noise samples (i.e., babble, Gaussian, and pink) from the NOIZUS-92 are used to synthesize noisy speech at three input signal-to-noise ratios (SNRs) (i.e., -5, 0, and 5 dB). All signals are resampled to a 16 kHz sampling rate, and the spectrograms are calculated with a window length of 32 ms and a hop of 16 ms. Both EFTW-RBMs and FTW-RBMs are used to calculate the 2D SPP, and the corresponding 1D SD is obtained by integrating the 2D SPP values along the frequency axis. Three state-of-the-art methods (i.e., MLP-DNN [15], Ying [16], and DBN [4]) are used in the 1D SD evaluation. For the 2D SPP evaluation, due to the lack of related DNN based algorithms, we compare EFTW-RBMs with two conventional 2D SPP estimators, Gerkmann [17] and EM-ML [18].
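The spectrogram settings and the reduction from 2D SPP to 1D SD described above can be checked with a short sketch. It rests on several assumptions that the letter does not state: the FFT length is taken equal to the window length, frames are counted without padding, "integrating along the frequency axis" is implemented as a per-frame mean, and the 0.5 threshold is only a placeholder. All function names are hypothetical.

```python
def stft_layout(sample_rate_hz, window_ms, hop_ms):
    """Window and hop in samples, and the number of one-sided FFT bins (FFT size assumed equal to the window)."""
    window = sample_rate_hz * window_ms // 1000
    hop = sample_rate_hz * hop_ms // 1000
    bins = window // 2 + 1
    return window, hop, bins

def frame_count(n_samples, window, hop):
    """Number of full frames that fit in the signal when no padding is applied."""
    if n_samples < window:
        return 0
    return 1 + (n_samples - window) // hop

def spp_to_1d(spp, threshold=0.5):
    """Average each frame's SPP over frequency, then apply a hard decision per frame."""
    scores = [sum(frame) / len(frame) for frame in spp]
    decisions = [1 if s >= threshold else 0 for s in scores]
    return scores, decisions

# 16 kHz audio, 32 ms window, 16 ms hop, as in the experimental setup.
window, hop, bins = stft_layout(16000, 32, 16)
frames_per_second = frame_count(16000, window, hop)

# Two toy frames with three frequency bins each (values are illustrative only).
toy_spp = [[0.9, 0.8, 0.7], [0.1, 0.2, 0.0]]
scores, decisions = spp_to_1d(toy_spp)
```

Under these assumptions the layout is a 512-sample window, a 256-sample hop and 257 frequency bins, which matches the 257 visible units used in the network, and one second of audio gives 61 full frames. The toy SPP frames average to roughly 0.8 and 0.1, giving the decisions `[1, 0]`.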
The basic network parameters of EFTW-RBMs and FTW-RBMs are set as follows: the number of visible units is 257, the number of hidden units is 30, the number of epochs is 40, the learning rate is 0.001, the number of three-way factors $F$ is 60, the gated frame number $n_t$ is 6, and the nonnegative coefficient $\beta$ is 0.5. The momentum value is set to 0.1 and then to 0 after 20 epochs. The training and testing data are normalized. The area under the ROC curve (AUC) [4] is applied for the evaluation of 1D SD, while the speech distortion ratio (SDR) used to evaluate the 2D SPP estimation is defined as

$$SDR = \frac{\sum\sum \big( Y(n,m)\,P(n,m) - S(n,m) \big)^2}{\sum\sum S(n,m)^2} \tag{20}$$

where $S$ is the clean speech, $Y$ is the noisy speech, and $P$ is the SPP.

Fig. 3. Illustration of 1D SD by Ying, FTW-RBMs, and the proposed algorithm in a pink noise environment at SNR = -5 dB. The output has been normalized to the range [0,1]. The straight lines are the optimal decision thresholds in terms of hit rate, and the notched lines show the hard decisions.

Figure 3 shows an intuitive evaluation of the 1D SD by Ying's SD model [16], FTW-RBMs, and our EFTW-RBMs model in a pink noise background at SNR = -5 dB. The notched lines clearly demonstrate that EFTW-RBMs label the speech frames more accurately. Table I summarizes the averaged AUC values obtained by five 1D SD algorithms in three noise types at various SNRs. The performance of EFTW-RBMs is slightly higher than that of the MLP-DNN algorithm and clearly higher than that of the Ying, FTW-RBMs, and DBN algorithms.

TABLE I. The AUC results for 1D speech detection. The results are averaged across all the speech utterances.

| Method | Babble -5 dB | Babble 0 dB | Babble 5 dB | Gaussian -5 dB | Gaussian 0 dB | Gaussian 5 dB | Pink -5 dB | Pink 0 dB | Pink 5 dB |
|---|---|---|---|---|---|---|---|---|---|
| MLP-DNN | 0.79 | 0.83 | 0.86 | 0.80 | 0.85 | 0.87 | 0.78 | 0.83 | 0.86 |
| Ying | 0.61 | 0.65 | 0.69 | 0.59 | 0.62 | 0.65 | 0.62 | 0.67 | 0.70 |
| DBN | 0.75 | 0.79 | 0.82 | 0.77 | 0.61 | 0.85 | 0.72 | 0.76 | 0.81 |
| FTW-RBMs | 0.63 | 0.71 | 0.75 | 0.79 | 0.83 | 0.85 | 0.70 | 0.74 | 0.79 |
| EFTW-RBMs | 0.80 | 0.84 | 0.88 | 0.82 | 0.86 | 0.89 | 0.81 | 0.85 | 0.88 |

Figure 4 presents the 2D SPP results obtained by four 2D SD algorithms in pink noise at SNR = 0 dB. Unlike 1D SD, which is labeled by binary values, 2D SD is presented as SPP values in the range [0,1]. Both the EM-ML and Gerkmann models are based on statistical estimators, and the prior knowledge about the noise has to be obtained by assuming that the initial frames are noise. The results show that our EFTW-RBMs successfully capture most of the speech activity, as interpreted by the SPP values, which are proportional to the magnitudes of the speech components. Moreover, Table II summarizes the average SDR results of four 2D SD algorithms in three different noise types at various SNRs. The bold numbers show that EFTW-RBMs obtain the lowest SDR in all three noise types at all SNRs. This indicates that our proposed approach can detect speech with less distortion than the three other 2D SD algorithms.

Fig. 4. The spectrograms of (a) clean speech, (b) noisy speech in pink noise at SNR = 0 dB, and the 2D SPP results obtained by (c) EM-ML, (d) FTW-RBMs, (e) Gerkmann, and (f) the proposed EFTW-RBMs.

TABLE II. The SDR results for 1D and 2D speech detection. The results are averaged across all the speech utterances.

| Method | Babble -5 dB | Babble 0 dB | Babble 5 dB | Gaussian -5 dB | Gaussian 0 dB | Gaussian 5 dB | Pink -5 dB | Pink 0 dB | Pink 5 dB |
|---|---|---|---|---|---|---|---|---|---|
| Gerkmann | 0.61 | 0.56 | 0.49 | 0.57 | 0.53 | 0.51 | 0.55 | 0.52 | 0.46 |
| EM-ML | 0.51 | 0.43 | 0.41 | 0.58 | 0.56 | 0.52 | 0.49 | 0.47 | 0.46 |
| FTW-RBMs | 0.56 | 0.53 | 0.50 | 0.47 | 0.45 | 0.44 | 0.51 | 0.48 | 0.45 |
| EFTW-RBMs | **0.45** | **0.42** | **0.39** | **0.44** | **0.43** | **0.40** | **0.42** | **0.40** | **0.37** |

IV. CONCLUSION

In this letter, we propose an EFTW-RBMs model for SD. This gated RBMs approach can effectively introduce the frame-wise correlation and retain long-term speech features. By applying weighting coefficients and a threshold function, the enhanced input units help to reconstruct the speech components more robustly, which significantly improves the SPP estimation in the T-F domain. The implementation of the proposed model reduces the number of parameters and concentrates the energy of the speech features by using nonnegative regularization. The evaluation results show that EFTW-RBMs have advantages over other state-of-the-art methods in both the 1D SD and 2D SD cases. Future work will focus on improving the selection of gated features, extending the current enhanced FRBM into a stacked deep model, and developing the current model into a dynamically updated online model.

REFERENCES

[1] J. Sohn, N. S. Kim, and W. Sung, "A statistical model-based voice activity detection," IEEE Signal Processing Letters, vol. 6, no. 1, pp. 1–3, 1999.

[2] J. Li, L. Deng, Y. Gong, and R. Haeb-Umbach, "An overview of noise-robust automatic speech recognition," IEEE/ACM Transactions on Audio, Speech, and Language Processing, vol. 22, no. 4, pp. 745–777, 2014.

[3] T. Gerkmann and R. C. Hendriks, "Unbiased MMSE-based noise power estimation with low complexity and low tracking delay," IEEE Transactions on Audio, Speech, and Language Processing, vol. 20, no. 4, pp. 1383–1393, 2012.

[4] X.-L. Zhang and J. Wu, "Deep belief networks based voice activity detection," IEEE Transactions on Audio, Speech, and Language Processing, vol. 21, no. 4, pp. 697–710, 2013.

[5] S. O. Sadjadi and J. H. Hansen, "Unsupervised speech activity detection using voicing measures and perceptual spectral flux," IEEE Signal Processing Letters, vol. 20, no. 3, pp. 197–200, 2013.

[6] S. Leglaive, R. Hennequin, and R. Badeau, "Singing voice detection with deep recurrent neural networks," in 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2015, pp. 121–125.

[7] F. Eyben, F. Weninger, S. Squartini, and B. Schuller, "Real-life voice activity detection with LSTM recurrent neural networks and an application to Hollywood movies," in 2013 IEEE International Conference on Acoustics, Speech and Signal Processing. IEEE, 2013, pp. 483–487.

[8] N. Ryant, M. Liberman, and J. Yuan, "Speech activity detection on YouTube using deep neural networks," in INTERSPEECH, 2013, pp. 728–731.

[9] I. Hwang, H.-M. Park, and J.-H. Chang, "Ensemble of deep neural networks using acoustic environment classification for statistical model-based voice activity detection," Computer Speech & Language, vol. 38, pp. 1–12, 2016.

[10] S. Thomas, G. Saon, M. Van Segbroeck, and S. S. Narayanan, "Improvements to the IBM speech activity detection system for the DARPA RATS program," in 2015 IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP). IEEE, 2015, pp. 4500–4504.

[11] R. Memisevic and G. E. Hinton, "Learning to represent spatial transformations with factored higher-order Boltzmann machines," Neural Computation, vol. 22, no. 6, pp. 1473–1492, 2010.

[12] T. Yamashita, M. Tanaka, E. Yoshida, Y. Yamauchi, and H. Fujiyoshi, "To be Bernoulli or to be Gaussian, for a restricted Boltzmann machine," in ICPR, 2014, pp. 1520–1525.

[13] T. D. Nguyen, T. Tran, D. Q. Phung, and S. Venkatesh, "Learning parts-based representations with nonnegative restricted Boltzmann machine," in ACML, 2013, pp. 133–148.

[14] P. C. Loizou, Speech Enhancement: Theory and Practice. CRC Press, 2013.

[15] M. Van Segbroeck, A. Tsiartas, and S. Narayanan, "A robust frontend for VAD: exploiting contextual, discriminative and spectral cues of human voice," in INTERSPEECH, 2013, pp. 704–708.

[16] D. Ying, Y. Yan, J. Dang, and F. K. Soong, "Voice activity detection based on an unsupervised learning framework," IEEE Transactions on Audio, Speech, and Language Processing, vol. 19, no. 8, pp. 2624–2633, 2011.

[17] T. Gerkmann, C. Breithaupt, and R. Martin, "Improved a posteriori speech presence probability estimation based on a likelihood ratio with fixed priors," IEEE Transactions on Audio, Speech, and Language Processing, vol. 16, no. 5, pp. 910–919, 2008.

[18] P. Sun and J. Qin, "Low-rank and sparsity analysis applied to speech enhancement via online estimated dictionary," IEEE Signal Processing Letters, vol. 23, no. 12, pp. 1862–1866, 2016.
