Debugging Behaviour of Embedded-Software Developers: An Exploratory Study

Many researchers have studied the behaviour of successful developers while debugging desktop software. In this paper, we investigate embedded-software debugging by intermediate programmers through an exploratory study. The bugs are semantic low-level errors, and the participants are students who completed a real-time operating systems course in addition to five other programming courses. We compare the behaviour involved in successful debugging attempts with that in unsuccessful ones, and we describe some characteristics of smooth and successful debugging behaviour.

💡 Research Summary

The paper presents an exploratory study of how intermediate‑level programmers debug low‑level embedded software bugs. Fourteen university students who had completed a real‑time operating systems (RTOS) course and several other programming courses were recruited. All participants were familiar with the Janus MCF5307 ColdFire board and a small RTOS written in C (≈3 k lines, 23 source files, 15 headers). Seven semantic bugs were prepared, split into two categories: incorrect hardware configuration (four bugs) and memory leaks (three bugs). Each bug was presented with a brief report describing the erroneous behavior; participants examined one bug at a time.

Data were collected through three complementary methods: (1) screen video recording with CamStudio, later coded by three observers using CowLog to extract time‑stamped activities (code browsing, editing, document reading, compiling, testing) and techniques (tracing, print statements, etc.); (2) saving the entire project after each compilation attempt into a “.try” folder, allowing precise reconstruction of code changes; and (3) pre‑session, post‑session, and per‑bug questionnaires capturing perceived difficulty and self‑reported success. Inter‑rater reliability for video coding was substantial (Fleiss’ κ = 0.74).
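The inter‑rater figure above is a Fleiss' κ, which compares the observed agreement among a fixed number of raters with the agreement expected by chance. The following is a minimal sketch of that statistic, not the authors' analysis script: the function name `fleiss_kappa`, the input layout (one list of labels per coded video segment), and the sample labels are all assumptions made for illustration.

```python
from collections import Counter


def fleiss_kappa(ratings, categories):
    """Fleiss' kappa for items that were each labelled by the same number of raters.

    ratings    -- list of items; each item is the list of labels given by the raters
    categories -- every label that could have been assigned
    (Both names and the data layout are hypothetical, chosen for this sketch.)
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    if n_raters < 2 or any(len(item) != n_raters for item in ratings):
        raise ValueError("every item needs the same number (>= 2) of ratings")

    counts = [Counter(item) for item in ratings]

    # Observed agreement: share of agreeing rater pairs, averaged over items.
    per_item = [
        (sum(c[k] ** 2 for k in categories) - n_raters)
        / (n_raters * (n_raters - 1))
        for c in counts
    ]
    p_observed = sum(per_item) / n_items

    # Chance agreement: sum of squared overall category proportions.
    proportions = [
        sum(c[k] for c in counts) / (n_items * n_raters) for k in categories
    ]
    p_expected = sum(p * p for p in proportions)

    if p_expected == 1.0:
        # Only one category was ever used, so kappa is undefined.
        raise ValueError("kappa is undefined when a single category is used")
    return (p_observed - p_expected) / (1.0 - p_expected)


# Invented example: three observers label two video segments.
ACTIVITIES = ["browsing", "editing", "reading", "compiling", "testing"]
sample = [
    ["browsing", "browsing", "editing"],
    ["editing", "editing", "editing"],
]
kappa = fleiss_kappa(sample, ACTIVITIES)
```

With real coding data, each inner list would hold the three observers' labels for one time‑stamped segment, and the category list would match the coding scheme actually used.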

The analysis focuses on differences between successful and unsuccessful debugging attempts. Six key observations emerged:

- Locating bugs is at least as hard as fixing them. In 93.75 % of post‑bug forms, participants rated locating the bug as equally or more difficult than fixing it. Only 63.82 % of bugs were actually located (i.e., the participant edited the correct function).

-

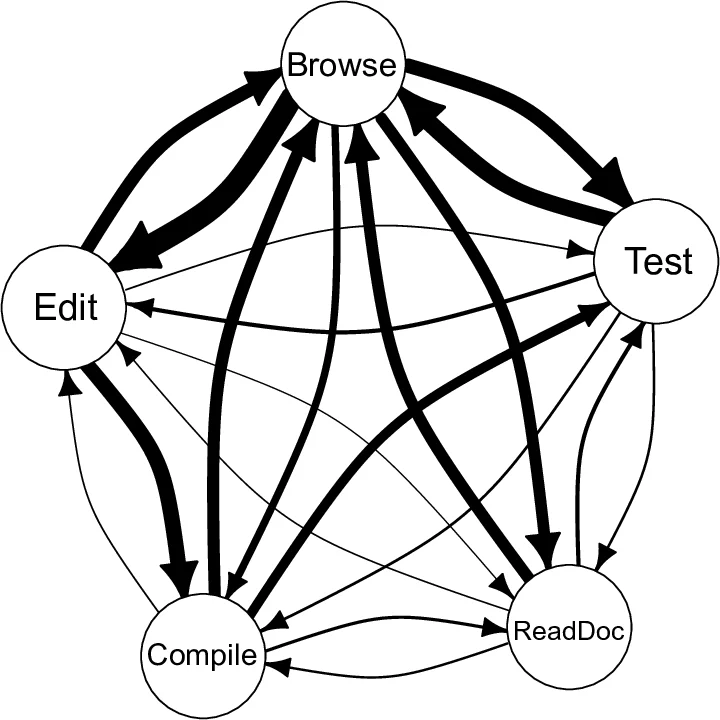

Activity visitation patterns differ markedly. Successful attempts showed a relatively linear cycle—code browsing → editing → compiling → testing—with an average of 26.97 transitions per bug. Unsuccessful attempts exhibited a much higher transition count (average 43.11), indicating indecisive or scattered behavior.

- Considering alternatives improves success. For the memory‑leak bug (Bug‑3) that required two missing statements, many participants inserted only one line; only three participants who recognized the need for multiple fixes succeeded.

- Single‑function edits per compilation try correlate with success. In 61.11 % of successful attempts, participants edited only one function in a given try, which was associated with lower total editing time, shorter locate‑time, and reduced overall examination time.

- Running the first compilation without any changes is strongly associated with success. In 88.88 % of successful attempts, the first compilation was run on the unmodified code, whereas 75 % of participants who edited before the first compile failed to fix the bug. This suggests that establishing a baseline execution trace is crucial in embedded contexts where hardware interaction can mask logical errors.

- Avoiding “ping‑pong” behavior (re‑editing far‑away functions after having approached the bug) is essential. 88.88 % of successful attempts showed no ping‑pong pattern; conversely, 75 % of cases exhibiting ping‑pong remained unfixed. The pattern was visualized by plotting compilation try number against edited location, revealing frequent back‑and‑forth jumps in unsuccessful sessions.
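Two of the measures in the observations above, the transition count and the ping‑pong pattern, can be computed from simple per‑attempt sequences. The sketch below is illustrative only. The summary does not give the authors' exact definitions, so the function names, the `near` and `far` thresholds, and the use of a numeric distance between the edited location and the faulty function are all assumptions.

```python
def count_transitions(activities):
    """Count how often the coded activity changes between consecutive entries.

    activities -- chronological list of activity labels for one debugging attempt
    """
    return sum(
        1 for prev, cur in zip(activities, activities[1:]) if prev != cur
    )


def has_ping_pong(distances, near, far):
    """Report whether an attempt moved far away after having approached the bug.

    distances -- one value per compilation try: how far the edited location is
                 from the faulty function (the unit is an assumption, e.g. lines)
    near      -- hypothetical threshold: at or below this counts as "approached"
    far       -- hypothetical threshold: at or above this counts as "far away"
    """
    approached = False
    for distance in distances:
        if approached and distance >= far:
            return True
        if distance <= near:
            approached = True
    return False


# Invented sequences for illustration, not data from the study.
linear_attempt = ["browsing", "browsing", "editing", "compiling", "testing"]
transitions = count_transitions(linear_attempt)

steady = has_ping_pong([120, 40, 5, 0], near=10, far=50)
jumpy = has_ping_pong([120, 5, 200, 0], near=10, far=50)
```

Here `steady` describes an attempt that converges on the faulty function and stays there, while `jumpy` describes one that gets close on the second try and then edits a distant location on the third.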

Threats to validity were discussed. Video coding is inherently subjective, but the high κ value mitigates this concern. The study design allowed participants to choose bug order freely, reducing learning‑effect bias; no correlation was found between bug identifier or order and examination time. Bug “location” was operationalized as editing the correct function—a reasonable proxy given the small size of target functions (average 37 LOC).

In conclusion, the study demonstrates that debugging embedded software differs from desktop debugging due to hardware interaction, low‑level language semantics, and timing constraints. Successful debugging is characterized by a smooth, low‑transition workflow, establishing a baseline run, limiting edits to the relevant function, and maintaining focus on the identified bug location. These findings have practical implications for curricula—emphasizing disciplined debugging strategies—and for tool developers, who might design IDE features that visualize activity transitions, warn against multi‑function edits in a single compile, and automatically capture baseline runs.
