Detection and Analysis of 2016 US Presidential Election Related Rumors on Twitter

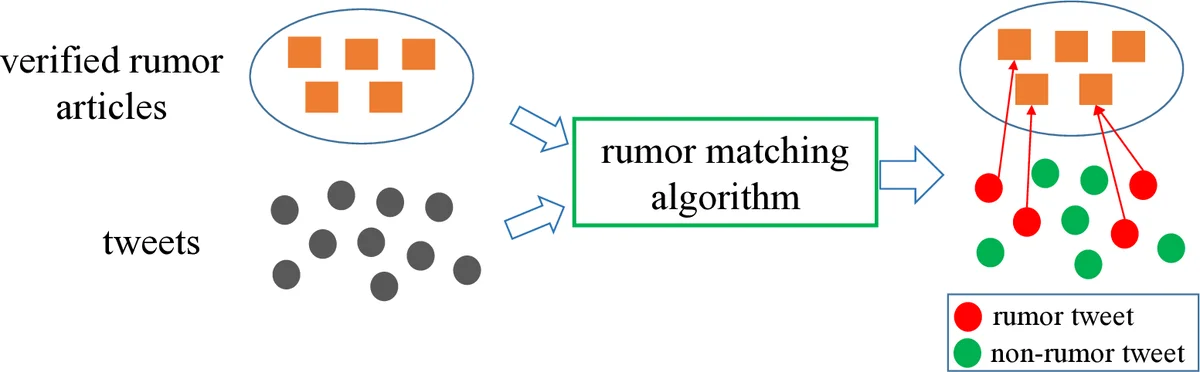

The 2016 U.S. presidential election has witnessed the major role of Twitter in the year’s most important political event. Candidates used this social media platform extensively for online campaigns. Meanwhile, social media has been filled with rumors, which might have had huge impacts on voters’ decisions. In this paper, we present a thorough analysis of rumor tweets from the followers of two presidential candidates: Hillary Clinton and Donald Trump. To overcome the difficulty of labeling a large amount of tweets as training data, we detect rumor tweets by matching them with verified rumor articles. We analyze over 8 million tweets collected from the followers of the two candidates. Our results provide answers to several primary concerns about rumors in this election, including: which side of the followers posted the most rumors, who posted these rumors, what rumors they posted, and when they posted these rumors. The insights of this paper can help us understand the online rumor behaviors in American politics.

💡 Research Summary

The paper presents a comprehensive study of rumor propagation on Twitter during the 2016 United States presidential election, focusing on the followers of the two major candidates, Hillary Clinton and Donald Trump. Recognizing the prohibitive cost and interpretability issues of traditional supervised rumor detection methods, the authors adopt a novel text‑matching approach that leverages verified rumor articles from Snopes.com as gold‑standard references.

Data Collection

The authors first compiled a corpus of 1,723 verified rumor articles from Snopes, covering a wide range of election‑related false claims. Using the Twitter API, they randomly sampled roughly 10,000 followers of each candidate from the millions of accounts that follow them. For each selected user, up to 3,000 of the most recent tweets were retrieved, yielding a total of 4,452,087 tweets from 7,283 Clinton followers and 4,279,050 tweets from 7,339 Trump followers—over 8.7 million tweets in all.

Labeled Test Set

To evaluate matching algorithms, a manually annotated test set was built. The authors randomly chose 100 rumor articles, extracted representative keywords, and searched the large tweet pool for candidate matches. Human annotators then verified whether each retrieved tweet actually corresponded to the rumor article. This process produced 2,500 rumor tweets linked to 86 distinct rumor articles, together with an equal number of non‑rumor tweets, enabling both binary classification and fine‑grained rumor identification experiments.

Matching Algorithms Compared

Five matching strategies were examined: (1) TF‑IDF, (2) BM25, (3) Word2Vec (averaged word vectors), (4) Doc2Vec (document embeddings), and (5) a lexicon‑based method derived from prior work. For the embedding‑based approaches, pre‑trained Word2Vec vectors (200 dimensions, trained on 27 billion tweets) and unsupervised Doc2Vec vectors (400 dimensions) were employed; similarity was measured via cosine distance. BM25 and TF‑IDF are classic bag‑of‑words models that incorporate term frequency and document length normalization.

Evaluation Results

Using the 5,000‑tweet validation set, precision‑recall curves were plotted for each method. BM25 achieved the highest F1 score (0.82) and the best overall trade‑off between precision and recall. TF‑IDF performed slightly worse (F1 = 0.758), while the embedding methods lagged (Word2Vec = 0.764, Doc2Vec = 0.745). The lexicon approach yielded very high precision (0.862) but negligible recall (0.008), making it unsuitable for large‑scale analysis. For the fine‑grained identification task—correctly assigning a tweet to its originating rumor article—BM25 again led with 79.9 % accuracy, marginally ahead of TF‑IDF (79.5 %).

Large‑Scale Rumor Detection

Given its superior performance, BM25 was selected for the full‑scale analysis. The similarity threshold was set to h = 30, a value that produced 94.7 % precision and 31.5 % recall on the test set, favoring precision to ensure that the subsequent behavioral analysis rested on reliable detections. Applying this filter to the 8.7 million tweets identified a substantial number of rumor tweets for further study.

Behavioral Findings

-

Overall Rumor Volume – Across the entire dataset, Clinton followers posted rumor tweets at a rate of 1.20 % of their total tweets, while Trump followers posted at 1.16 %. Thus, Clinton’s base was slightly more active in rumor generation overall.

-

Election‑Period Dynamics – When focusing on the election window (April through Election Day), the pattern reversed: Trump followers’ rumor ratio rose to 1.35 %, an 18 % increase over their all‑time baseline, whereas Clinton followers’ ratio modestly increased to 1.26 %. This suggests heightened rumor activity among Trump supporters as the election approached.

-

Concentration of Rumor Production – The distribution of rumor posting is highly skewed. The top 10 % of users (by number of rumor tweets) contributed roughly 50 % of all rumor tweets, and the top 20 % contributed about 70 %. Moreover, these prolific rumor posters also exhibited higher overall rumor‑to‑total‑tweet ratios, indicating a focused intent rather than random noise.

-

Case Study of an Individual User – One Trump follower authored 3,211 tweets in the dataset, of which 307 (9.6 %) were classified as rumors. Keyword analysis showed this user’s tweets heavily referenced “Clinton,” “Sanders,” “Trump,” and “election.” Within the rumor subset, 15 % concerned Clinton, 28 % concerned Sanders, and only 10 % concerned Trump, revealing a tendency to spread rumors about opponents rather than the candidate they follow.

-

Content of Rumors – By matching each rumor tweet back to its Snopes article, the authors could categorize the topics. The majority of rumors centered on the two candidates, their alleged scandals, and related policy issues. This mapping provides a concrete link between online rumor chatter and the fact‑checked claims circulating in the broader media ecosystem.

Implications and Limitations

The study demonstrates that a simple, interpretable matching technique can scale to millions of tweets while maintaining high precision, offering a practical tool for real‑time monitoring of political misinformation. However, reliance on exact or near‑exact lexical overlap means that more subtle, metaphorical, or heavily paraphrased rumors may be missed. The approach also assumes the existence of a comprehensive, up‑to‑date rumor repository; emerging rumors not yet catalogued on Snopes would escape detection. Finally, the analysis is confined to the U.S. two‑candidate context, limiting direct generalization to multi‑party systems or non‑English environments.

Future Directions

The authors suggest integrating modern transformer‑based sentence embeddings (e.g., BERT, RoBERTa) to capture deeper semantic similarity, potentially improving recall without sacrificing precision. Expanding the rumor knowledge base to include multilingual fact‑checking sites would enable cross‑cultural studies. Moreover, coupling the matching pipeline with network‑level diffusion analysis could reveal how rumor cascades propagate through follower graphs, offering richer insights for platform moderation and policy‑making.

In sum, the paper provides a methodologically sound, scalable framework for detecting election‑related rumors on Twitter and yields actionable insights into who spreads rumors, what they are about, and how their activity fluctuates over the campaign timeline.

Comments & Academic Discussion

Loading comments...

Leave a Comment