A Hierarchical Approach for Generating Descriptive Image Paragraphs

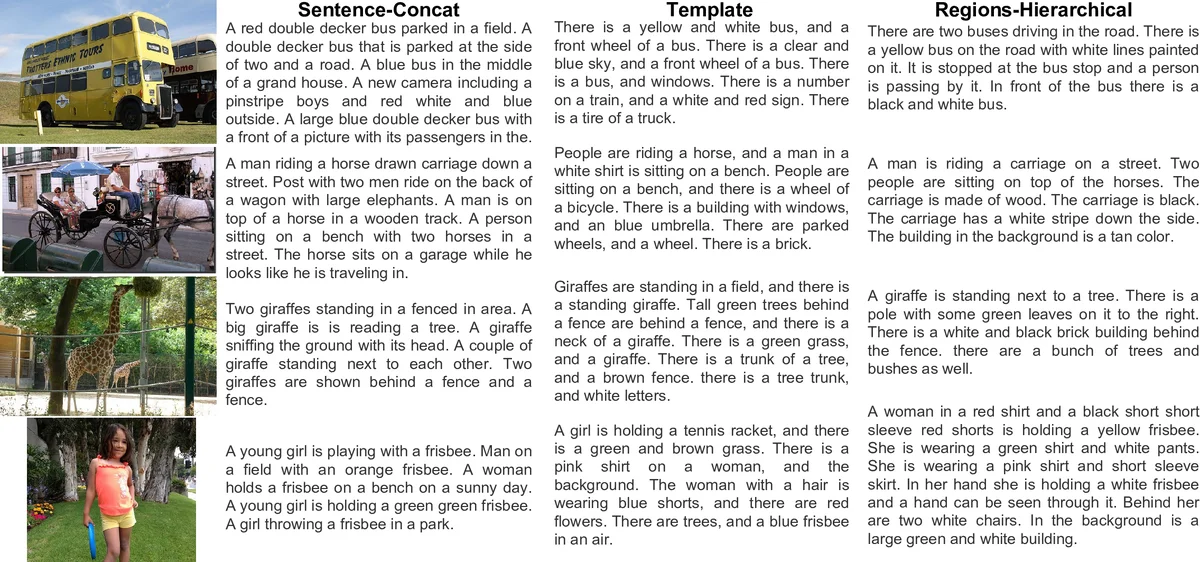

Recent progress on image captioning has made it possible to generate novel sentences describing images in natural language, but compressing an image into a single sentence can describe visual content in only coarse detail. While one new captioning approach, dense captioning, can potentially describe images in finer levels of detail by captioning many regions within an image, it in turn is unable to produce a coherent story for an image. In this paper we overcome these limitations by generating entire paragraphs for describing images, which can tell detailed, unified stories. We develop a model that decomposes both images and paragraphs into their constituent parts, detecting semantic regions in images and using a hierarchical recurrent neural network to reason about language. Linguistic analysis confirms the complexity of the paragraph generation task, and thorough experiments on a new dataset of image and paragraph pairs demonstrate the effectiveness of our approach.

💡 Research Summary

The paper addresses the limitation of traditional image captioning, which typically produces a single sentence that can only convey coarse information about an image, and dense captioning, which generates many region‑level phrases but lacks overall coherence. The authors propose a novel task: generating full paragraphs that describe an image in a detailed, unified manner. To achieve this, they design a system that decomposes both the visual input and the textual output into hierarchical components.

On the visual side, a region detector based on a VGG‑16 backbone and a Region Proposal Network (as used in dense captioning) extracts 50 candidate regions per image. Each region is represented by a 4096‑dimensional CNN feature vector. These region vectors are projected into a lower‑dimensional space and aggregated with element‑wise max‑pooling, producing a single pooled vector that captures the semantics of the whole scene while preserving the expressive power of a set function.

The language model consists of two stacked LSTMs. The “sentence‑level” LSTM receives the pooled visual vector, decides dynamically how many sentences to generate (using a halting probability at each step), and emits a topic vector for each sentence. The “word‑level” LSTM then takes the corresponding topic vector and generates the words of that sentence. This hierarchical recurrent architecture reduces the effective sequence length for each LSTM (≈5–6 steps for the sentence LSTM, ≈12 steps for the word LSTM) and therefore mitigates vanishing‑gradient problems that plague flat, word‑by‑word paragraph generators.

A new dataset was collected to evaluate the approach: 19,551 images from MS COCO and Visual Genome were each annotated with a single paragraph (average 67.5 tokens, about 5.7 sentences). Linguistic analysis shows that paragraphs are roughly six times longer than typical COCO captions, have three times higher lexical diversity, and contain more verbs and pronouns, indicating richer descriptions of actions and relationships. The authors also compute a diversity score based on CIDEr similarity, finding that sentences within a paragraph are far less redundant than multiple independent captions for the same image.

Experiments compare the hierarchical model against several baselines, including a non‑hierarchical word‑level LSTM, standard single‑sentence captioning models, and dense captioning pipelines. The proposed method outperforms all baselines on BLEU‑4, METEOR, and CIDEr, and human evaluations rate its output higher in coherence and detail. An ablation study demonstrates the benefit of transferring knowledge from a dense captioning model: initializing the region detector and language components with pretrained weights yields faster convergence and higher final scores. Replacing the dense‑captioning region detector with a pure object‑detector degrades paragraph quality, confirming that capturing background and relational cues is essential for rich paragraph generation.

In summary, the paper introduces a hierarchical visual‑language framework that jointly models image regions and sentence structure, enabling the generation of coherent, detailed paragraphs. By leveraging region‑level semantics, max‑pooling aggregation, and a two‑level LSTM, the system bridges the gap between fine‑grained visual understanding and long‑range linguistic reasoning. The work opens avenues for more expressive visual storytelling, advanced human‑computer interaction, and downstream tasks such as image‑based narrative generation or assistive technologies.

Comments & Academic Discussion

Loading comments...

Leave a Comment