A Study on the Extraction and Analysis of a Large Set of Eye Movement Features during Reading

This work presents a study on the extraction and analysis of a set of 101 categories of eye movement features from three types of eye movement events: fixations, saccades, and post-saccadic oscillations. The eye movements were recorded during a reading task. For the categories of features with multiple instances in a recording, we extract corresponding feature subtypes by calculating descriptive statistics on the distributions of these instances. A unified framework of detailed descriptions and mathematical formulas is provided for the extraction of the feature set. The analysis of feature values is performed using a large database of eye movement recordings from a normative population of 298 subjects. We demonstrate the central tendency and overall variability of feature values over the experimental population and, more importantly, we quantify the test-retest reliability (repeatability) of each separate feature. The described methods and analysis can provide valuable tools in fields that study eye movements, such as behavioral studies, attention and cognition research, medical research, biometric recognition, and human-computer interaction.

💡 Research Summary

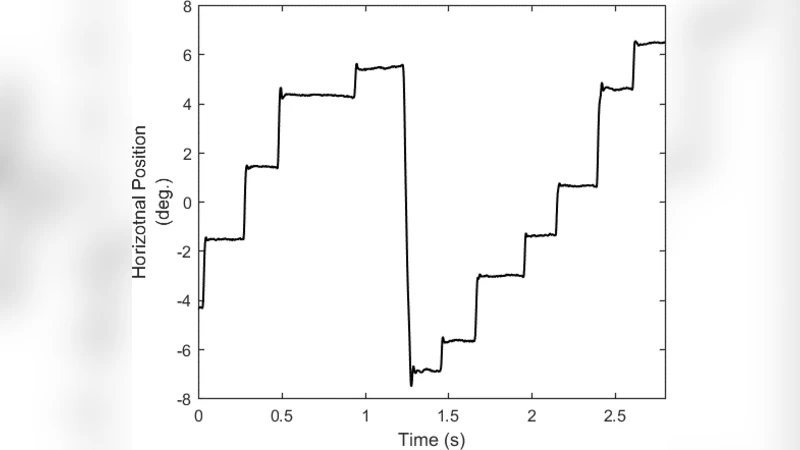

This paper presents a comprehensive framework for extracting, quantifying, and evaluating a large set of eye‑movement features recorded during a reading task. The authors focus on three fundamental event types (fixations, saccades, and post‑saccadic oscillations, or PSOs) and define 101 distinct feature categories that span temporal, spatial, kinematic, and waveform dimensions. For categories that generate multiple instances within a single recording (e.g., several fixations), they compute a suite of descriptive statistics (mean, median, standard deviation, minimum, maximum, skewness, and kurtosis), creating sub‑features that capture the distribution of each category's instances.
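The sub‑feature step can be illustrated with a minimal sketch. It is not the authors' code: the function name `describe`, the sample durations, and the choice of estimators (sample standard deviation, moment‑based skewness and non‑excess kurtosis) are assumptions made for illustration, and only the Python standard library is used so that the block runs without dependencies.

```python
import math
import statistics


def describe(values):
    """Summarize the instances of one feature category as sub-features.

    Assumption: skewness and kurtosis use population central moments;
    the exact estimators used in the paper are not stated in this summary.
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two instances")
    mean = statistics.fmean(values)
    # Population central moments of order 2, 3, and 4.
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return {
        "mean": mean,
        "median": statistics.median(values),
        "std": statistics.stdev(values),  # sample SD (divides by n - 1)
        "min": min(values),
        "max": max(values),
        # Undefined for constant input, so report NaN in that case.
        "skewness": m3 / m2 ** 1.5 if m2 > 0 else math.nan,
        "kurtosis": m4 / m2 ** 2 if m2 > 0 else math.nan,  # non-excess
    }


# Hypothetical fixation durations (ms) from a single recording.
fixation_durations_ms = [180.0, 220.0, 240.0, 260.0, 300.0]
summary = describe(fixation_durations_ms)
for name, value in summary.items():
    print(f"fixation_duration_{name}: {value:.3f}")
```

Each key of `summary` corresponds to one sub‑feature of the "fixation duration" category; the same function could be applied to any category that yields several instances per recording.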

Data were collected from a normative sample of 298 adult participants (balanced gender, ages 18‑55) who read the same passage while their eye movements were captured with a high‑speed eye‑tracker operating at ≥1000 Hz. Each participant completed the reading session twice on separate days, enabling a test‑retest reliability analysis. Raw gaze data were pre‑processed to remove blinks and signal loss, and events were automatically detected using a hybrid algorithm that was subsequently validated by human experts.
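The hybrid detection algorithm itself is not described in this summary. As a stand‑in, the sketch below shows a plain velocity‑threshold classifier, a common baseline for separating saccadic samples from fixation samples. The function name, the 30 °/s threshold, and the sample trace are all assumptions for illustration; blink removal and PSO detection are not covered.

```python
import math


def label_saccadic_steps(x_deg, y_deg, sampling_hz=1000.0, threshold_deg_s=30.0):
    """Mark each inter-sample step as saccadic when its speed exceeds a threshold.

    x_deg, y_deg: gaze position in degrees of visual angle, one value per sample.
    Returns a list of booleans with len(x_deg) - 1 entries.
    Assumption: a simple velocity threshold, not the paper's hybrid method.
    """
    if len(x_deg) != len(y_deg):
        raise ValueError("x and y must have the same length")
    dt = 1.0 / sampling_hz
    labels = []
    for i in range(1, len(x_deg)):
        # Euclidean displacement between consecutive samples, divided by dt.
        speed = math.hypot(x_deg[i] - x_deg[i - 1], y_deg[i] - y_deg[i - 1]) / dt
        labels.append(speed > threshold_deg_s)
    return labels


# Hypothetical trace: slow drift, a fast horizontal jump, then slow drift again.
x = [0.0, 0.001, 0.002, 0.302, 0.602, 0.603]
y = [0.0] * len(x)
labels = label_saccadic_steps(x, y)
print(labels)
```

Runs of consecutive `True` steps would then be grouped into saccade events, and the runs between them into fixation candidates.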

Statistical analysis of the entire cohort provides population‑level baselines for each feature: means, medians, standard deviations, and inter‑quartile ranges are reported, illustrating typical fixation durations (~240 ms, SD ≈ 45 ms), saccade amplitudes (~4.5° on average, SD ≈ 0.6°), saccade peak velocities (~450 °/s, SD ≈ 60 °/s), and PSO amplitudes and frequencies. These descriptive metrics serve as reference values for future behavioral or clinical studies.

The core contribution lies in the reliability assessment. Intraclass correlation coefficients (ICCs) and Cronbach’s α were computed for every feature across the two sessions. Features such as mean fixation duration, mean saccade velocity, and PSO amplitude exhibit very high repeatability (ICC > 0.85), indicating that they are stable within individuals and thus suitable for biometric identification, longitudinal monitoring, or as biomarkers in neurological disorders. Conversely, features with moderate ICCs (0.4–0.6), including higher‑order distributional moments (skewness, kurtosis) of fixation intervals and non‑linear saccade trajectory descriptors, show greater sensitivity to experimental conditions (lighting, text difficulty) and measurement noise. The authors discuss the implications of these findings for feature selection in downstream machine‑learning pipelines.
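To make the reliability measure concrete, here is a minimal sketch of one ICC variant computed over two sessions. The summary does not say which ICC form the authors used; ICC(2,1) (two‑way random effects, absolute agreement, single measurement) is an assumption here, and the function name and the scores are hypothetical.

```python
def icc_2_1(scores):
    """ICC(2,1) from a subjects-by-sessions table.

    scores: list of rows, one row per subject, one value per session.
    Assumption: the two-way random-effects, absolute-agreement form;
    the paper's exact ICC variant is not stated in this summary.
    """
    n = len(scores)     # number of subjects
    k = len(scores[0])  # number of sessions
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Two-way ANOVA sums of squares.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_err = ss_total - ss_rows - ss_cols

    msr = ss_rows / (n - 1)              # between-subject mean square
    msc = ss_cols / (k - 1)              # between-session mean square
    mse = ss_err / ((n - 1) * (k - 1))   # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


# Hypothetical mean fixation durations (ms): [session 1, session 2] per subject.
mean_fixation_ms = [
    [230.0, 235.0],
    [250.0, 245.0],
    [210.0, 215.0],
    [270.0, 265.0],
]
icc = icc_2_1(mean_fixation_ms)
print(f"ICC(2,1) = {icc:.3f}")
```

In this toy table the between‑subject spread is large relative to the session‑to‑session differences, so the coefficient is close to 1; a feature whose values shuffle between sessions would score much lower.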

Mathematically, each feature extraction step is expressed with explicit formulas. The authors employ probability‑density‑function (PDF) based summarization for distributions, Bayesian parameter estimation for noisy measurements, and multivariate normalization to ensure comparability across participants. Implementation details are provided in Python using open‑source libraries (NumPy, SciPy, pandas), and the full codebase along with the raw and processed datasets is made publicly available, facilitating reproducibility and extension by the research community.

In the discussion, the authors highlight several key points: (1) high‑reliability features can underpin robust personal‑profile models for eye‑movement‑based authentication or cognitive‑load estimation; (2) features with lower reliability underscore the need for standardized experimental protocols (e.g., consistent illumination, controlled text complexity) to mitigate environmental variance; (3) the current normative database can serve as a benchmark for clinical populations (e.g., Alzheimer’s disease, ADHD, Parkinson’s disease) where deviations from typical eye‑movement patterns are expected; and (4) the inclusion of sub‑features derived from distributional statistics enriches the feature space beyond traditional single‑value descriptors, potentially improving classification performance in complex tasks.

Overall, the study delivers a rigorously validated, openly shared set of 101 eye‑movement features, complete with mathematical definitions, population statistics, and reliability metrics. This resource equips researchers across behavioral science, cognitive neuroscience, medical diagnostics, biometric security, and human‑computer interaction with a standardized toolkit for extracting meaningful information from eye‑tracking data, and it lays the groundwork for future work that integrates these features into real‑time adaptive systems or predictive models.
