Bolt-on Differential Privacy for Scalable Stochastic Gradient Descent-based Analytics

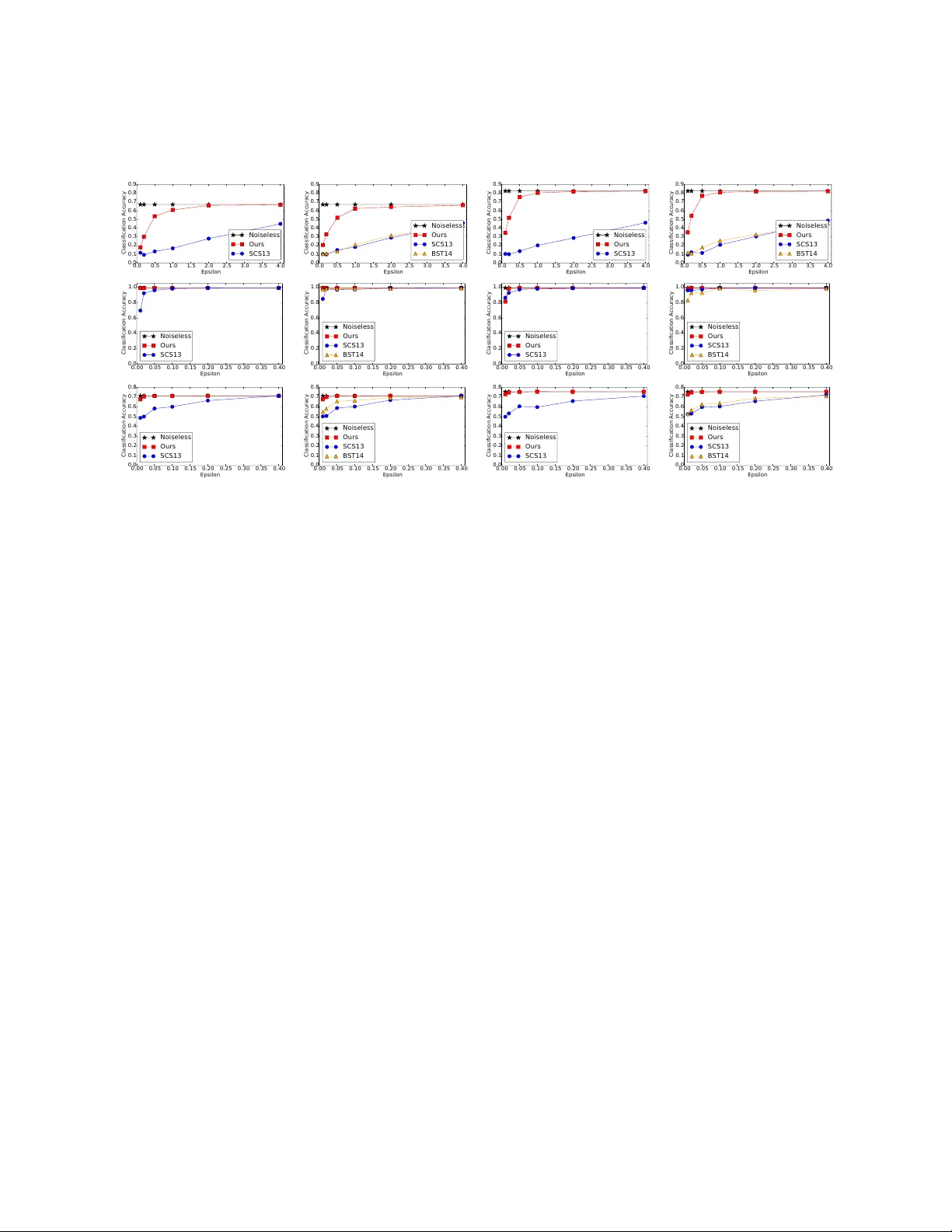

While significant progress has been made separately on analytics systems for scalable stochastic gradient descent (SGD) and on private SGD, none of the major scalable analytics frameworks has incorporated differentially private SGD. There are two inter…

Authors: Xi Wu, Fengan Li, Arun Kumar