Robust Estimation of Self-Exciting Generalized Linear Models with Application to Neuronal Modeling

We consider the problem of estimating self-exciting generalized linear models from limited binary observations, where the history of the process serves as the covariate. We analyze the performance of two classes of estimators, namely the $\ell_1$-regularized maximum likelihood and greedy estimators, for a canonical self-exciting process and characterize the sampling tradeoffs required for stable recovery in the non-asymptotic regime. Our results extend those of compressed sensing for linear and generalized linear models with i.i.d. covariates to those with highly inter-dependent covariates. We further provide simulation studies as well as application to real spiking data from the mouse’s lateral geniculate nucleus and the ferret’s retinal ganglion cells which agree with our theoretical predictions.

💡 Research Summary

This paper addresses the challenging problem of estimating the parameters of self‑exciting generalized linear models (GLMs) from a limited number of binary observations, where the process history itself serves as the covariate. The authors focus on two estimator families: an ℓ₁‑regularized maximum‑likelihood estimator (ℓ₁‑MLE) and a greedy algorithm they call Point Process Orthogonal Matching Pursuit (POMP), a generalization of the classic Orthogonal Matching Pursuit to the setting of convex loss functions derived from point‑process likelihoods.
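To make "the process history itself serves as the covariate" concrete, here is a minimal simulation sketch. It assumes a logistic link and an all-zero initial history; the function names `simulate_self_exciting` and `history_design`, the baseline `mu`, and all parameter values are illustrative and not taken from the paper.

```python
import numpy as np

def simulate_self_exciting(theta, mu, n, rng):
    """Simulate n binary samples whose spiking probability depends on the last p samples.

    Assumption: logistic link, P(x_i = 1 | history) = sigmoid(mu + theta . history).
    """
    p = len(theta)
    x = np.zeros(n + p, dtype=int)        # first p entries: all-zero initial history
    for i in range(p, n + p):
        history = x[i - p:i][::-1]        # most recent sample first
        prob = 1.0 / (1.0 + np.exp(-(mu + theta @ history)))
        x[i] = rng.random() < prob
    return x

def history_design(x, p):
    """Build the covariate matrix: row k holds the p samples preceding x[p + k]."""
    n = len(x) - p
    X = np.stack([x[i - p:i][::-1] for i in range(p, n + p)])
    return X, x[p:]

# Hypothetical example: a sparse history kernel with two nonzero lags.
rng = np.random.default_rng(0)
theta = np.zeros(50)
theta[[2, 7]] = [1.0, -0.5]
x = simulate_self_exciting(theta, mu=-2.0, n=1000, rng=rng)
X, y = history_design(x, p=50)
```

Each row of `X` is a shifted window of the same spike train, which is why the covariates are strongly inter-dependent rather than i.i.d.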

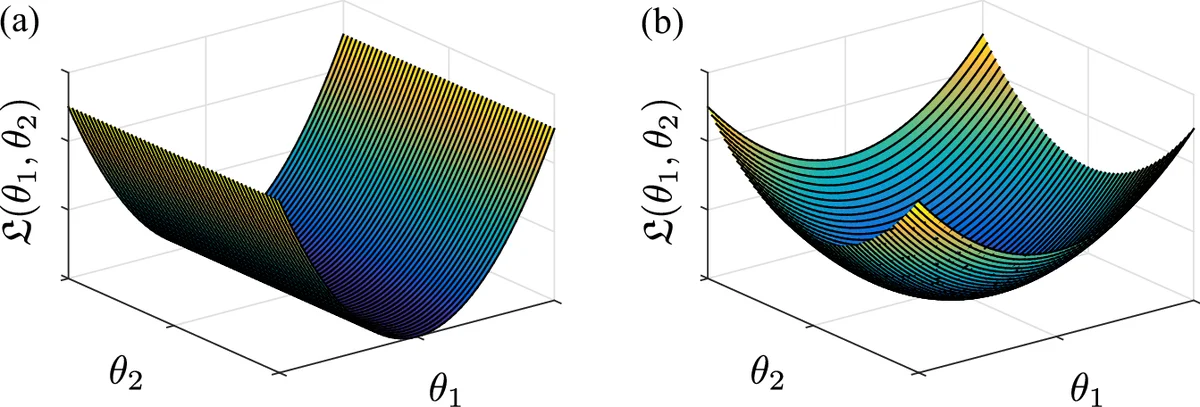

The theoretical contribution is a set of non‑asymptotic error bounds that extend compressed‑sensing guarantees—traditionally derived under i.i.d. covariates—to the highly dependent covariate structure inherent in self‑exciting processes. Assuming the true parameter vector θ is (s, ξ)‑compressible (i.e., it can be well approximated by an s‑sparse vector with ℓ₁‑error σₛ(θ)=O(s^{1‑1/ξ})), the authors prove that a sample size n on the order of s·log p·polylog n suffices for stable recovery. Specifically, with high probability, the ℓ₁‑MLE satisfies

‖θ̂ − θ‖₂ ≤ C·σₛ(θ)/√s

provided the regularization parameter γₙ is chosen appropriately. The bound holds even when n ≪ p, i.e., in the compressed‑sensing regime. For the greedy POMP algorithm, a similar sample‑complexity condition guarantees linear convergence: after s⋆ iterations (with s⋆≈s), the estimator attains the same ℓ₂ error rate while requiring only O(ns⋆) computational effort, a substantial improvement over generic convex solvers in high dimensions.
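The two estimator families can be sketched as follows. This is not the authors' implementation: it assumes a logistic link, solves the ℓ₁‑penalized likelihood with plain proximal gradient descent (ISTA), and uses an OMP‑style greedy loop in the spirit of POMP that refits by gradient descent on the current support. The names `l1_mle`, `greedy_pursuit`, `safe_step`, and the unpenalized baseline `mu` are illustrative assumptions.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def gradient(X, y, mu, theta):
    """Gradient of the average negative Bernoulli log-likelihood w.r.t. (mu, theta)."""
    resid = sigmoid(mu + X @ theta) - y
    return resid.mean(), X.T @ resid / len(y)

def safe_step(X):
    """Step size 1/L, where L = ||[1, X]||_2^2 / (4n) bounds the logistic-loss curvature."""
    A = np.column_stack([np.ones(len(X)), X])
    return 4.0 * len(X) / np.linalg.norm(A, 2) ** 2

def l1_mle(X, y, gamma, iters=500):
    """Proximal gradient (ISTA) for the l1-penalized likelihood; mu is left unpenalized."""
    step = safe_step(X)
    mu, theta = 0.0, np.zeros(X.shape[1])
    for _ in range(iters):
        g_mu, g_theta = gradient(X, y, mu, theta)
        mu -= step * g_mu
        z = theta - step * g_theta
        theta = np.sign(z) * np.maximum(np.abs(z) - step * gamma, 0.0)  # soft threshold
    return mu, theta

def greedy_pursuit(X, y, s_star, inner_iters=200):
    """OMP-style greedy sketch: refit on the support, then add the largest-gradient coordinate."""
    step = safe_step(X)
    mu, theta = 0.0, np.zeros(X.shape[1])
    active = np.zeros(X.shape[1], dtype=bool)
    for k in range(s_star + 1):
        for _ in range(inner_iters):            # refit mu and the active coefficients
            g_mu, g_theta = gradient(X, y, mu, theta)
            mu -= step * g_mu
            theta[active] -= step * g_theta[active]
        if k == s_star:
            break                               # final pass only refits
        _, g = gradient(X, y, mu, theta)
        g[active] = 0.0                         # never reselect an active coordinate
        active[np.argmax(np.abs(g))] = True
    return mu, theta, np.flatnonzero(active)
```

The greedy loop touches only the active coordinates during refitting, which is the source of its lower cost per iteration compared with a generic convex solver run over all p coefficients.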

The analysis accommodates several link functions (identity, log, logistic) and assumes a “fast‑mixing” condition (maximum spiking probability π_max < ½) to control dependence in the history. The proofs rely on concentration inequalities for dependent Bernoulli sequences, restricted strong convexity of the negative log‑likelihood, and a careful decomposition of the gradient’s correlation with the true support.
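As a rough illustration of the three link functions and the π_max < ½ condition, the sketch below maps a linear predictor to a spiking probability under each inverse link and reports the largest probability over a set of histories. Treating π_max as the maximum over the supplied history rows is an assumption made here for illustration; the helper name `max_spiking_probability` and the baseline `mu` are hypothetical.

```python
import numpy as np

# Inverse links: map the linear predictor eta = mu + theta . history to a probability.
INVERSE_LINKS = {
    "identity": lambda eta: eta,
    "log": lambda eta: np.exp(eta),
    "logistic": lambda eta: 1.0 / (1.0 + np.exp(-eta)),
}

def max_spiking_probability(X, mu, theta, link="logistic"):
    """Largest spiking probability over the history rows in X under the chosen link."""
    probs = INVERSE_LINKS[link](mu + X @ theta)
    if np.any((probs < 0) | (probs > 1)):
        raise ValueError("parameters give probabilities outside [0, 1] under this link")
    return float(probs.max())
```

For the identity and log links the parameters must be constrained so the output stays in [0, 1], which is why the helper raises an error instead of clipping.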

Empirical validation proceeds in two stages. First, synthetic experiments vary the ambient dimension p (500–2000), the sparsity level s, and the spiking probability. The results confirm that both ℓ₁‑MLE and POMP achieve low reconstruction error once the theoretical sample threshold is crossed, with POMP offering orders‑of‑magnitude speedups. Second, two sets of real neural recordings are analyzed: (1) mouse lateral geniculate nucleus (LGN) spikes and (2) ferret retinal ganglion cell spikes. In both cases, the estimated history kernels are sparse, reveal biologically plausible latency structures (5–15 ms), and predict held‑out spiking activity with accuracy comparable to models trained on the full dataset. Notably, using fewer than 10% of the total spikes still yields reliable parameter estimates, illustrating the practical utility of the proposed theory.

The paper concludes by emphasizing the relevance of robust, sample‑efficient GLM estimation for emerging neurotechnology such as neural prostheses and closed‑loop brain‑machine interfaces, where data acquisition bandwidth is limited. Future directions include extending the framework to multi‑neuron networks, handling non‑stationary firing regimes, and exploring adaptive regularization schemes that can automatically tune γₙ based on observed data. Overall, the work bridges a gap between compressed‑sensing theory and point‑process modeling, providing both rigorous guarantees and actionable algorithms for neuroscientists working with sparse, history‑dependent spike data.
