Overcoming model simplifications when quantifying predictive uncertainty

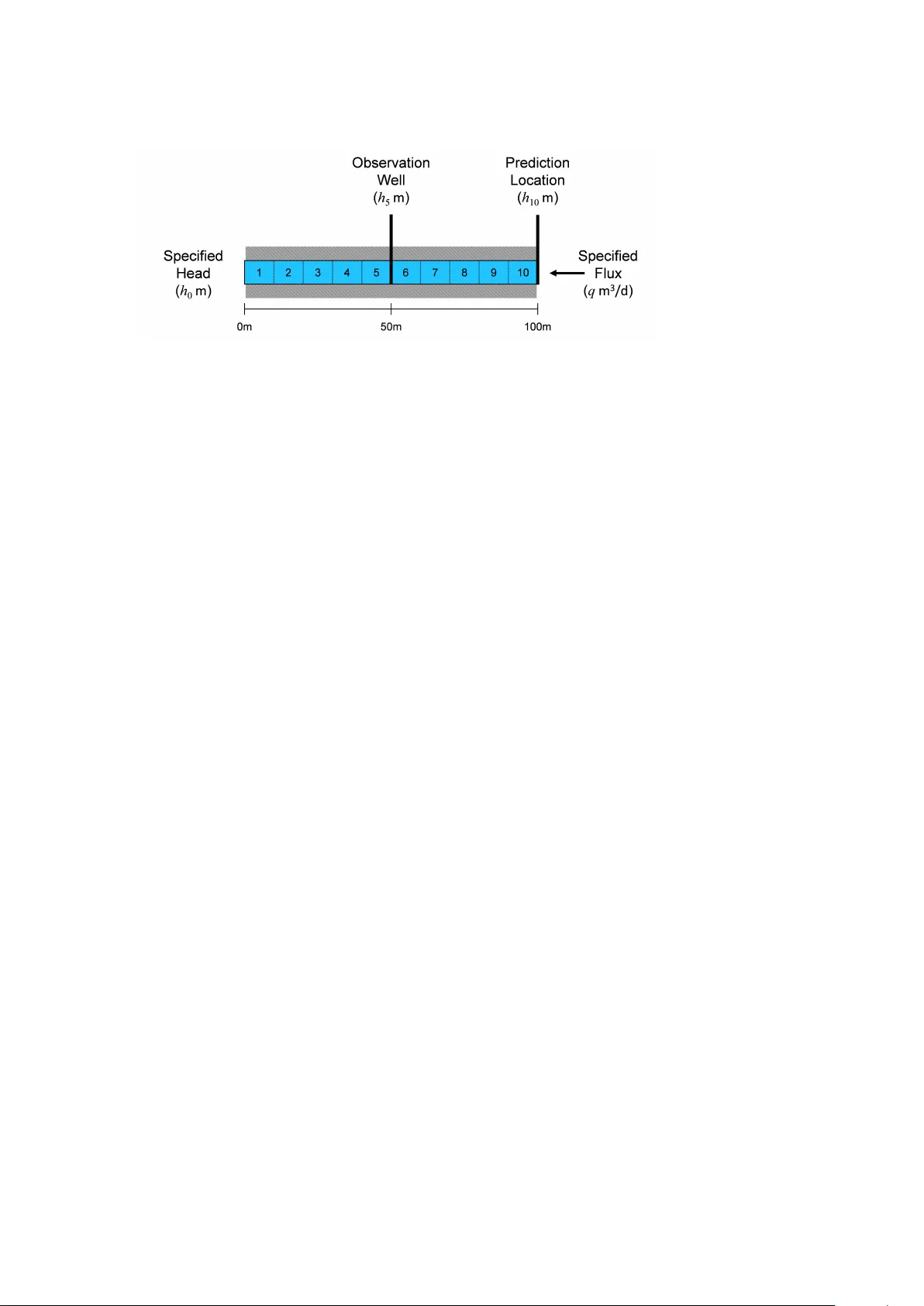

It is generally accepted that all models are wrong; the difficulty lies in determining which are useful. Here, a useful model is considered to be one that is capable of combining data and expert knowledge, through an inversion or calibration process, to ad…

Authors: George M. Mathews, John Vial