PriMaL: A Privacy-Preserving Machine Learning Method for Event Detection in Distributed Sensor Networks

This paper introduces PriMaL, a general PRIvacy-preserving MAchine-Learning method for reducing the privacy cost of information transmitted through a network. Distributed sensor networks are often used for automated classification and detection of abnormal events in high-stakes situations, e.g., fires in buildings, earthquakes, or crowd disasters. Such networks might transmit privacy-sensitive information, e.g., the GPS location of smartphones, which might be disclosed if the network is compromised. Privacy concerns might slow down the adoption of the technology, particularly in social sensing scenarios where participation is voluntary; solutions are therefore needed that improve privacy without compromising event detection accuracy. PriMaL is implemented as a machine-learning layer that works on top of an existing event detection algorithm. Experiments are run in a general simulation framework, for several network topologies and parameter values. The privacy footprint of state-of-the-art event detection algorithms is compared within the proposed framework. Results show that PriMaL is able to reduce the privacy cost of a distributed event detection algorithm below that of the corresponding centralized algorithm, within the bounds of some assumptions about the protocol. Moreover, the performance of the distributed algorithm is not statistically worse than that of the centralized algorithm.

💡 Research Summary

The paper introduces PriMaL (PRIvacy‑preserving MAchine‑Learning), a general framework that sits on top of any existing event‑detection algorithm and reduces the privacy cost associated with data transmitted in distributed sensor networks. The authors motivate the work with two concrete case studies: fire detection using infrared cameras in households and earthquake detection via smartphones. Both involve privacy‑sensitive measurements such as location or image data. In these scenarios, participation is voluntary (social sensing), and an “honest‑but‑curious” supervisor may legitimately process the data for detection while also attempting to infer private information.

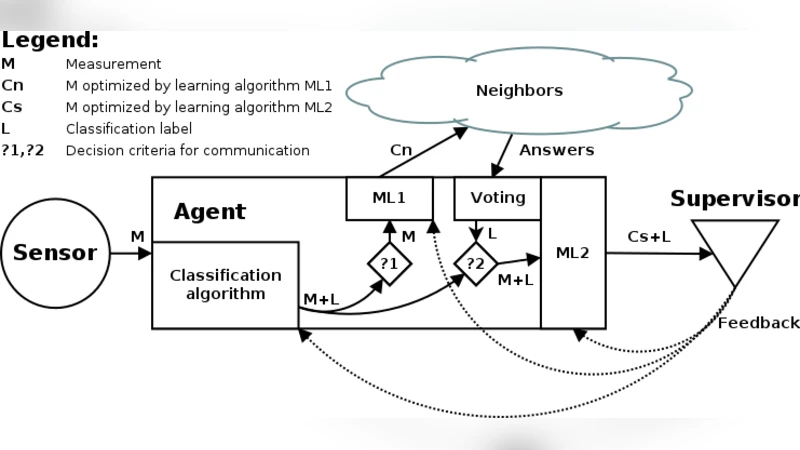

PriMaL’s core idea is to pair each sensor with an autonomous agent that learns a local classifier (the authors use one‑class SVMs, but the method is classifier‑agnostic). When an event is detected, the agent sends a minimal message to its one‑hop neighbours containing only an anonymised agent ID, the location (sensor ID), the raw measurement, a timestamp, the event type, and the sensor type. The privacy cost is defined as the amount of privacy‑sensitive information contained in transmitted messages; the protocol therefore randomises agent IDs at each transmission and restricts messages to one‑hop neighbours to prevent long‑range tracking.
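The agent behaviour described above can be sketched roughly as follows. This is a minimal illustration, not the authors' implementation: the class and field names (`EventMessage`, `Agent`, `observe`) are hypothetical, and a simple z-score test on local history stands in for the one-class SVM so that the example needs only the standard library.

```python
import secrets
import statistics
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class EventMessage:
    # Field names are hypothetical; the summary only lists the message contents.
    agent_id: str        # anonymised ID, regenerated for every transmission
    sensor_id: str       # stands in for the location
    measurement: float   # raw measurement
    timestamp: float
    event_type: str
    sensor_type: str


class Agent:
    def __init__(self, sensor_id, sensor_type, threshold=3.0):
        self.sensor_id = sensor_id
        self.sensor_type = sensor_type
        self.threshold = threshold   # assumed z-score cut-off
        self.neighbours = []         # one-hop neighbours only
        self.history = []            # measurements considered normal
        self.inbox = []              # messages received from neighbours

    def is_outlier(self, value):
        # Stand-in for the local one-class classifier (assumption):
        # flag values far from the mean of the local history.
        if len(self.history) < 2:
            return False
        mean = statistics.mean(self.history)
        std = statistics.stdev(self.history)
        if std == 0:
            return value != mean
        return abs(value - mean) / std > self.threshold

    def observe(self, value, event_type="anomaly"):
        # Normal readings stay local and only update the model.
        if not self.is_outlier(value):
            self.history.append(value)
            return None
        # Detected event: build a minimal message with a fresh random ID.
        message = EventMessage(
            agent_id=secrets.token_hex(8),
            sensor_id=self.sensor_id,
            measurement=value,
            timestamp=time.time(),
            event_type=event_type,
            sensor_type=self.sensor_type,
        )
        # Deliver to one-hop neighbours only; no forwarding beyond them.
        for neighbour in self.neighbours:
            neighbour.inbox.append(message)
        return message


# Usage: two neighbouring agents, one observing a sudden spike.
a = Agent("s1", "temperature")
b = Agent("s2", "temperature")
a.neighbours.append(b)
for reading in [20.0, 20.5, 19.5, 20.2, 19.8]:
    a.observe(reading)
first = a.observe(80.0)
second = a.observe(85.0)
```

Because the ID is drawn afresh inside `observe`, the two messages in `b.inbox` carry different `agent_id` values, which is the property the protocol relies on to make linking successive transmissions harder.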

The paper formalises the network model (sensors, agents, supervisor), the communication protocol, and the performance metrics: standard detection quality (precision, recall, F‑measure) together with communication cost and privacy cost. The authors then implement a simulation framework that can generate arbitrary network topologies (star, mesh, tree) and vary key parameters such as the number of sensors, event occurrence probability, and per‑message cost.
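For reference, the detection-quality metrics named above have standard definitions, sketched here for binary event labels. The function name `detection_scores` and the example labels are illustrative assumptions; the paper's exact evaluation code is not shown in this summary.

```python
def detection_scores(predicted, actual):
    """Return (precision, recall, F-measure) for binary event labels."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)        # events correctly detected
    fp = sum(1 for p, a in pairs if p and not a)    # false alarms
    fn = sum(1 for p, a in pairs if not p and a)    # missed events
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f_measure = 2 * precision * recall / (precision + recall)
    return precision, recall, f_measure


# Made-up labels: 1 = event, 0 = no event.
predicted = [1, 1, 0, 0, 1]
actual = [1, 0, 0, 1, 1]
precision, recall, f_measure = detection_scores(predicted, actual)
```

With these labels there are two true positives, one false alarm, and one missed event, so all three scores equal 2/3.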

Three systems are compared: (1) a centralized baseline where all raw measurements are sent to a single supervisor (high privacy cost, optimal accuracy), (2) a naïve distributed baseline that forwards only local alarms (low privacy cost, reduced accuracy), and (3) PriMaL. Results show that PriMaL achieves detection performance statistically indistinguishable from the centralized baseline (precision and recall differences are not significant) while reducing the privacy cost by roughly 30‑45 % across all topologies. In the GPS‑based earthquake scenario, masking location information and transmitting only the necessary measurement dramatically cuts privacy exposure; in the infrared fire scenario, sending only light intensity rather than full images yields similar benefits. Moreover, because only “outlier” messages (those likely to correspond to events) are transmitted, the communication overhead is also lowered.
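The effect of transmitting only outlier messages can be illustrated with a toy accounting sketch. It assumes, purely for illustration, that every transmitted measurement counts as one unit of both communication and privacy cost; the readings, the threshold of 50.0, and the function name `transmitted_units` are made up and do not reproduce the paper's cost model or its reported figures.

```python
def transmitted_units(readings, should_send):
    """Count how many readings a sending policy would transmit."""
    return sum(1 for reading in readings if should_send(reading))


# Synthetic sensor readings with two spikes (illustrative only).
readings = [20.1, 19.8, 20.3, 75.0, 20.0, 19.9, 82.5, 20.2]

# Centralized policy: every raw measurement goes to the supervisor.
centralized = transmitted_units(readings, lambda reading: True)

# Outlier-only policy: send only readings above an assumed threshold.
outlier_only = transmitted_units(readings, lambda reading: reading > 50.0)

# Fraction of transmissions avoided under this toy model.
saving = 1 - outlier_only / centralized
```

In this toy data the centralized policy sends all eight readings and the outlier-only policy sends two. The actual saving in any deployment depends on how often events occur and on how each message field is weighted in the privacy cost.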

The authors acknowledge that PriMaL’s guarantees rely on specific assumptions: (i) agent IDs must be freshly randomised to prevent linking attacks, (ii) communication is limited to one‑hop neighbours, and (iii) the supervisor does not deviate from the honest‑but‑curious model. If any of these assumptions is violated, privacy leakage can increase sharply.

Future work is outlined as extending the framework to asynchronous streaming data, handling multi‑class events, supporting heterogeneous sensor modalities simultaneously, and designing robust protocols against malicious agents (beyond honest‑but‑curious).

In summary, PriMaL demonstrates that a lightweight machine‑learning layer can be integrated into existing distributed detection pipelines to substantially lower privacy exposure without sacrificing detection accuracy. This makes the approach attractive for IoT, smart‑city, and disaster‑response applications where user participation hinges on strong privacy guarantees.
