A Survey of Available Corpora for Building Data-Driven Dialogue Systems

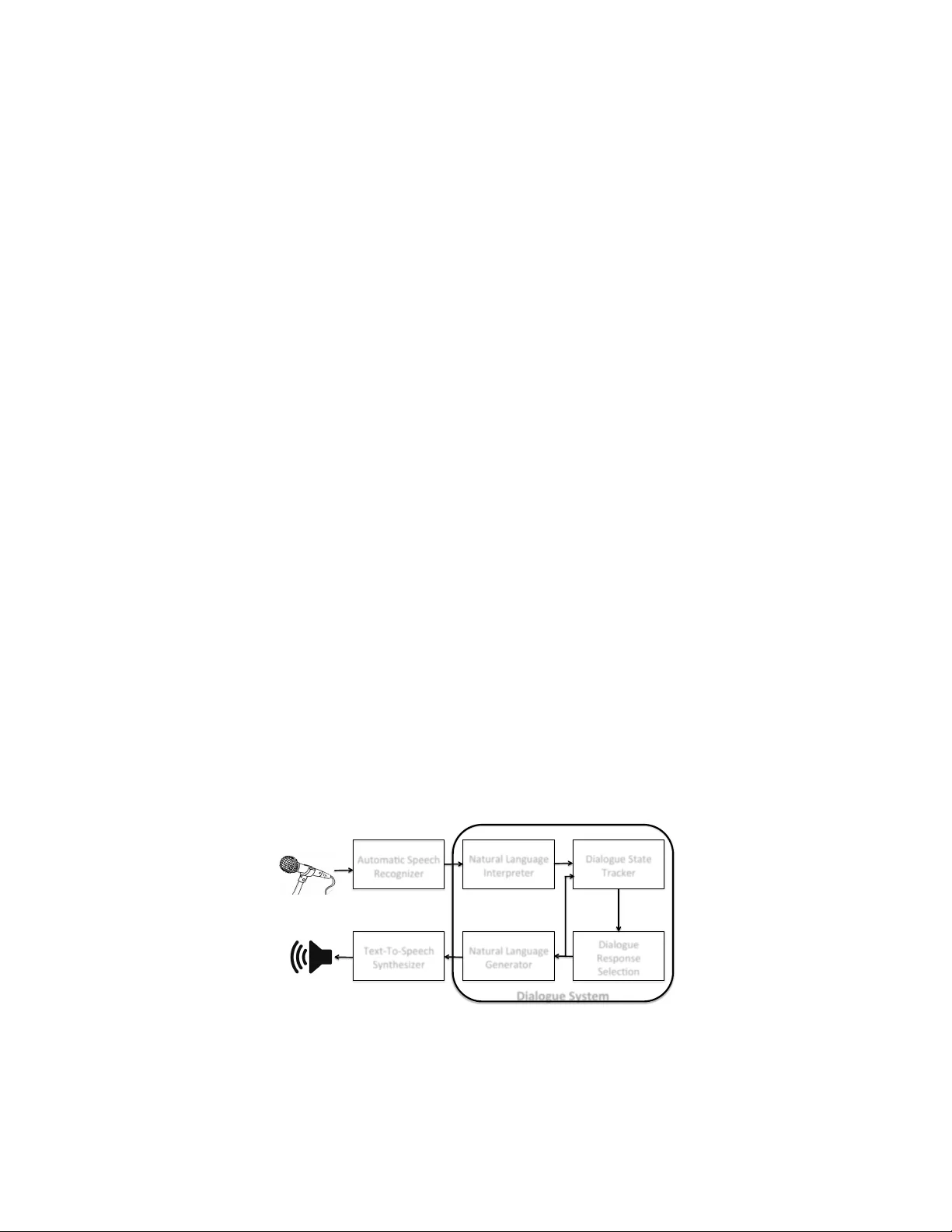

During the past decade, several areas of speech and language understanding have witnessed substantial breakthroughs from the use of data-driven models. In the area of dialogue systems, the trend is less obvious, and most practical systems are still b…

Authors: Iulian Vlad Serban, Ryan Lowe, Peter Henderson