Recent Advances in Features Extraction and Description Algorithms: A Comprehensive Survey

Computer vision is one of the most active research fields in information technology today. Giving machines and robots the ability to see and comprehend the surrounding world at the speed of sight creates endless potential applications and opportunities. Feature detection and description algorithms can indeed be considered the retina of the eyes of such machines and robots. However, these algorithms are typically computationally intensive, which prevents them from achieving speed-of-sight, real-time performance. In addition, they differ in their capabilities, and some work better than others on specific types of input. It is therefore essential to report their pros and cons, their performance, and their recent advances compactly. This paper is dedicated to providing a comprehensive overview of the state of the art and recent advances in feature detection and description algorithms. Specifically, it starts by reviewing fundamental concepts. It then compares, reports, and discusses their performance and capabilities. Finally, the Maximally Stable Extremal Regions (MSER) algorithm and the Scale Invariant Feature Transform (SIFT) algorithm, two of the best of their type, are selected, and their recent algorithmic derivatives are reported.

💡 Research Summary

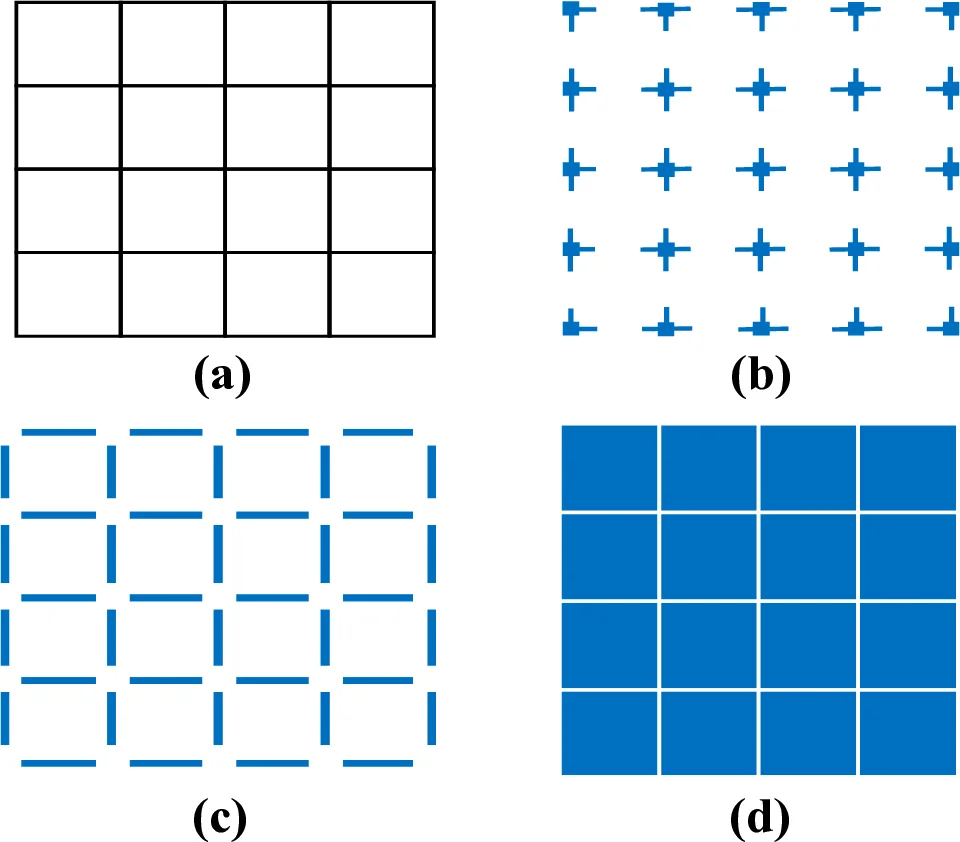

The paper presents a comprehensive survey of feature detection and description algorithms, which are fundamental to computer‑vision systems that must operate at “the speed of sight.” After outlining the motivation—high‑resolution video, satellite imagery, and the need for real‑time performance—the authors enumerate the essential qualities of an ideal local feature: distinctiveness, locality, sufficient quantity, accuracy, efficiency, repeatability, invariance, and robustness. They classify existing detectors into edge‑based, corner‑based, blob‑based, and region‑based families, summarizing classic methods such as Sobel, Canny, Harris, DoG, SURF, KAZE, and especially the Maximally Stable Extremal Regions (MSER) and Scale‑Invariant Feature Transform (SIFT).
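To make the corner-based family concrete, the following is a minimal pure-Python sketch of the Harris corner response R = det(M) - k * trace(M)^2, where M is the structure tensor of image gradients accumulated over a small window. It is not taken from the survey. The simplifications are assumptions: central-difference gradients instead of Sobel filters, a 3x3 box window instead of a Gaussian, the common choice k = 0.04, and the hypothetical function name `harris_response`.

```python
def harris_response(img, k=0.04):
    """Harris corner response for each interior pixel of a grayscale image.

    img is a list of equal-length rows. Border pixels are left at 0.
    """
    h, w = len(img), len(img[0])
    # Central-difference gradients (zero on the outermost border).
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            ix[y][x] = (img[y][x + 1] - img[y][x - 1]) / 2.0
            iy[y][x] = (img[y + 1][x] - img[y - 1][x]) / 2.0

    r = [[0.0] * w for _ in range(h)]
    for y in range(2, h - 2):
        for x in range(2, w - 2):
            # Structure tensor M = [[sxx, sxy], [sxy, syy]] summed over a 3x3 box.
            sxx = syy = sxy = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    gx, gy = ix[y + dy][x + dx], iy[y + dy][x + dx]
                    sxx += gx * gx
                    syy += gy * gy
                    sxy += gx * gy
            det = sxx * syy - sxy * sxy
            trace = sxx + syy
            # Large positive R: corner; negative R: edge; near zero: flat area.
            r[y][x] = det - k * trace * trace
    return r
```

On an image whose lower-right quadrant is a bright square, the response peaks at the square's corner and is negative along its straight edges. This sign pattern is what separates corner detectors such as Harris from edge detectors such as Sobel and Canny.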

Comparison tables rate these methods on rotation and scale invariance, repeatability, localization accuracy, robustness, and computational efficiency, and MSER and SIFT consistently rank highest. Consequently, the survey devotes a large portion to the recent derivatives of these two algorithms.
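Repeatability, one of the criteria in these tables, can be illustrated with a simplified point-based score. Keypoints detected in image A are mapped into image B by a known homography H. A keypoint counts as repeated if a not-yet-matched keypoint in B lies within `eps` pixels, and the count is divided by the smaller of the two keypoint counts. This is a sketch under stated assumptions, not the survey's protocol. Standard detector benchmarks use region-overlap criteria and restrict the count to the area visible in both images; both refinements are omitted here. The function names and the 1.5-pixel default are hypothetical.

```python
import math


def apply_homography(h, pt):
    """Map point (x, y) through a 3x3 homography given as nested lists."""
    x, y = pt
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return ((h[0][0] * x + h[0][1] * y + h[0][2]) / w,
            (h[1][0] * x + h[1][1] * y + h[1][2]) / w)


def repeatability(kps_a, kps_b, h_ab, eps=1.5):
    """Fraction of keypoints re-detected after a known transform (point-based, greedy)."""
    if not kps_a or not kps_b:
        return 0.0
    matched_b = set()
    repeated = 0
    for p in kps_a:
        q = apply_homography(h_ab, p)
        # Greedily take the nearest unmatched keypoint in B within eps pixels.
        best, best_d = None, eps
        for j, candidate in enumerate(kps_b):
            if j in matched_b:
                continue
            d = math.dist(q, candidate)
            if d <= best_d:
                best, best_d = j, d
        if best is not None:
            matched_b.add(best)
            repeated += 1
    return repeated / min(len(kps_a), len(kps_b))
```

For example, with a pure translation by (10, 5), keypoints (0, 0) and (20, 20) in A land on (10, 5) and within 0.5 px of (30.5, 25) in B. The point (40, 0) has no partner, so the score is 2/3.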

For MSER, five major extensions are described: (1) 3‑D and N‑dimensional extensions that operate on volumetric or multi‑channel data; (2) a linear‑time implementation that improves cache locality and achieves O(N) complexity in the number of pixels N; (3) X‑MSER, which fuses depth information with intensity to detect bright and dark regions simultaneously; (4) a parallel MSER that processes both extremal polarities in a single pass, reducing execution time and power consumption; and (5) related concepts such as Extremal Regions of the Extremal Levels and Tree‑based Morse Regions. The authors note that several of these variants have been prototyped on FPGA or ASIC platforms, highlighting hardware‑friendly designs.
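The stability idea behind MSER can be shown on a single branch of the component tree. Sweep a threshold t and let R(t) be the connected region of pixels with value <= t that contains a seed pixel. The region is stable where q(t) = (|R(t + delta)| - |R(t - delta)|) / |R(t)| is small. The sketch below recomputes each region by brute force, costing O(levels x pixels), so it is deliberately *not* the linear-time algorithm described above. Real MSER also enumerates every extremal region and keeps all local minima of q. The names `component_area`, `most_stable_region`, `delta`, and `max_area_ratio`, and the choice to report the first minimum, are assumptions for illustration. Bright regions are found by inverting the image. The parallel variant avoids exactly this second pass.

```python
from collections import deque


def component_area(img, seed, t):
    """Area of the 4-connected set of pixels <= t containing seed (0 if seed > t)."""
    h, w = len(img), len(img[0])
    sy, sx = seed
    if img[sy][sx] > t:
        return 0
    seen = {seed}
    queue = deque([seed])
    while queue:
        y, x = queue.popleft()
        for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
            if 0 <= ny < h and 0 <= nx < w and (ny, nx) not in seen and img[ny][nx] <= t:
                seen.add((ny, nx))
                queue.append((ny, nx))
    return len(seen)


def most_stable_region(img, seed, delta=2, max_area_ratio=0.5, levels=256):
    """Return (threshold, area, q) of the most stable dark region containing seed."""
    n = len(img) * len(img[0])
    areas = [component_area(img, seed, t) for t in range(levels)]
    best = None
    for t in range(delta, levels - delta):
        a_lo, a, a_hi = areas[t - delta], areas[t], areas[t + delta]
        if a_lo == 0 or a > max_area_ratio * n:
            continue  # region not yet born at t - delta, or too large to be useful
        q = (a_hi - a_lo) / a
        if best is None or q < best[2]:  # strict '<' keeps the first minimum
            best = (t, a, q)
    return best


def invert(img, levels=256):
    """Swap polarity so bright regions become dark ones."""
    return [[levels - 1 - v for v in row] for row in img]
```

On a 9x9 image with background 100, a 3x3 dark blob of value 20 seeded at its center yields `(22, 9, 0.0)`: the blob's area stays constant over a wide range of thresholds. A 2x2 bright corner blob of value 240, seeded at (0, 0) on the inverted image, yields `(17, 4, 0.0)`.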

SIFT’s derivative landscape is richer. The paper discusses ASIFT, an affine‑invariant version that simulates all possible viewpoints but incurs a dramatic computational cost; CSIFT, which operates in a color‑invariant space and improves robustness to blur and affine changes while being more sensitive to illumination; and numerous attempts to shrink the 128‑dimensional descriptor, alter histogram sampling patterns, or replace Gaussian pyramids with faster approximations. The authors emphasize that while SIFT’s complexity offers many avenues for improvement, each modification trades off speed, memory, or robustness.
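The 128 dimensions of the SIFT descriptor come from a 4x4 grid of cells, each holding an 8-bin gradient-orientation histogram. The sketch below builds that layout from a 16x16 patch and applies the normalize, clip at 0.2, renormalize step from Lowe's original paper, which limits the influence of large gradient magnitudes. It is a simplified illustration, not a faithful SIFT. It omits scale-space detection, Gaussian weighting, trilinear interpolation between bins, and rotation to the keypoint's dominant orientation, all of which the full algorithm uses and which contribute to the cost the derivatives try to reduce. The function names are hypothetical.

```python
import math


def normalize_descriptor(vec, clip=0.2):
    """Unit-normalize, clip each entry at `clip`, then renormalize."""
    norm = math.sqrt(sum(v * v for v in vec))
    if norm == 0.0:
        return vec
    vec = [min(v / norm, clip) for v in vec]
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec]


def sift_like_descriptor(patch):
    """128-D descriptor from a 16x16 grayscale patch: 4x4 cells x 8 orientation bins."""
    assert len(patch) == 16 and all(len(row) == 16 for row in patch)
    hist = [0.0] * 128
    for y in range(16):
        for x in range(16):
            # Central differences, clamped at the patch border.
            gx = patch[y][min(x + 1, 15)] - patch[y][max(x - 1, 0)]
            gy = patch[min(y + 1, 15)][x] - patch[max(y - 1, 0)][x]
            mag = math.hypot(gx, gy)
            if mag == 0.0:
                continue
            angle = math.atan2(gy, gx) % (2 * math.pi)
            bin_idx = int(angle / (2 * math.pi) * 8) % 8  # 8 bins of 45 degrees
            cell = (y // 4) * 4 + (x // 4)  # which of the 4x4 cells
            hist[cell * 8 + bin_idx] += mag  # magnitude-weighted vote
    return normalize_descriptor(hist)
```

For a horizontal intensity ramp, every gradient points along +x. All energy therefore lands in bin 0 of each of the 16 cells, and the result is a unit-length 128-D vector with 16 non-zero entries. Shrinking the descriptor, as some derivatives do, amounts to changing this grid, the bin count, or projecting the vector to fewer dimensions.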

Beyond algorithmic details, the survey addresses practical deployment concerns: the need for DSPs, GPUs, FPGAs, SoCs, and ASICs to meet real‑time constraints; the impact of memory bandwidth, power budgets, and resolution scaling; and the importance of matching algorithmic characteristics to specific application domains such as surveillance, autonomous navigation, or remote sensing. The paper concludes that no single detector satisfies all requirements; future research will likely focus on hybrid methods, deep‑learning‑based feature learning, and ultra‑low‑power edge implementations that combine the best qualities of MSER, SIFT, and their modern derivatives.
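The effect of resolution scaling on real-time budgets is simple arithmetic. The sketch assumes 8-bit grayscale frames at 30 fps, with each pixel read once per processing pass. These figures are illustrative assumptions, not numbers from the survey. Real detectors make several passes (SIFT, for instance, processes multiple scales), so the actual per-pixel budget is smaller by roughly the number of passes. The helper name `realtime_budget` is hypothetical.

```python
def realtime_budget(width, height, fps, bytes_per_pixel=1):
    """Return (pixels per second, ns available per pixel, MB/s for one pass)."""
    pixels_per_second = width * height * fps
    ns_per_pixel = 1e9 / pixels_per_second
    mb_per_second = pixels_per_second * bytes_per_pixel / 1e6
    return pixels_per_second, ns_per_pixel, mb_per_second


for name, (w, h) in {"1080p": (1920, 1080), "4K UHD": (3840, 2160)}.items():
    pps, ns, mbps = realtime_budget(w, h, fps=30)
    print(f"{name}: {pps:,} px/s, {ns:.2f} ns/px, {mbps:.1f} MB/s per pass")
# 1080p: 62,208,000 px/s, 16.08 ns/px, 62.2 MB/s per pass
# 4K UHD: 248,832,000 px/s, 4.02 ns/px, 248.8 MB/s per pass
```

Quadrupling the pixel count cuts the time per pixel to a quarter and quadruples the bandwidth. This is why the survey points to DSPs, GPUs, FPGAs, and ASICs for high-resolution, real-time deployment.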
