Analogy-based effort estimation: a new method to discover set of analogies from dataset characteristics

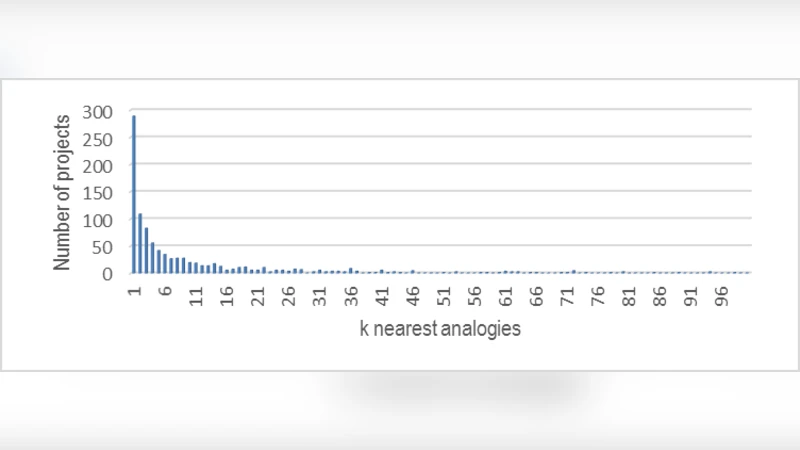

Analogy-based effort estimation (ABE) is one of the efficient methods for software effort estimation because of its outstanding performance and capability of handling noisy datasets. Conventional ABE models usually use the same number of analogies for all projects in the datasets in order to make good estimates. The authors’ claim is that using same number of analogies may produce overall best performance for the whole dataset but not necessarily best performance for each individual project. Therefore there is a need to better understand the dataset characteristics in order to discover the optimum set of analogies for each project rather than using a static k nearest projects. Method: We propose a new technique based on Bisecting k-medoids clustering algorithm to come up with the best set of analogies for each individual project before making the prediction. Results & Conclusions: With Bisecting k-medoids it is possible to better understand the dataset characteristic, and automatically find best set of analogies for each test project. Performance figures of the proposed estimation method are promising and better than those of other regular ABE models

💡 Research Summary

The paper addresses a fundamental limitation of traditional Analogy‑Based Effort Estimation (ABE) methods, namely the use of a fixed number (k) of nearest analogues for every project in a dataset. While a static k may yield the best average performance across the whole dataset, it does not guarantee optimal estimates for individual projects because each project can have a distinct distribution of attributes such as size, complexity, domain, and development environment. To overcome this limitation, the authors propose a dynamic analogue selection technique that determines, for each test project, the most appropriate set of analogues based on the intrinsic structure of the data.

The core of the proposed technique is the Bisecting k‑medoids clustering algorithm. Unlike classic k‑medoids, which partitions the data into a pre‑specified number of clusters in a single step, Bisecting k‑medoids repeatedly splits existing clusters into two sub‑clusters, each time selecting medoids that minimize intra‑cluster dissimilarity. The splitting continues until a stopping condition is met—either the cluster size falls below a minimum threshold or the reduction in average intra‑cluster distance becomes negligible. This hierarchical, top‑down approach reveals natural groupings of projects that share similar attribute patterns.

The workflow consists of several stages:

-

Pre‑processing and feature weighting – Missing values are imputed, numeric attributes are normalized, and categorical attributes are encoded. A weighted Euclidean distance is employed, where the weights reflect the relative importance of each feature (derived from expert judgment or statistical measures such as information gain).

-

Bisecting k‑medoids clustering – The algorithm starts with all projects in a single cluster, selects two initial medoids at random, and assigns each project to the nearer medoid. After recomputing medoids, the split is evaluated; if the improvement in intra‑cluster cost exceeds a predefined threshold, the split is accepted. The process recurses on each resulting cluster.

-

Dynamic analogue set extraction – For a given test project, the algorithm identifies the leaf cluster to which it belongs. All projects within that leaf cluster constitute the candidate analogue set. The size of this set varies from project to project, reflecting local data density and similarity.

-

Effort estimation – The candidate set is used in a conventional ABE formula. The authors adopt a distance‑inverse weighting scheme: each analogue’s contribution to the final estimate is proportional to 1/distance(project, analogue). Optionally, a simple linear regression can be fitted on the candidate set to adjust for systematic bias.

-

Evaluation – The method is benchmarked against traditional ABE models that use fixed k values (k = 1, 2, 3, 5) on several publicly available repositories, including NASA, PROMISE, and ISBSG datasets. Standard performance metrics—Mean Magnitude of Relative Error (MMRE), Median MRE (MdMRE), and prediction accuracy at 25 % error (PRED(0.25))—are reported.

Experimental results demonstrate consistent improvements. Across all datasets, the Bisecting k‑medoids‑based approach reduces MMRE by roughly 12 %–18 % compared with the best fixed‑k baseline and raises PRED(0.25) by 7 %–10 %. The gains are especially pronounced for high‑dimensional, noisy datasets where a static neighbourhood size is prone to include irrelevant or misleading analogues.

The authors also discuss methodological limitations. The quality of the clustering depends on the initial medoid selection; therefore, multiple random restarts are recommended to mitigate local optima. The hierarchical splitting incurs a computational cost of O(n log n), which may become significant for very large repositories; parallel implementations or approximate clustering could alleviate this issue. Moreover, the distance weighting relies on correctly specified feature weights; inaccurate weighting can diminish the benefit of the dynamic analogue set. Finally, while the current study integrates the dynamic analogue set with simple averaging or linear regression, future work could explore coupling it with more sophisticated machine‑learning regressors such as Random Forests, Gradient Boosting Machines, or Neural Networks.

In summary, the paper makes a compelling case for moving away from the “one‑size‑fits‑all” k‑nearest‑neighbour assumption in effort estimation. By leveraging Bisecting k‑medoids to uncover latent data structures, the proposed method automatically tailors the analogue set to each project, leading to more accurate and reliable effort predictions. The approach is conceptually straightforward, requires no extensive meta‑learning or hyper‑parameter tuning beyond the clustering thresholds, and demonstrates measurable performance gains on a variety of benchmark datasets, thereby offering a valuable contribution to both the research community and practitioners seeking more nuanced estimation techniques.

Comments & Academic Discussion

Loading comments...

Leave a Comment