Automated Assistants to Identify and Prompt Action on Visual News Bias

Bias is a common problem in today’s media, appearing frequently in text and in visual imagery. Users on social media websites such as Twitter need better methods for identifying bias. Additionally, activists (those who are motivated to effect change related to some topic) need better methods to identify and counteract bias that is contrary to their mission. With both of these use cases in mind, in this paper we propose a novel tool called UnbiasedCrowd that supports identification of, and action on, bias in visual news media. In particular, it addresses the following key challenges: (1) identification of bias; (2) aggregation and presentation of evidence to users; and (3) enabling activists to inform the public of bias and take action by engaging people in conversation with bots. We describe a preliminary study on the Twitter platform that explores the impressions that activists had of our tool, and how people reacted to and engaged with online bots that exposed visual bias. We conclude by discussing the design implications of our findings for creating future systems to identify and counteract the effects of news bias.

💡 Research Summary

The paper presents UnbiasedCrowd, an integrated system designed to automatically detect visual bias in news articles, expose it to the public, and stimulate collective action. The system is organized around three functional modules.

- Bias Detection Module – Users specify a news story; the system queries Google’s relevance API to retrieve multiple articles covering the same event. Images are extracted from each article, represented using Fisher Vectors, and clustered with K‑means. By visualizing these clusters, activists can quickly spot discrepancies such as one outlet repeatedly showing violent protest scenes while another shows peaceful crowds, thereby revealing potential visual framing bias.

-

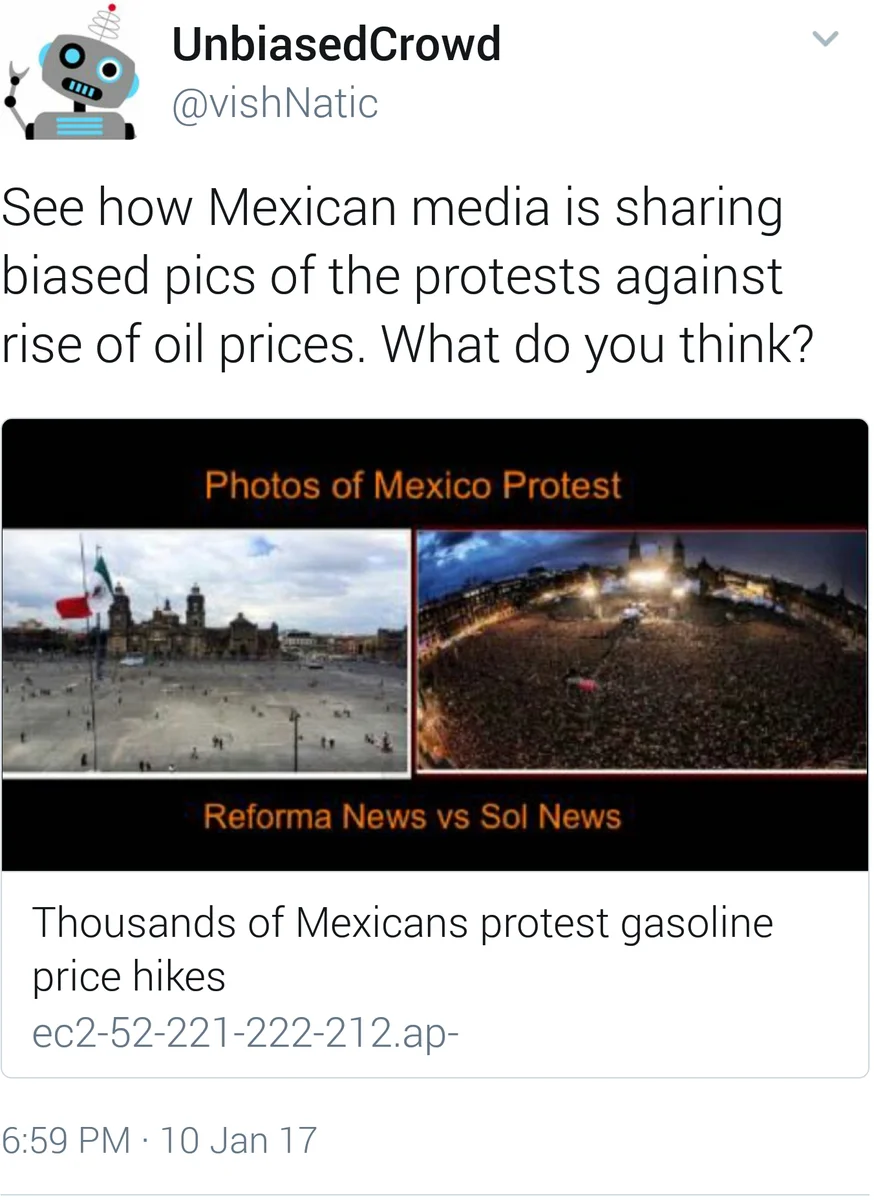

Bias Exposure Module – After identifying a suspect bias, activists select representative images from the clusters and generate a collage (image macro). The macro is posted automatically as a Twitter Summary Card. Clicking the card redirects users to a dedicated UnbiasedCrowd web page that displays the full set of collected images, allowing anyone to verify the claim and assess the evidence themselves. This design emphasizes transparency and gives users agency to judge the bias rather than being passively told.

- Call‑to‑Action Module – The system deploys Twitter bots that search for users discussing the target story (via hashtags or shared links). The bots send the image macro to these users and initiate a scripted conversation: first asking whether they perceive a bias, then probing what actions they might consider (e.g., sharing with friends, joining a protest). While the bots handle the initial interaction, activists can intervene at any point to provide further guidance or organize offline actions.
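The clustering step of the Bias Detection Module can be illustrated with a minimal sketch. It is not the authors' implementation: the outlet names and the 2‑D descriptors are hypothetical stand‑ins for real Fisher Vector encodings (which are much higher‑dimensional), and K‑means is written out in plain Python so the example runs without extra libraries. After clustering, it tallies how each outlet's images are spread across clusters, which is the kind of discrepancy an activist would look for.

```python
import random
from collections import Counter, defaultdict

# Hypothetical toy data: (outlet, descriptor). The 2-D tuples stand in for
# Fisher Vector encodings; they are invented for illustration only.
images = [
    ("outlet_a", (0.90, 0.10)),
    ("outlet_a", (0.85, 0.15)),
    ("outlet_a", (0.95, 0.05)),
    ("outlet_b", (0.10, 0.90)),
    ("outlet_b", (0.15, 0.80)),
    ("outlet_b", (0.88, 0.12)),
]


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def centroid(points):
    """Component-wise mean of a non-empty list of vectors."""
    n = len(points)
    return tuple(sum(col) / n for col in zip(*points))


def kmeans(points, k, max_iters=50, seed=0):
    """Lloyd's algorithm: alternate assignment and centroid update."""
    rng = random.Random(seed)
    centroids = rng.sample(points, k)  # initialise from k distinct data points
    labels = None
    for _ in range(max_iters):
        # Assignment step: each point goes to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda c: sq_dist(p, centroids[c])) for p in points
        ]
        if new_labels == labels:  # assignments stable, so we have converged
            break
        labels = new_labels
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster is empty
                centroids[c] = centroid(members)
    return labels


labels = kmeans([vec for _, vec in images], k=2)

# Count, per outlet, how many of its images fall into each cluster.
distribution = defaultdict(Counter)
for (outlet, _), lab in zip(images, labels):
    distribution[outlet][lab] += 1

for outlet, counts in sorted(distribution.items()):
    print(outlet, dict(counts))
```

In this toy data, one outlet's images all land in a single cluster while the other's are split, which mirrors the "violent scenes versus peaceful crowds" contrast described above. Cluster ids are arbitrary, so only the grouping is meaningful, not the label numbers.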

The authors evaluated the prototype through two small‑scale studies. An interview study with three Mexican activists (focused on media coverage of energy‑reform protests) revealed three primary user needs: (a) Transparency – the ability to view the raw image collection builds credibility; (b) Education – a desire for the tool to serve as a step‑by‑step learning platform that empowers ordinary citizens to detect bias independently; and (c) Contextual Annotation – activists wanted to add explanatory notes to image clusters, yet they recognized that such annotations could also bias viewers, suggesting a need for multi‑perspective labeling.

A pilot deployment on Twitter targeted 30 users who had recently used hashtags such as #Gasolinazo, #ReformaEnergetica, and #PEMEX. The bots received 53 replies, with most participants responding at least twice. Qualitative analysis identified two distinct reaction patterns. “Evangelist” users embraced the bias claim, amplified the message, and encouraged their networks to examine the images. “Defender” users, conversely, justified the original media choices and contested the bias interpretation. This polarization illustrates that automated bias exposure can both raise awareness and trigger defensive backlash, depending on pre‑existing attitudes.
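The scripted exchange the bots follow (send the macro, ask about bias, ask about possible actions, then leave room for an activist) could be modeled as a small state machine. The sketch below is an assumption‑laden illustration rather than the deployed bot: the `Stage` and `Conversation` names, the prompt wording, and the example URL are all hypothetical, and no Twitter API calls are made.

```python
from dataclasses import dataclass, field
from enum import Enum, auto


class Stage(Enum):
    ASK_BIAS = auto()     # bot has asked whether the user perceives a bias
    ASK_ACTION = auto()   # bot has asked what action the user might take
    HANDED_OFF = auto()   # script finished or an activist has taken over


# Hypothetical prompt wording; the source does not give the actual script.
PROMPTS = {
    Stage.ASK_BIAS: "These images cover the same story. Do you see a bias?",
    Stage.ASK_ACTION: "What would you consider doing about it?",
}


@dataclass
class Conversation:
    user: str
    stage: Stage = Stage.ASK_BIAS
    transcript: list = field(default_factory=list)

    def opening_message(self, macro_url: str) -> str:
        """First bot message: the image macro plus the bias question."""
        message = f"{self.user} {PROMPTS[Stage.ASK_BIAS]} {macro_url}"
        self.transcript.append(("bot", message))
        return message

    def handle_reply(self, text: str):
        """Record a user reply; return the next bot message, or None
        once the conversation is left to a human activist."""
        self.transcript.append(("user", text))
        if self.stage is Stage.ASK_BIAS:
            self.stage = Stage.ASK_ACTION
            reply = PROMPTS[Stage.ASK_ACTION]
            self.transcript.append(("bot", reply))
            return reply
        self.stage = Stage.HANDED_OFF
        return None

    def take_over(self) -> None:
        """An activist may step in at any point, silencing the script."""
        self.stage = Stage.HANDED_OFF


demo = Conversation("@example_user")
print(demo.opening_message("https://example.org/macro.png"))
print(demo.handle_reply("Yes, one outlet only shows violence."))
print(demo.handle_reply("I would share this with friends."))
```

Keeping the full transcript per user makes it straightforward to count replies per participant afterwards, and the explicit `take_over` method reflects the point that activists can intervene while the bot handles only the initial interaction.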

The paper draws several design implications. First, exposing the full evidence set (all images) is crucial for trust. Second, the system should incorporate educational scaffolds that teach users how to conduct visual bias analysis themselves. Third, any annotation feature must be carefully designed to avoid re‑introducing bias; a crowdsourced, multi‑viewpoint labeling approach may mitigate this risk. Fourth, while bots are effective for initial outreach, sustained activism likely requires human oversight and follow‑up mechanisms to translate online awareness into offline collective action.

Future work outlined includes (i) extending the platform to train non‑activist crowds to detect bias, (ii) conducting larger longitudinal studies to measure changes in users’ media literacy and participation in civic actions, and (iii) refining the clustering and detection pipeline (e.g., incorporating deep visual embeddings) to improve scalability across diverse news domains.

In sum, UnbiasedCrowd demonstrates that a semi‑automated pipeline combining computer‑vision‑driven bias detection, transparent evidence presentation, and bot‑mediated civic engagement can empower both activists and ordinary citizens to recognize and act upon visual news bias. It also highlights the nuanced social dynamics that arise when algorithmic tools intervene in public discourse.
