An Information-Theoretic Framework for Fast and Robust Unsupervised Learning via Neural Population Infomax

A framework is presented for unsupervised learning of representations based on infomax principle for large-scale neural populations. We use an asymptotic approximation to the Shannon's mutual information for a large neural population to demonstrate t…

Authors: Wentao Huang, Kechen Zhang

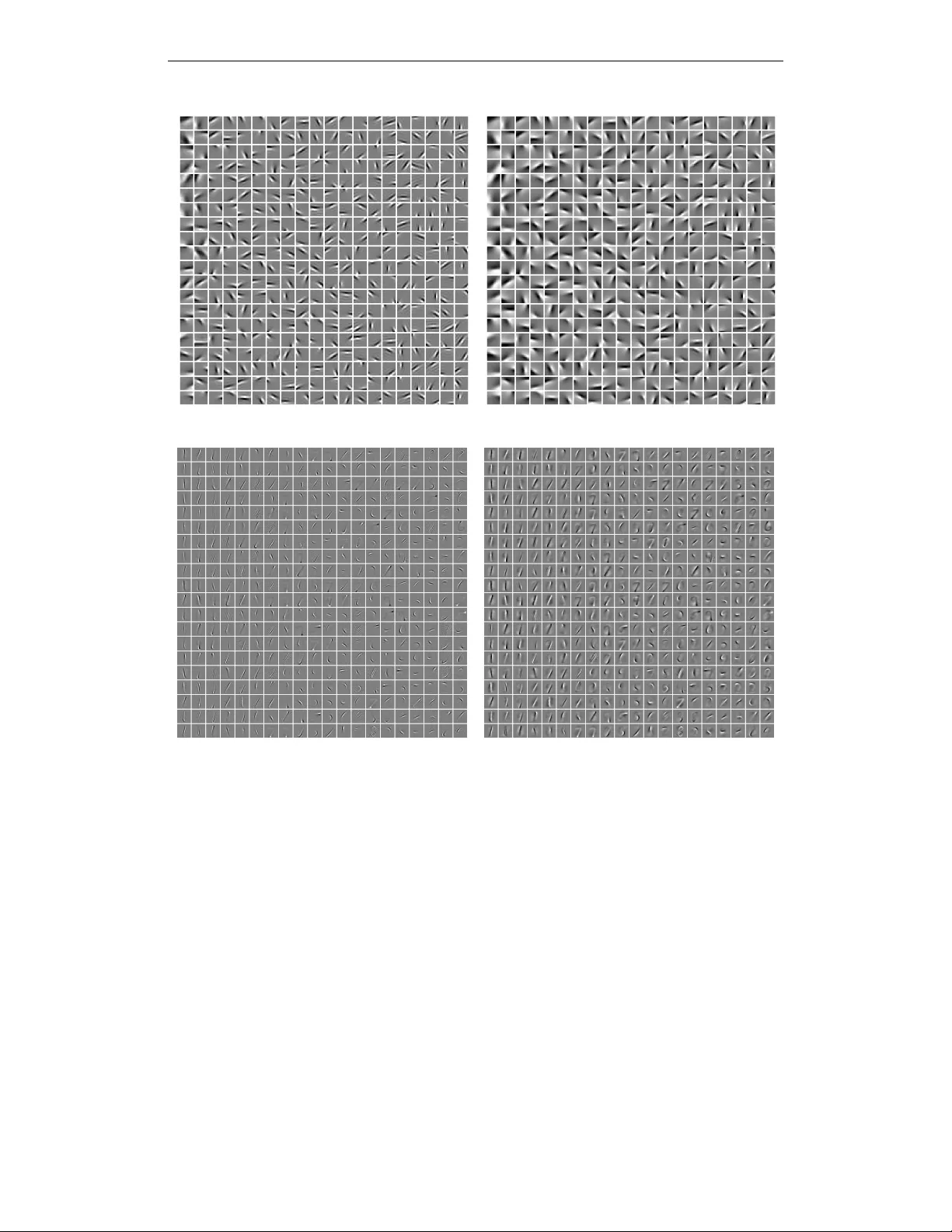

Published as a conference paper at ICLR 2017 A N I N F O R M A T I O N - T H E O R E T I C F R A M E W O R K F O R F A S T A N D R O B U S T U N S U P E RV I S E D L E A R N I N G V I A N E U R A L P O P U L A T I O N I N F O M A X W entao Huang & K echen Zhang Department of Biomedical Engineering Johns Hopkins Univ ersity School of Medicine Baltimore, MD 21205, USA { whuang21,kzhang4 } @jhmi.edu A B S T R A C T A framew ork is presented for unsupervised learning of representations based on infomax principle for large-scale neural populations. W e use an asymptotic ap- proximation to the Shannon’ s mutual information for a large neural population to demonstrate that a good initial approximation to the global information-theoretic optimum can be obtained by a hierarchical infomax method. Starting from the initial solution, an ef ficient algorithm based on gradient descent of the final ob- jectiv e function is proposed to learn representations from the input datasets, and the method w orks for complete, o vercomplete, and undercomplete bases. As con- firmed by numerical experiments, our method is robust and highly efficient for extracting salient features from input datasets. Compared with the main e xisting methods, our algorithm has a distinct adv antage in both the training speed and the robustness of unsupervised representation learning. Furthermore, the proposed method is easily extended to the supervised or unsupervised model for training deep structure networks. 1 I N T R O D U C T I O N How to disco v er the unkno wn structures in data is a ke y task for machine learning. Learning good representations from observed data is important because a clearer description may help rev eal the underlying structures. Representation learning has drawn considerable attention in recent years (Bengio et al., 2013). One category of algorithms for unsupervised learning of representations is based on probabilistic models (Lewicki & Sejnowski, 2000; Hinton & Salakhutdinov, 2006; Lee et al., 2008), such as maximum lik elihood (ML) estimation, maximum a posteriori (MAP) probabil- ity estimation, and related methods. Another category of algorithms is based on reconstruction error or generati v e criterion (Olshausen & Field, 1996; Aharon et al., 2006; V incent et al., 2010; Mairal et al., 2010; Goodfello w et al., 2014), and the objecti ve functions usually in v olve squared errors with additional constraints. Sometimes the reconstruction error or generati ve criterion may also have a probabilistic interpretation (Olshausen & Field, 1997; V incent et al., 2010). Shannon’ s information theory is a powerful tool for description of stochastic systems and could be utilized to provide a characterization for good representations (V incent et al., 2010). Ho we ver , computational difficulties associated with Shannon’ s mutual information (MI) (Shannon, 1948) hav e hindered its wider applications. The Monte Carlo (MC) sampling (Y arro w et al., 2012) is a conv er - gent method for estimating MI with arbitrary accurac y , but its computational inef ficienc y makes it unsuitable for difficult optimization problems especially in the cases of high-dimensional input stim- uli and lar ge population networks. Bell and Sejno wski (Bell & Sejno wski, 1995; 1997) ha v e directly applied the infomax approach (Linsker, 1988) to independent component analysis (ICA) of data with independent non-Gaussian components assuming additive noise, but their method requires that the number of outputs be equal to the number of inputs. The extensions of ICA to o vercomplete or undercomplete bases incur increased algorithm complexity and difficulty in learning of parameters (Lewicki & Sejno wski, 2000; Kreutz-Delgado et al., 2003; Karklin & Simoncelli, 2011). 1 Published as a conference paper at ICLR 2017 Since Shannon MI is closely related to ML and MAP (Huang & Zhang, 2016), the algorithms of representation learning based on probabilistic models should be amenable to information-theoretic treatment. Representation learning based on reconstruction error could be accommodated also by information theory , because the in verse of Fisher information (FI) is the Cram ´ er-Rao lower bound on the mean square decoding error of any unbiased decoder (Rao, 1945). Hence minimizing the reconstruction error potentially maximizes a lower bound on the MI (V incent et al., 2010). Related problems arise also in neuroscience. It has long been suggested that the real nerv ous sys- tems might approach an information-theoretic optimum for neural coding and computation (Barlo w, 1961; Atick, 1992; Borst & Theunissen, 1999). Howe ver , in the cerebral corte x, the number of neu- rons is huge, with about 10 5 neurons under a square millimeter of cortical surface (Carlo & Stevens, 2013). It has often been computationally intractable to precisely characterize information coding and processing in large neural populations. T o address all these issues, we present a framework for unsupervised learning of representations in a large-scale nonlinear feedforward model based on infomax principle with realistic biological constraints such as neuron models with Poisson spikes. First we adopt an objecti ve function based on an asymptotic formula in the large population limit for the MI between the stimuli and the neural population responses (Huang & Zhang, 2016). Since the objectiv e function is usually noncon v ex, choosing a good initial v alue is v ery important for its optimization. Starting from an initial v alue, we use a hierarchical infomax approach to quickly find a tentativ e global optimal solution for each layer by analytic methods. Finally , a fast conv er gence learning rule is used for optimizing the final objec- tiv e function based on the tentative optimal solution. Our algorithm is robust and can learn complete, ov ercomplete or undercomplete basis v ectors quickly from different datasets. Experimental results showed that the con v ergence rate of our method was significantly faster than other existing methods, often by an order of magnitude. More importantly , the number of output units processed by our method can be v ery lar ge, much lar ger than the number of inputs. As far as we know , no existing model can easily deal with this situation. 2 M E T H O D S 2 . 1 A P P ROX I M A T I O N O F M U T U A L I N F O R M A T I O N F O R N E U R A L P O P U L A T I O N S Suppose the input x is a K -dimensional v ector , x = ( x 1 , · · · , x K ) T , the outputs of N neurons are denoted by a vector , r = ( r 1 , · · · , r N ) T , where we assume N is large, generally N K . W e denote random v ariables by upper case letters, e.g., random variables X and R , in contrast to their vector v alues x and r . The MI between X and R is defined by I ( X ; R ) = D ln p ( x | r ) p ( x ) E r , x , where h·i r , x denotes the expectation with respect to the probability density function (PDF) p ( r , x ) . Our goal is to maxmize MI I ( X ; R ) by finding the optimal PDF p ( r | x ) under some constraint conditions, assuming that p ( r | x ) is characterized by a noise model and acti v ation functions f ( x ; θ n ) with parameters θ n for the n -th neuron ( n = 1 , · · · , N ). In other words, we optimize p ( r | x ) by solving for the optimal parameters θ n . Unfortunately , it is intractable in most cases to solve for the optimal parameters that maximizes I ( X ; R ) . Howev er , if p ( x ) and p ( r | x ) are twice continuously differentiable for almost ev ery x ∈ R K , then for large N we can use an asymptotic formula to approximate the true value of I ( X ; R ) with high accuracy (Huang & Zhang, 2016): I ( X ; R ) ' I G = 1 2 ln det G ( x ) 2 π e x + H ( X ) , (1) where det ( · ) denotes the matrix determinant and H ( X ) = − h ln p ( x ) i x is the stimulus entropy , G ( x ) = J ( x ) + P ( x ) , (2) J ( x ) = − ∂ 2 ln p ( r | x ) ∂ x ∂ x T r | x , (3) P ( x ) = − ∂ 2 ln p ( x ) ∂ x ∂ x T . (4) Assuming independent noises in neuronal responses, we hav e p ( r | x ) = Q N n =1 p ( r n | x ; θ n ) , and the Fisher information matrix becomes J ( x ) ≈ N P K 1 k =1 α k S ( x ; θ k ) , where S ( x ; θ k ) = 2 Published as a conference paper at ICLR 2017 D ∂ ln p ( r | x ; θ k ) ∂ x ∂ ln p ( r | x ; θ k ) ∂ x T E r | x and α k > 0 ( k = 1 , · · · , K 1 ) is the population density of param- eter θ k , with P K 1 k =1 α k = 1 , and 1 ≤ K 1 ≤ N (see Appendix A.1 for details). Since the cerebral cortex usually forms functional column structures and each column is composed of neurons with the same properties (Hubel & W iesel, 1962), the positi v e integer K 1 can be reg arded as the number of distinct classes in the neural population. Therefore, gi ven the activ ation function f ( x ; θ k ) , our goal becomes to find the optimal popula- tion distrib ution density α k of parameter v ector θ k so that the MI between the stimulus x and the response r is maximized. By Eq. (1), our optimization problem can be stated as follows: minimize Q G [ { α k } ] = − 1 2 h ln (det ( G ( x ))) i x , (5) subject to K 1 X k =1 α k = 1 , α k > 0 , ∀ k = 1 , · · · , K 1 . (6) Since Q G [ { α k } ] is a conv ex function of { α k } (Huang & Zhang, 2016), we can readily find the optimal solution for small K by efficient numerical methods. For large K , howe ver , finding an optimal solution by numerical methods becomes intractable. In the following we will propose an alternativ e approach to this problem. Instead of directly solving for the density distribution { α k } , we optimize the parameters { α k } and { θ k } simultaneously under a hierarchical infomax framework. 2 . 2 H I E R A R C H I C A L I N F O M A X For clarity , we consider neuron model with Poisson spik es although our method is easily applicable to other noise models. The acti v ation function f ( x ; θ n ) is generally a nonlinear function, such as sigmoid and rectified linear unit (ReLU) (Nair & Hinton, 2010). W e assume that the nonlinear function for the n -th neuron has the following form: f ( x ; θ n ) = ˜ f ( y n ; ˜ θ n ) , where y n = w T n x . (7) with w n being a K -dimensional weights vector , ˜ f ( y n ; ˜ θ n ) is a nonlinear function, θ n = ( w T n , ˜ θ T n ) T and ˜ θ n are the parameter vectors ( n = 1 , · · · , N ). In general, it is very dif ficult to find the optimal parameters, θ n , n = 1 , · · · , N , for the following reasons. First, the number of output neurons N is very large, usually N K . Second, the activ ation function f ( x ; θ n ) is a nonlinear function, which usually leads to a noncon ve x optimization problem. For nonconv ex optimization problems, the selection of initial values often has a great influence on the final optimization results. Our approach meets these challenges by making better use of the lar ge number of neurons and by finding good initial values by a hierarchical infomax method. W e di vide the nonlinear transformation into two stages, mapping first from x to y n ( n = 1 , · · · , N ), and then from y n to ˜ f ( y n ; ˜ θ n ) , where y n can be re garded as the membrane potential of the n -th neuron, and ˜ f ( y n ; ˜ θ n ) as its firing rate. As with the real neurons, we assume that the membrane potential is corrupted by noise: ˘ Y n = Y n + Z n , (8) where Z n ∼ N 0 , σ 2 is a normal distribution with mean 0 and variance σ 2 . Then the mean membrane potential of the k -th class subpopulation with N k = N α k neurons is giv en by ¯ Y k = 1 N k N k X n =1 ˘ Y k n = Y k + ¯ Z k , k = 1 , · · · , K 1 , (9) ¯ Z k ∼ N (0 , N − 1 k σ 2 ) . (10) Define vectors ˘ y = ( ˘ y 1 , · · · , ˘ y N ) T , ¯ y = ( ¯ y 1 , · · · , ¯ y K 1 ) T and y = ( y 1 , · · · , y K 1 ) T , where y k = w T k x ( k = 1 , · · · , K 1 ). Notice that ˘ y n ( n = 1 , · · · , N ) is also divided into K 1 classes, the same as for r n . If we assume f ( x ; θ k ) = ˜ f ( ¯ y k ; ˜ θ k ) , i.e. assuming an additi ve Gaussian noise for y n (see Eq. 9), then the random variables X , Y , ˘ Y , ¯ Y and R form a Markov chain, denoted by X → Y → ˘ Y → ¯ Y → R (see Figure 1), and we hav e the following proposition (see Appendix A.2). 3 Published as a conference paper at ICLR 2017 X Y R Y Y W X Y + Z ( T 1 /N k f ( ) Y x x x y - y - y - y m 1 ( y m N k ( y m N k K 1 r m N k y m i ( r N 1 y N 1 ( y N ( r N y N y n i ( y n 1 ( y n 1 y n i y m i y m 1 y N 1 r i y i y 1 y i ( y 1 ( r n i r n 1 r m i r m 1 r 1 1 k K k 1 Figure 1: A neural network interpretaton for random v ariables X , Y , ˘ Y , ¯ Y , R . Proposition 1. W ith the random variables X , Y , ˘ Y , ¯ Y , R and Markov chain X → Y → ˘ Y → ¯ Y → R , the following equations hold, I ( X ; R ) = I ( Y ; R ) ≤ I ( ˘ Y ; R ) ≤ I ( ¯ Y ; R ) , (11) I ( X ; R ) ≤ I ( X ; ¯ Y ) = I ( X ; ˘ Y ) ≤ I ( X ; Y ) , (12) and for lar ge N k ( k = 1 , · · · , K 1 ), I ( ˘ Y ; R ) ' I ( ¯ Y ; R ) ' I ( Y ; R ) = I ( X ; R ) , (13) I ( X ; Y ) ' I ( X ; ¯ Y ) = I ( X ; ˘ Y ) . (14) A major advantage of incorporating membrane noise is that it facilitates finding the optimal solution by using the infomax principle. Moreov er , the optimal solution obtained this way is more robust; that is, it discourages ov erfitting and has a strong ability to resist distortion. W ith vanishing noise σ 2 → 0 , we have ¯ Y k → Y k , ˜ f ( ¯ y k ; ˜ θ k ) ' ˜ f ( y k ; ˜ θ k ) = f ( x ; θ k ) , so that Eqs. (13) and (14) hold as in the case of large N k . T o optimize MI I ( Y ; R ) , the probability distribution of random v ariable Y , p ( y ) , needs to be de- termined, i.e. maximizing I ( Y ; R ) about p ( y ) under some constraints should yield an optimal distribution: p ∗ ( y ) = arg max p ( y ) I ( Y ; R ) . Let C = max p ( y ) I ( Y ; R ) be the channel capacity of neural population coding, and we alw ays have I ( X ; R ) ≤ C (Huang & Zhang, 2016). T o find a suitable linear transformation from X to Y that is compatible with this distrib ution p ∗ ( y ) , a reason- able choice is to maximize I ( X ; ˘ Y ) ( ≤ I ( X ; Y )) , where ˘ Y is a noise-corrupted version of Y . This implies minimum information loss in the first transformation step. Ho wev er , there may exist many transformations from X to ˘ Y that maximize I ( X ; ˘ Y ) (see Appendix A.3.1). Ideally , if we can find a transformation that maximizes both I ( X ; ˘ Y ) and I ( Y ; R ) simultaneously , then I ( X ; R ) reaches its maximum value: I ( X ; R ) = max p ( y ) I ( Y ; R ) = C . From the discussion above we see that maximizing I ( X ; R ) can be divided into two steps, namely , maximizing I ( X ; ˘ Y ) and maximizing I ( Y ; R ) . The optimal solutions of max I ( X ; ˘ Y ) and max I ( Y ; R ) will pro vide a good initial approximation that tend to be very close to the optimal solution of max I ( X ; R ) . Similarly , we can extend this method to multilayer neural population networks. For example, a two- layer network with outputs R (1) and R (2) form a Markov chain, X → ˜ R (1) → R (1) → ¯ R (1) → 4 Published as a conference paper at ICLR 2017 R (2) , where random v ariable ˜ R (1) is similar to Y , random variable R (1) is similar to ˘ Y , and ¯ R (1) is similar to ¯ Y in the above. Then we can show that the optimal solution of max I ( X ; R (2) ) can be approximated by the solutions of max I ( X ; R (1) ) and max I ( ˜ R (1) ; R (2) ) , with I ( ˜ R (1) ; R (2) ) ' I ( ¯ R (1) ; R (2) ) . More generally , consider a highly nonlinear feedforward neural network that maps the input x to output z , with z = F ( x ; θ ) = h L ◦ · · · ◦ h 1 ( x ) , where h l ( l = 1 , · · · , L ) is a linear or nonlinear function (Montufar et al., 2014). W e aim to find the optimal parameter θ by maximizing I ( X ; Z ) . It is usually difficult to solv e the optimization problem when there are man y local e xtrema for F ( x ; θ ) . Howe ver , if each function h l is easy to optimize, then we can use the hierarchical infomax method described abov e to get a good initial approximation to its global optimization solution, and go from there to find the final optimal solution. This information-theoretic consideration from the neural population coding point of vie w may help e xplain why deep structure networks with unsupervised pre-training hav e a po werful ability for learning representations. 2 . 3 T H E O B J E C T I V E F U N C T I O N The optimization processes for maximizing I ( X ; ˘ Y ) and maximizing I ( Y ; R ) are discussed in detail in Appendix A.3. First, by maximizing I ( X ; ˘ Y ) (see Appendix A.3.1 for details), we can get the optimal weight parameter w k ( k = 1 , · · · , K 1 , see Eq. 7) and its population density α k (see Eq. 6) which satisfy W = [ w 1 , · · · , w K 1 ] = a U 0 Σ − 1 / 2 0 C , (15) α 1 = · · · = α K 1 = K − 1 1 , (16) where a = q K 1 K − 1 0 , C = [ c 1 , · · · , c K 1 ] ∈ R K 0 × K 1 , CC T = I K 0 , I K 0 is a K 0 × K 0 identity matrix with integer K 0 ∈ [1 , K ] , the diagonal matrix Σ 0 ∈ R K 0 × K 0 and matrix U 0 ∈ R K × K 0 are giv en in (A.44) and (A.45), with K 0 giv en by Eq. (A.52). Matrices Σ 0 and U 0 can be obtained by Σ and U with U T 0 U 0 = I K 0 and U 0 Σ 0 U T 0 ≈ UΣU T ≈ xx T x (see Eq. A.23). The optimal weight parameter w k (15) means that the input variable x must first undergo a whitening- like transformation ˆ x = Σ − 1 / 2 0 U T 0 x , and then goes through the transformation y = a C T ˆ x , with matrix C to be optimized below . Note that weight matrix W satisfies rank( W ) = min( K 0 , K 1 ) , which is a lo w rank matrix, and its lo w dimensionality helps reduce o verfitting during training (see Appendix A.3.1). By maximizing I ( Y ; R ) (see Appendix A.3.2), we further solve the the optimal parameters ˜ θ k for the nonlinear functions ˜ f ( y k ; ˜ θ k ) , k = 1 , · · · , K 1 . Finally , the objective function for our optimiza- tion problem (Eqs. 5 and 6) turns into (see Appendix A.3.3 for details): minimize Q [ C ] = − 1 2 D ln det C ˆ ΦC T E ˆ x , (17) subject to CC T = I K 0 , (18) where ˆ Φ = diag φ ( ˆ y 1 ) 2 , · · · , φ ( ˆ y K 1 ) 2 , φ ( ˆ y k ) = a − 1 | ∂ g k ( ˆ y k ) /∂ ˆ y k | ( k = 1 , · · · , K 1 ), g k ( ˆ y k ) = 2 q ˜ f ( ˆ y k ; ˜ θ k ) , ˆ y k = a − 1 y k = c T k ˆ x , and ˆ x = Σ − 1 / 2 0 U T 0 x . W e apply the gradient descent method to optimize the objectiv e function, with the gradient of Q [ C ] giv en by: dQ [ C ] d C = − C ˆ ΦC T − 1 C ˆ Φ + ˆ x ω T ˆ x , (19) where ω = ( ω 1 , · · · , ω K 1 ) T , ω k = φ ( ˆ y k ) φ 0 ( ˆ y k ) c T k C ˆ ΦC T − 1 c k , k = 1 , · · · , K 1 . When K 0 = K 1 (or K 0 > K 1 ), the objectiv e function Q [ C ] can be reduced to a simpler form, and its gradient is also easy to compute (see Appendix A.4.1). Howe ver , when K 0 < K 1 , it is computationally expensiv e to update C by applying the gradient of Q [ C ] directly , since it requires matrix inv ersion for e v ery ˆ x . W e use another objective function ˆ Q [ C ] (see Eq. A.118) which is an approximation to Q [ C ] , but its gradient is easier to compute (see Appendix A.4.2). The function 5 Published as a conference paper at ICLR 2017 ˆ Q [ C ] is the approximation of Q [ C ] , ideally they ha ve the same optimal solution for the parameter C . Usually , for optimizing the objectiv e in Eq. 17, the orthogonality constraint (Eq. 18) is unnecessary . Howe ver , this orthogonality constraint can accelerate the conv ergence rate if we emplo y it for the initial iteration to update C (see Appendix A.5). 3 E X P E R I M E N TA L R E S U LT S W e have applied our methods to the natural images from Olshausen’ s image dataset (Olshausen & Field, 1996) and the images of handwritten digits from MNIST dataset (LeCun et al., 1998) using Matlab 2016a on a computer with 12 Intel CPU cores (2.4 GHz). The gray level of each raw image was normalized to the range of 0 to 1 . M image patches with size w × w = K for training were randomly sampled from the images. W e used the Poisson neuron model with a modified sigmoidal tuning function ˜ f ( y ; ˜ θ ) = 1 4(1+exp( − β y − b )) 2 , with g ( y ) = 2 q ˜ f ( y ; ˜ θ ) = 1 1+exp( − β y − b ) , where ˜ θ = ( β , b ) T . W e obtained the initial v alues (see Appendix A.3.2): b 0 = 0 and β 0 ≈ 1 . 81 q K 1 K − 1 0 . For our experiments, we set β = 0 . 5 β 0 for iteration epoch t = 1 , · · · , t 0 and β = β 0 for t = t 0 + 1 , · · · , t max , where t 0 = 50 . Firstly , we tested the case of K = K 0 = K 1 = 144 and randomly sampled M = 10 5 image patches with size 12 × 12 from the Olshausen’ s natural images, assuming that N = 10 6 neurons were divided into K 1 = 144 classes and = 1 (see Eq. A.52 in Appendix). The input patches were preprocessed by the ZCA whitening filters (see Eq. A.68). T o test our algorithms, we chose the batch size to be equal to the number of training samples M , although we could also choose a smaller batch size. W e updated the matrix C from a random start, and set parameters t max = 300 , v 1 = 0 . 4 , and τ = 0 . 8 for all experiments. In this case, the optimal solution C looked similar to the optimal solution of IICA (Bell & Sejnowski, 1997). W e also compared with the fast ICA algorithm (FICA) (Hyv ¨ arinen, 1999), which is faster than IICA. W e also tested the restricted Boltzmann machine (RBM) (Hinton et al., 2006) for a unsupervised learning of representations, and found that it could not easily learn Gabor-like filters from Olshausen’ s image dataset as trained by contrastive div ergence. Howe ver , an improved method by adding a sparsity constraint on the output units, e.g., sparse RBM (SRBM) (Lee et al., 2008) or sparse autoencoder (Hinton, 2010), could attain Gabor -like filters from this dataset. Similar results with Gabor-lik e filters were also reproduced by the denoising autoencoders (V incent et al., 2010), which method requires a careful choice of parameters, such as noise level, learning rate, and batch size. In order to compare our methods, i.e. Algorithm 1 (Alg.1, see Appendix A.4.1) and Algorithm 2 (Alg.2, see Appendix A.4.2), with other methods, i.e. IICA, FICA and SRBM, we implemented these algorithms using the same initial weights and the same training data set (i.e. 10 5 image patches preprocessed by the ZCA whitening filters). T o get a good result by IICA, we must carefully select the parameters; we set the batch size as 50 , the initial learning rate as 0 . 01 , and final learning rate as 0 . 0001 , with an exponential decay with the epoch of iterations. IICA tends to hav e a faster con v ergence rate for a bigger batch size but it may become harder to escape local minima. For FICA, we chose the nonlinearity function f ( u ) = log cosh( u ) as contrast function, and for SRBM, we set the sparseness control constant p as 0 . 01 and 0 . 03 . The number of epoches for iterations was set to 300 for all algorithms. Figure 2 shows the filters learned by our methods and other methods. Each filter in Figure 2(a) corresponds to a column vector of matrix ˇ C (see Eq. A.69), where each vector for display is normalized by ˇ c k ← ˇ c k / max( | ˇ c 1 ,k | , · · · , | ˇ c K,k | ) , k = 1 , · · · , K 1 . The results in Figures 2(a), 2(b) and 2(c) look v ery similar to one another , and slightly dif ferent from the results in Figure 2(d) and 2(e). There are no Gabor-like filters in Figure 2(f), which corresponds to SRBM with p = 0 . 03 . Figure 3 shows how the coefficient entropy (CFE) (see Eq. A.122) and the conditional entropy (CDE) (see Eq. A.125) varied with training time. W e calculated CFE and CDE by sampling once ev ery 10 epoches from a total of 300 epoches. These results show that our algorithms had a fast con v ergence rate towards stable solutions while having CFE and CDE values similar to the algorithm of IICA, which conv er ged much more slowly . Here the values of CFE and CDE should be as small 6 Published as a conference paper at ICLR 2017 (a) (b) (c) (d) (e) (f) Figure 2: Comparison of filters obtained from 10 5 natural image patches of size 12 × 12 by our methods (Alg.1 and Alg.2) and other methods. The number of output filters was K 1 = 144 . ( a ): Alg.1. ( b ): Alg.2. ( c ): IICA. ( d ): FICA. ( e ): SRBM ( p = 0 . 01 ). ( f ): SRBM ( p = 0 . 03 ). 10 0 10 1 10 2 time (seconds) 1.8 1.85 1.9 1.95 2 coefficient entropy (bits) Alg.1 Alg.2 IICA FICA SRBM (p = 0.01) SRBM (p = 0.03) (a) 10 0 10 1 10 2 time (seconds) -400 -350 -300 -250 -200 -150 conditional entropy (bits) Alg.1 Alg.2 IICA (b) 10 0 10 1 10 2 time (seconds) -200 -100 0 100 200 300 conditional entropy (bits) SRBM (p = 0.01) SRBM (p = 0.03) SRBM (p = 0.05) SRBM (p = 0.10) (c) Figure 3: Comparison of quantization effects and con ver gence rate by coefficient entropy (see A.122) and conditional entropy (see A.125) corresponding to training results (filters) shown in Fig- ure 2. The coefficient entropy (panel a ) and conditional entropy (panel b and c ) are shown as a function of training time on a logarithmic scale. All experiments run on the same machine using Matlab . Here we sampled once e v ery 10 epoches out of a total of 300 epoches. W e set epoch number t 0 = 50 for Alg.1 and Alg.2 and the start time to 1 second. as possible for a good representation learned from the same data set. Here we set epoch number t 0 = 50 in our algorithms (see Alg.1 and Alg.2), and the start time was set to 1 second. This explains the step seen in Figure 3 (b) for Alg.1 and Alg.2 since the parameter β was updated when epoch number t = t 0 . FICA had a conv ergence rate close to our algorithms but had a big CFE, which is reflected by the quality of the filter results in Figure 2. The con ver gence rate and CFE for SRBM were close to IICA, but SRBM had a much bigger CDE than IICA, which implies that the information had a greater loss when passing through the system optimized by SRBM than by IICA or our methods. 7 Published as a conference paper at ICLR 2017 From Figure 3(c) we see that the CDE (or MI I ( X ; R ) , see Eq. A.124 and A.125) decreases (or increases) with the increase of the value of the sparseness control constant p . Note that a smaller p means sparser outputs. Hence, in this sense, increasing sparsity may result in sacrificing some information. On the other hand, a weak sparsity constraint may lead to failure of learning Gabor- like filters (see Figure 2(f)), and increasing sparsity has an adv antage in reducing the impact of noise in many practical cases. Similar situation also occurs in sparse coding (Olshausen & Field, 1997), which provides a class of algorithms for learning o vercomplete dictionary representations of the input signals. Howe ver , its training is time consuming due to its expensi v e computational cost, although many new training algorithms ha ve emerged (e.g. Aharon et al., 2006; Elad & Aharon, 2006; Lee et al., 2006; Mairal et al., 2010). See Appendix A.5 for additional experimental results. 4 C O N C L U S I O N S In this paper, we hav e presented a framework for unsupervised learning of representations via in- formation maximization for neural populations. Information theory is a po werful tool for machine learning and it also provides a benchmark of optimization principle for neural information pro- cessing in nervous systems. Our frame work is based on an asymptotic approximation to MI for a large-scale neural population. T o optimize the infomax objective, we first use hierarchical infomax to obtain a good approximation to the global optimal solution. Analytical solutions of the hierarchi- cal infomax are further impro v ed by a fast conv er gence algorithm based on gradient descent. This method allows us to optimize highly nonlinear neural networks via hierarchical optimization using infomax principle. From the vie wpoint of information theory , the unsupervised pre-training for deep learning (Hinton & Salakhutdinov, 2006; Bengio et al., 2007) may be reinterpreted as a process of hierarchical infomax, which might help explain why unsupervised pre-training helps deep learning (Erhan et al., 2010). In our frame w ork, a pre-whitening step can emer ge naturally by the hierarchical infomax, which might also explain why a pre-whitening step is useful for training in many learning algorithms (Coates et al., 2011; Bengio, 2012). Our model naturally incorporates a considerable degree of biological realism. It allows the opti- mization of a large-scale neural population with noisy spiking neurons while taking into account of multiple biological constraints, such as membrane noise, limited energy , and bounded connection weights. W e employ a technique to attain a low-rank weight matrix for optimization, so as to reduce the influence of noise and discourage ov erfitting during training. In our model, many parameters are optimized, including the population density of parameters, filter weight vectors, and parameters for nonlinear tuning functions. Optimizing all these model parameters could not be easily done by many other methods. Our experimental results suggest that our method for unsupervised learning of representations has obvious adv antages in its training speed and robustness ov er the main existing methods. Our model has a nonlinear feedforward structure and is conv enient for fast learning and inference. This simple and flexible framework for unsupervised learning of presentations should be readily extended to training deep structure networks. In future work, it w ould interesting to use our method to train deep structure networks with either unsupervised or supervised learning. A C K N OW L E D G M E N T S W e thank Prof. Honglak Lee for sharing Matlab code for algorithm comparison, Prof. Shan T an for discussions and comments and Kai Liu for helping draw Figure 1. Supported by grant NIH-NIDCD R01 DC013698. R E F E R E N C E S Aharon, M., Elad, M., & Bruckstein, A. (2006). K-SVD: An algorithm for designing overcomplete dictionaries for sparse representation. Signal Pr ocessing, IEEE T ransactions on , 54(11), 4311– 4322. 8 Published as a conference paper at ICLR 2017 Amari, S. (1999). Natural gradient learning for over - and under-complete bases in ica. Neural Comput. , 11(8), 1875–1883. Atick, J. J. (1992). Could information theory pro vide an ecological theory of sensory processing? Network: Comp. Neural. , 3(2), 213–251. Barlow , H. B. (1961). Possible principles underlying the transformation of sensory messages. Sen- sory Communication , (pp. 217–234). Bell, A. J. & Sejnowski, T . J. (1995). An information-maximization approach to blind separation and blind decon v olution. Neural Comput. , 7(6), 1129–1159. Bell, A. J. & Sejnowski, T . J. (1997). The ”independent components” of natural scenes are edge filters. V ision Res. , 37(23), 3327–3338. Bengio, Y . (2012). Deep learning of representations for unsupervised and transfer learning. Unsu- pervised and T ransfer Learning Challenges in Machine Learning , 7, 19. Bengio, Y ., Courville, A., & V incent, P . (2013). Representation learning: A re vie w and new per - spectiv es. P attern Analysis and Machine Intelligence, IEEE T ransactions on , 35(8), 1798–1828. Bengio, Y ., Lamblin, P ., Popo vici, D., Larochelle, H., et al. (2007). Greedy layer-wise training of deep networks. Advances in neural information pr ocessing systems , 19, 153. Borst, A. & Theunissen, F . E. (1999). Information theory and neural coding. Natur e neur oscience , 2(11), 947–957. Carlo, C. N. & Stevens, C. F . (2013). Structural uniformity of neocortex, revisited. Pr oceedings of the National Academy of Sciences , 110(4), 1488–1493. Coates, A., Ng, A. Y ., & Lee, H. (2011). An analysis of single-layer networks in unsupervised feature learning. In International conference on artificial intelligence and statistics (pp. 215– 223). Cortes, C. & V apnik, V . (1995). Support-vector networks. Machine learning , 20(3), 273–297. Cov er , T . M. & Thomas, J. A. (2006). Elements of Information, 2nd Edition . New Y ork: Wile y- Interscience. Edelman, A., Arias, T . A., & Smith, S. T . (1998). The geometry of algorithms with orthogonality constraints. SIAM J. Matrix Anal. Appl. , 20(2), 303–353. Elad, M. & Aharon, M. (2006). Image denoising via sparse and redundant representations over learned dictionaries. Image Pr ocessing, IEEE T r ansactions on , 15(12), 3736–3745. Erhan, D., Bengio, Y ., Courville, A., Manzagol, P .-A., V incent, P ., & Bengio, S. (2010). Why does unsupervised pre-training help deep learning? The J ournal of Mac hine Learning Resear c h , 11, 625–660. Goodfellow , I., Pouget-Abadie, J., Mirza, M., Xu, B., W arde-Farle y , D., Ozair , S., Courville, A., & Bengio, Y . (2014). Generative adv ersarial nets. In Advances in Neural Information Pr ocessing Systems (pp. 2672–2680). Hinton, G. (2010). A practical guide to training restricted boltzmann machines. Momentum , 9(1), 926. Hinton, G., Osindero, S., & T eh, Y .-W . (2006). A fast learning algorithm for deep belief nets. Neur al computation , 18(7), 1527–1554. Hinton, G. E. & Salakhutdinov , R. R. (2006). Reducing the dimensionality of data with neural networks. Science , 313(5786), 504–507. Huang, W . & Zhang, K. (2016). Information-theoretic bounds and approximations in neural popu- lation coding. Neural Comput, submitted, URL https://arxiv .or g/abs/1611.01414 . 9 Published as a conference paper at ICLR 2017 Hubel, D. H. & Wiesel, T . N. (1962). Receptive fields, binocular interaction and functional archi- tecture in the cat’ s visual cortex. The Journal of physiology , 160(1), 106–154. Hyv ¨ arinen, A. (1999). Fast and rob ust fixed-point algorithms for independent component analysis. Neural Networks, IEEE T ransactions on , 10(3), 626–634. Karklin, Y . & Simoncelli, E. P . (2011). Efficient coding of natural images with a population of noisy linear-nonlinear neurons. In Advances in neural information pr ocessing systems , volume 24 (pp. 999–1007). K onstantinides, K. & Y ao, K. (1988). Statistical analysis of effecti v e singular values in matrix rank determination. Acoustics, Speech and Signal Pr ocessing, IEEE T r ansactions on , 36(5), 757–763. Kreutz-Delgado, K., Murray , J. F ., Rao, B. D., Engan, K., Lee, T . S., & Sejno wski, T . J. (2003). Dictionary learning algorithms for sparse representation. Neural computation , 15(2), 349–396. LeCun, Y ., Bottou, L., Bengio, Y ., & Haffner , P . (1998). Gradient-based learning applied to docu- ment recognition. Pr oceedings of the IEEE , 86(11), 2278–2324. Lee, H., Battle, A., Raina, R., & Ng, A. Y . (2006). Efficient sparse coding algorithms. In Advances in neural information pr ocessing systems (pp. 801–808). Lee, H., Ekanadham, C., & Ng, A. Y . (2008). Sparse deep belief net model for visual area v2. In Advances in neural information pr ocessing systems (pp. 873–880). Lewicki, M. S. & Olshausen, B. A. (1999). Probabilistic framework for the adaptation and compar- ison of image codes. JOSA A , 16(7), 1587–1601. Lewicki, M. S. & Sejno wski, T . J. (2000). Learning overcomplete representations. Neur al compu- tation , 12(2), 337–365. Linsker , R. (1988). Self-Organization in a perceptual network. Computer , 21(3), 105–117. Mairal, J., Bach, F ., Ponce, J., & Sapiro, G. (2009). Online dictionary learning for sparse coding. In Pr oceedings of the 26th annual international confer ence on machine learning (pp. 689–696).: A CM. Mairal, J., Bach, F ., Ponce, J., & Sapiro, G. (2010). Online learning for matrix factorization and sparse coding. The Journal of Mac hine Learning Resear c h , 11, 19–60. Montufar , G. F ., P ascanu, R., Cho, K., & Bengio, Y . (2014). On the number of linear regions of deep neural networks. In Advances in Neural Information Pr ocessing Systems (pp. 2924–2932). Nair , V . & Hinton, G. E. (2010). Rectified linear units improv e restricted boltzmann machines. In Pr oceedings of the 27th International Conference on Machine Learning (ICML-10) (pp. 807– 814). Olshausen, B. A. & Field, D. J. (1996). Emer gence of simple-cell receptive field properties by learning a sparse code for natural images. Natur e , 381(6583), 607–609. Olshausen, B. A. & Field, D. J. (1997). Sparse coding with an ov ercomplete basis set: A strategy employed by v1? V ision Res. , 37(23), 3311–3325. Rao, C. R. (1945). Information and accuracy attainable in the estimation of statistical parameters. Bulletin of the Calcutta Mathematical Society , 37(3), 81–91. Shannon, C. (1948). A mathematical theory of communications. Bell System T echnical J ournal , 27, 379–423 and 623–656. Sriv astava, N., Hinton, G., Krizhevsk y , A., Sutskev er , I., & Salakhutdinov , R. (2014). Dropout: A simple way to prev ent neural networks from overfitting. The Journal of Machine Learning Resear ch , 15(1), 1929–1958. 10 Published as a conference paper at ICLR 2017 V incent, P ., Larochelle, H., Lajoie, I., Bengio, Y ., & Manzagol, P .-A. (2010). Stacked denoising autoencoders: Learning useful representations in a deep netw ork with a local denoising criterion. The Journal of Mac hine Learning Resear ch , 11, 3371–3408. Y arrow , S., Challis, E., & Series, P . (2012). Fisher and shannon information in finite neural popula- tions. Neural computation , 24(7), 1740–1780. A P P E N D I X A . 1 F O R M U L A S F O R A P P R O X I M A T I O N O F M U T U A L I N F O R M A T I O N It follows from I ( X ; R ) = D ln p ( x | r ) p ( x ) E r , x and Eq. (1) that the conditional entropy should read: H ( X | R ) = − h ln p ( x | r ) i r , x ' − 1 2 ln det G ( x ) 2 π e x . (A.1) The Fisher information matrix J ( x ) (see Eq. 3), which is symmetric and positi v e semidefinite, can be written also as J ( x ) = ∂ ln p ( r | x ) ∂ x ∂ ln p ( r | x ) ∂ x T r | x . (A.2) If we suppose p ( r | x ) is conditional independent, namely , p ( r | x ) = Q N n =1 p ( r n | x ; θ n ) , then we hav e (see Huang & Zhang, 2016) J ( x ) = N Z Θ p ( θ ) S ( x ; θ ) d θ , (A.3) S ( x ; θ ) = ∂ ln p ( r | x ; θ ) ∂ x ∂ ln p ( r | x ; θ ) ∂ x T r | x , (A.4) where p ( θ ) is the population density function of parameter θ , p ( θ ) = 1 N N X n =1 δ ( θ − θ n ) , (A.5) and δ ( · ) denotes the Dirac delta function. It can be proved that the approximation function of MI I G [ p ( θ )] (Eq. 1) is concave about p ( θ ) (Huang & Zhang, 2016). In Eq. (A.3), we can approximate the continuous integral by a discrete summation for numerical computation, J ( x ) ≈ N K 1 X k =1 α k S ( x ; θ k ) , (A.6) where P K 1 k =1 α k = 1 , α k > 0 , k = 1 , · · · , K 1 , 1 ≤ K 1 ≤ N . For Poisson neuron model, by Eq. (A.4) we ha ve (see Huang & Zhang, 2016) p ( r | x ; θ ) = f ( x ; θ ) r r ! exp ( − f ( x ; θ )) , (A.7) S ( x ; θ ) = 1 f ( x ; θ ) ∂ f ( x ; θ ) ∂ x ∂ f ( x ; θ ) ∂ x T = ∂ g ( x ; θ ) ∂ x ∂ g ( x ; θ ) ∂ x T , (A.8) where f ( x ; θ ) ≥ 0 is the acti v ation function (mean response) of neuron and g ( x ; θ ) = 2 p f ( x ; θ ) . (A.9) 11 Published as a conference paper at ICLR 2017 Similarly , for Gaussian noise model, we hav e p ( r | x ; θ ) = 1 σ √ 2 π exp − ( r − f ( x ; θ )) 2 2 σ 2 ! , (A.10) S ( x ; θ ) = 1 σ 2 ∂ f ( x ; θ ) ∂ x ∂ f ( x ; θ ) ∂ x T , (A.11) where σ > 0 denotes the standard de viation of noise. Sometimes we do not know the specific form of p ( x ) and only know M samples, x 1 , · · · , x M , which are independent and identically distributed (i.i.d.) samples drawn from the distribution p ( x ) . Then we can use the empirical av erage to approximate the integral in Eq. (1): I G ≈ 1 2 M X m =1 ln (det ( G ( x m ))) + H ( X ) . (A.12) A . 2 P R O O F O F P R O P O S I T I O N 1 Proof . It follo ws from the data-processing inequality (Cov er & Thomas, 2006) that I ( X ; R ) ≤ I ( Y ; R ) ≤ I ( ˘ Y ; R ) ≤ I ( ¯ Y ; R ) , (A.13) I ( X ; R ) ≤ I ( X ; ¯ Y ) ≤ I ( X ; ˘ Y ) ≤ I ( X ; Y ) . (A.14) Since p ( ¯ y k | x ) = p ( ˘ y k 1 , · · · , ˘ y k N k | x ) = N ( w T k x , N − 1 k σ 2 ) , k = 1 , · · · , K 1 , (A.15) we hav e p ( ¯ y | x ) = p ( ˘ y | x ) , (A.16) p ( ¯ y ) = p ( ˘ y ) , (A.17) I ( X ; ¯ Y ) = I ( X ; ˘ Y ) . (A.18) Hence, by (A.14) and (A.18), expression (12) holds. On the other hand, when N k is large, from Eq. (10) we kno w that the distrib ution of ¯ Z k , namely , N 0 , N − 1 k σ 2 , approaches a Dirac delta function δ ( ¯ z k ) . Then by (7) and (9) we have p ( r | ¯ y ) ' p ( r | y ) = p ( r | x ) and I ( X ; R ) = I ( Y ; R ) − ln p ( r | y ) p ( r | x ) r , x = I ( Y ; R ) , (A.19) I ( Y ; R ) = I ( ¯ Y ; R ) − ln p ( r | ¯ y ) p ( r | y ) r , y , ¯ y ' I ( ¯ Y ; R ) , (A.20) I ( Y ; R ) = I ( ˘ Y ; R ) − ln p ( r | ˘ y ) p ( r | y ) r , y , ˘ y ' I ( ˘ Y ; R ) , (A.21) I ( X ; Y ) = I ( X ; ¯ Y ) − ln p ( x | ¯ y ) p ( x | y ) x , y , ¯ y ' I ( X ; ¯ Y ) . (A.22) It follows from (A.13) and (A.19) that (11) holds. Combining (11), (12) and (A.20)–(A.22), we immediately get (13) and (14). This completes the proof of Proposition 1 . A . 3 H I E R A R C H I C A L O P T I M I Z AT I O N F O R M A X I M I Z I N G I ( X ; R ) In the follo wing, we will discuss the optimization procedure for maximizing I ( X ; R ) in two stages: maximizing I ( X ; ˘ Y ) and maximizing I ( Y ; R ) . 12 Published as a conference paper at ICLR 2017 A . 3 . 1 T H E 1 S T S TAG E In the first stage, our goal is to maximize the MI I ( X ; ˘ Y ) and get the optimal parameters w k ( k = 1 , · · · , K 1 ). Assume that the stimulus x has zero mean (if not, let x ← x − h x i x ) and cov ariance matrix Σ x . It follows from eigendecomposition that Σ x = xx T x ≈ 1 M − 1 XX T = UΣU T , (A.23) where X = [ x 1 , · · · , x M ] , U = [ u 1 , · · · , u K ] ∈ R K × K is an unitary orthogonal matrix and Σ = diag σ 2 1 , · · · , σ 2 K is a positiv e diagonal matrix with σ 1 ≥ · · · ≥ σ K > 0 . Define ˜ x = Σ − 1 / 2 U T x , (A.24) ˜ w k = Σ 1 / 2 U T w k , (A.25) y k = ˜ w T k ˜ x , (A.26) where k = 1 , · · · , K 1 . The cov ariance matrix of ˜ x is giv en by Σ ˜ x = D ˜ x ˜ x T E ˜ x ≈ I K , (A.27) and I K is a K × K identity matrix. From (1) and (A.11) we have I ( X ; ˘ Y ) = I ( ˜ X ; ˘ Y ) and I ( ˜ X ; ˘ Y ) ' I 0 G = 1 2 ln det ˜ G 2 π e !! + H ( ˜ X ) , (A.28) ˜ G ≈ N σ − 2 K 1 X k =1 α k ˜ w k ˜ w T k + I K . (A.29) The following approximations are useful (see Huang & Zhang, 2016): p ( ˜ x ) ≈ N (0 , I K ) , (A.30) P ( ˜ x ) = − ∂ 2 ln p ( ˜ x ) ∂ ˜ x ∂ ˜ x T ≈ I K . (A.31) By the central limit theorem, the distribution of random v ariable ˜ X is closer to a normal distribu- tion than the distrib ution of the original random variable X . On the other hand, the PCA models assume multiv ariate gaussian data whereas the ICA models assume multiv ariate non-gaussian data. Hence by a PCA-like whitening transformation (A.24) we can use the approximation (A.31) with the Laplace’ s method of asymptotic expansion, which only requires that the peak be close to its mean while random variable ˜ X needs not be exactly Gaussian. W ithout any constraints on the Gaussian channel of neural populations, especially the peak firing rates, the capacity of this channel may grow indefinitely: I ( ˜ X ; ˘ Y ) → ∞ . The most common constraint on the neural populations is an energy or power constraint which can also be regarded as a signal-to-noise ratio (SNR) constraint. The SNR for the output ˘ y n of the n -th neuron is giv en by SNR n = 1 σ 2 D w T n x 2 E x ≈ 1 σ 2 ˜ w T n ˜ w n , n = 1 , · · · , N . (A.32) W e require that 1 N N X n =1 SNR n ≈ 1 σ 2 K 1 X k =1 α k ˜ w T k ˜ w k ≤ ρ , (A.33) where ρ is a positiv e constant. Then by Eq. (A.28), (A.29) and (A.33), we have the following optimization problem: minimize Q 0 G [ ˆ W ] = − 1 2 ln det N σ − 2 ˆ W ˆ W T + I K , (A.34) subject to h = T r ˆ W ˆ W T − E ≤ 0 , (A.35) 13 Published as a conference paper at ICLR 2017 where T r ( · ) denotes matrix trace and ˆ W = ˜ WA 1 / 2 = Σ 1 / 2 U T W A 1 / 2 = [ ˆ w 1 , · · · , ˆ w K 1 ] , (A.36) A = diag ( α 1 , · · · , α K 1 ) , (A.37) W = [ w 1 , · · · , w K 1 ] , (A.38) ˜ W = [ ˜ w 1 , · · · , ˜ w K 1 ] , (A.39) E = ρσ 2 . (A.40) Here E is a constant that does not af fect the final optimal solution so we set E = 1 . Then we obtain an optimal solution as follows: W = a U 0 Σ − 1 / 2 0 V T 0 , (A.41) A = K − 1 1 I K 1 , (A.42) a = q E K 1 K − 1 0 = q K 1 K − 1 0 , (A.43) Σ 0 = diag σ 2 1 , · · · , σ 2 K 0 , (A.44) U 0 = U ( : , 1 : K 0 ) ∈ R K × K 0 , (A.45) V 0 = V ( : , 1 : K 0 ) ∈ R K 1 × K 0 , (A.46) where V = [ v 1 , · · · , v K 1 ] is an K 1 × K 1 unitary orthogonal matrix, parameter K 0 represents the size of the reduced dimension ( 1 ≤ K 0 ≤ K ), and its v alue will be determined belo w . Now the optimal parameters w n ( n = 1 , · · · , N ) are clustered into K 1 classes (see Eq. A.6) and obey an uniform discrete distribution (see also Eq. A.60 in Appendix A.3.2). When K = K 0 = K 1 , the optimal solution of W in Eq. (A.41) is a whitening-like filter . When V = I K , the optimal matrix W is the principal component analysis (PCA) whitening filters. In the symmetrical case with V = U , the optimal matrix W becomes a zero component analysis (ZCA) whitening filter . If K < K 1 , this case leads to an overcomplete solution, whereas when K 0 ≤ K 1 < K , the undercomplete solution arises. Since K 0 ≤ K 1 and K 0 ≤ K , Q 0 G achiev es its minimum when K 0 = K . Ho we ver , in practice other factors may prevent it from reaching this minimum. For example, consider the a verage of squared weights, ς = K 1 X k =1 α k k w k k 2 = T r W A W T = E K 0 K 0 X k =1 σ − 2 k , (A.47) where k·k denotes the Frobenius norm. The v alue of ς is extremely large when any σ k becomes vanishingly small. For real neurons these weights of connection are not allowed to be too large. Hence we impose a limitation on the weights: ς ≤ E 1 , where E 1 is a positive constant. This yields another constraint on the objectiv e function, ˜ h = E K 0 K 0 X k =1 σ − 2 k − E 1 ≤ 0 . (A.48) From (A.35) and (A.48) we get the optimal K 0 = arg max ˜ K 0 E ˜ K − 1 0 P ˜ K 0 k =1 σ − 2 k . By this con- straint, small values of σ 2 k will often result in K 0 < K and a lo w-rank matrix W (Eq. A.41). On the other hand, the low-rank matrix W can filter out the noise of stimulus x . Consider the transformation Y = W T X with X = [ x 1 , · · · , x M ] and Y = [ y 1 , · · · , y M ] for M samples. It follows from the singular v alue decomposition (SVD) of X that X = US ˜ V T , (A.49) where U is giv en in (A.23), ˜ V is a M × M unitary orthogonal matrix, S is a K × M diagonal matrix with non-negati v e real numbers on the diagonal, S k,k = √ M − 1 σ k ( k = 1 , · · · , K , K ≤ M ), and SS T = ( M − 1) Σ . Let ˘ X = √ M − 1 U 0 Σ 1 / 2 0 ˜ V T 0 ≈ X , (A.50) 14 Published as a conference paper at ICLR 2017 where ˜ V 0 = ˜ V ( : , 1 : K 0 ) ∈ R M × K 0 , Σ 0 and U 0 are gi ven in (A.44) and (A.45), respectiv ely . Then Y = W T X = a V 0 Σ − 1 / 2 0 U T 0 US ˜ V T = W T ˘ X = a √ M − 1 V 0 ˜ V T 0 , (A.51) where ˘ X can be regarded as a denoised version of X . The determination of the effecti v e rank K 0 ≤ K of the matrix ˘ X by using singular values is based on various criteria (K onstantinides & Y ao, 1988). Here we choose K 0 as follows: K 0 = arg min K 0 0 v u u t P K 0 0 k =1 σ 2 k P K k =1 σ 2 k ≥ , (A.52) where is a positive constant ( 0 < ≤ 1 ). Another adv antage of a low-rank matrix W is that it can significantly reduce o verfitting for learning neural population parameters. In practice, the constraint (A.47) is equi v alent to a weight-decay reg- ularization term used in many other optimization problems (Cortes & V apnik, 1995; Hinton, 2010), which can reduce o verfitting to the training data. T o prev ent the neural netw orks from o verfitting, Sriv astava et al. (2014) presented a technique to randomly drop units from the neural network dur - ing training, which may in fact be reg arded as an attempt to reduce the rank of the weight matrix because the dropout can result in a sparser weights (lower rank matrix). This means that the update is only concerned with k eeping the more important components, which is similar to first performing a denoising process by the SVD low rank approximation. In this stage, we hav e obtained the optimal parameter W (see A.41). The optimal v alue of matrix V 0 can also be determined, as shown in Appendix A.3.3. A . 3 . 2 T H E 2 N D S TAG E For this stage, our goal is to maximize the MI I ( Y ; R ) and get the optimal parameters ˜ θ k , k = 1 , · · · , K 1 . Here the input is y = ( y 1 , · · · , y K 1 ) T and the output r = ( r 1 , · · · , r N ) T is also clustered into K 1 classes. The responses of N k neurons in the k -th subpopulation obey a Pois- son distrib ution with mean ˜ f ( e T k y ; ˜ θ k ) , where e k is a unit vector with 1 in the k -th element and y k = e T k y . By (A.24) and (A.26), we have h y k i y k = 0 , (A.53) σ 2 y k = y 2 k y k = k ˜ w k k 2 . (A.54) Then for large N , by (1)–(4) and (A.30) we can use the following approximation, I ( Y ; R ) ' ˘ I F = 1 2 * ln det ˘ J ( y ) 2 π e !!+ y + H ( Y ) , (A.55) where ˘ J ( y ) = diag N α 1 | g 0 1 ( y 1 ) | 2 , · · · , N α K 1 g 0 K 1 ( y K 1 ) 2 , (A.56) g 0 k ( y k ) = ∂ g k ( y k ) ∂ y k , k = 1 , · · · , K 1 , (A.57) g k ( y k ) = 2 q ˜ f ( y k ; ˜ θ k ) , k = 1 , · · · , K 1 . (A.58) It is easy to get that ˘ I F = 1 2 K 1 X k =1 * ln N α k | g 0 k ( y k ) | 2 2 π e !+ y + H ( Y ) ≤ 1 2 K 1 X k =1 * ln | g 0 k ( y k ) | 2 2 π e !+ y − K 1 2 ln K 1 N + H ( Y ) , (A.59) 15 Published as a conference paper at ICLR 2017 where the equality holds if and only if α k = 1 K 1 , k = 1 , · · · , K 1 , (A.60) which is consistent with Eq. (A.42). On the other hand, it follows from the Jensen’ s inequality that ˘ I F = * ln p ( y ) − 1 det ˘ J ( y ) 2 π e ! 1 / 2 + y ≤ ln Z det ˘ J ( y ) 2 π e ! 1 / 2 d y , (A.61) where the equality holds if and only if p ( y ) − 1 det ˘ J ( y ) 1 / 2 is a constant, which implies that p ( y ) = det ˘ J ( y ) 1 / 2 R det ˘ J ( y ) 1 / 2 d y = Q K 1 k =1 | g 0 k ( y k ) | R Q K 1 k =1 | g 0 k ( y k ) | d y . (A.62) From (A.61) and (A.62), maximizing ˜ I F yields p ( y k ) = | g 0 k ( y k ) | R | g 0 k ( y k ) | dy k , k = 1 , · · · , K 1 . (A.63) W e assume that (A.63) holds, at least approximately . Hence we can let the peak of g 0 k ( y k ) be at y k = h y k i y k = 0 and y 2 k y k = σ 2 y k = k ˜ w k k 2 . Then combining (A.57), (A.61) and (A.63) we find the optimal parameters ˜ θ k for the nonlinear functions ˜ f ( y k ; ˜ θ k ) , k = 1 , · · · , K 1 . A . 3 . 3 T H E F I N A L O B J E C T I V E F U N C T I O N In the preceding sections we hav e obtained the initial optimal solutions by maximizing I X ; ˘ Y and I ( Y ; R ) . In this section, we will discuss how to find the final optimal V 0 and other parameters by maximizing I ( X ; R ) from the initial optimal solutions. First, we hav e y = ˜ W T ˜ x = a ˆ y , (A.64) where a is given in (A.43) and ˆ y = ( ˆ y 1 , · · · , ˆ y K 1 ) T = C T ˆ x = ˇ C T ˇ x , (A.65) ˆ x = Σ − 1 / 2 0 U T 0 x , (A.66) C = V T 0 ∈ R K 0 × K 1 , (A.67) ˇ x = U 0 Σ − 1 / 2 0 U T 0 x = U 0 ˆ x , (A.68) ˇ C = U 0 C = [ ˇ c 1 , · · · , ˇ c K 1 ] . (A.69) It follows that I ( X ; R ) = I ˜ X ; R ' ˜ I G = 1 2 ln det G ( ˆ x ) 2 π e ˆ x + H ( ˜ X ) , (A.70) G ( ˆ x ) = N ˆ W ˆ Φ ˆ W T + I K , (A.71) ˆ W = Σ 1 / 2 U T W A 1 / 2 = a q K − 1 1 I K K 0 C = q K − 1 0 I K K 0 C , (A.72) 16 Published as a conference paper at ICLR 2017 where I K K 0 is a K × K 0 diagonal matrix with value 1 on the diagonal and ˆ Φ = Φ 2 , (A.73) Φ = diag ( φ ( ˆ y 1 ) , · · · , φ ( ˆ y K 1 )) , (A.74) φ ( ˆ y k ) = a − 1 ∂ g k ( ˆ y k ) ∂ ˆ y k , (A.75) g k ( ˆ y k ) = 2 q ˜ f ( ˆ y k ; ˜ θ k ) , (A.76) ˆ y k = a − 1 y k = c T k ˆ x , k = 1 , · · · , K 1 . (A.77) Then we hav e det ( G ( ˆ x )) = det N K − 1 0 C ˆ ΦC T + I K 0 . (A.78) For lar ge N and K 0 / N → 0 , we have det ( G ( ˆ x )) ≈ det ( J ( ˆ x )) = det N K − 1 0 C ˆ ΦC T , (A.79) ˜ I G ≈ ˜ I F = − Q − K 2 ln (2 π e ) − K 0 2 ln ( ε ) + H ( ˜ X ) , (A.80) Q = − 1 2 D ln det C ˆ ΦC T E ˆ x , (A.81) ε = K 0 N . (A.82) Hence we can state the optimization problem as: minimize Q [ C ] = − 1 2 D ln det C ˆ ΦC T E ˆ x , (A.83) subject to CC T = I K 0 . (A.84) The gradient from (A.83) is giv en by: dQ [ C ] d C = − C ˆ ΦC T − 1 C ˆ Φ + ˆ x ω T ˆ x , (A.85) where C = [ c 1 , · · · , c K 1 ] , ω = ( ω 1 , · · · , ω K 1 ) T , and ω k = φ ( ˆ y k ) φ 0 ( ˆ y k ) c T k C ˆ ΦC T − 1 c k , k = 1 , · · · , K 1 . (A.86) In the following we will discuss ho w to get the optimal solution of C for two specific cases. A . 4 A L G O R I T H M S F O R O P T I M I Z A T I O N O B J E C T I V E F U N C T I O N A . 4 . 1 A L G O R I T H M 1 : K 0 = K 1 Now CC T = C T C = I K 1 , then by Eq. (A.83) we hav e Q 1 [ C ] = − * K 1 X k =1 ln ( φ ( ˆ y k )) + ˆ x , (A.87) dQ 1 [ C ] d C = − ˆ x ω T ˆ x , (A.88) ω k = φ 0 ( ˆ y k ) φ ( ˆ y k ) , k = 1 , · · · , K 1 . (A.89) Under the orthogonality constraints (Eq. A.84), we can use the follo wing update rule for learning C (Edelman et al., 1998; Amari, 1999): C t +1 = C t + µ t d C t dt , (A.90) d C t dt = − dQ 1 [ C t ] d C t + C t dQ 1 [ C t ] d C t T C t , (A.91) 17 Published as a conference paper at ICLR 2017 where the learning rate parameter µ t changes with the iteration count t , t = 1 , · · · , t max . Here we can use the empirical a verage to approximate the integral in (A.88) (see Eq. A.12). W e can also apply stochastic gradient descent (SGD) method for online updating of C t +1 in (A.90). The orthogonality constraint (Eq. A.84) can accelerate the con v ergence rate. In practice, the orthog- onal constraint (A.84) for objectiv e function (A.83) is not strictly necessary in this case. W e can completely discard this constraint condition and consider minimize Q 2 [ C ] = − * K 1 X k =1 ln ( φ ( ˆ y k )) + ˆ x − 1 2 ln det C T C , (A.92) where we assume rank ( C ) = K 1 = K 0 . If we let d C dt = − CC T dQ 2 [ C ] d C , (A.93) then T r dQ 2 [ C ] d C d C T dt = − T r C T dQ 2 [ C ] d C dQ 2 [ C ] d C T C ≤ 0 . (A.94) Therefore we can use an update rule similar to Eq. A.90 for learning C . In f act, the method can also be extended to the case K 0 > K 1 by using the same objectiv e function (A.92). The learning rate parameter µ t (see A.90) is updated adaptiv ely , as follo ws. First, calculate µ t = v t κ t , t = 1 , · · · , t max , (A.95) κ t = 1 K 1 K 1 X k =1 k∇ C t (: , k ) k k C t (: , k ) k , (A.96) and C t +1 by (A.90) and (A.91), then calculate the v alue Q 1 C t +1 . If Q 1 C t +1 < Q 1 [ C t ] , then let v t +1 ← v t , continue for the next iteration; otherwise, let v t ← τ v t , µ t ← v t /κ t and recalculate C t +1 and Q 1 C t +1 . Here 0 < v 1 < 1 and 0 < τ < 1 are set as constants. After getting C t +1 for each update, we employ a Gram–Schmidt orthonormalization process for matrix C t +1 , where the orthonormalization process can accelerate the con vergence. Howe ver , we can discard the Gram– Schmidt orthonormalization process after iterati v e t 0 ( > 1 ) epochs for more accurate optimization solution C . In this case, the objectiv e function is gi v en by the Eq. (A.92). W e can also further optimize parameter b by gradient descent. When K 0 = K 1 , the objective function Q 2 [ C ] in Eq. (A.92) without constraint is the same as the objectiv e function of infomax ICA (IICA) (Bell & Sejno wski, 1995; 1997), and as a consequence we should get the same optimal solution C . Hence, in this sense, the IICA may be regarded as a special case of our method. Our method has a wider range of applications and can handle more generic situations. Our model is deri v ed by neural populations with a huge number of neurons and it is not restricted to additi v e noise model. Moreo ver , our method has a f aster con v ergence rate during training than IICA (see Section 3). A . 4 . 2 A L G O R I T H M 2 : K 0 ≤ K 1 In this case, it is computationally expensiv e to update C by using the gradient of Q (see Eq. A.85), since it needs to compute the in verse matrix for e v ery ˆ x . Here we pro vide an alternativ e method for learning the optimal C . First, we consider the following inequalities. 18 Published as a conference paper at ICLR 2017 Proposition 2. The following inequations hold, 1 2 D ln det C ˆ ΦC T E ˆ x ≤ 1 2 ln det C D ˆ Φ E ˆ x C T , (A.97) ln det CΦC T ˆ x ≤ ln det C h Φ i ˆ x C T (A.98) ≤ 1 2 ln det C h Φ i 2 ˆ x C T (A.99) ≤ 1 2 ln det C D ˆ Φ E ˆ x C T , (A.100) ln det CΦC T ≤ 1 2 ln det C ˆ ΦC T , (A.101) wher e C ∈ R K 0 × K 1 , K 0 ≤ K 1 , and CC T = I K 0 . Proof . Functions ln det C D ˆ Φ E ˆ x C T and ln det C h Φ i ˆ x C T are concav e functions about p ( ˆ x ) (see the proof of Proposition 5.2. in Huang & Zhang, 2016), which f act establishes inequalities (A.97) and (A.98). Next we will pro ve the inequality (A.101). By SVD, we ha ve CΦ = ¨ U ¨ D ¨ V T , (A.102) where ¨ U is a K 0 × K 0 unitary orthogonal matrix, ¨ V = [ ¨ v 1 , ¨ v 2 , · · · , ¨ v K 1 ] is an K 1 × K 1 unitary orthogonal matrix, and ¨ D is an K 0 × K 1 rectangular diagonal matrix with K 0 positiv e real numbers on the diagonal. By the matrix Hadamard’ s inequality and Cauchy–Schwarz inequality we hav e det CΦC T CΦC T det C ˆ ΦC T − 1 = det ¨ D ¨ V T C T C ¨ V ¨ D T ¨ D ¨ D T − 1 = det ¨ V T 1 C T C ¨ V 1 = det C ¨ V 1 2 ≤ K 0 Y k =1 C ¨ V 1 2 k,k ≤ K 0 Y k =1 CC T 2 k,k ¨ V T 1 ¨ V 1 2 k,k = 1 , (A.103) where ¨ V 1 = [ ¨ v 1 , ¨ v 2 , · · · , ¨ v K 0 ] ∈ R K 1 × K 0 . The last equality holds because of CC T = I K 0 and ¨ V T 1 ¨ V 1 = I K 0 . This establishes inequality (A.101) and the equality holds if and only if K 0 = K 1 or C ¨ V 1 = I K 0 . Similarly , we get inequality (A.99): ln det C h Φ i ˆ x C T ≤ 1 2 ln det C h Φ i 2 ˆ x C T . (A.104) By Jensen’ s inequality , we ha v e h φ ( ˆ y k ) i 2 ˆ x ≤ D φ ( ˆ y k ) 2 E ˆ x , ∀ k = 1 , · · · , K 1 . (A.105) Then it follows from (A.105) that inequality (A.100) holds: 1 2 ln det C h Φ i 2 ˆ x C T ≤ 1 2 ln det C D ˆ Φ E ˆ x C T . (A.106) 19 Published as a conference paper at ICLR 2017 This completes the proof of Proposition 2 . By Proposition 2, if K 0 = K 1 then we get 1 2 D ln det ˆ Φ E ˆ x ≤ 1 2 ln det D ˆ Φ E ˆ x , (A.107) h ln (det ( Φ )) i ˆ x ≤ ln (det ( h Φ i ˆ x )) (A.108) = 1 2 ln det h Φ i 2 ˆ x (A.109) ≤ 1 2 ln det D ˆ Φ E ˆ x , (A.110) ln (det ( Φ )) = 1 2 ln det ˆ Φ . (A.111) On the other hand, it follows from (A.81) and Proposition 2 that ln det CΦC T ˆ x ≤ − Q ≤ 1 2 ln det C D ˆ Φ E ˆ x C T , (A.112) ln det CΦC T ˆ x ≤ − ˆ Q ≤ 1 2 ln det C D ˆ Φ E ˆ x C T . (A.113) Hence we can see that ˆ Q is close to Q (see A.81). Moreov er , it follo ws from the Cauchy–Schwarz inequality that D ( Φ ) k,k E ˆ x = h φ ( ˆ y k ) i ˆ y k ≤ Z φ ( ˆ y k ) 2 d ˆ y k Z p ( ˆ y k ) 2 d ˆ y k 1 / 2 , (A.114) where k = 1 , · · · , K 1 , the equality holds if and only if the following holds: p ( ˆ y k ) = φ ( ˆ y k ) R φ ( ˆ y k ) d ˆ y k , k = 1 , · · · , K 1 , (A.115) which is the similar to Eq. (A.63). Since I ( X ; R ) = I ( Y ; R ) (see Proposition 1), by maximizing I ( X ; R ) we hope the equality in inequality (A.61) and equality (A.63) hold, at least approximativ ely . On the other hand, let C opt = arg min C Q [ C ] = arg max C D ln det( C ˆ ΦC T ) E ˆ x , (A.116) ˆ C opt = arg min C ˆ Q [ C ] = arg max C ln det C h Φ i 2 ˆ x C T , (A.117) C opt and ˆ C opt make (A.63) and (A.115) to hold true, which implies that they are the same optimal solution: C opt = ˆ C opt . Therefore, we can use the following objectiv e function ˆ Q [ C ] as a substitute for Q [ C ] and write the optimization problem as: minimize ˆ Q [ C ] = − 1 2 ln det C h Φ i 2 ˆ x C T , (A.118) subject to CC T = I K 0 . (A.119) The update rule (A.90) may also apply here and a modified algorithm similar to Algorithm 1 may be used for parameter learning. A . 5 S U P P L E M E N TA RY E X P E R I M E N T S A . 5 . 1 Q UA N T I TA T I V E M E T H O D S F O R C O M PA R I S O N T o quantify the ef ficienc y of learning representations by the abo ve algorithms, we calculate the co- efficient entropy (CFE) for estimating coding cost as follows (Lewicki & Olshausen, 1999; Lewicki & Sejnowski, 2000): ˇ y k = ζ ˇ w T k ˇ x , k = 1 , · · · , K 1 , (A.120) ζ = K 1 P K 1 k =1 k ˇ w k k , (A.121) 20 Published as a conference paper at ICLR 2017 where ˇ x is defined by Eq. (A.68), and ˇ w k is the corresponding optimal filter . T o estimate the probability density of coefficients q k ( ˇ y k ) ( k = 1 , · · · , K 1 ) from the M training samples, we apply the kernel density estimation for q k ( ˇ y k ) and use a normal kernel with an adapti ve optimal windo w width. Then we define the CFE h as h = 1 K 1 K 1 X k =1 H k ( ˇ Y k ) , (A.122) H k ( ˇ Y k ) = − ∆ P n q k ( n ∆) log 2 q k ( n ∆) , (A.123) where q k ( ˇ y k ) is quantized as discrete q k ( n ∆) and ∆ is the step size. Methods such as IICA and SRBM as well as our methods have feedforward structures in which information is transferred directly through a nonlinear function, e.g., the sigmoid function. W e can use the amount of transmitted information to measure the results learned by these methods. Consider a neural population with N neurons, which is a stochastic system with nonlinear transfer functions. W e chose a sigmoidal transfer function and Gaussian noise with standard deviation set to 1 as the system noise. In this case, from (1), (A.8) and (A.11), we see that the approximate MI I G is equiv alent to the case of the Poisson neuron model. It follo ws from (A.70)–(A.82) that I ( X ; R ) = I ˜ X ; R = H ( ˜ X ) − H ˜ X | R ' ˜ I G = H ( ˜ X ) − h 1 , (A.124) H ˜ X | R ' h 1 = − 1 2 ln det 1 2 π e N K − 1 0 C ˆ ΦC T + I K 0 ˆ x , (A.125) where we set N = 10 6 . A good representation should make the MI I ( X ; R ) as big as possible. Equiv alently , for the same inputs, a good representation should make the conditional entropy (CDE) H ˜ X | R (or h 1 ) as small as possible. (a) (b) (c) (d) (e) (f) Figure 4: Comparison of basis vectors obtained by our method and other methods. Panel ( a )–( e ) correspond to panel ( a )–( e ) in Figure 2, where the basis vectors are gi ven by (A.130). The basis vectors in panel ( f ) are learned by MBDL and gi v en by (A.127). 21 Published as a conference paper at ICLR 2017 A . 5 . 2 C O M PA R I S O N O F B A S I S V E C T O R S W e compared our algorithm with an up-to-date sparse coding algorithm, the mini-batch dictionary learning (MBDL) as gi v en in (Mairal et al., 2009; 2010) and inte grated in Python library , i.e. scikit- learn. The input data w as the same as the abov e, i.e. 10 5 nature image patches preprocessed by the ZCA whitening filters. W e denotes the optimal dictionary learned by MBDL as ˇ B ∈ R K × K 1 for which each column represents a basis vector . Now we ha v e x ≈ UΣ 1 / 2 U T ˇ By = ˜ By , (A.126) ˜ B = UΣ 1 / 2 U T ˇ B , (A.127) where y = ( y 1 , · · · , y K 1 ) T is the coefficient v ector . Similarly , we can obtain a dictionary from the filter matrix C . Suppose rank ( C ) = K 0 ≤ K 1 , then it follows from (A.64) that ˆ x = a CC T − 1 Cy . (A.128) By (A.66) and (A.128), we get x ≈ By = a BC T Σ − 1 / 2 0 U T 0 x , (A.129) B = a − 1 U 0 Σ 1 / 2 0 CC T − 1 C = [ b 1 , · · · , b K 1 ] , (A.130) where y = W T x = a C T Σ − 1 / 2 0 U T 0 x , the vectors b 1 , · · · , b K 1 can be re garded as the basis vectors and the strict equality holds when K 0 = K 1 = K . Recall that X = [ x 1 , · · · , x M ] = US ˜ V T (see Eq. A.49) and Y = [ y 1 , · · · , y M ] = W T X = a √ M − 1 C T ˜ V T 0 , then we get ˘ X = BY = √ M − 1 U 0 Σ 1 / 2 0 ˜ V T 0 ≈ X . Hence, Eq. (A.129) holds. The basis vectors shown in Figure 4(a) – 4(e) correspond to filters in Figure 2(a) – 2(e). And Fig- ure 4(f) illustrates the optimal dictionary ˜ B learned by MBDL, where we set the re gularization pa- rameter as λ = 1 . 2 / √ K , the batch size as 50 and the total number of iterations to perform as 20000 , which took about 3 hours for training. From Figure 4 we see that these basis vectors obtained by the abov e algorithms hav e local Gabor-like shapes e xcept for those by SRBM. If rank( ˇ B ) = K = K 1 , then the matrix ˇ B − T can be regarded as a filter matrix like matrix ˇ C (see Eq. A.69). Ho we ver , from the column v ector of matrix ˇ B − T we cannot find an y local Gabor -like filter that resembles the filters shown in Figure 2. Our algorithm has less computational cost and a much faster con v er gence rate than the sparse coding algorithm. Moreover , the sparse coding method inv olves a dynamic generativ e model that requires relaxation and is therefore unsuitable for f ast inference, whereas the feedforward framew ork of our model is easy for inference because it only requires e v aluating the nonlinear tuning functions. A . 5 . 3 L E A R N I N G O V E R C O M P L E T E B A S E S W e ha ve trained our model on the Olshausen’ s nature image patches with a highly overcomplete setup by optimizing the objectiv e (A.118) by Alg.2 and got Gabor -like filters. The results of 400 typical filters chosen from 1024 output filters are displayed in Figure 5(a) and corresponding base (see Eq. A.130) are shown in Figure 5(b). Here the parameters are K 1 = 1024 , t max = 100 , v 1 = 0 . 4 , τ = 0 . 8 , and = 0 . 98 (see A.52), from which we got rank ( B ) = K 0 = 82 . Compared to the ICA-like results in Figure 2(a) – 2(c), the average size of Gabor-lik e filters in Figure 5(a) is bigger , indicating that the small noise-like local structures in the images ha v e been filtered out. W e have also trained our model on 60,000 images of handwritten digits from MNIST dataset (LeCun et al., 1998) and the resultant 400 typical optimal filters and bases are sho wn in Figure 5(c) and Figure 5(d), respectively . All parameters were the same as Figure 5(a) and Figure 5(b): K 1 = 1024 , t max = 100 , v 1 = 0 . 4 , τ = 0 . 8 and = 0 . 98 , from which we got rank ( B ) = K 0 = 183 . From these figures we can see that the salient features of the input images are reflected in these filters and bases. W e could also get the similar ov ercomplete filters and bases by SRBM and MBDL. Howe v er , the results depended sensitiv ely on the choice of parameters and the training took a long time. 22 Published as a conference paper at ICLR 2017 (a) (b) (c) (d) Figure 5: Filters and bases obtained from Olshausen’ s image dataset and MNIST dataset by Al- gorithm 2. ( a ) and ( b ): 400 typical filters and the corresponding bases obtained from Olshausen’ s image dataset, where K 0 = 82 and K 1 = 1024 . ( c ) and ( d ): 400 typical filters and the corresponding bases obtained from the MNIST dataset, where K 0 = 183 and K 1 = 1024 . Figure 6 sho ws that CFE as a function of training time for Alg.2, where Figure 6(a) corresponds to Figure 5(a)-5(b) for learning nature image patches and Figure 6(b) corresponds to Figure 5(c)-5(d) for learning MNIST dataset. W e set parameters t max = 100 and τ = 0 . 8 for all experiments and varied parameter v 1 for each experiment, with v 1 = 0 . 2 , 0 . 4 , 0 . 6 or 0 . 8 . These results indicate a fast con v ergence rate for training on dif ferent datasets. Generally , the con vergence is insensitiv e to the change of parameter v 1 . W e hav e also performed additional tests on other image datasets and got similar results, confirming the speed and robustness of our learning method. Compared with other methods, e.g., IICA, FICA, MBDL, SRBM or sparse autoencoders etc., our method appeared to be more ef ficient and rob ust for unsupervised learning of representations. W e also found that complete and o vero v ercomplete filters and bases learned by our methods had local Gabor -like shapes while the results by SRBM or MBDL did not hav e this property . 23 Published as a conference paper at ICLR 2017 10 0 10 1 10 2 time (seconds) 1.75 1.8 1.85 1.9 1.95 coefficient entropy (bits) v 1 = 0.2 v 1 = 0.4 v 1 = 0.6 v 1 = 0.8 (a) 10 0 10 1 10 2 time (seconds) 1.6 1.7 1.8 1.9 2 2.1 coefficient entropy (bits) v 1 = 0.2 v 1 = 0.4 v 1 = 0.6 v 1 = 0.8 (b) Figure 6: CFE as a function of training time for Alg.2, with v 1 = 0 . 2 , 0 . 4 , 0 . 6 or 0 . 8 . In all experiments parameters were set to t max = 100 , t 0 = 50 and τ = 0 . 8 . ( a ): corresponding to Figure 5(a) or Figure 5(b). ( b ): corresponding to Figure 5(c) or Figure 5(d). A . 5 . 4 I M AG E D E N O I S I N G Similar to the sparse coding method applied to image denoising (Elad & Aharon, 2006), our method (see Eq. A.130) can also be applied to image denoising, as sho wn by an example in Figure 7. The filters or bases were learned by using 7 × 7 image patches sampled from the left half of the image, and subsequently used to reconstruct the right half of the image which was distorted by Gaussian noise. A common practice for ev aluating the results of image denoising is by looking at the difference between the reconstruction and the original image. If the reconstruction is perfect the dif ference should look like Gaussian noise. In Figure 7(c) and 7(d) a dictionary ( 100 bases) was learned by MBDL and orthogonal matching pursuit was used to estimate the sparse solution. 1 For our method (shown in Figure 7(b)), we first get the optimal filters parameter W , a lo w rank matrix ( K 0 < K ), then from the distorted image patches x m ( m = 1 , · · · , M ) we get filter outputs y m = W T x m and the reconstruction ˘ x m = By m (parameters: = 0 . 975 and K 0 = K 1 = 14 ). As can be seen from Figure 7, our method worked better than dictionary learning, although we only used 14 bases compared with 100 bases used by dictionary learning. Our method is also more ef ficient. W e can get better optimal bases B by a generative model using our infomax approach (details not sho wn). 1 Python source code is av ailable at http://scikit-learn.or g/stable/ downloads/plot image denoising.py 24 Published as a conference paper at ICLR 2017 (a) (b) (c) (d) Figure 7: Image denoising. ( a ): the right half of the original image is distorted by Gaussian noise and the norm of the difference between the distorted image and the original image is 23 . 48 . ( b ): image denoising by our method (Algorithm 1), with 14 bases used. ( c ) and ( d ): image denoising using dictionary learning, with 100 bases used. 25

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment